How Do Language Models Understand Tables? A Mechanistic Analysis of Cell Location

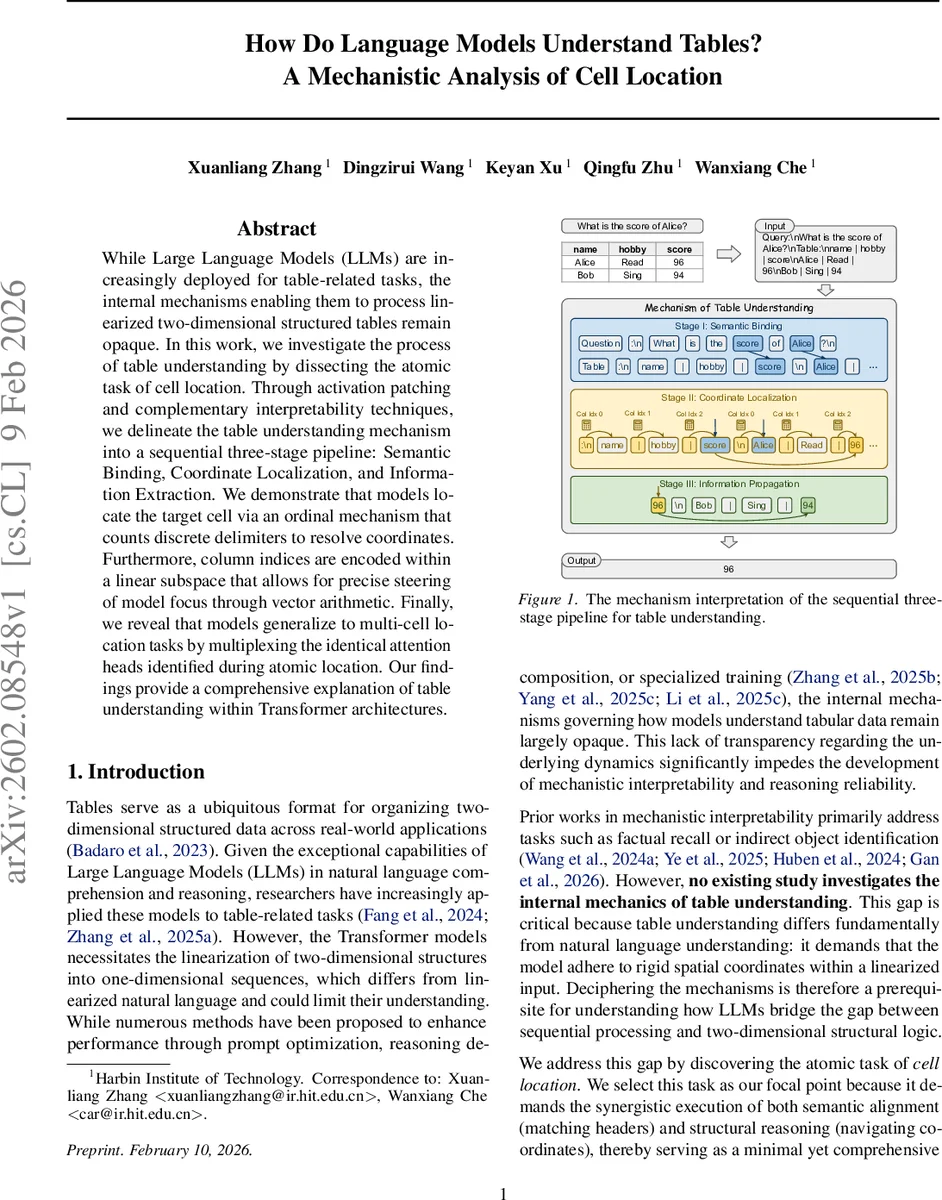

While Large Language Models (LLMs) are increasingly deployed for table-related tasks, the internal mechanisms enabling them to process linearized two-dimensional structured tables remain opaque. In this work, we investigate the process of table understanding by dissecting the atomic task of cell location. Through activation patching and complementary interpretability techniques, we delineate the table understanding mechanism into a sequential three-stage pipeline: Semantic Binding, Coordinate Localization, and Information Extraction. We demonstrate that models locate the target cell via an ordinal mechanism that counts discrete delimiters to resolve coordinates. Furthermore, column indices are encoded within a linear subspace that allows for precise steering of model focus through vector arithmetic. Finally, we reveal that models generalize to multi-cell location tasks by multiplexing the identical attention heads identified during atomic location. Our findings provide a comprehensive explanation of table understanding within Transformer architectures.

💡 Research Summary

The paper investigates how large language models (LLMs) process tabular data that has been linearized into a one‑dimensional token sequence. Rather than focusing on downstream performance, the authors isolate the atomic task of cell location: given a natural‑language query that specifies a row header and a column header, the model must output the value found at the intersection of those headers. To ensure that the model cannot rely on memorized facts, cell values are sampled randomly from an entity pool, making the task purely contextual.

A diverse set of open‑weight decoder‑only Transformers is examined, including Qwen‑3‑4B (the primary model, achieving ~90 % accuracy), Qwen‑2.5‑32B, and Llama‑3.2‑3B/Llama‑3.1‑8B. The authors apply a suite of mechanistic interpretability tools: activation patching (both layer‑wise and head‑wise), linear probing of the residual stream, and targeted attention ablation. For activation patching they construct two counterfactual inputs: “column patching” replaces the queried column header with a different one, shifting the target cell; “row patching” does the same for the row header. By swapping activations from a clean run with those from a corrupted run and measuring the change in logit difference (LD), they compute an Effect Score that quantifies causal importance (scores near 1 indicate a pivotal component).

The causal analysis reveals a three‑stage pipeline:

-

Semantic Binding (early layers 1‑16).

Cosine similarity between hidden states of query tokens and all table headers quickly rises, and the top‑20 attention heads that attend from query tokens to the correct headers are essential: zero‑ablating them collapses performance from 94 % to ~5 %. This stage aligns the natural‑language constraints with the appropriate header tokens. -

Coordinate Localization (middle layers 17‑23).

After semantic alignment, the model shifts focus from header tokens to the region of the target cell. Linear probes trained on the residual stream predict row and column indices with high R² (>0.85), indicating that explicit coordinate information is linearly encoded. The authors further show that column indices reside in a linear subspace: injecting RoPE‑consistent shift vectors into the residual stream moves the model’s attention to a different column in a predictable, additive manner. This demonstrates an ordinal counting mechanism that treats pipe delimiters as discrete steps to compute coordinates. -

Information Extraction (late layers 24+).

At this point the target value is already resolved; intervening on any token has negligible effect on the final logit, confirming that the answer has been propagated to the decoder’s output stream.

The study also extends to multi‑cell queries (e.g., “What are Alice’s and Bob’s scores?”). The same set of semantic‑binding and coordinate‑localization heads are reused in parallel, showing that the model multiplexes the atomic cell‑location operation rather than learning a new, more complex mechanism.

Overall, the work provides the first detailed mechanistic account of how transformer‑based LLMs internally construct and manipulate a coordinate system for tables. It shows that despite the necessity of linearizing a 2‑D structure, the model builds an explicit, manipulable representation of row and column indices, enabling precise steering via vector arithmetic. These insights have practical implications for designing more interpretable table‑based prompts, improving reliability of table QA systems, and guiding future architecture modifications that could make structural reasoning more transparent and controllable.

Comments & Academic Discussion

Loading comments...

Leave a Comment