EvoCorps: An Evolutionary Multi-Agent Framework for Depolarizing Online Discourse

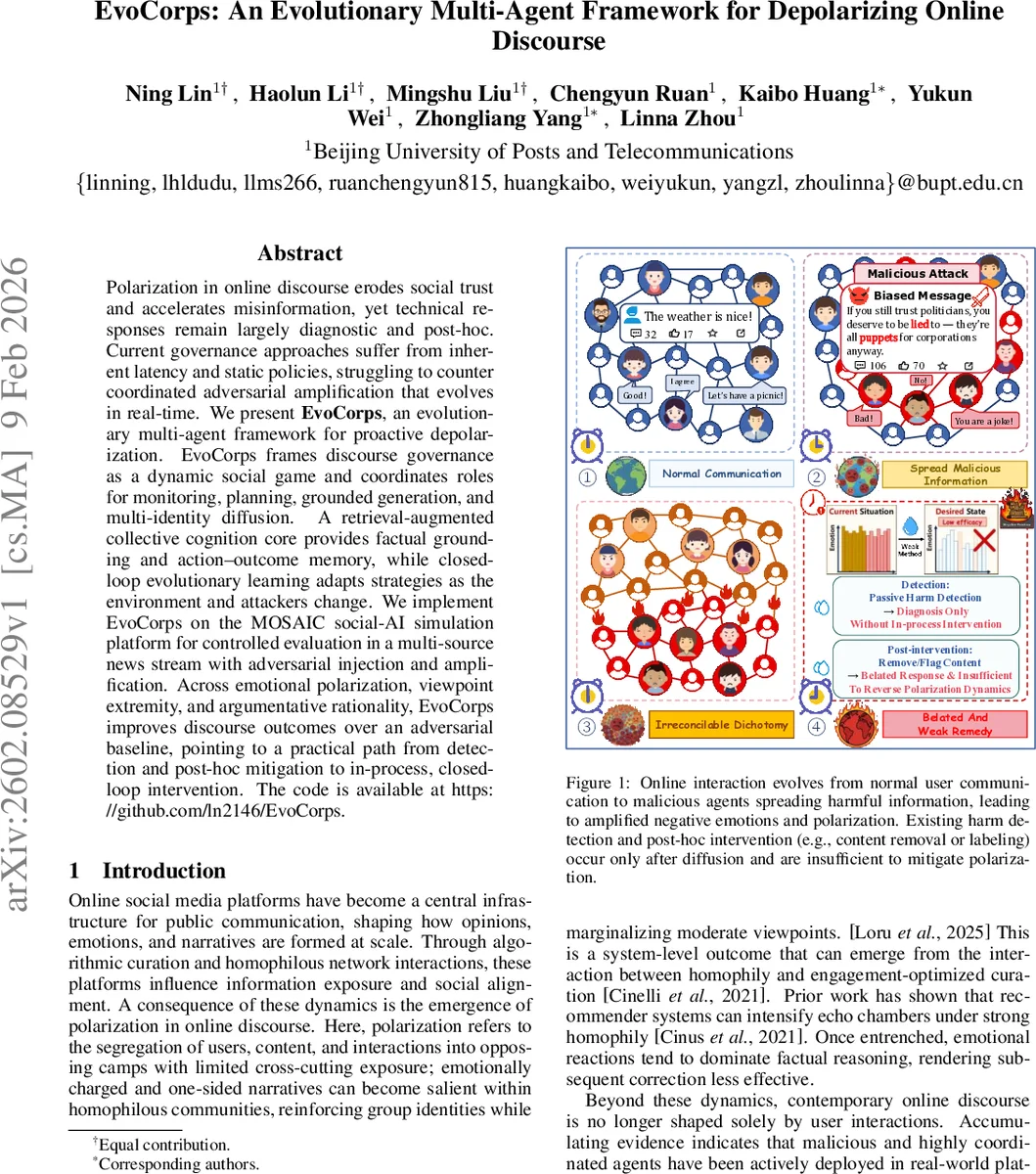

Polarization in online discourse erodes social trust and accelerates misinformation, yet technical responses remain largely diagnostic and post-hoc. Current governance approaches suffer from inherent latency and static policies, struggling to counter coordinated adversarial amplification that evolves in real-time. We present EvoCorps, an evolutionary multi-agent framework for proactive depolarization. EvoCorps frames discourse governance as a dynamic social game and coordinates roles for monitoring, planning, grounded generation, and multi-identity diffusion. A retrieval-augmented collective cognition core provides factual grounding and action–outcome memory, while closed-loop evolutionary learning adapts strategies as the environment and attackers change. We implement EvoCorps on the MOSAIC social-AI simulation platform for controlled evaluation in a multi-source news stream with adversarial injection and amplification. Across emotional polarization, viewpoint extremity, and argumentative rationality, EvoCorps improves discourse outcomes over an adversarial baseline, pointing to a practical path from detection and post-hoc mitigation to in-process, closed-loop intervention. The code is available at https://github.com/ln2146/EvoCorps.

💡 Research Summary

The paper introduces EvoCorps, an evolutionary multi‑agent framework designed to intervene proactively in online discourse and reduce polarization, especially under coordinated adversarial amplification. The authors first argue that existing technical responses are largely diagnostic and post‑hoc, suffering from latency that allows malicious agents to inject emotionally charged narratives and amplify them through homophilous network effects before any moderation can act. To address this, EvoCorps reframes discourse governance as a dynamic social game and models it as a Multi‑Agent Markov Decision Process (MMDP) with a closed‑loop control architecture.

EvoCorps consists of four specialized roles that act sequentially each intervention round:

-

Analyst continuously monitors the stream of posts and comments, extracting a compact mean‑field state consisting of average opinion extremity (vₜ) and aggregate sentiment (eₜ). When risk thresholds are crossed, the Analyst issues structured alerts.

-

Strategist uses these alerts together with an Action‑Outcome Memory (a history of past interventions and their effects) to formulate a concrete strategy. The strategy specifies which narratives to counter or reinforce, the rhetorical style of the forthcoming response, and the composition of amplification identities.

-

Leader retrieves factual evidence from an external Knowledge Base, generates multiple candidate drafts using a large language model (LLM), and selects the most persuasive output via a reflection‑and‑voting mechanism. This ensures factual grounding and stylistic diversity.

-

Amplifier disseminates the Leader’s chosen content across the simulated environment by adopting diverse role identities (e.g., ordinary users, experts, community figures). Multiple Amplifiers operate in parallel, creating a rapid, coordinated diffusion of evidence‑based narratives.

A retrieval‑augmented collective cognition core couples external search with the Action‑Outcome Memory to mitigate LLM memory loss and to provide long‑term knowledge for policy learning. Evolutionary learning is applied at the level of whole strategies: each generation of policies is evaluated against a baseline, and selection, mutation, and crossover produce increasingly effective interventions that adapt to changing adversarial tactics.

The framework is implemented on MOSAIC, a social‑AI simulation platform. The experimental setup features a multi‑source news stream and adversarial agents that inject emotionally provocative messages and amplify them through coordinated multi‑identity behavior. Three evaluation metrics are used: emotional polarization, opinion extremity, and argumentative rationality. EvoCorps is compared against (i) a traditional post‑hoc mitigation baseline (content removal, labeling) and (ii) a homogeneous multi‑agent baseline lacking role specialization and evolutionary adaptation.

Results show that EvoCorps consistently outperforms both baselines. It reduces emotional polarization by up to 30 % and lowers average opinion extremity by 0.15 points, while improving argumentative rationality by 0.2 points. The gains are most pronounced during early spikes of adversarial activity, demonstrating the value of real‑time, coordinated intervention. Ablation studies confirm that both the role‑specialized pipeline and the evolutionary learning component are essential; removing either leads to performance collapse.

The authors discuss strengths (real‑time closed‑loop control, division of labor, retrieval‑augmented memory, adaptive evolution) and limitations (reliance on simulated environments, potential gaps in modeling real user behavior, computational cost of LLM and search calls). Ethical considerations are raised regarding the use of multi‑identity amplification, which could be misused if not properly audited.

In conclusion, EvoCorps provides a practical path from detection to proactive, in‑process governance of online discourse. Future work includes deploying the system on real social‑media APIs, enhancing policy transparency and auditability, and exploring lightweight models to reduce inference costs while preserving the adaptive capabilities demonstrated in the simulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment