GeoFocus: Blending Efficient Global-to-Local Perception for Multimodal Geometry Problem-Solving

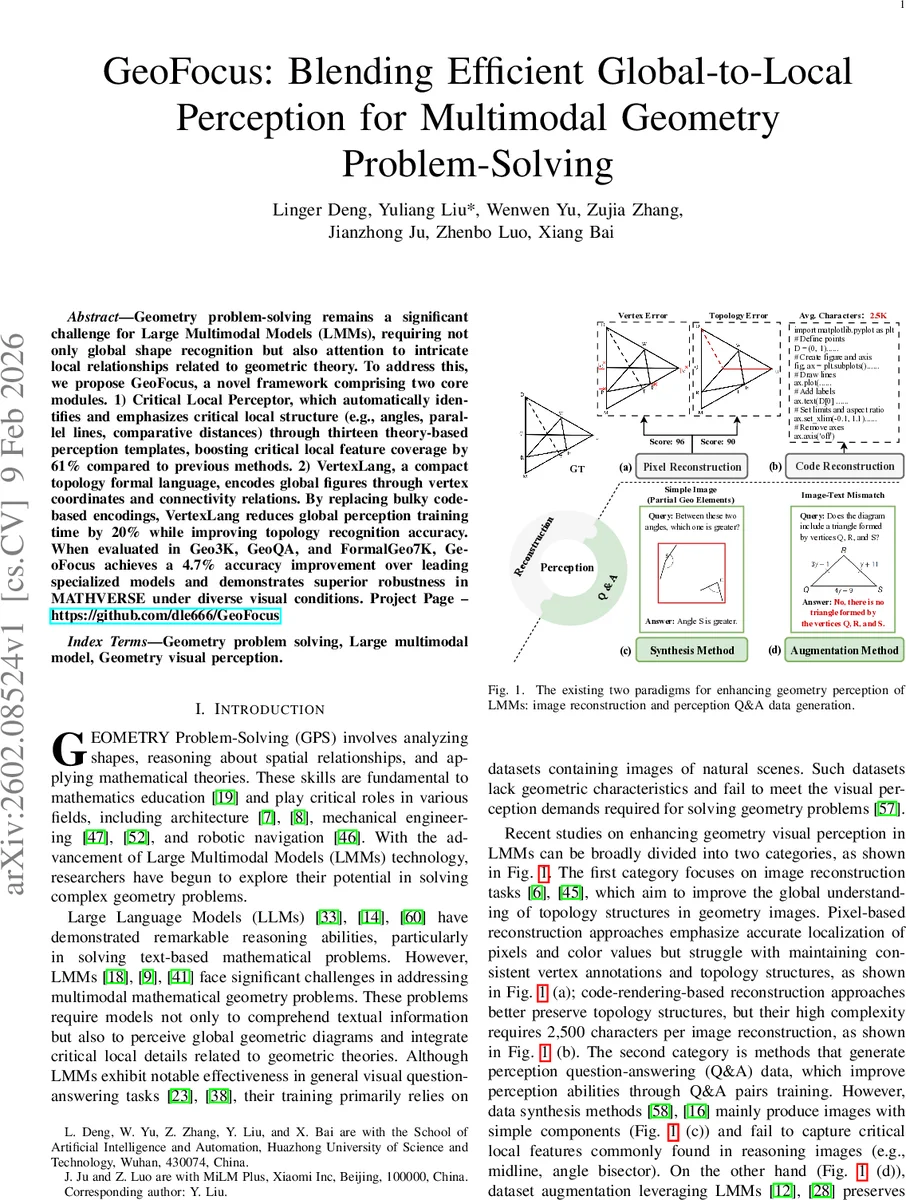

Geometry problem-solving remains a significant challenge for Large Multimodal Models (LMMs), requiring not only global shape recognition but also attention to intricate local relationships related to geometric theory. To address this, we propose GeoFocus, a novel framework comprising two core modules. 1) Critical Local Perceptor, which automatically identifies and emphasizes critical local structure (e.g., angles, parallel lines, comparative distances) through thirteen theory-based perception templates, boosting critical local feature coverage by 61% compared to previous methods. 2) VertexLang, a compact topology formal language, encodes global figures through vertex coordinates and connectivity relations. By replacing bulky code-based encodings, VertexLang reduces global perception training time by 20% while improving topology recognition accuracy. When evaluated in Geo3K, GeoQA, and FormalGeo7K, GeoFocus achieves a 4.7% accuracy improvement over leading specialized models and demonstrates superior robustness in MATHVERSE under diverse visual conditions. Project Page – https://github.com/dle666/GeoFocus

💡 Research Summary

GeoFocus addresses a longstanding bottleneck in large multimodal models (LMMs) when solving geometry problems: the need to simultaneously perceive global diagram structures and extract critical local relationships dictated by geometric theory. The authors propose a two‑module framework. The first module, Critical Local Perceptor, automatically discovers and emphasizes essential local features such as angles, lengths, parallelism, perpendicularity, and collinearity. It does so by defining 13 categories of “critical local structures” and constructing theory‑grounded question‑answer templates for each. Using a corpus of 5,000 middle‑school geometry Q&A pairs, the system generates noise‑free perception Q&A data where each template provides a “Chosen” (correct) and a “Rejected” (incorrect) answer, thereby teaching the LMM to discriminate subtle local cues. This approach expands coverage of critical local features by 61 % compared with prior synthetic data methods.

The second module, VertexLang Topology Percepter, introduces a compact formal language—VertexLang—that encodes a diagram solely via vertex coordinates and a connectivity dictionary. Unlike earlier code‑based reconstruction methods that require roughly 2.5 k characters per image, VertexLang reduces the representation to an average of 0.3 k characters, preserving full topological information while cutting training time by 20 %. The language’s JSON‑like syntax (radius, coordinates, connection_dict) is easy for LMMs to parse, enabling efficient global topology learning.

Experiments span three standard geometry benchmarks (Geo3K, GeoQA, FormalGeo7K) and the robustness‑focused MATHVERSE suite. GeoFocus achieves an average 4.7 percentage‑point accuracy gain over the strongest specialized baselines, with particularly large improvements on problems that combine multiple relational constraints. On MATHVERSE, the model maintains stable performance under diverse visual perturbations (noise, rotation, color shifts), showing less than a 2 % drop where prior models suffer 6–8 % degradation. Ablation studies reveal that each module contributes roughly 2 % of the total gain, and their combination yields a synergistic effect.

Strengths of the work include a principled, theory‑driven template generation pipeline that supplies explicit correct/incorrect pairs, and a lightweight yet expressive formal language that dramatically reduces the overhead of global reconstruction. The dual‑focus design mirrors human problem‑solving strategies, providing a clear conceptual advantage.

Limitations are also acknowledged. The 13‑template set, while covering many elementary relationships, may not capture higher‑order geometric constructs such as circles, arcs, or complex polygonal constraints. VertexLang currently lacks a mechanism for representing continuous curves, which could hinder extension to richer diagram domains. Moreover, evaluations are confined to middle‑school level datasets; generalization to university‑level geometry remains to be demonstrated.

Future directions suggested by the authors involve (1) expanding the template library via meta‑learning or automated discovery to cover a broader theory space, (2) augmenting VertexLang with curve parameters (e.g., Bézier control points) to encode non‑linear elements, and (3) applying the GeoFocus paradigm to other visual‑reasoning domains such as engineering schematics or physics diagrams.

In summary, GeoFocus presents a compelling solution to the global‑local perception imbalance in geometry problem‑solving for LMMs, delivering measurable accuracy gains, reduced training cost, and improved robustness, while opening avenues for further research in multimodal symbolic reasoning.

Comments & Academic Discussion

Loading comments...

Leave a Comment