A Sketch+Text Composed Image Retrieval Dataset for Thangka

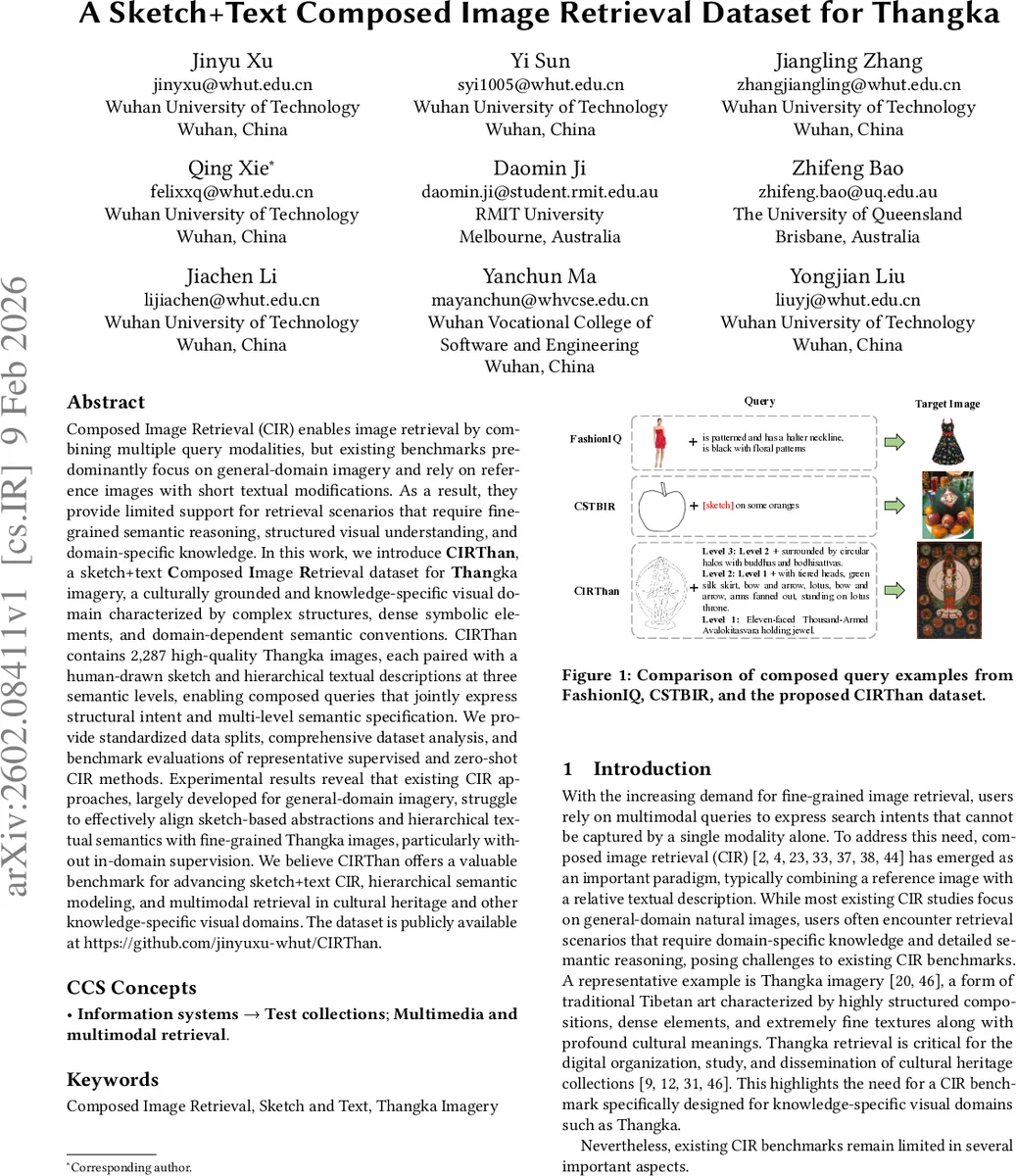

Composed Image Retrieval (CIR) enables image retrieval by combining multiple query modalities, but existing benchmarks predominantly focus on general-domain imagery and rely on reference images with short textual modifications. As a result, they provide limited support for retrieval scenarios that require fine-grained semantic reasoning, structured visual understanding, and domain-specific knowledge. In this work, we introduce CIRThan, a sketch+text Composed Image Retrieval dataset for Thangka imagery, a culturally grounded and knowledge-specific visual domain characterized by complex structures, dense symbolic elements, and domain-dependent semantic conventions. CIRThan contains 2,287 high-quality Thangka images, each paired with a human-drawn sketch and hierarchical textual descriptions at three semantic levels, enabling composed queries that jointly express structural intent and multi-level semantic specification. We provide standardized data splits, comprehensive dataset analysis, and benchmark evaluations of representative supervised and zero-shot CIR methods. Experimental results reveal that existing CIR approaches, largely developed for general-domain imagery, struggle to effectively align sketch-based abstractions and hierarchical textual semantics with fine-grained Thangka images, particularly without in-domain supervision. We believe CIRThan offers a valuable benchmark for advancing sketch+text CIR, hierarchical semantic modeling, and multimodal retrieval in cultural heritage and other knowledge-specific visual domains. The dataset is publicly available at https://github.com/jinyuxu-whut/CIRThan.

💡 Research Summary

The paper addresses a critical gap in the field of Composed Image Retrieval (CIR) by introducing a new benchmark specifically designed for a knowledge‑rich visual domain: Tibetan Thangka paintings. Existing CIR datasets such as FashionIQ, CIRR, and CIRCO focus on general‑domain photographs and rely on a reference image plus a short textual modification. This paradigm assumes that users can easily locate a semantically close reference image and that a brief textual cue suffices to describe the desired change. Both assumptions break down when dealing with culturally complex, highly structured artworks like Thangka, where visual semantics are intertwined with symbolic meaning, intricate composition, and domain‑specific terminology.

CIRThan (Sketch+Text Composed Image Retrieval for Thangka) is built to overcome these limitations. It contains 2,287 high‑resolution Thangka photographs collected from monasteries, museums, and individual artists, ensuring cultural authenticity and visual diversity. For each photograph, a human annotator produces a free‑form sketch that captures salient contours, structural layouts, and distinctive shape cues while abstracting away unnecessary texture details. The sketch serves as a flexible visual reference that mirrors realistic user behavior in fine‑grained retrieval tasks.

In addition to the sketch, annotators write a detailed natural‑language description (Level 3) averaging 34 words. This description enumerates characters, symbolic objects, colors, ornaments, and relational information that are difficult to convey through sketches alone. To model varying user expertise, the authors employ a large language model (Qwen‑3) under strict constraints to generate two simplified versions of the text: Level 2 (≈23 words) removes background and decorative details while preserving core relations, and Level 1 (≈13 words) provides a concise summary focusing on the primary subject and its most distinctive attributes. All three textual levels retain the same core semantics; only peripheral details are pruned. Expert Thangka scholars then verify the consistency across sketch, text, and image, ensuring terminological standardization and cross‑modal alignment.

Statistical analysis shows an average image resolution of 1700 × 1200 px, balanced distributions of sketch complexity, and a roughly even subject distribution across Buddhist deities, bodhisattvas, mythic creatures, and narrative scenes. The dataset is split into 1,868 training and 419 test images, and the authors release the full package publicly.

Benchmarking includes six representative CIR approaches: three supervised methods (CLIP‑Fusion, TIRG, SCAN) trained on the CIRThan training set, and three zero‑shot or large‑model baselines (CLIP‑ZeroShot, BLIP‑2, LLaVA) that rely on pre‑trained vision‑language models without any domain‑specific fine‑tuning. Evaluation uses Recall@1, @5, and @10. Supervised models achieve a best R@1 of 52.03 %, demonstrating that with sufficient in‑domain supervision the task is tractable. However, zero‑shot methods lag dramatically, all staying below 8 % R@1, highlighting the difficulty of aligning sketch‑text queries with domain‑specific semantics when the model has never seen Thangka data.

A key finding is the systematic performance gain when moving from Level 1 to Level 3 textual descriptions across all methods, confirming that richer hierarchical text provides valuable semantic cues. Moreover, combining sketch with text consistently outperforms text‑only queries by 7–10 percentage points in R@1, indicating that sketches effectively complement textual information by supplying structural cues that text alone cannot express.

The authors draw several implications. First, in knowledge‑specific visual domains, sketches are a more realistic and expressive reference modality than natural images, especially when users lack a suitable reference photograph. Second, hierarchical textual specifications accommodate users with varying levels of domain knowledge while still enabling models to exploit fine‑grained semantics. Third, current large‑scale multimodal models lack the cultural and symbolic knowledge required for fine‑grained Thangka retrieval, suggesting a need for domain‑adapted pre‑training or targeted fine‑tuning.

Future research directions proposed include: (1) designing cross‑modal attention mechanisms that more tightly fuse sketch and hierarchical text representations; (2) constructing domain‑specific vocabularies and relational graphs for Thangka to enrich model understanding; (3) developing prompt engineering strategies that enable zero‑shot large language models to reason about cultural symbols and compositional structure; and (4) extending the sketch‑text CIR paradigm to other heritage domains such as Chinese ink paintings, Islamic miniatures, or ancient pottery.

In summary, CIRThan provides a rigorously curated, multimodal benchmark that challenges existing CIR methods to handle fine‑grained structural reasoning, hierarchical semantic modeling, and domain‑specific knowledge. It opens a pathway for advancing sketch‑text retrieval techniques and for applying them to the preservation, study, and dissemination of cultural heritage assets.

Comments & Academic Discussion

Loading comments...

Leave a Comment