UrbanGraphEmbeddings: Learning and Evaluating Spatially Grounded Multimodal Embeddings for Urban Science

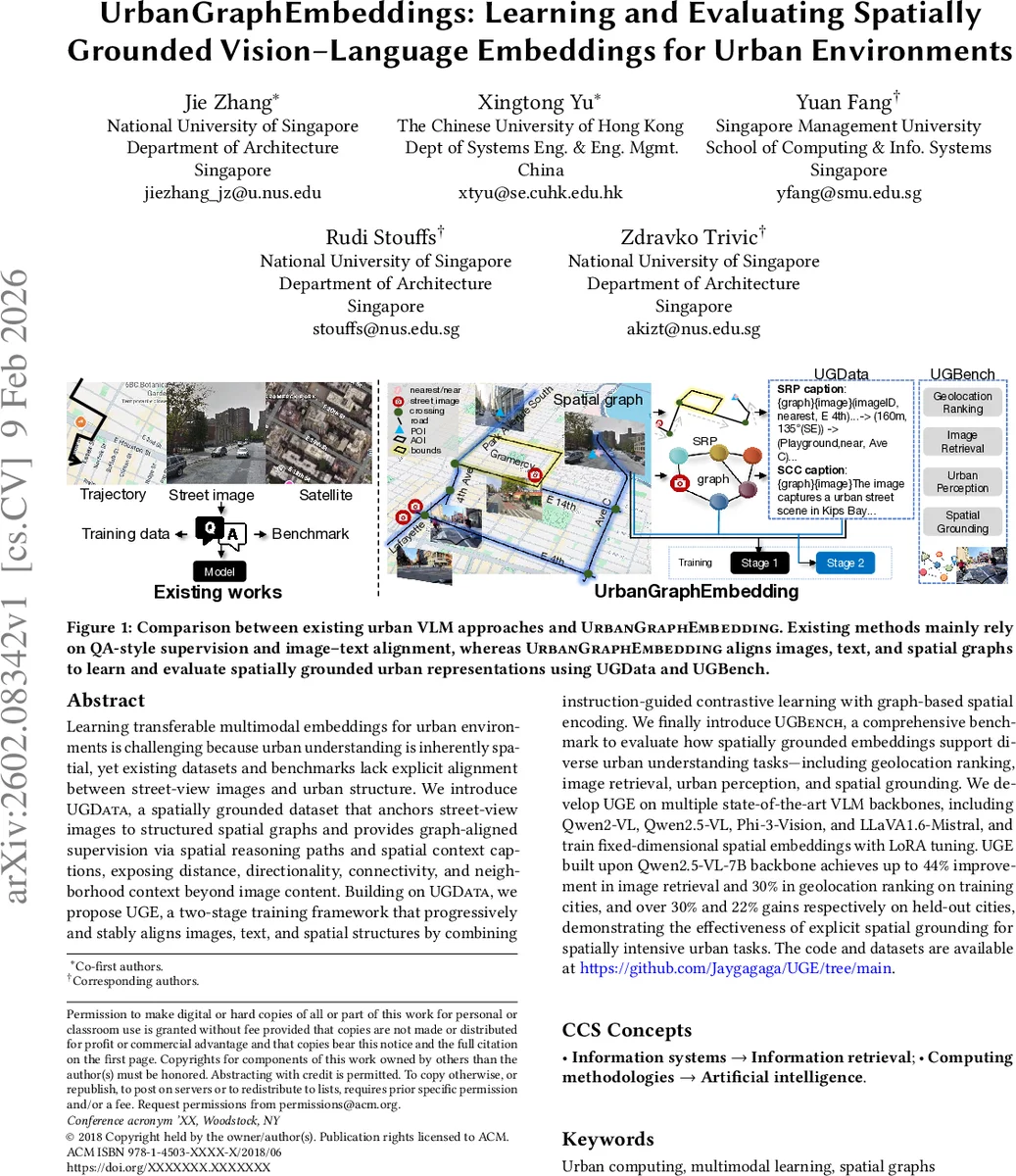

Learning transferable multimodal embeddings for urban environments is challenging because urban understanding is inherently spatial, yet existing datasets and benchmarks lack explicit alignment between street-view images and urban structure. We introduce UGData, a spatially grounded dataset that anchors street-view images to structured spatial graphs and provides graph-aligned supervision via spatial reasoning paths and spatial context captions, exposing distance, directionality, connectivity, and neighborhood context beyond image content. Building on UGData, we propose UGE, a two-stage training strategy that progressively and stably aligns images, text, and spatial structures by combining instruction-guided contrastive learning with graph-based spatial encoding. We finally introduce UGBench, a comprehensive benchmark to evaluate how spatially grounded embeddings support diverse urban understanding tasks – including geolocation ranking, image retrieval, urban perception, and spatial grounding. We develop UGE on multiple state-of-the-art VLM backbones, including Qwen2-VL, Qwen2.5-VL, Phi-3-Vision, and LLaVA1.6-Mistral, and train fixed-dimensional spatial embeddings with LoRA tuning. UGE built upon Qwen2.5-VL-7B backbone achieves up to 44% improvement in image retrieval and 30% in geolocation ranking on training cities, and over 30% and 22% gains respectively on held-out cities, demonstrating the effectiveness of explicit spatial grounding for spatially intensive urban tasks.

💡 Research Summary

The paper tackles the problem of learning spatially grounded multimodal embeddings for urban environments, where visual, textual, and geographic information must be jointly represented. It introduces UGData, a large‑scale dataset that explicitly anchors street‑view images to city‑scale spatial graphs built from OpenStreetMap and municipal data. For each image, two types of supervision are generated automatically: Spatial Reasoning Paths (SRPs), which are ordered sequences of relational triples enriched with distance and bearing information, and Spatial Context Captions (SCCs), natural‑language descriptions of the surrounding subgraph. This pipeline provides low‑cost, scalable alignment between images, text, and structured spatial knowledge.

To learn from UGData, the authors propose UrbanGraphEmbedding (UGE), a two‑stage training framework. Stage 1 uses instruction‑guided contrastive learning (InfoNCE) to align images, text, and SRPs, thereby injecting coarse spatial awareness while preserving the original vision‑language alignment of the backbone. Stage 2 adds a graph encoder that processes node attributes, edge types, and positional encodings of the subgraph; the graph representation is fused with the visual‑language model via LoRA adapters, allowing stable fine‑tuning without updating the full backbone. This progressive approach mitigates instability that would arise from directly adding a third modality.

UGBench, a unified benchmark, evaluates the resulting embeddings on four urban‑science tasks: geolocation ranking, image retrieval, urban perception (e.g., sentiment of a location), and spatial grounding (path‑finding queries). All tasks are cast as embedding‑based ranking problems, supporting zero‑shot evaluation with either image‑only or graph‑augmented inputs.

Experiments on multiple state‑of‑the‑art VLM backbones (Qwen2‑VL, Qwen2.5‑VL, Phi‑3‑Vision, LLaVA‑Mistral) show that UGE built on Qwen2.5‑VL‑7B improves image retrieval by up to 44 % and geolocation ranking by up to 30 % on cities seen during training, and still yields 30 % and 22 % gains respectively on held‑out cities. These results demonstrate that explicit spatial grounding substantially benefits tasks that require understanding of city topology beyond the camera’s field of view.

The paper’s contributions are significant: (1) a novel, automatically generated dataset that aligns visual data with structured urban graphs; (2) a stable two‑stage training recipe that progressively injects spatial knowledge; (3) a comprehensive benchmark that measures spatial representation quality across diverse tasks. Limitations include reliance on the completeness of OSM data, potential expressiveness constraints of LoRA‑only adaptation, and evaluation confined to ranking‑style zero‑shot settings. Future work could explore dynamic graphs capturing temporal changes, integration of 3D point‑cloud data, richer graph encoder architectures, and downstream applications such as navigation planning or disaster response.

Comments & Academic Discussion

Loading comments...

Leave a Comment