Who Deserves the Reward? SHARP: Shapley Credit-based Optimization for Multi-Agent System

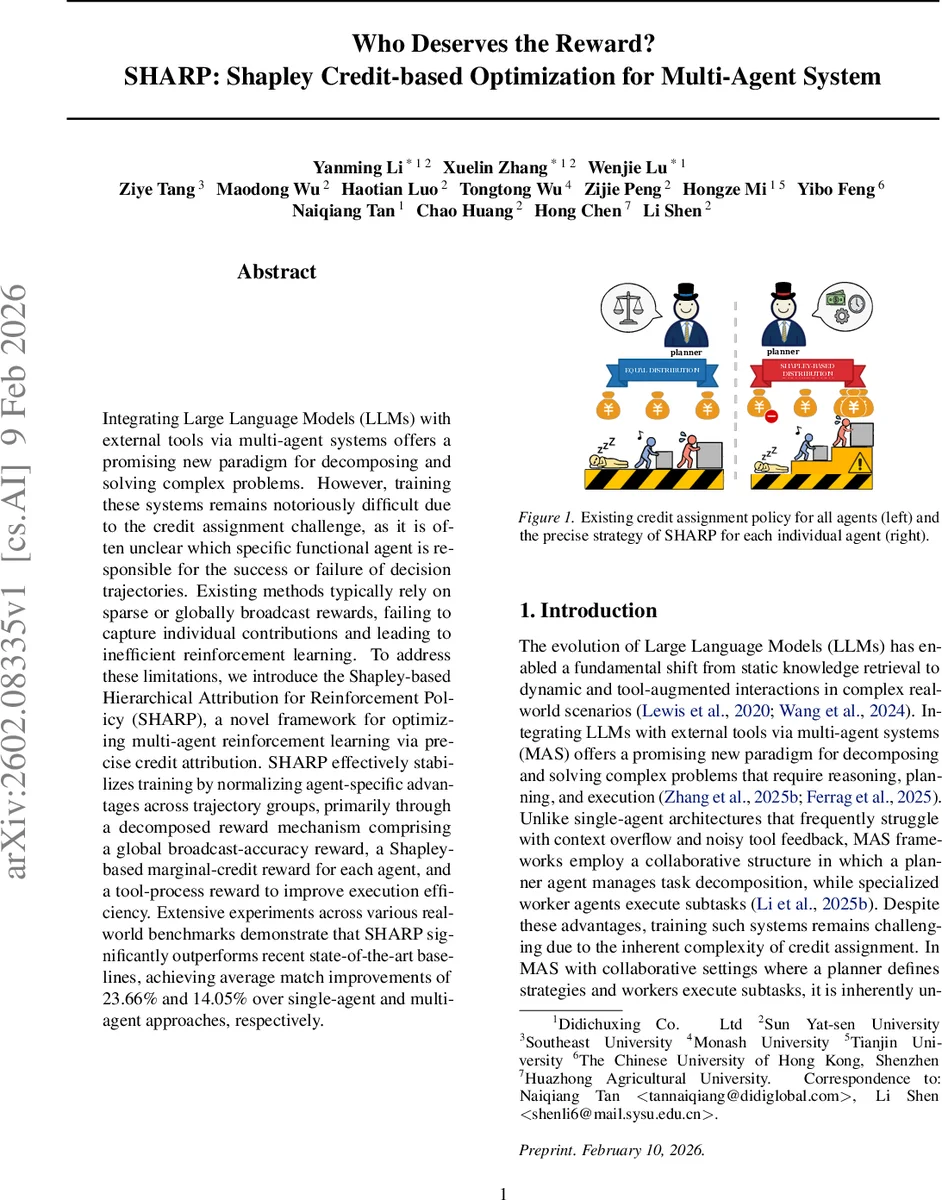

Integrating Large Language Models (LLMs) with external tools via multi-agent systems offers a promising new paradigm for decomposing and solving complex problems. However, training these systems remains notoriously difficult due to the credit assignment challenge, as it is often unclear which specific functional agent is responsible for the success or failure of decision trajectories. Existing methods typically rely on sparse or globally broadcast rewards, failing to capture individual contributions and leading to inefficient reinforcement learning. To address these limitations, we introduce the Shapley-based Hierarchical Attribution for Reinforcement Policy (SHARP), a novel framework for optimizing multi-agent reinforcement learning via precise credit attribution. SHARP effectively stabilizes training by normalizing agent-specific advantages across trajectory groups, primarily through a decomposed reward mechanism comprising a global broadcast-accuracy reward, a Shapley-based marginal-credit reward for each agent, and a tool-process reward to improve execution efficiency. Extensive experiments across various real-world benchmarks demonstrate that SHARP significantly outperforms recent state-of-the-art baselines, achieving average match improvements of 23.66% and 14.05% over single-agent and multi-agent approaches, respectively.

💡 Research Summary

The paper addresses a core challenge in large‑language‑model (LLM)‑driven multi‑agent systems (MAS) that integrate external tools: the credit‑assignment problem. While recent work has introduced planner‑worker hierarchies to decompose complex queries, training remains difficult because it is unclear which agent (planner or specific worker) is responsible for a successful or failed trajectory. Existing approaches typically rely on sparse, globally broadcast rewards, which obscure individual contributions and lead to inefficient policy updates.

To solve this, the authors propose SHARP (Shapley‑based Hierarchical Attribution for Reinforcement Policy), a reinforcement‑learning framework that provides fine‑grained, mathematically grounded credit attribution. SHARP decomposes the reward signal into three components: (1) a global broadcast‑accuracy reward that reflects whether the final answer matches the ground truth; (2) a marginal‑credit reward derived from Shapley values, estimating each agent’s average contribution by measuring performance changes when the agent is ablated (counterfactual masking); and (3) a tool‑process reward that evaluates the correctness and executability of each tool call. The three terms are combined with tunable weights (α, β, γ) to balance overall task success, credit faithfulness, and execution quality.
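The three-part reward described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `RewardWeights`, `marginal_credit`, and `combined_reward`, the default weight values, and the toy scoring function are all hypothetical. The leave-one-out difference used here is a simplified stand-in for a Shapley estimate; the summary does not specify the exact estimator.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, Iterable


@dataclass
class RewardWeights:
    # Hypothetical defaults; the summary only says the weights are tunable.
    alpha: float = 1.0   # weight on the global accuracy reward
    beta: float = 0.5    # weight on the marginal-credit reward
    gamma: float = 0.1   # weight on the tool-process reward


def marginal_credit(
    agents: Iterable[str],
    score_fn: Callable[[FrozenSet[str]], float],
) -> Dict[str, float]:
    """Leave-one-out credit per agent.

    Assumption: score_fn(coalition) returns the task score obtained when
    only the agents in `coalition` are active (all others are masked).
    """
    full = frozenset(agents)
    full_score = score_fn(full)
    # Credit = score with everyone minus score with this agent masked out.
    return {a: full_score - score_fn(full - {a}) for a in full}


def combined_reward(
    accuracy: float, credit: float, tool: float, w: RewardWeights
) -> float:
    """Weighted sum of the three reward terms for one agent."""
    return w.alpha * accuracy + w.beta * credit + w.gamma * tool


if __name__ == "__main__":
    # Toy additive scores, invented purely for illustration.
    contribution = {"planner": 2.0, "worker_a": 1.0, "worker_b": -1.0}

    def toy_score(coalition: FrozenSet[str]) -> float:
        return sum(contribution[a] for a in coalition)

    credits = marginal_credit(contribution, toy_score)
    for agent, credit in credits.items():
        reward = combined_reward(1.0, credit, 0.5, RewardWeights())
        print(agent, credit, reward)
```

In this toy setup an agent whose removal raises the score receives a negative credit, which is one way a "harmful" agent would show up in the reward signal.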

A key technical innovation is the use of a counterfactual masking mechanism to approximate Shapley values without enumerating all coalitions. The authors integrate this with Group‑Relative Policy Optimization (GRPO) to normalize agent‑specific advantages across groups of trajectories, thereby reducing gradient variance and stabilizing training. The framework adopts a parameter‑sharing self‑play formulation: a single shared LLM policy πθ is instantiated as planner or worker via role‑specific system prompts, allowing a unified model to learn both high‑level planning and low‑level execution while keeping the architecture scalable to different model sizes.
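The group-relative normalization mentioned above can be illustrated as follows. This is a sketch of the general GRPO-style idea (subtract the group mean, divide by the group standard deviation), applied separately to each agent role. The function names, the use of the population standard deviation, and the `eps` constant are assumptions made for illustration; the summary does not give the exact formula used in SHARP.

```python
import statistics
from typing import Dict, List


def group_relative_advantages(
    rewards: List[float], eps: float = 1e-8
) -> List[float]:
    """Normalize rewards within one group of sampled trajectories.

    Each reward is centered on the group mean and scaled by the group
    standard deviation; eps avoids division by zero when all rewards match.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std: an assumption
    return [(r - mean) / (std + eps) for r in rewards]


def per_agent_advantages(
    group_rewards: Dict[str, List[float]]
) -> Dict[str, List[float]]:
    """Apply the normalization independently to each agent's rewards.

    group_rewards maps a (hypothetical) agent role name to that agent's
    reward in each trajectory of the same group.
    """
    return {
        agent: group_relative_advantages(rewards)
        for agent, rewards in group_rewards.items()
    }


if __name__ == "__main__":
    # Invented rewards for a group of four trajectories.
    rewards = {
        "planner": [1.0, 0.0, 1.0, 0.0],
        "worker": [0.2, 0.8, 0.4, 0.6],
    }
    for agent, adv in per_agent_advantages(rewards).items():
        print(agent, [round(a, 3) for a in adv])
```

Normalizing per agent rather than over one shared reward means each role's advantage is measured against its own performance across the group, so an agent whose reward is identical in every trajectory of a group receives zero advantage there.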

Extensive experiments are conducted on four real‑world benchmarks (MuSiQue, GAIA‑text, WebWalkerQA, and FRAMES) as well as the DocMathEval dataset for mathematical reasoning. Using the Qwen‑3‑8B backbone, SHARP achieves an average match‑score improvement of 23.66% over single‑agent baselines and 14.05% over existing multi‑agent methods. On an 8B model, SHARP yields a 14.41‑point absolute gain. Additionally, analysis of agent interactions shows a reduction in the proportion of “harmful” sub‑agents from 5.48% to 4.40%, indicating more effective collaboration.

The paper’s contributions are: (1) introducing a Shapley‑based marginal credit assignment that precisely isolates each agent’s impact; (2) designing a tripartite reward decomposition that simultaneously aligns global objectives, individual contributions, and tool‑execution quality; (3) presenting a flexible, parameter‑sharing self‑play architecture applicable to various hierarchical MAS configurations; and (4) providing comprehensive empirical evidence of superior performance and training stability compared to state‑of‑the‑art baselines.

Overall, SHARP offers a principled and practical solution to the credit‑assignment bottleneck in LLM‑augmented multi‑agent systems, enabling more efficient learning, better coordination, and higher task success rates.
