CAE-AV: Improving Audio-Visual Learning via Cross-modal Interactive Enrichment

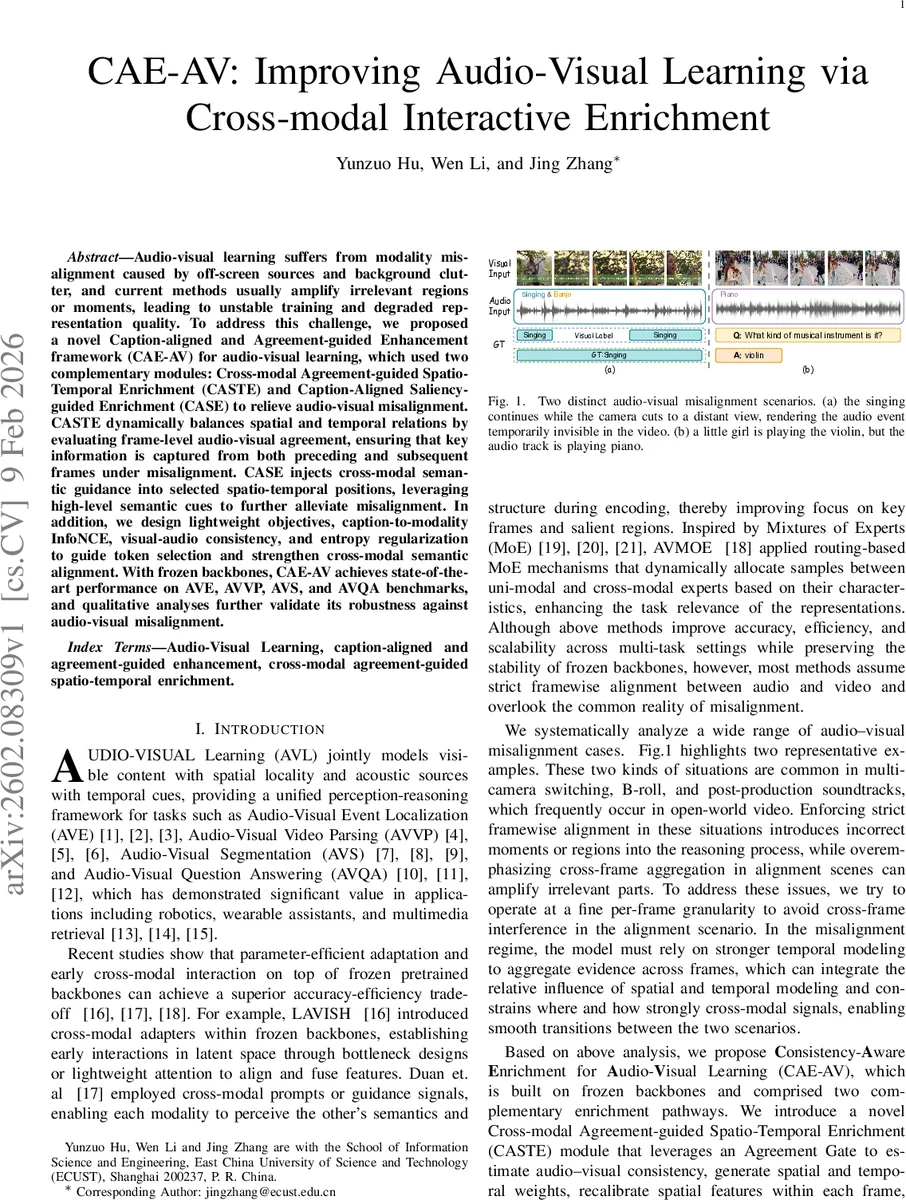

Audio-visual learning suffers from modality misalignment caused by off-screen sources and background clutter, and current methods usually amplify irrelevant regions or moments, leading to unstable training and degraded representation quality. To address this challenge, we proposed a novel Caption-aligned and Agreement-guided Enhancement framework (CAE-AV) for audio-visual learning, which used two complementary modules: Cross-modal Agreement-guided Spatio-Temporal Enrichment (CASTE) and Caption-Aligned Saliency-guided Enrichment (CASE) to relieve audio-visual misalignment. CASTE dynamically balances spatial and temporal relations by evaluating frame-level audio-visual agreement, ensuring that key information is captured from both preceding and subsequent frames under misalignment. CASE injects cross-modal semantic guidance into selected spatio-temporal positions, leveraging high-level semantic cues to further alleviate misalignment. In addition, we design lightweight objectives, caption-to-modality InfoNCE, visual-audio consistency, and entropy regularization to guide token selection and strengthen cross-modal semantic alignment. With frozen backbones, CAE-AV achieves state-of-the-art performance on AVE, AVVP, AVS, and AVQA benchmarks, and qualitative analyses further validate its robustness against audio-visual misalignment.

💡 Research Summary

The paper introduces CAE‑AV, a novel framework designed to mitigate modality misalignment in audio‑visual learning, a problem that arises frequently in real‑world videos due to off‑screen sound sources, background clutter, and multi‑camera cuts. Unlike prior parameter‑efficient approaches such as LAVISH, DG‑SCT, and AVMoE, which assume strict frame‑wise alignment and therefore suffer when this assumption is violated, CAE‑AV explicitly models and adapts to both aligned and misaligned regimes.

CAE‑AV consists of two complementary enrichment pathways that sit on top of frozen pretrained backbones (Swin‑Transformer for vision and HTS‑AT for audio). The first pathway, Cross‑modal Agreement‑guided Spatio‑Temporal Enrichment (CASTE), begins with an Agreement Gate that computes a frame‑level similarity score between visual and audio prototypes. Each frame’s visual and audio tokens are averaged to form prototypes, projected into a shared semantic space, and combined into a four‑dimensional feature vector (including the prototypes themselves, their difference, and element‑wise product). A two‑layer MLP processes this vector to produce raw spatial and temporal preference scores, which are then biased by the similarity score (positive bias for spatial emphasis, negative for temporal emphasis) and normalized with a softmax to obtain per‑frame weights w_sp and w_tm.

These weights control two lightweight enrichment modules. Spatial enrichment uses a MobileViT‑style depth‑wise separable attention that computes a global context vector per frame and recalibrates token values, thereby suppressing noise while highlighting salient regions. Temporal enrichment applies a depth‑wise 1‑D convolution (kernel size 3) followed by a point‑wise 1‑D convolution to capture short‑range dynamics across frames with minimal parameters. The spatial and temporal outputs are combined as w_sp·v_sp + w_tm·v_tm, and a token‑level saliency mask (Top‑K based on ℓ₂ norm) selects the most informative tokens for final injection, keeping the parameter budget low.

The second pathway, Caption‑Aligned Saliency‑guided Enrichment (CASE), injects high‑level semantic guidance derived from captions generated by a large language‑vision model (LLM). Captions are encoded with a frozen CLIP encoder, aligned to visual and audio tokens via cross‑modal attention, and projected back into the backbone feature space. This textual anchor supplies semantic priors that are especially useful when audio and visual streams are temporally out of sync, helping the model to reconcile meaning across modalities.

Training employs three lightweight objectives: (1) a caption‑to‑modality InfoNCE loss that pulls together matching text‑modality pairs and pushes apart mismatches, (2) a visual‑audio consistency loss that maximizes cosine similarity between enriched visual and audio tokens, and (3) an entropy regularization term that prevents the token selection mask from collapsing to trivial solutions. These losses stabilize optimization despite the frozen backbones and encourage the enrichment modules to focus on semantically relevant information.

Extensive experiments on four benchmark tasks—Audio‑Visual Event Localization (AVE), Audio‑Visual Video Parsing (AVVP), Audio‑Visual Segmentation (AVS), and Audio‑Visual Question Answering (AVQA)—show that CAE‑AV consistently outperforms prior state‑of‑the‑art methods. Notably, in scenarios with off‑screen audio or mismatched audio‑visual pairs, CASTE dynamically shifts emphasis from spatial to temporal modeling, while CASE supplies textual grounding that restores semantic alignment. Qualitative visualizations illustrate frame‑wise gating behavior and the effect of caption‑driven attention, confirming that the model adapts gracefully between aligned and misaligned conditions.

In summary, CAE‑AV advances audio‑visual learning by (i) explicitly estimating cross‑modal agreement to guide adaptive spatio‑temporal enrichment, (ii) leveraging caption‑derived semantic priors for robust alignment, (iii) maintaining a lightweight, frozen‑backbone architecture, and (iv) delivering superior performance across diverse tasks, thereby establishing a new paradigm for handling modality misalignment in multimodal perception.

Comments & Academic Discussion

Loading comments...

Leave a Comment