JUSTICE: Judicial Unified Synthesis Through Intermediate Conclusion Emulation for Automated Judgment Document Generation

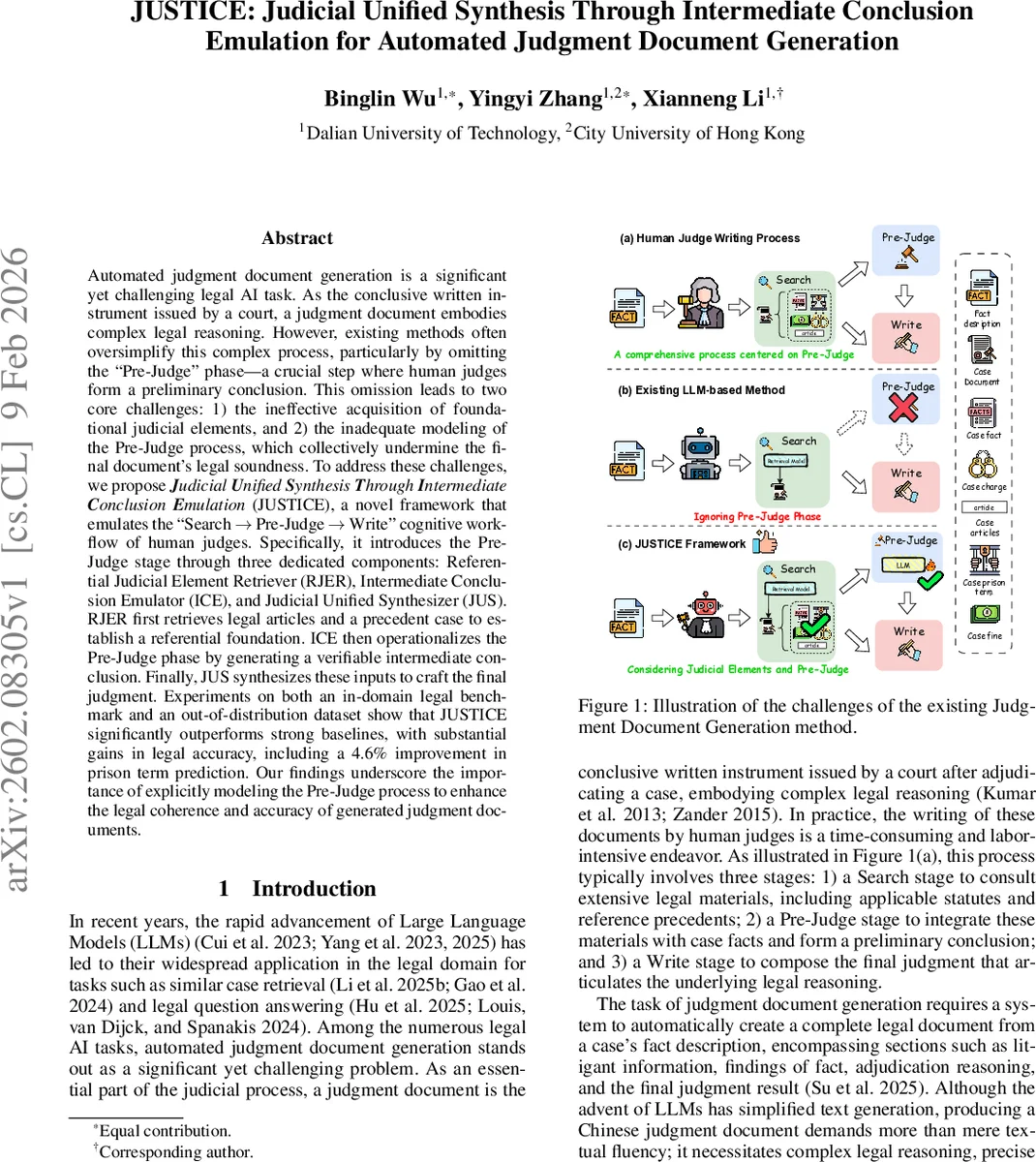

Automated judgment document generation is a significant yet challenging legal AI task. As the conclusive written instrument issued by a court, a judgment document embodies complex legal reasoning. However, existing methods often oversimplify this complex process, particularly by omitting the Pre-Judge'' phase, a crucial step where human judges form a preliminary conclusion. This omission leads to two core challenges: 1) the ineffective acquisition of foundational judicial elements, and 2) the inadequate modeling of the Pre-Judge process, which collectively undermine the final document's legal soundness. To address these challenges, we propose \textit{\textbf{J}udicial \textbf{U}nified \textbf{S}ynthesis \textbf{T}hrough \textbf{I}ntermediate \textbf{C}onclusion \textbf{E}mulation} (JUSTICE), a novel framework that emulates the Search $\rightarrow$ Pre-Judge $\rightarrow$ Write’’ cognitive workflow of human judges. Specifically, it introduces the Pre-Judge stage through three dedicated components: Referential Judicial Element Retriever (RJER), Intermediate Conclusion Emulator (ICE), and Judicial Unified Synthesizer (JUS). RJER first retrieves legal articles and a precedent case to establish a referential foundation. ICE then operationalizes the Pre-Judge phase by generating a verifiable intermediate conclusion. Finally, JUS synthesizes these inputs to craft the final judgment. Experiments on both an in-domain legal benchmark and an out-of-distribution dataset show that JUSTICE significantly outperforms strong baselines, with substantial gains in legal accuracy, including a 4.6% improvement in prison term prediction. Our findings underscore the importance of explicitly modeling the Pre-Judge process to enhance the legal coherence and accuracy of generated judgment documents.

💡 Research Summary

The paper tackles the challenging task of automated judgment document generation, a problem that requires not only fluent text generation but also rigorous legal reasoning, accurate citation of statutes, and precise determination of penalties. Existing large‑language‑model (LLM) approaches typically follow a simplified “search → write” pipeline: they retrieve relevant legal materials and then directly generate the judgment, completely ignoring the intermediate “Pre‑Judge” phase that human judges perform. This omission leads to two major shortcomings: (1) insufficient acquisition of foundational judicial elements (statutes and precedent cases) and (2) inadequate modeling of the analogical reasoning judges use to form a preliminary conclusion before drafting the final opinion. Both issues degrade the legal soundness of the generated documents.

To remedy this, the authors propose JUSTICE (Judicial Unified Synthesis Through Intermediate Conclusion Emulation), a three‑stage framework that explicitly mirrors the cognitive workflow of a human judge: Search → Pre‑Judge → Write. The framework consists of three dedicated components:

-

Referential Judicial Element Retriever (RJER) – RJER simultaneously retrieves statutory articles and a single most relevant precedent case. For statutes, a bi‑encoder first produces a set of candidate articles, which are then re‑ranked by a cross‑encoder to select the top‑k most relevant ones. For case retrieval, a structure‑aware model (SAILER) identifies the most similar prior case, and a rule‑based extractor pulls out key elements (facts, charge, applicable articles, term, fine) from that case. The retrieved statutes are split into those already present in the precedent (A_case) and external statutes (A_ext). RJER finally outputs a composite reference set E_ref = {E_case, A_ext, C_doc}.

-

Intermediate Conclusion Emulator (ICE) – ICE implements the Pre‑Judge stage. It constructs a structured prompt that concatenates the current fact description, the extracted precedent elements, and the external statutes. A fine‑tuned LLM then generates a structured tuple J_pre = (p_articles, p_charge, p_term, p_fine). This process is framed as an analogical reasoning task: the model must infer the outcome for the current case by analogy to the precedent case while taking into account the additional statutes. The output is a verifiable intermediate conclusion that serves as a bridge between retrieval and final writing.

-

Judicial Unified Synthesizer (JUS) – JUS is the writer. It builds a final prompt that includes the original facts, the intermediate conclusion J_pre, the full precedent document C_doc, and a document template. A second fine‑tuned LLM generates the complete judgment D, optimizing a standard language‑modeling loss. Because JUS receives the intermediate conclusion, it can produce a judgment with coherent logical flow, correct statutory citations, and consistent penalty calculations.

The authors evaluate JUSTICE on two datasets: the in‑domain Chinese JuDGE benchmark (≈2 k training samples) and an out‑of‑distribution test set derived from LeCaRDv2 (LeCaRDv2‑Doc). They adopt a multi‑dimensional evaluation framework covering legal accuracy (charge F1, article F1), penalty accuracy (correctness of prison term and fine), and textual quality (METEOR, BERTScore). Baselines span three categories: (1) CVG methods (C3VG, EGG, EGG‑free), (2) LJP methods (TopJudge, MBPFN, Neur‑Judge), and (3) LLM‑based methods (in‑context learning, supervised fine‑tuning, MRAG). Across all metrics, JUSTICE significantly outperforms every baseline. Notably, prison‑term prediction improves by 4.6 percentage points, and statutory citation F1 scores increase by 3–5 pp. Ablation studies reveal that removing RJER harms the quality of retrieved statutes and precedent elements, leading to a drop in ICE’s analogical predictions; omitting ICE forces JUS to generate judgments without an explicit intermediate conclusion, resulting in lower legal coherence.

Further analysis shows a strong correlation between the richness of RJER’s retrieved elements and ICE’s prediction accuracy: more diverse external statutes (A_ext) and higher similarity of the retrieved precedent boost the correctness of p_articles and p_charge. The structured intermediate conclusion also makes error diagnosis easier, as mismatches between predicted and ground‑truth tuples can be directly inspected.

The paper acknowledges several limitations. The current implementation is confined to Chinese legal texts; extending to multilingual or common‑law jurisdictions would require additional retrieval and reasoning components. ICE’s tuple format may struggle with cases involving multiple charges or concurrent penalties, where a more expressive representation would be needed. Moreover, the system relies heavily on retrieval quality; if the retrieved precedent is weakly related, the analogical reasoning may propagate errors. Finally, the LLMs used are fine‑tuned but not explicitly trained to understand the semantics of statutes, limiting deeper legal interpretation.

Future directions proposed include: (1) enriching ICE with multi‑turn dialogic reasoning to handle complex multi‑charge scenarios, (2) integrating statute summarization or semantic parsing to enable deeper comprehension beyond surface citation, (3) developing interactive interfaces where human judges can review and edit the intermediate conclusion before final drafting, and (4) evaluating the framework on other legal systems and languages to test its generality.

In summary, JUSTICE introduces a novel, cognitively inspired architecture that explicitly models the Pre‑Judge phase, thereby bridging the gap between raw legal retrieval and coherent judgment writing. By decomposing the task into retrieval, analogical intermediate conclusion, and synthesis, the framework achieves superior legal accuracy and textual quality, setting a new benchmark for automated judgment generation and opening avenues for more transparent, interpretable legal AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment