Cross-Modal Bottleneck Fusion For Noise Robust Audio-Visual Speech Recognition

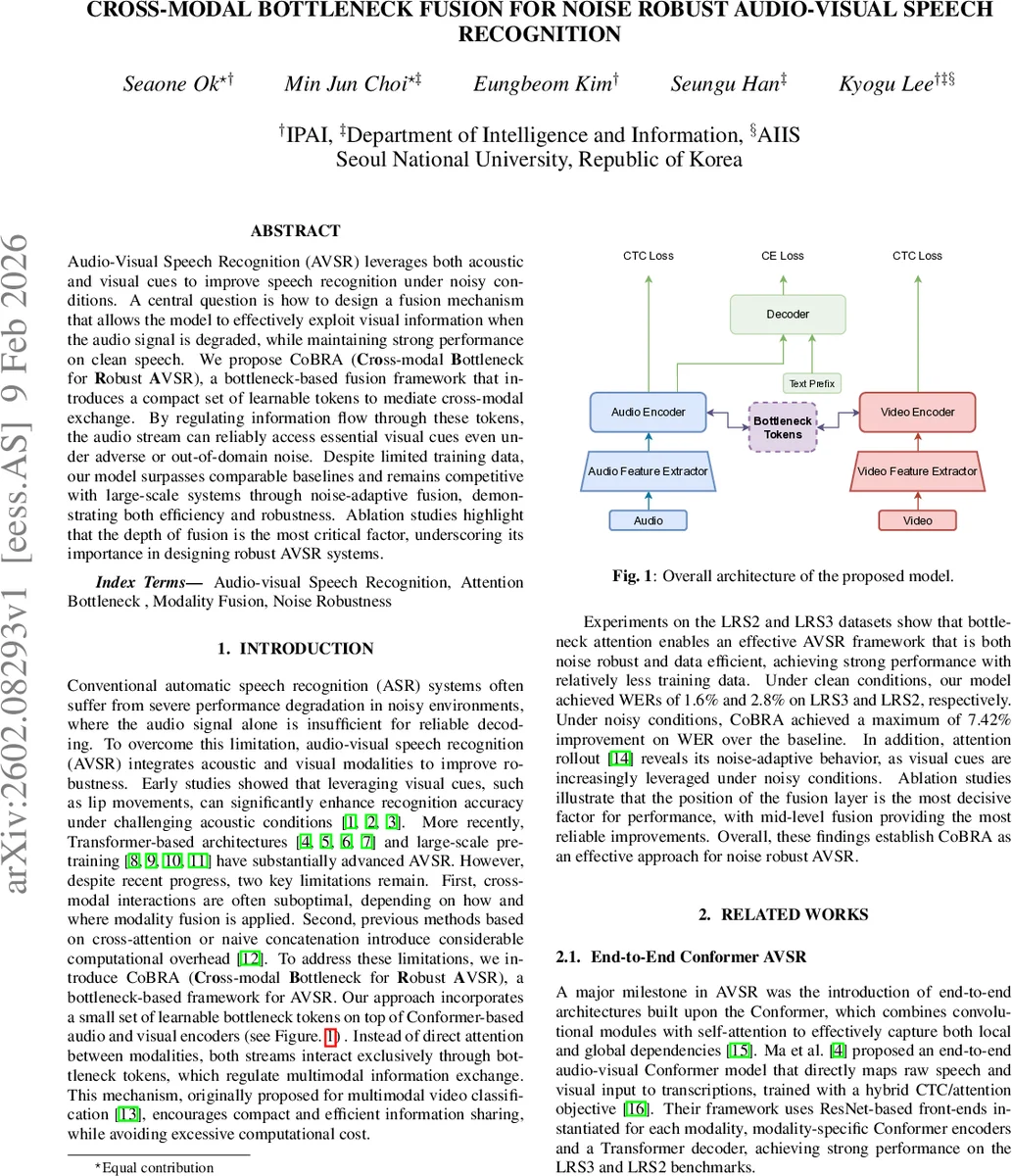

Audio-Visual Speech Recognition (AVSR) leverages both acoustic and visual cues to improve speech recognition under noisy conditions. A central question is how to design a fusion mechanism that allows the model to effectively exploit visual information when the audio signal is degraded, while maintaining strong performance on clean speech. We propose CoBRA (Cross-modal Bottleneck for Robust AVSR), a bottleneck-based fusion framework that introduces a compact set of learnable tokens to mediate cross-modal exchange. By regulating information flow through these tokens, the audio stream can reliably access essential visual cues even under adverse or out-of-domain noise. Despite limited training data, our model surpasses comparable baselines and remains competitive with large-scale systems through noise-adaptive fusion, demonstrating both efficiency and robustness. Ablation studies highlight that the depth of fusion is the most critical factor, underscoring its importance in designing robust AVSR systems.

💡 Research Summary

The paper introduces CoBRA (Cross‑modal Bottleneck for Robust Audio‑Visual Speech Recognition), a novel fusion framework that leverages a small set of learnable bottleneck tokens to mediate information exchange between audio and visual streams. Traditional AVSR systems either concatenate modalities or employ cross‑attention, both of which can be computationally expensive and may pass redundant information. CoBRA addresses these issues by inserting 32 learnable tokens (dimension 768) at a specific depth (the 4th Conformer layer) of each modality’s encoder. The audio encoder processes log‑Mel filter‑bank features through a 1‑D ResNet front‑end and a 12‑layer Conformer, while the visual encoder uses a 3D + 2D ResNet to extract mouth ROI sequences followed by an identical Conformer stack.

Two update strategies for the bottleneck are explored: (a) sequential fusion, where audio‑bottleneck‑video updates occur in order, and (b) mean fusion, where both modalities update the bottleneck simultaneously. Experiments show both strategies outperform a strong baseline, with sequential fusion yielding slightly lower word error rates (WER). After the bottleneck interaction, a 6‑layer Transformer decoder generates the transcription, trained with a hybrid CTC/attention loss that also includes a video‑CTC term to enforce temporal alignment for both modalities.

The authors evaluate on the LRS2 and LRS3 benchmarks, training on only 664 hours of data (≈30 % of the full corpora). During training, babble noise from NOISEX is mixed at SNRs ranging from –5 dB to 20 dB; evaluation adds pink and white noise at controlled SNRs (12.5 dB to –7.5 dB). In clean conditions CoBRA achieves 1.6 % WER on LRS3 and 2.8 % on LRS2, comparable to large‑scale models such as Whisper‑Flamingo and Auto‑AVSR that use several thousand hours of data. Under severe noise (–7.5 dB babble), CoBRA reduces WER by up to 40 % relative to the baseline, demonstrating strong noise robustness.

Ablation studies dissect three key factors: bottleneck size, fusion depth, and update strategy. Increasing the token count from 4 to 16 and 32 progressively improves performance, with 32 tokens offering the most stable results across clean and noisy settings. Varying the fusion layer reveals that mid‑level fusion (L_f = 4) consistently yields the lowest WER, while early (L_f = 0) and late (L_f = 8) fusion provide limited gains, likely due to excessive redundancy or insufficient cross‑modal interaction, respectively. Both sequential and mean updates outperform the baseline, with only marginal differences between them.

To quantify cross‑modal influence, the authors extend attention rollout analysis. The normalized video‑to‑audio influence ( f̄_v→a ) rises sharply as noise intensifies, while audio‑to‑video influence ( f̄_a→v ) declines, indicating that the model dynamically relies more on visual cues when the acoustic signal is degraded. This adaptive behavior aligns with human lip‑reading strategies under noisy conditions.

In summary, CoBRA contributes (1) a lightweight bottleneck‑based fusion mechanism that curtails computational cost while preserving essential cross‑modal information, (2) empirical evidence that the depth of fusion is the most critical hyper‑parameter for robust AVSR, and (3) a demonstration that strong noise robustness can be achieved with limited training data. Future work may explore dynamic bottleneck sizing, multi‑speaker extensions, and integration with large‑scale pre‑training to further enhance generalization.

Comments & Academic Discussion

Loading comments...

Leave a Comment