G-LNS: Generative Large Neighborhood Search for LLM-Based Automatic Heuristic Design

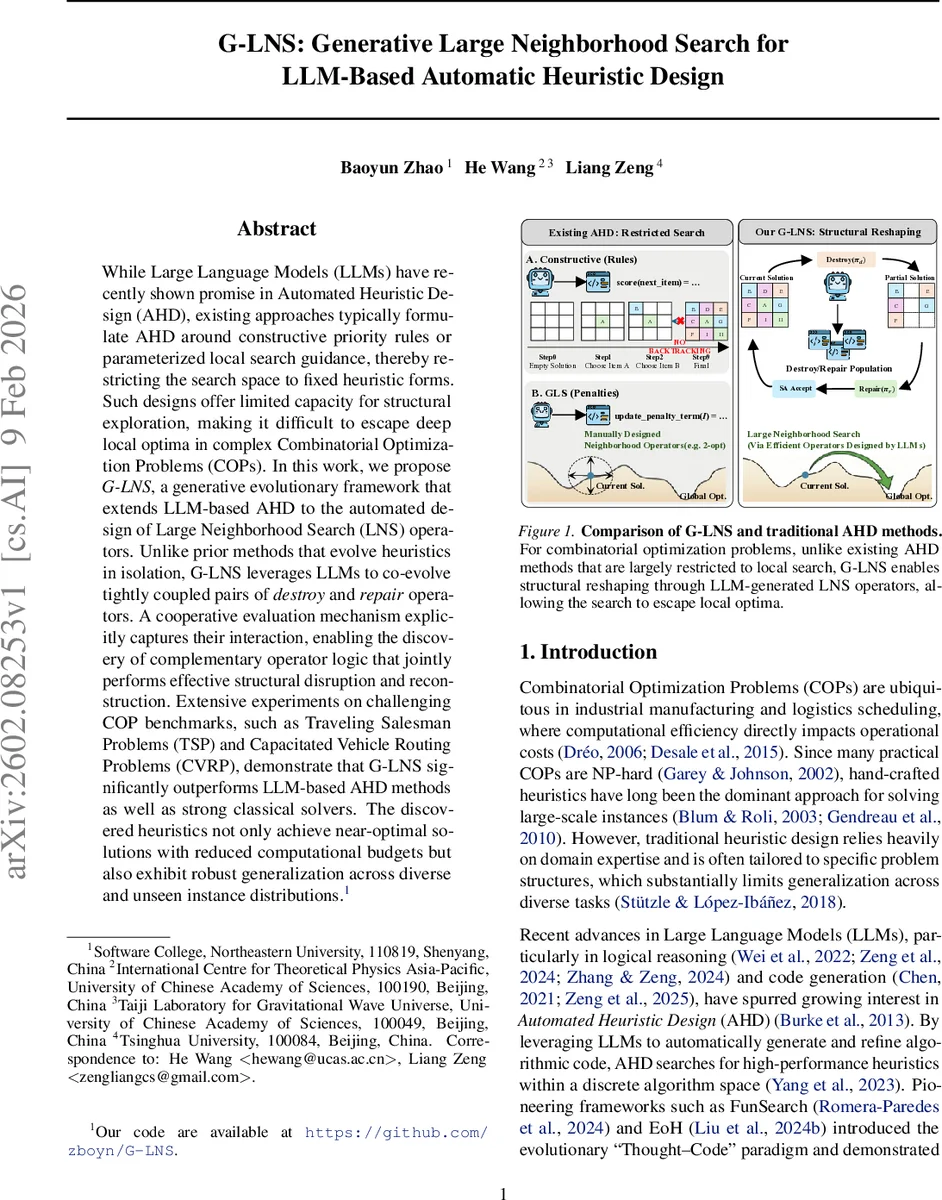

While Large Language Models (LLMs) have recently shown promise in Automated Heuristic Design (AHD), existing approaches typically formulate AHD around constructive priority rules or parameterized local search guidance, thereby restricting the search space to fixed heuristic forms. Such designs offer limited capacity for structural exploration, making it difficult to escape deep local optima in complex Combinatorial Optimization Problems (COPs). In this work, we propose G-LNS, a generative evolutionary framework that extends LLM-based AHD to the automated design of Large Neighborhood Search (LNS) operators. Unlike prior methods that evolve heuristics in isolation, G-LNS leverages LLMs to co-evolve tightly coupled pairs of destroy and repair operators. A cooperative evaluation mechanism explicitly captures their interaction, enabling the discovery of complementary operator logic that jointly performs effective structural disruption and reconstruction. Extensive experiments on challenging COP benchmarks, such as Traveling Salesman Problems (TSP) and Capacitated Vehicle Routing Problems (CVRP), demonstrate that G-LNS significantly outperforms LLM-based AHD methods as well as strong classical solvers. The discovered heuristics not only achieve near-optimal solutions with reduced computational budgets but also exhibit robust generalization across diverse and unseen instance distributions.

💡 Research Summary

The paper introduces G‑LNS, a novel evolutionary framework that automates the design of Large Neighborhood Search (LNS) operators by co‑evolving tightly coupled destroy and repair components with the help of large language models (LLMs). Traditional Automated Heuristic Design (AHD) methods have largely focused on constructive priority‑rule heuristics or parameter‑tuned local‑search operators, which restrict the search space to fixed algorithmic templates and make it difficult to escape deep local optima in combinatorial optimization problems (COPs) such as the Traveling Salesperson Problem (TSP) and the Capacitated Vehicle Routing Problem (CVRP).

G‑LNS addresses this structural bottleneck by treating the design of LNS operators as a discrete code‑space optimization problem. Two separate populations are maintained: a destroy‑operator pool $P_d$ and a repair‑operator pool $P_r$. Each population is initialized with a few classic domain‑expert operators (e.g., Random Removal, Worst Removal, Greedy Insertion) and then expanded to a fixed size $N$ using LLM‑driven prompts ($i_1$ for destroy, $i_2$ for repair) that generate executable Python code. This dual‑population architecture enables the framework to explore a much richer set of algorithmic structures than single‑population or template‑based approaches.
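To make the seed operators concrete, here is a minimal sketch of one destroy operator (Random Removal) and one repair operator (Greedy Insertion) for TSP. The function names, signatures, and the tour-as-list representation are assumptions made for illustration; the summary does not specify the interface G‑LNS actually uses.

```python
import math
import random


def tour_length(tour, coords):
    """Total length of a closed tour over 2-D points."""
    n = len(tour)
    return sum(math.dist(coords[tour[i]], coords[tour[(i + 1) % n]]) for i in range(n))


def random_removal(tour, k, rng):
    """Destroy: remove k randomly chosen nodes; return (partial_tour, removed)."""
    removed = rng.sample(tour, k)
    removed_set = set(removed)
    partial = [node for node in tour if node not in removed_set]
    return partial, removed


def greedy_insertion(partial, removed, coords):
    """Repair: reinsert each removed node at its cheapest position."""
    tour = list(partial)
    for node in removed:
        best_pos, best_delta = 0, float("inf")
        for i in range(len(tour)):
            a, b = tour[i], tour[(i + 1) % len(tour)]
            # Extra length incurred by placing `node` between a and b.
            delta = (
                math.dist(coords[a], coords[node])
                + math.dist(coords[node], coords[b])
                - math.dist(coords[a], coords[b])
            )
            if delta < best_delta:
                best_pos, best_delta = i + 1, delta
        tour.insert(best_pos, node)
    return tour
```

One LNS step under these assumptions would call `random_removal` on the current tour and pass its two return values to `greedy_insertion`; the result is again a complete tour visiting every node once.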

Evaluation proceeds through a multi‑episode scheme. In each episode, a random initial solution is iteratively improved for $T$ LNS steps. At every step a destroy operator $d_i$ and a repair operator $r_j$ are selected via roulette‑wheel sampling based on adaptive weights. The pair is applied, producing a neighbor solution $x'$. A hierarchical reward system assigns one of four scores ($\sigma_1$–$\sigma_4$) depending on whether $x'$ improves the global best, improves the current solution, is accepted by Simulated Annealing, or is rejected. The reward updates three key structures: (1) adaptive weights for the current episode (smoothly updated with factor $\lambda$), (2) global fitness scores $F$ that accumulate rewards across episodes for each operator, and (3) a synergy matrix $S$ that records the joint performance of each destroy‑repair pair. High values in $S$ indicate complementary logic and guide a “Synergistic Joint Crossover” that preferentially recombines well‑performing pairs.
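The selection and credit-assignment step described above can be sketched as follows. This is an illustration under stated assumptions, not the paper's implementation: the numeric values in `SIGMA`, the exponential-smoothing form of the weight update, the additive update of $S$, and the `state` dictionary layout are all hypothetical choices borrowed from common adaptive LNS practice.

```python
import math
import random

# Hypothetical scores for sigma_1..sigma_4 (new global best, improvement,
# accepted by Simulated Annealing, rejected). The paper's values are not given here.
SIGMA = (3.0, 2.0, 1.0, 0.1)


def roulette(weights, rng):
    """Sample an index with probability proportional to its weight."""
    return rng.choices(range(len(weights)), weights=weights, k=1)[0]


def reward(new_cost, cur_cost, best_cost, temperature, rng):
    """Return (score, accepted) following the four-tier scheme (minimization)."""
    if new_cost < best_cost:
        return SIGMA[0], True
    if new_cost < cur_cost:
        return SIGMA[1], True
    # Simulated Annealing acceptance of a non-improving neighbor.
    if rng.random() < math.exp(-(new_cost - cur_cost) / temperature):
        return SIGMA[2], True
    return SIGMA[3], False


def update(state, i, j, score, lam=0.8):
    """Credit destroy operator i and repair operator j with `score`."""
    # (1) Episode-level adaptive weights: assumed exponential smoothing with factor lam.
    state["w_d"][i] = lam * state["w_d"][i] + (1 - lam) * score
    state["w_r"][j] = lam * state["w_r"][j] + (1 - lam) * score
    # (2) Global fitness accumulated across episodes.
    state["F_d"][i] += score
    state["F_r"][j] += score
    # (3) Synergy matrix entry for the (destroy, repair) pair.
    state["S"][i][j] += score
```

Keeping the lowest score strictly positive is a deliberate choice in this sketch: it prevents all weights from decaying to zero, which would make roulette-wheel sampling undefined.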

After every $K$ episodes, the bottom‑performing $M$ operators in each pool are pruned based on their global fitness. The LLM then performs mutation (code variation), homo‑crossover (within‑type recombination), and the synergy‑aware joint crossover to refill the populations with novel operators. A sanity check ensures generated code is syntactically correct and executable before it re‑enters the evolutionary loop.
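A minimal sketch of the pruning step and the sanity check might look like the following. The helper names `prune` and `passes_sanity_check`, the probe-call convention, and the use of plain `exec` are assumptions for illustration; the summary does not describe how G‑LNS validates generated code, and the LLM-driven refill step is omitted because its interface is not specified.

```python
import ast


def passes_sanity_check(source, func_name, probe_args):
    """Return True if `source` parses, defines `func_name`, and runs on a probe input."""
    try:
        ast.parse(source)  # syntax check
        namespace = {}
        # NOTE: untrusted LLM output should run in a sandbox with a timeout;
        # plain exec is used here only to keep the sketch short.
        exec(source, namespace)
        namespace[func_name](*probe_args)  # executability check
        return True
    except Exception:
        return False


def prune(pool, fitness, m):
    """Drop the m operators with the lowest global fitness, preserving pool order."""
    ranked = sorted(range(len(pool)), key=lambda i: fitness[i], reverse=True)
    keep = sorted(ranked[: len(pool) - m])
    return [pool[i] for i in keep], [fitness[i] for i in keep]
```

In this sketch a candidate operator is rejected if it fails to parse, does not define the expected function, or raises on the probe input; a production check would likely also verify that the returned solution is feasible.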

Experimental results on a suite of TSP instances (100–500 nodes) and CVRP instances (50–200 customers) demonstrate that G‑LNS consistently outperforms state‑of‑the‑art AHD systems (FunSearch, EoH) and strong classical solvers (ALNS, LKH, Concorde). It achieves lower average optimality gaps (often < 0.8 %) and reduces computational budgets by roughly 10 % compared to baselines. Importantly, the operators discovered by G‑LNS generalize well to unseen instance distributions, maintaining small gaps where other methods deteriorate sharply.

The authors argue that the key to this success is the explicit modeling of operator interaction via the synergy matrix and the co‑evolution of destroy‑repair pairs, which enables structural innovation beyond mere parameter tuning. They also discuss limitations: reliance on Python code and LLM API costs, potential scalability issues as the code base grows, and the need for more sophisticated code verification mechanisms.

In conclusion, G‑LNS expands the horizon of LLM‑based automated heuristic design by moving from fixed local‑search templates to the automated synthesis of large‑scale, structurally rich neighborhood operators. The framework opens avenues for extending the approach to other meta‑heuristics (e.g., Variable Neighborhood Search), multi‑module algorithm design, and cost‑effective LLM integration, thereby setting a new benchmark for automated, generalizable combinatorial optimization.
