Weak-Driven Learning: How Weak Agents make Strong Agents Stronger

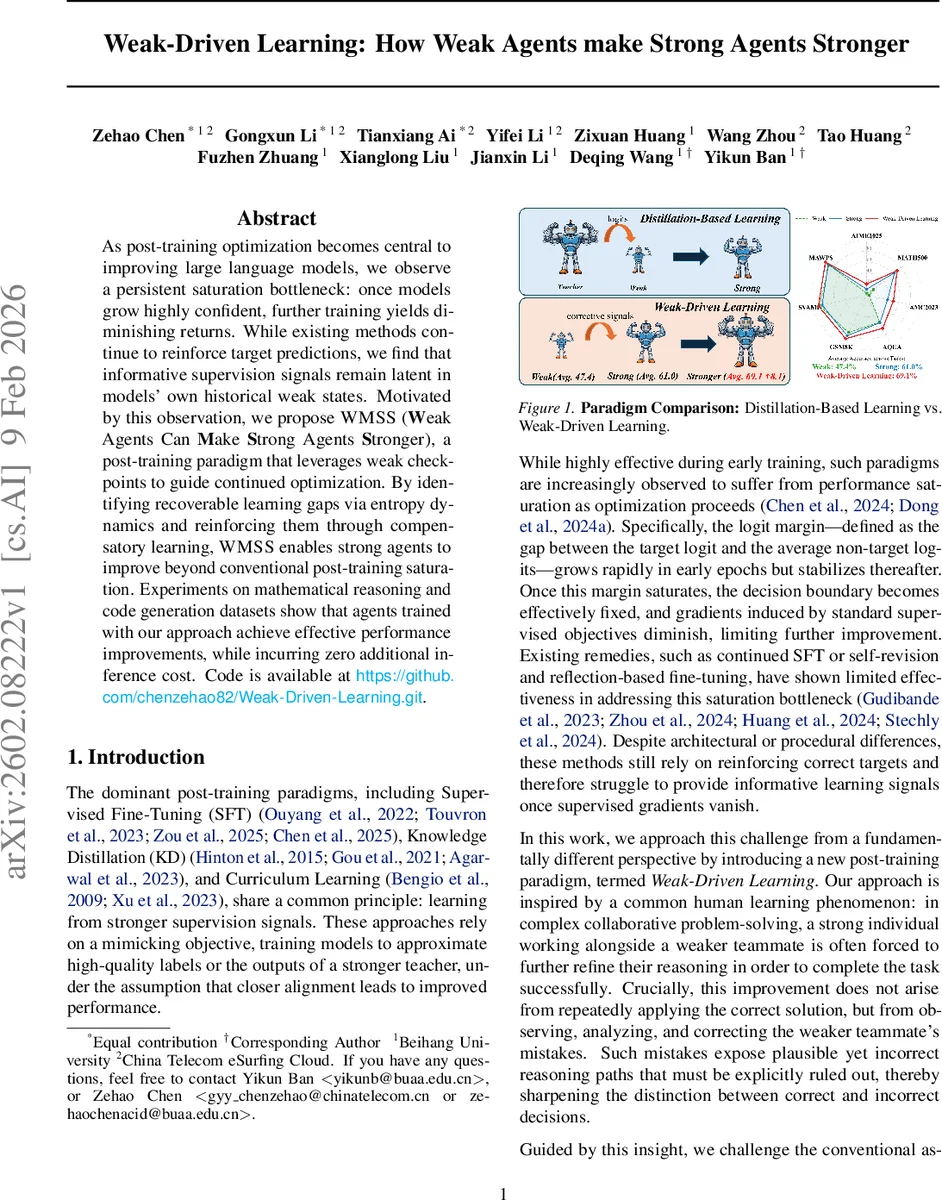

As post-training optimization becomes central to improving large language models, we observe a persistent saturation bottleneck: once models grow highly confident, further training yields diminishing returns. While existing methods continue to reinforce target predictions, we find that informative supervision signals remain latent in models’ own historical weak states. Motivated by this observation, we propose WMSS (Weak Agents Can Make Strong Agents Stronger), a post-training paradigm that leverages weak checkpoints to guide continued optimization. By identifying recoverable learning gaps via entropy dynamics and reinforcing them through compensatory learning, WMSS enables strong agents to improve beyond conventional post-training saturation. Experiments on mathematical reasoning and code generation datasets show that agents trained with our approach achieve effective performance improvements, while incurring zero additional inference cost.

💡 Research Summary

The paper tackles the well‑known saturation problem that arises during post‑training of large language models (LLMs). After a model becomes highly confident, the logit margin stabilizes, gradients on non‑target tokens vanish, and further supervised fine‑tuning (SFT) or knowledge distillation (KD) yields diminishing returns. The authors observe that a model’s own historical checkpoints—still “weak” compared to the current strong version—contain useful supervision signals that are ignored by conventional methods.

Motivated by this, they introduce a new paradigm called Weak‑Driven Learning (WDL). Instead of learning from a stronger teacher, a weak reference model (M_weak) is used to inject structured uncertainty into the training of the strong model (M_strong). The core mechanism is logit mixing: for each training example, the logits of the weak and strong models are linearly combined, z_mix = λ·z_strong + (1‑λ)·z_weak, and the mixed distribution is used in a standard cross‑entropy loss. Because the weak model retains higher entropy, it assigns non‑negligible probability mass to plausible but incorrect tokens. This re‑introduces gradients on those tokens, preventing them from vanishing and allowing the strong model to continue refining its decision boundary even after the usual SFT gradient signal has disappeared.

The training pipeline, named WMSS, consists of three phases. (1) Initialization: a base model is fine‑tuned to obtain M_strong, while M_weak is set to the original checkpoint. (2) Curriculum‑enhanced data activation: entropy dynamics ΔH = H(M_strong) – H(M_weak) are computed for each sample; a mixture of base difficulty, consolidation (samples where the strong model became overly certain) and regression repair (samples where the strong model is now less certain) determines a sampling probability. This focuses training on data that are either inherently hard or have suffered forgetting. (3) Joint training: the mixed‑logit loss is applied on the curriculum‑selected data, updating only the strong model while the weak model remains fixed (or co‑trained). No extra inference cost is incurred because the weak model is used only during training.

The authors provide a gradient‑level analysis showing that the mixed gradient g_mix = P_mix – e_y retains a term proportional to P_weak(k) for every non‑target token k, thereby amplifying learning pressure in saturated regions. By adjusting λ, one can smoothly interpolate between pure SFT (λ=1) and strong reliance on the weak signal (λ≈0).

Empirical results on mathematical reasoning benchmarks (e.g., MATH, GSM‑8K) and code generation (HumanEval) demonstrate consistent improvements of 1–2 absolute percentage points over strong baselines, with particular gains on difficult or previously forgotten examples. Importantly, inference latency and memory usage remain unchanged because the weak model is discarded after training. The code and checkpoints are publicly released for reproducibility.

In summary, Weak‑Driven Learning shows that historical weak checkpoints can serve as effective corrective supervisors, breaking the saturation barrier of conventional post‑training methods without requiring a stronger teacher or incurring inference overhead. This opens a new direction for cost‑effective model refinement and suggests further research into weak‑model selection, adaptive λ schedules, and extensions to other modalities.

Comments & Academic Discussion

Loading comments...

Leave a Comment