CoRect: Context-Aware Logit Contrast for Hidden State Rectification to Resolve Knowledge Conflicts

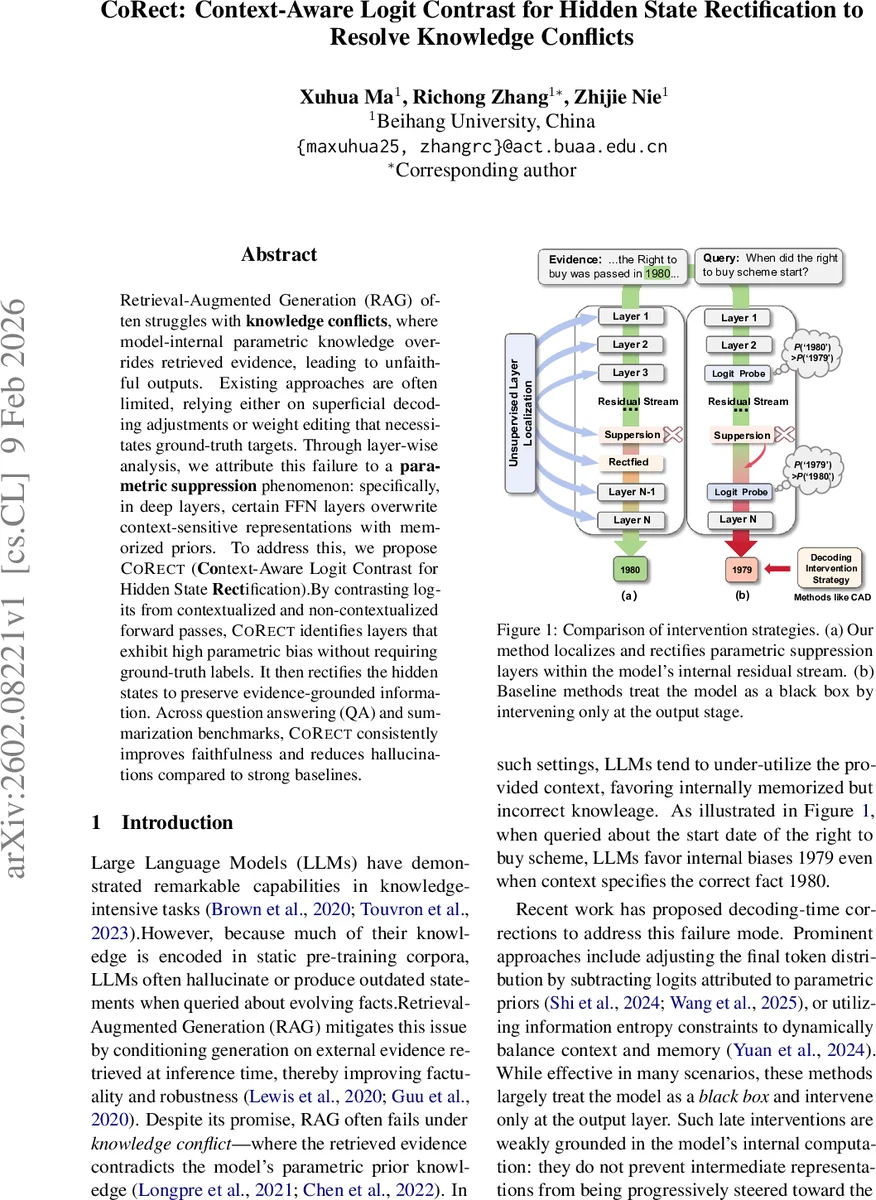

Retrieval-Augmented Generation (RAG) often struggles with knowledge conflicts, where model-internal parametric knowledge overrides retrieved evidence, leading to unfaithful outputs. Existing approaches are often limited, relying either on superficial decoding adjustments or weight editing that necessitates ground-truth targets. Through layer-wise analysis, we attribute this failure to a parametric suppression phenomenon: specifically, in deep layers, certain FFN layers overwrite context-sensitive representations with memorized priors. To address this, we propose CoRect (Context-Aware Logit Contrast for Hidden State Rectification). By contrasting logits from contextualized and non-contextualized forward passes, CoRect identifies layers that exhibit high parametric bias without requiring ground-truth labels. It then rectifies the hidden states to preserve evidence-grounded information. Across question answering (QA) and summarization benchmarks, CoRect consistently improves faithfulness and reduces hallucinations compared to strong baselines.

💡 Research Summary

Retrieval‑augmented generation (RAG) promises to improve factuality by conditioning large language models (LLMs) on external evidence retrieved at inference time. In practice, however, a frequent failure mode—knowledge conflict—occurs when the model’s internal parametric knowledge overrides the retrieved evidence, leading to hallucinations or outdated statements. Existing remedies fall into two categories. Decoding‑time interventions such as CAD or AdaCAD adjust the final token distribution by subtracting logits associated with parametric priors, but they treat the model as a black box and cannot stop the internal propagation of incorrect information. Weight‑editing approaches like ROME or MEMIT edit feed‑forward network (FFN) weights to embed a target fact, yet they require a known ground‑truth answer to compute the edit, making them unsuitable for open‑ended RAG where the correct answer is unknown.

The authors of “CoRect: Context‑Aware Logit Contrast for Hidden State Rectification to Resolve Knowledge Conflicts” conduct a mechanistic analysis using the Logit Lens technique. By projecting each layer’s residual stream onto the vocabulary space, they observe that, in many conflict cases, the correct answer token attains a high probability in early or middle layers but is dramatically demoted in deeper layers. They term this phenomenon “Parametric Suppression” and identify a subset of deep FFN layers that act as key‑value memories, overwriting evidence‑consistent representations with memorized priors.

CoRect introduces a two‑stage, training‑free framework to mitigate this problem without any ground‑truth label. Stage 1, Trustworthy Token Selection, runs two forward passes for the same input: one with the full retrieved context and one with a null context containing only the instruction tokens. For each layer l, the method computes an information‑gain score S(l)_info(v) = log P(v|z^ctx_l) – log P(v|z^null_l) for every token v, where z denotes the logits obtained via the Logit Lens. The scores from the last k layers are averaged to produce a global score S_total(v). The top‑M tokens by this score form a candidate set C. An attention‑based verification step then measures how much the model’s attention focuses on occurrences of each candidate in the retrieved evidence; the token with the highest attention‑weighted evidence is selected as the provisional target token ˜t*.

Stage 2, Hidden State Rectification, uses the identified token ˜t* to construct a corrective signal for the previously localized FFN layers. For each suspect layer l, the method computes the logit contrast between the contextual and null passes to isolate the component that suppresses ˜t*. A small perturbation δ_l, aligned opposite to the unembedding vector w_{˜t*}, is added to the FFN output u_l (i.e., u_l ← u_l + δ_l). This operation “disinhibits” the evidence‑grounded representation rather than injecting a new target, preserving the model’s generative capabilities while blocking the parametric bias.

Extensive experiments on question‑answering benchmarks (Natural Questions, TriviaQA) and summarization datasets (CNN/DailyMail, XSum) demonstrate that CoRect consistently outperforms strong baselines. In QA, CoRect improves exact match by ~4.2 percentage points and F1 by ~3.7 pp, while raising FactCC factuality scores by ~6.5 pp. In summarization, ROUGE‑L improves by ~1.1 points and hallucination rates drop by roughly 18 %. An ablation study shows that CoRect correctly localizes the conflict‑inducing layers with over 70 % recall relative to the layers edited by ROME, and that more than half of the error cases contain the correct answer token in intermediate layers—a key justification for the hidden‑state approach.

The paper also discusses trade‑offs: the magnitude of δ_l controls the balance between factuality and fluency, and the method incurs additional forward passes for token selection, which may be costly for very long contexts. Future work could explore more efficient layer‑scoring mechanisms and multi‑token target estimation.

In summary, CoRect shifts the focus from output‑level fixes to internal‑level interventions. By leveraging logit contrast to locate and neutralize parametric suppression without any supervised signal, it offers a practical, label‑free solution to knowledge conflicts in retrieval‑augmented generation, advancing the reliability of LLM‑based systems that must reconcile internal knowledge with up‑to‑date external evidence.

Comments & Academic Discussion

Loading comments...

Leave a Comment