HOICraft: In-Situ VLM-based Authoring Tool for Part-Level Hand-Object Interaction Design in VR

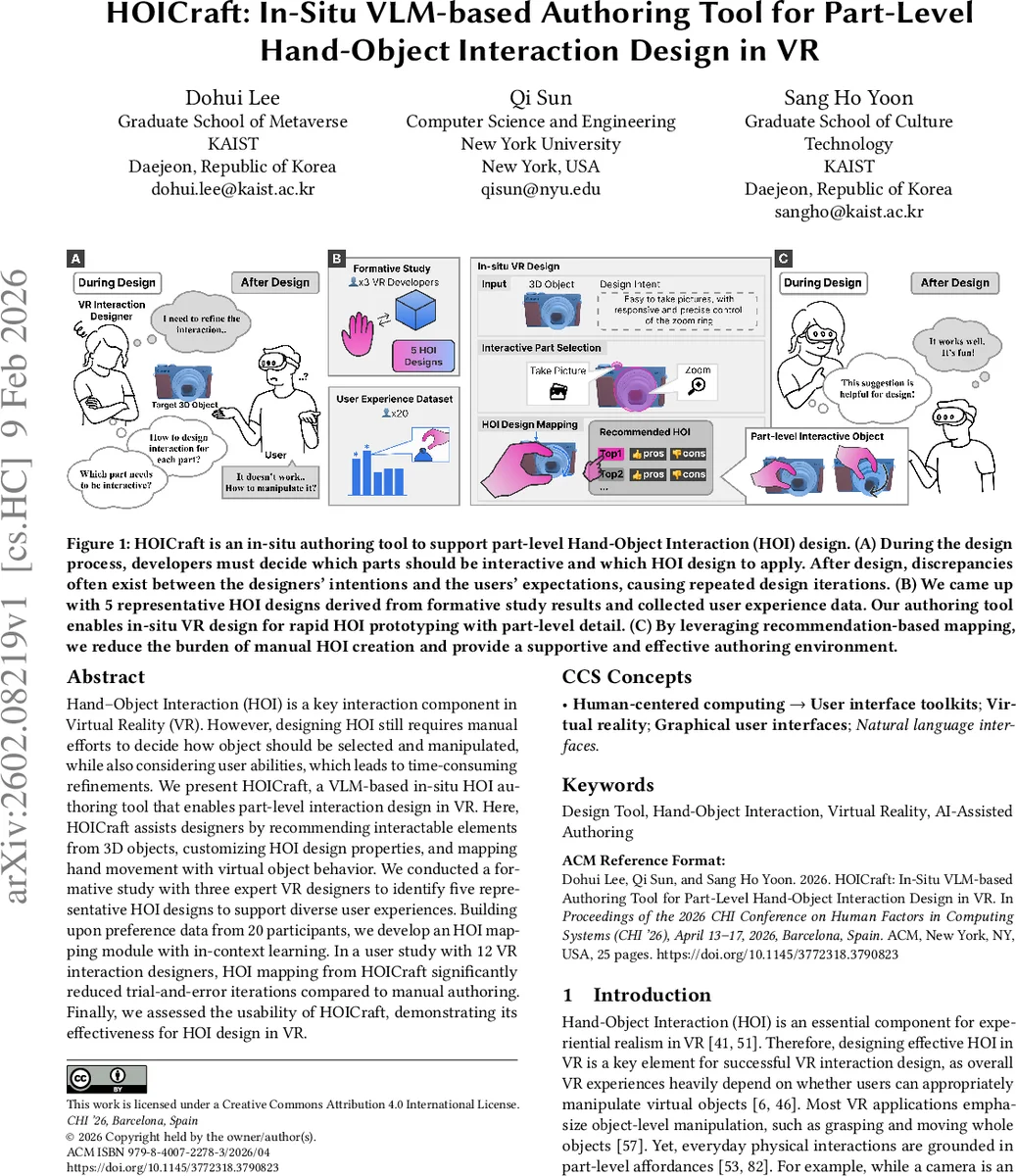

Hand-Object Interaction (HOI) is a key interaction component in Virtual Reality (VR). However, designing HOI still requires manual effort to decide how objects should be selected and manipulated while also accounting for user abilities, which leads to time-consuming refinements. We present HOICraft, a VLM-based in-situ HOI authoring tool that enables part-level interaction design in VR. HOICraft assists designers by recommending interactable elements of 3D objects, customizing HOI design properties, and mapping hand movements to virtual object behavior. We conducted a formative study with three expert VR designers to identify five representative HOI designs that support diverse user experiences. Building on preference data from 20 participants, we developed an HOI mapping module based on in-context learning. In a user study with 12 VR interaction designers, HOI mapping from HOICraft significantly reduced trial-and-error iterations compared to manual authoring. Finally, we assessed the usability of HOICraft, demonstrating its effectiveness for HOI design in VR.

💡 Research Summary

The paper tackles the persistent challenge of designing part‑level hand‑object interactions (HOI) in virtual reality (VR). While most existing VR authoring tools support object‑level manipulation (grasping, moving whole objects), they require designers to manually decide which sub‑parts of a 3D model should be interactive and how those parts should be selected and manipulated. This manual, trial‑and‑error process is time‑consuming and often leads to inconsistent designs, especially when targeting diverse user abilities and contexts (e.g., games, training, social VR).

HOICraft is introduced as an in‑situ, AI‑assisted authoring system that leverages Vision‑Language Models (VLMs) and Large Language Models (LLMs) to automate and streamline the entire HOI design workflow directly inside the VR environment. The system consists of three core components:

- Part Prioritizer – A VLM analyses the visual features of a 3D mesh (shape, texture, structural cues) and, together with a short textual description of the designer’s intent (e.g., “press the camera shutter button”), ranks the parts most relevant for interaction. This reduces the cognitive load of manually decomposing objects and promotes consistent part selection across projects.

- HOI Mapping Module – Building on a formative study with three expert VR designers, the authors identified five representative HOI design patterns that span different selection mechanisms (physics‑based, gesture‑based, contact‑based) and manipulation strategies (direct manipulation, animation‑driven). To ground these patterns in real user experience, the authors ran a preference study with 20 participants that collected quantitative ratings for each pattern across several contexts. The collected data are embedded as in‑context examples in prompts to an LLM, so the model can suggest the most suitable HOI mapping for a given part without any parameter fine‑tuning. This “user‑preference‑driven in‑context learning” approach adapts recommendations to empirical user behavior rather than relying solely on generic model knowledge.

- In‑situ Authoring UI – Within VR, designers can use voice or hand‑gesture commands to trigger part recommendation, accept or modify the suggested HOI mapping, and immediately test the interaction. The UI visualises the recommended parts, displays the selected interaction pattern, and provides real‑time feedback, supporting a rapid “recommend → apply → test → refine” loop.
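
The in-context learning idea behind the HOI Mapping Module can be illustrated with a small sketch: aggregate preference ratings into worked examples, then place them ahead of the new query in a prompt. Everything concrete here is an assumption for illustration, not HOICraft's implementation: the `PreferenceRecord` schema, the pattern labels, the rating values, the example-selection rule, and the helper names `best_pattern_per_case` and `build_prompt` are hypothetical, and no particular LLM API is called.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class PreferenceRecord:
    """One participant rating (hypothetical schema, not HOICraft's actual format)."""
    part: str      # e.g. "shutter button"
    context: str   # e.g. "game", "training"
    pattern: str   # hypothetical HOI pattern label
    rating: float  # higher = more preferred


def best_pattern_per_case(records):
    """Average ratings per (part, context, pattern); keep the top pattern per (part, context)."""
    grouped = {}
    for r in records:
        grouped.setdefault((r.part, r.context, r.pattern), []).append(r.rating)
    best = {}
    for (part, context, pattern), ratings in grouped.items():
        score = mean(ratings)
        key = (part, context)
        if key not in best or score > best[key][1]:
            best[key] = (pattern, score)
    return best


def build_prompt(records, query_part, query_context, max_examples=3):
    """Assemble a few-shot prompt: preference-derived examples, then the new query."""
    best = best_pattern_per_case(records)
    # Hypothetical selection rule: same-context examples first, then higher average rating.
    ranked = sorted(
        best.items(),
        key=lambda item: (item[0][1] != query_context, -item[1][1]),
    )
    lines = ["Recommend an HOI pattern for the part, given user preference examples."]
    for (part, context), (pattern, score) in ranked[:max_examples]:
        lines.append(
            f"Part: {part} | Context: {context} -> Pattern: {pattern} (avg rating {score:.1f})"
        )
    # The LLM is expected to complete this final line.
    lines.append(f"Part: {query_part} | Context: {query_context} -> Pattern:")
    return "\n".join(lines)


if __name__ == "__main__":
    # Invented illustrative ratings, not data from the study.
    records = [
        PreferenceRecord("shutter button", "game", "contact-press", 6.0),
        PreferenceRecord("shutter button", "game", "gesture-pinch", 4.0),
        PreferenceRecord("drawer handle", "training", "physics-grab", 6.5),
        PreferenceRecord("drawer handle", "training", "gesture-pinch", 5.0),
    ]
    print(build_prompt(records, "dial knob", "training"))
```

Ranking same-context examples first is one plausible way to make suggestions track the target scenario, in line with the preference survey's finding that the best pattern depends on audience and context; the summary does not say how HOICraft actually selects or formats its examples.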

The evaluation proceeds in three stages:

- Formative Study (n=3) – Semi‑structured interviews revealed three main pain points: (a) difficulty in consistently deciding decomposition levels, (b) mismatch between design intent and actual user capabilities across contexts, and (c) lack of systematic guidance for selecting appropriate HOI patterns. These insights directly motivated the Part Prioritizer and the need for a data‑driven mapping module.

- Preference Survey (n=20) – Participants rated the five HOI patterns on realism, usability, and perceived effort. Physics‑based interactions scored highest on realism, while gesture‑based patterns were preferred for ease of use. The mixed results underscored that the “best” pattern depends on the target audience and scenario, justifying a personalized recommendation system.

- Comparative User Study (n=12 VR interaction designers) – Designers performed the same set of part‑level HOI authoring tasks using either HOICraft’s automatic mapping or a traditional manual workflow. Results showed a 42% reduction in the number of design iterations when using HOICraft, and a System Usability Scale (SUS) score of 84 (versus 68 for the manual condition). Qualitative feedback highlighted the speed of getting “ready‑to‑test” interactions and the confidence that AI‑suggested mappings reflected real user preferences.
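
For readers unfamiliar with the SUS figures above, the standard scoring rule maps ten 1–5 Likert responses to a 0–100 score: odd-numbered (positively worded) items contribute `response − 1`, even-numbered (negatively worded) items contribute `5 − response`, and the sum is multiplied by 2.5. A minimal Python sketch of that rule follows; the function names are illustrative, and the sample responses are invented and are not data from the study.

```python
def sus_score(responses):
    """Compute a System Usability Scale score (0-100) from ten 1-5 Likert responses."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    if any(not 1 <= r <= 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for item_number, r in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (r - 1) if item_number % 2 == 1 else (5 - r)
    return total * 2.5


def mean_sus(all_responses):
    """Average SUS score across participants (score each participant, then average)."""
    scores = [sus_score(r) for r in all_responses]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Invented responses: one maximally positive participant, one neutral participant.
    print(mean_sus([[5, 1, 5, 1, 5, 1, 5, 1, 5, 1], [3] * 10]))
```

Because SUS is computed per participant and then averaged, a condition-level value such as 84 or 68 is a mean of individual 0–100 scores, not a percentage.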

Key contributions are: (1) the HOICraft system that integrates VLM‑driven part recommendation with LLM‑based, preference‑grounded HOI mapping, all within an immersive VR authoring environment; (2) the identification of five representative HOI design patterns derived from expert practice; (3) an in‑context learning framework that adapts LLM suggestions to empirical user data without model retraining; and (4) empirical evidence that AI‑assisted in‑situ authoring can substantially reduce trial‑and‑error effort while maintaining high usability.

The authors acknowledge several limitations. The VLM’s understanding of internal mechanical structures (e.g., hinges, springs) remains limited, which may degrade recommendation quality for complex articulated objects. Moreover, the preference dataset is demographically narrow (primarily young adults from Western cultures), which may introduce bias when applying the system to broader user populations. Future work will explore multimodal feedback (force sensors, eye‑tracking) to enrich part understanding, expand the preference database across cultures and age groups, and evaluate collaborative authoring scenarios where multiple designers interact with the same VR scene.

In summary, HOICraft demonstrates that coupling vision‑language perception with language‑model reasoning can automate the most labor‑intensive aspects of part‑level HOI design, delivering faster prototyping, more consistent interaction definitions, and user‑centric interaction choices—all within the immersive context where the final experience will be lived. This represents a significant step toward “programmable reality” where AI and XR converge to make virtual environments as configurable as physical ones.
