Chain-of-Caption: Training-free improvement of multimodal large language model on referring expression comprehension

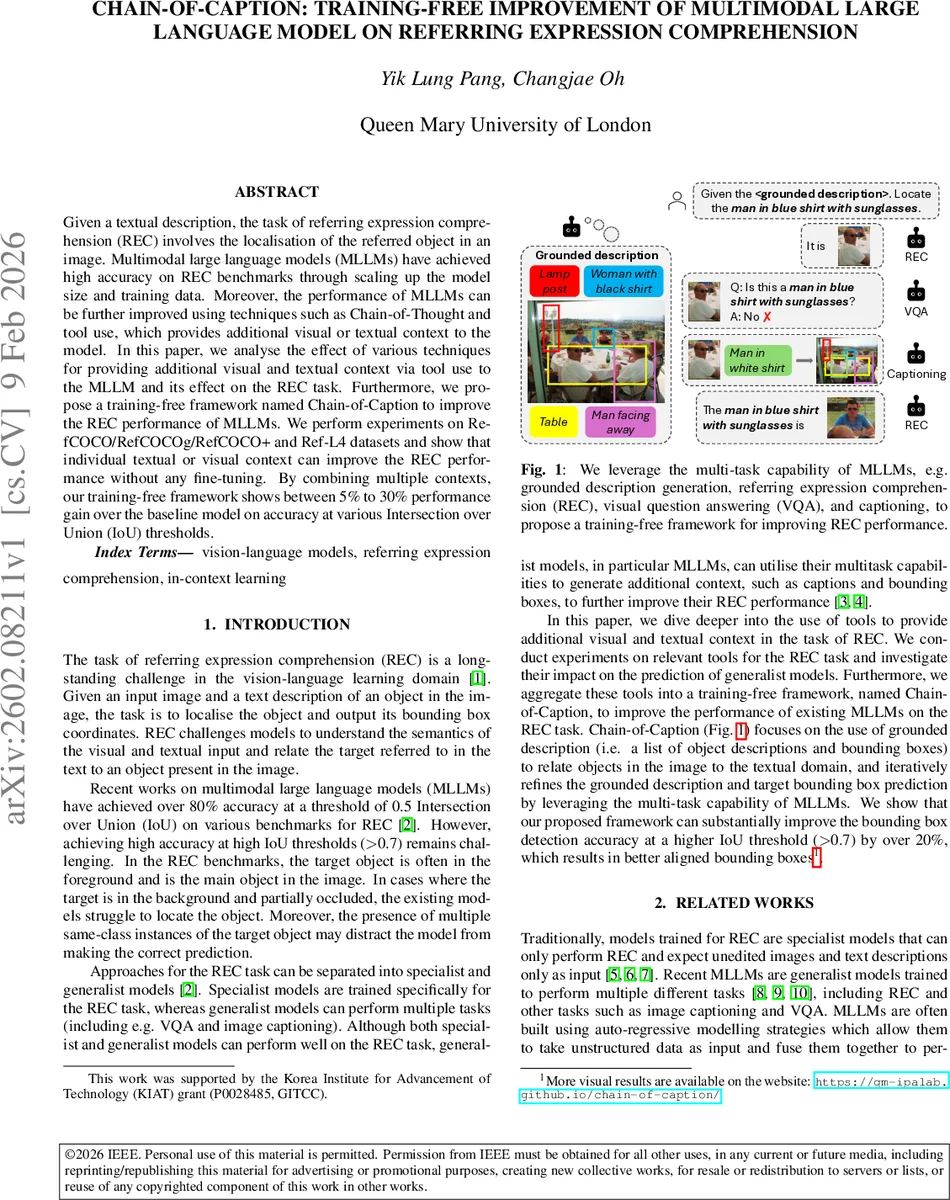

Given a textual description, the task of referring expression comprehension (REC) involves the localisation of the referred object in an image. Multimodal large language models (MLLMs) have achieved high accuracy on REC benchmarks through scaling up the model size and training data. Moreover, the performance of MLLMs can be further improved using techniques such as Chain-of-Thought and tool use, which provides additional visual or textual context to the model. In this paper, we analyse the effect of various techniques for providing additional visual and textual context via tool use to the MLLM and its effect on the REC task. Furthermore, we propose a training-free framework named Chain-of-Caption to improve the REC performance of MLLMs. We perform experiments on RefCOCO/RefCOCOg/RefCOCO+ and Ref-L4 datasets and show that individual textual or visual context can improve the REC performance without any fine-tuning. By combining multiple contexts, our training-free framework shows between 5% to 30% performance gain over the baseline model on accuracy at various Intersection over Union (IoU) thresholds.

💡 Research Summary

The paper addresses the challenging task of Referring Expression Comprehension (REC), where a model must locate the object described by a natural‑language phrase within an image. While large multimodal language models (MLLMs) have achieved strong performance on standard REC benchmarks (often exceeding 80 % accuracy at IoU 0.5), they still struggle at higher IoU thresholds (≥ 0.7), indicating poor boundary precision. The authors investigate whether additional visual or textual context—provided at inference time without any fine‑tuning—can close this gap.

Two types of context are explored. First, a grounded description: the model is prompted to enumerate a fixed number of objects in the image, outputting for each a natural‑language description together with a normalized bounding‑box. This text‑plus‑spatial information directly links language to image regions. Second, visual context: (a) cropping the image around an initial REC prediction (scaled by 1.5×) to force the model’s attention onto a smaller region, and (b) drawing the bounding boxes from the grounded description onto the image as a visual cue.

Building on these, the authors propose a training‑free framework called Chain‑of‑Caption (CoC). The procedure is iterative:

- Generate a grounded description g = M_GDESC(x, p).

- Predict an initial bounding box b = M_REC(x, g, t).

- Verify the prediction with the model’s VQA capability: a = M_VQA(crop(x, b), t) (yes/no).

- If a = yes, stop and output b.

- If a = no, caption the cropped region c = M_CAPTION(crop(x, b)) and augment the grounded description with the pair (b, c).

- Re‑run REC with the enriched context. The loop repeats until a = yes or a maximum number of attempts is reached.

Experiments are conducted on four widely used REC datasets: RefCOCO, RefCOCO+, RefCOCOg, and the newer Ref‑L4 (which contains cleaned labels and longer, more complex expressions). Two base models are evaluated: NVILA‑8B (an 8‑billion‑parameter MLLM) and Qwen2.5‑7B (a newer 7‑billion‑parameter model). The authors compare six settings: (i) baseline (no extra context), (ii) object description only (textual without boxes), (iii) grounded description, (iv) cropping, (v) drawing boxes, and (vi) the full Chain‑of‑Caption pipeline.

Key findings:

- Grounded description alone yields the largest single‑component gain, especially at IoU 0.7 and 0.9 (up to ~30 % absolute improvement), confirming that explicit spatial grounding is crucial.

- Cropping improves the hardest metric (IoU 0.9) because the model can focus on a tighter region, but it hurts lower IoU scores due to loss of global context.

- Visualizing boxes (draw) shows negligible impact, suggesting that the textual grounding already provides sufficient spatial cues.

- The full Chain‑of‑Caption method consistently outperforms all individual contexts across all datasets, delivering 5 %–30 % absolute gains over the baseline. On Ref‑L4, for example, NVILA‑8B’s IoU 0.7 accuracy rises from 30.28 % to 56.63 % (+26.35 pts) and IoU 0.9 from 2.82 % to 25.16 % (+22.34 pts).

- Scaling the model size (NVILA‑15B) further amplifies the benefit, indicating that the approach is model‑agnostic and complementary to larger architectures.

The proposed pipeline is training‑free, meaning it incurs no additional parameter updates or data collection; it merely leverages the existing multitask abilities of the MLLM (REC, VQA, captioning, object detection). This makes it attractive for rapid deployment on existing systems. However, the method has limitations: it relies heavily on the quality of generated captions and bounding boxes; errors can propagate through the iterative loop. The iterative process also increases inference latency, which may be prohibitive for real‑time applications. Hyper‑parameters such as the number of objects in the grounded description (set to five) and the maximum number of refinement steps are currently hand‑tuned.

Future work could explore (1) automated prompt optimization and dynamic stopping criteria, (2) richer visual tools such as segmentation masks or depth maps, (3) joint optimization of all tasks within a single chain to reduce latency, and (4) extending the training‑free context‑augmentation paradigm to other multimodal tasks like visual question answering, image‑based storytelling, or video grounding. Overall, the paper demonstrates that carefully orchestrated, inference‑time context can dramatically boost REC performance without any additional training, opening a practical pathway for enhancing existing multimodal models.

Comments & Academic Discussion

Loading comments...

Leave a Comment