ZipFlow: a Compiler-based Framework to Unleash Compressed Data Movement for Modern GPUs

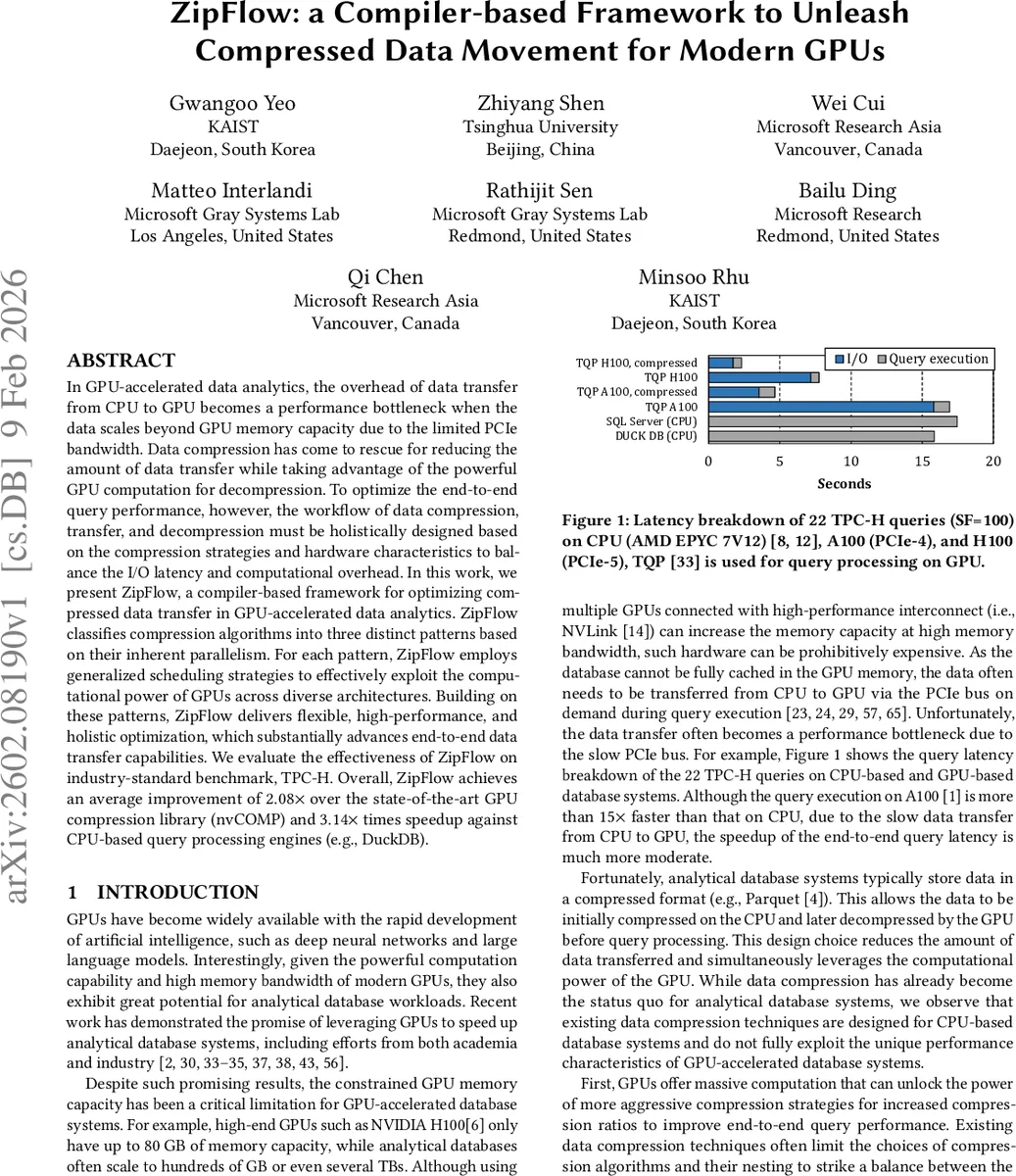

In GPU-accelerated data analytics, the overhead of data transfer from CPU to GPU becomes a performance bottleneck when the data scales beyond GPU memory capacity due to the limited PCIe bandwidth. Data compression has come to rescue for reducing the amount of data transfer while taking advantage of the powerful GPU computation for decompression. To optimize the end-to-end query performance, however, the workflow of data compression, transfer, and decompression must be holistically designed based on the compression strategies and hardware characteristics to balance the I/O latency and computational overhead. In this work, we present ZipFlow, a compiler-based framework for optimizing compressed data transfer in GPU-accelerated data analytics. ZipFlow classifies compression algorithms into three distinct patterns based on their inherent parallelism. For each pattern, ZipFlow employs generalized scheduling strategies to effectively exploit the computational power of GPUs across diverse architectures. Building on these patterns, ZipFlow delivers flexible, high-performance, and holistic optimization, which substantially advances end-to-end data transfer capabilities. We evaluate the effectiveness of ZipFlow on industry-standard benchmark, TPC-H. Overall, ZipFlow achieves an average improvement of 2.08 times over the state-of-the-art GPU compression library (nvCOMP) and 3.14 times speedup against CPU-based query processing engines (e.g., DuckDB).

💡 Research Summary

ZipFlow: A Compiler‑Based Framework for Accelerating Compressed Data Movement on Modern GPUs

Modern analytical workloads increasingly rely on GPUs for their massive compute power and high memory bandwidth. However, the limited capacity of GPU memory (e.g., 80 GB on an NVIDIA H100) forces systems to stream data from the CPU over PCIe, where the relatively low bandwidth (16–64 GB/s) becomes a dominant performance bottleneck. While columnar storage formats such as Parquet already store data in compressed form, existing compression techniques and libraries (e.g., nvCOMP) are designed primarily for CPU‑centric execution and do not fully exploit the parallelism and compute capabilities of GPUs. Moreover, they support only a narrow set of nested compression schemes and lack systematic ways to co‑optimize compression, data transfer, and decompression.

ZipFlow addresses these gaps by providing a unified, compiler‑driven stack that treats compression algorithms as compositions of three fundamental parallel patterns:

-

Fully‑Parallel (N→M) – each input element maps independently to an output element. This pattern fits dictionary look‑ups, bit‑packing, Float2Int, and other 1‑to‑1 transformations. The generated kernels launch one thread per element, fully leveraging the SIMT model.

-

Group‑Parallel (N→1) – the workload is partitioned into variable‑size groups (e.g., runs in RLE, match lengths in LZ77). Within a group there are dependencies, but groups are independent. ZipFlow implements a two‑stage approach: a first kernel computes group boundaries and lengths, and a second kernel processes each group in parallel using shared memory and warp‑level primitives.

-

Non‑Parallel (Sequential) – algorithms with strong sequential dependencies such as ANS or Huffman coding. Here ZipFlow splits the algorithm into pipeline stages, minimizes host‑device transfers, and applies kernel fusion where possible.

The framework is organized into four layers:

-

Pattern Layer – provides a library of GPU kernels implementing the three patterns. These kernels are parameterized by block size, shared‑memory usage, and other device‑specific attributes.

-

Algorithm Layer – assembles primitive kernels into concrete compression primitives (RLE, dictionary encoding, bit‑packing, entropy coders, etc.).

-

Nesting Layer – automatically explores combinations of primitives (e.g., Dictionary + ANS, RLE + Bit‑Packing) to find the best trade‑off between compression ratio and decompression cost for each column. The exploration uses a lightweight cost model that accounts for data distribution, expected I/O reduction, and GPU compute cost.

-

Pipelining Layer – schedules overlapping PCIe transfers and GPU decompression across multiple data chunks. By constructing a DAG where transfer edges and compute edges can execute concurrently, ZipFlow hides most of the PCIe latency. The scheduler also balances load across SMs, adapts to heterogeneous GPUs (CUDA vs. ROCm), and respects memory‑bandwidth limits.

A key technical contribution is the device‑geometry aware optimizer. At compile time, ZipFlow queries the target GPU’s SM count, register budget, and shared‑memory capacity, then runs a search (guided by Bayesian optimization) to select the optimal kernel launch configuration for each pattern. This enables the same high‑level compression description to run efficiently on a wide range of GPUs, from NVIDIA A100 (PCIe‑4) to AMD MI300x.

Evaluation uses the TPC‑H benchmark (scale factor 100, 22 queries) on both A100 and H100 GPUs. ZipFlow is compared against:

- nvCOMP – the state‑of‑the‑art GPU compression library, using the same underlying algorithms but without ZipFlow’s scheduling and nesting capabilities.

- DuckDB – a high‑performance CPU‑only analytical engine.

Results show:

- End‑to‑end query latency – ZipFlow achieves an average 2.08× speedup over nvCOMP and 3.14× over DuckDB.

- I/O reduction – custom nesting yields 1.85× less data transferred compared to nvCOMP’s default pipelines, translating into a 1.85× reduction in PCIe wait time.

- Decompression throughput – thanks to kernel fusion and the Fully‑Parallel pattern, ZipFlow’s decompression is 3.26× faster than nvCOMP’s best‑case.

- Robustness across data distributions – experiments on uniform, skewed, and mixed‑type columns confirm that the pattern‑based abstraction captures the essential parallelism of most lossless compressors.

- Cross‑architecture portability – the same ZipFlow description runs unmodified on both CUDA and ROCm devices, demonstrating the framework’s hardware‑agnostic design.

Contributions are summarized as:

- A three‑pattern abstraction that captures the core parallelism of virtually all lossless (de)compression algorithms.

- A universal optimization space that maps pattern parameters to GPU execution geometry, combined with automatic kernel fusion and pipelining.

- A systematic, hardware‑aware nesting exploration that jointly optimizes compression ratio and compute cost.

- A comprehensive evaluation showing substantial end‑to‑end gains on a realistic analytical workload.

Limitations and Future Work – ZipFlow currently focuses on columnar, integer‑oriented data; extending the pattern library to image/video codecs or other unstructured data is an open direction. Moreover, while PCIe‑Gen5 improves raw bandwidth, the framework would benefit from tighter integration with NVLink, Infinity Fabric, or emerging GPU‑direct storage technologies. The authors plan to incorporate machine‑learning‑driven cost models for automatic selection of compression pipelines and to evaluate ZipFlow in multi‑GPU, distributed query execution scenarios.

In summary, ZipFlow demonstrates that a compiler‑driven, pattern‑centric approach can unlock the full potential of modern GPUs for compressed data movement, delivering order‑of‑magnitude improvements in both I/O efficiency and overall query performance.

Comments & Academic Discussion

Loading comments...

Leave a Comment