Evasion of IoT Malware Detection via Dummy Code Injection

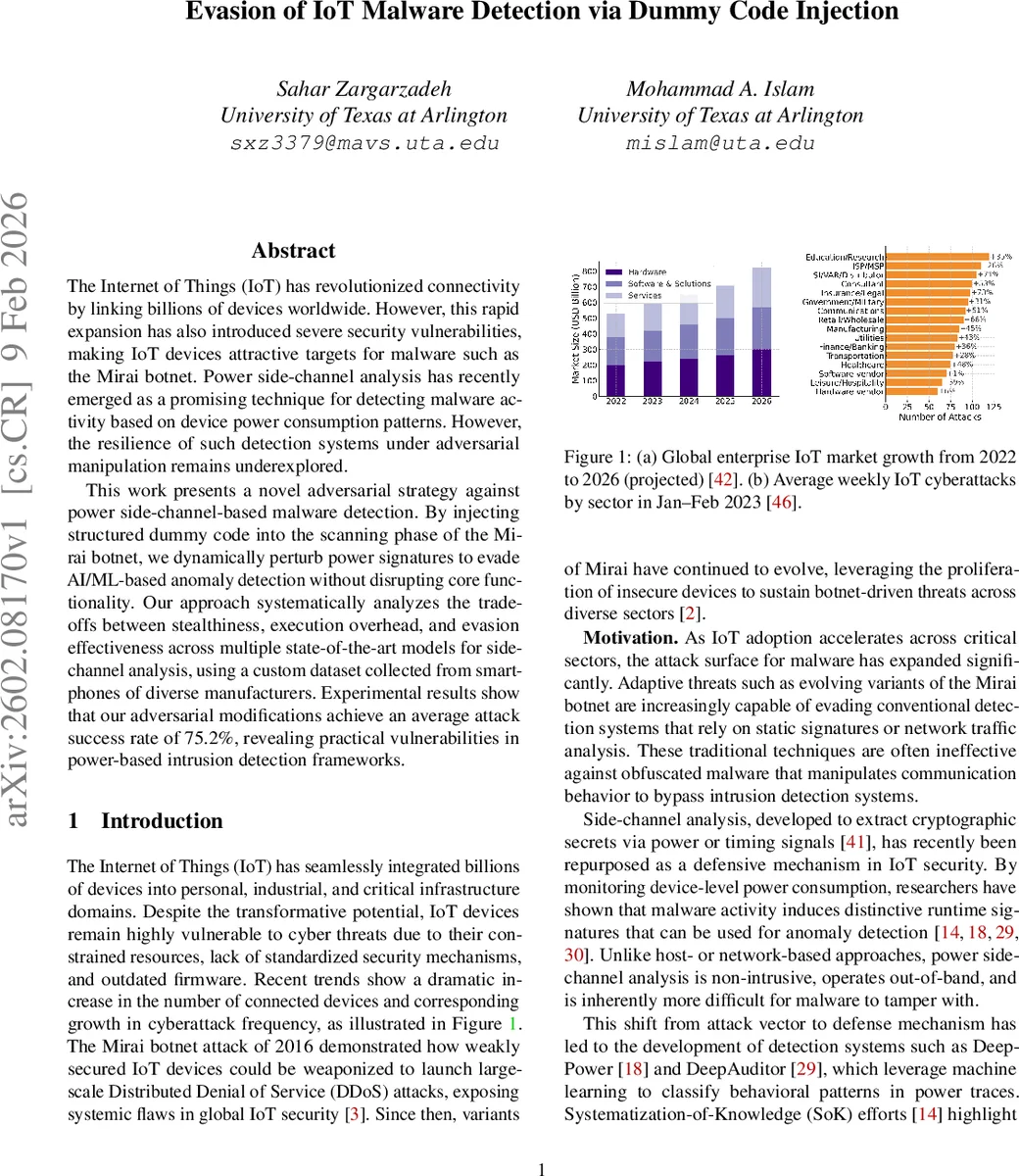

The Internet of Things (IoT) has revolutionized connectivity by linking billions of devices worldwide. However, this rapid expansion has also introduced severe security vulnerabilities, making IoT devices attractive targets for malware such as the Mirai botnet. Power side-channel analysis has recently emerged as a promising technique for detecting malware activity based on device power consumption patterns. However, the resilience of such detection systems under adversarial manipulation remains underexplored. This work presents a novel adversarial strategy against power side-channel-based malware detection. By injecting structured dummy code into the scanning phase of the Mirai botnet, we dynamically perturb power signatures to evade AI/ML-based anomaly detection without disrupting core functionality. Our approach systematically analyzes the trade-offs between stealthiness, execution overhead, and evasion effectiveness across multiple state-of-the-art models for side-channel analysis, using a custom dataset collected from smartphones of diverse manufacturers. Experimental results show that our adversarial modifications achieve an average attack success rate of 75.2%, revealing practical vulnerabilities in power-based intrusion detection frameworks.

💡 Research Summary

The paper investigates the robustness of power‑side‑channel‑based malware detection for IoT devices and introduces a novel adversarial technique that defeats such detectors by injecting structured dummy code into the Mirai botnet’s scanning phase. The authors begin by highlighting the rapid proliferation of IoT devices and the corresponding rise of malware like Mirai, which exploits weak default credentials to build massive DDoS botnets. Recent research has shown that malware activity leaves distinctive footprints in a device’s power consumption, enabling non‑intrusive, out‑of‑band detection using deep learning models such as LSTM, TCN, and CNN‑based architectures. However, these systems assume that power signatures are relatively stable and that attackers cannot deliberately manipulate them.

To challenge this assumption, the authors design a gray‑box attack. They obtain the Mirai source code, modify the scanning routine, and embed “dummy” instructions (lightweight arithmetic, conditional branches, and short loops) that generate controlled variations in current draw without affecting the botnet’s core functionality. Placement of the dummy code is guided by SHAP (SHapley Additive exPlanations) analysis performed on each target model: the most influential time points and voltage ranges are identified, and dummy operations are inserted where the classifier relies most on salient features. This desynchronizes the power trace in time, making malicious activity resemble benign idle or normal IoT service states.
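To make the notion of "most influential time points" concrete, here is a minimal sketch of ranking time steps by mean absolute attribution. It assumes the per-window attribution values have already been produced by an explainer; the `attr` matrix is synthetic, and `top_k_timesteps` is a hypothetical helper, not code from the paper.

```python
def top_k_timesteps(attributions, k=3):
    """Rank time steps by mean absolute attribution across windows.

    attributions: list of windows, each a list of per-time-step
    attribution values (assumed precomputed by an explainer).
    """
    n_steps = len(attributions[0])
    importance = [
        sum(abs(row[t]) for row in attributions) / len(attributions)
        for t in range(n_steps)
    ]
    # Highest mean |attribution| first.
    ranked = sorted(range(n_steps), key=lambda t: importance[t], reverse=True)
    return ranked[:k], importance

# Synthetic attributions for two windows of four time steps each.
attr = [
    [0.1, -0.9, 0.0, 0.3],
    [0.2, -0.7, 0.1, 0.5],
]
top, importance = top_k_timesteps(attr, k=2)
print(top)  # [1, 3]
```

The same ranking is useful on the defensive side: a detector whose importance mass sits on a handful of time steps is the kind of model this attack exploits.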

The experimental dataset comprises power traces collected from three commercial smartphones (Samsung, Xiaomi, Google Pixel) under four operational conditions: idle, normal IoT service, unmodified Mirai, and dummy‑code‑augmented Mirai. Traces were sampled at 1 kHz, filtered, normalized, and segmented into 128‑sample windows, yielding over 144 k sequences. Six deep learning detectors were trained on the clean data: LSTM, BiLSTM, Temporal Convolutional Network (TCN), a hybrid BiLSTM+CNN, CNN with self‑attention, and an LSTM auto‑encoder with an MLP classifier.
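The normalization and segmentation steps might look roughly like the sketch below. The summary gives the 1 kHz sampling rate and the 128-sample window; the z-score normalization, the non-overlapping stride, and the synthetic trace are assumptions made for illustration, and the filtering step is omitted because the filter is not specified.

```python
from statistics import mean, pstdev

WINDOW = 128  # window length from the summary; 128 ms at 1 kHz

def zscore(trace):
    """Scale a trace to zero mean and unit variance (assumed scheme)."""
    mu = mean(trace)
    sigma = pstdev(trace)
    if sigma == 0:
        return [0.0 for _ in trace]
    return [(x - mu) / sigma for x in trace]

def segment(trace, window=WINDOW, stride=WINDOW):
    """Split a trace into fixed-length windows, dropping a trailing partial one."""
    return [
        trace[i:i + window]
        for i in range(0, len(trace) - window + 1, stride)
    ]

# Synthetic one-second trace: 1000 samples at 1 kHz.
trace = [float(i % 50) for i in range(1000)]
windows = segment(zscore(trace))
print(len(windows), len(windows[0]))  # 7 128
```

A stride smaller than the window would produce overlapping windows and more sequences per trace; the summary does not say which variant was used.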

When evaluated on the adversarial samples, the detectors exhibited substantial degradation. The attack was most effective against the LSTM‑based models, with an attack success rate (ASR) of 78 %, while against CNN‑based models it reached around 71 %. Averaged across all models, the ASR was 75.2 %. Statistical analysis showed a positive Pearson correlation (0.68) between dummy‑code execution overhead (average 8 ms per injection) and evasion success, and a negative correlation (‑0.45) between stealthiness (measured as average power variance) and success, highlighting a trade‑off between performance impact and detectability. ANOVA and Granger causality tests confirmed the significance of these relationships.
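The two metrics in this paragraph can be sketched as follows. The summary does not give the exact ASR formula, so the definition here is an assumption: the fraction of windows flagged as malicious before modification that are no longer flagged afterwards. All inputs are made-up values, not the paper's data.

```python
from math import sqrt

def attack_success_rate(clean_preds, adv_preds, malicious=1):
    """Fraction of originally detected samples that evade after modification."""
    detected = [i for i, p in enumerate(clean_preds) if p == malicious]
    if not detected:
        return 0.0
    evaded = sum(1 for i in detected if adv_preds[i] != malicious)
    return evaded / len(detected)

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# Synthetic detector outputs: 1 = malicious, 0 = benign.
clean_preds = [1, 1, 1, 1, 0]
adv_preds = [0, 0, 0, 1, 0]
asr = attack_success_rate(clean_preds, adv_preds)
print(asr)  # 0.75

# Synthetic overhead (ms) versus evasion rate, perfectly linear by construction.
r = pearson_r([2.0, 4.0, 6.0, 8.0], [0.2, 0.4, 0.6, 0.8])
```

Counting only samples the detector originally caught keeps the metric from crediting the attack for windows the detector would have missed anyway.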

The threat model assumes the attacker can modify malware code but lacks direct access to the detector’s parameters, training data, or hardware monitoring infrastructure—reflecting realistic scenarios where Mirai source is publicly available and power‑based IDS designs are described in literature. The results demonstrate that even under these constraints, power‑side‑channel detectors are vulnerable to software‑level perturbations, undermining the belief that such detectors are inherently tamper‑resistant.

In the discussion, the authors propose mitigation strategies: (1) multi‑modal sensing (combining power, voltage, and electromagnetic emissions) to increase feature diversity; (2) randomizing sampling rates and window sizes to reduce predictability; (3) employing ensemble models and continual adversarial training to harden classifiers against such perturbations. They acknowledge limitations, including the focus on high‑end smartphones rather than low‑power microcontrollers typical of many IoT nodes, and the lack of fine‑grained hardware power overhead measurements for the dummy code. Future work will extend experiments to embedded MCUs, routers, and industrial PLCs, and explore integrated defense mechanisms that combine real‑time anomaly detection with automatic firmware patching.
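Mitigations (2) and (3) can be illustrated with a small defensive sketch: window lengths are drawn at random so an attacker cannot align perturbations to fixed boundaries, and several detectors vote on each window. The candidate sizes and the three threshold detectors are hypothetical stand-ins for trained models, not the authors' design.

```python
import random

def random_windows(trace, sizes=(96, 128, 160), rng=None):
    """Cut a trace into consecutive windows of randomly chosen lengths."""
    rng = rng or random.Random()
    windows, start = [], 0
    while True:
        size = rng.choice(sizes)
        if start + size > len(trace):
            break  # stop once the drawn size no longer fits
        windows.append(trace[start:start + size])
        start += size
    return windows

def majority_vote(detectors, window):
    """Flag a window when more than half of the detectors flag it."""
    votes = sum(1 for detect in detectors if detect(window))
    return votes * 2 > len(detectors)

# Hypothetical stand-in detectors; real ones would be trained classifiers.
detectors = [
    lambda w: sum(w) / len(w) > 0.5,  # mean-level threshold
    lambda w: max(w) > 0.9,           # peak threshold
    lambda w: max(w) - min(w) > 0.6,  # range threshold
]

trace = [(i % 10) / 10 for i in range(1000)]  # synthetic trace
flags = [majority_vote(detectors, w)
         for w in random_windows(trace, rng=random.Random(0))]
```

Passing a seeded `random.Random` keeps the example reproducible; a deployed monitor would draw sizes from an unpredictable source instead.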

Overall, the paper provides a compelling demonstration that power‑side‑channel malware detection, while promising, is not immune to adversarial manipulation. It calls for a re‑evaluation of security assumptions in side‑channel‑based IDS and encourages the development of more resilient, multi‑layered detection frameworks.
