Reimagining Legal Fact Verification with GenAI: Toward Effective Human-AI Collaboration

Fact verification is a critical yet underexplored component of non-litigation legal practice. While existing research has examined automation in legal workflows and human-AI collaboration in high-stakes domains, little is known about how GenAI can support fact verification, a task that demands prudent judgment and strict accountability. To address this, we conducted semi-structured interviews with 18 lawyers to understand their current verification practices, attitudes toward GenAI adoption, and expectations for future systems. We found that while lawyers use GenAI for low-risk tasks like drafting and language optimization, concerns over accuracy, confidentiality, and liability currently limit its adoption for fact verification. These concerns translate into core design requirements for AI systems that are trustworthy and accountable. Based on these requirements, we contribute design insights for human-AI collaboration in legal fact verification, emphasizing the development of auditable systems that balance efficiency with professional judgment and uphold ethical and legal accountability in high-stakes practice.

💡 Research Summary

This paper investigates how generative AI (GenAI) can be integrated into the fact‑verification process of non‑litigation legal practice, a domain that demands meticulous judgment, accountability, and confidentiality. The authors conducted semi‑structured interviews with 18 experienced non‑litigation lawyers from corporate transactions, cross‑border compliance, and contract‑review backgrounds. The study is guided by three research questions: (1) how lawyers currently conduct fact verification and where GenAI might fit; (2) what cognitive and operational challenges affect lawyers’ willingness to adopt GenAI for verification; and (3) what human‑AI interaction designs could support reliable, accountable verification.

The findings reveal that fact verification is a dynamic sense‑making activity. Lawyers must gather information from client‑provided documents, public registries, and third‑party reports, reconcile inconsistencies, and continuously refine a coherent narrative under tight deadlines. Existing legal‑tech tools (e.g., clause‑comparison engines, semantic search) automate isolated, structured sub‑tasks but fall short of supporting cross‑document reasoning, contextual interpretation, and judgment‑heavy synthesis. Consequently, lawyers rely on their expertise for the core verification work.

Lawyers already use GenAI for low‑risk tasks such as drafting, language polishing, and generating outlines. However, they express strong reservations about employing GenAI in verification due to three intertwined risk dimensions: accuracy (hallucinations and incorrect citations), confidentiality (exposure of sensitive client data to external models), and liability (unclear responsibility for AI‑generated errors). These concerns translate into concrete design requirements: (a) auditability – the system must log which sources and reasoning steps underlie each AI suggestion; (b) explainability – users need transparent rationales to assess AI output; (c) human‑in‑the‑loop control – lawyers must be able to edit, approve, or reject AI‑produced statements, with edits feeding back into model refinement; and (d) clear responsibility boundaries – policy and UI cues should delineate when human judgment supersedes AI.
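Requirements (a) through (d) can be made concrete with a small data model. The following is a minimal sketch and not the authors' system: the names `Suggestion`, `AuditLog`, `Decision`, and `review`, as well as the sample document names, are hypothetical and chosen only for illustration. It shows an AI suggestion that carries its sources and rationale, a log entry for every event, and a rule that only text a lawyer approved or edited becomes final. Feeding edits back into model refinement is out of scope here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import Dict, List, Optional


class Decision(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    EDITED = "edited"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    """An AI-produced statement plus what is needed to assess it."""
    text: str
    sources: List[str]   # auditability: which documents back the claim
    rationale: str       # explainability: why the model proposed it
    decision: Decision = Decision.PENDING
    final_text: Optional[str] = None  # stays None until a human signs off


@dataclass
class AuditLog:
    """Append-only record of who did what (hypothetical structure)."""
    entries: List[Dict[str, object]] = field(default_factory=list)

    def record(self, actor: str, event: str, suggestion: Suggestion) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "event": event,
            "text": suggestion.text,
            "sources": list(suggestion.sources),
            "rationale": suggestion.rationale,
            "final_text": suggestion.final_text,
        })


def review(suggestion: Suggestion, log: AuditLog, reviewer: str,
           decision: Decision, edited_text: Optional[str] = None) -> Suggestion:
    """Human-in-the-loop gate: a lawyer approves, edits, or rejects."""
    if decision is Decision.PENDING:
        raise ValueError("a review must approve, edit, or reject")
    if decision is Decision.EDITED and not edited_text:
        raise ValueError("an edit needs replacement text")
    suggestion.decision = decision
    if decision is Decision.APPROVED:
        suggestion.final_text = suggestion.text
    elif decision is Decision.EDITED:
        suggestion.final_text = edited_text
    else:
        suggestion.final_text = None  # rejected text never becomes final
    log.record(reviewer, decision.value, suggestion)
    return suggestion


# Illustrative data only; the file name and facts are made up.
log = AuditLog()
s = Suggestion(
    text="The target company has two shareholders.",
    sources=["share_register.pdf, p. 2"],
    rationale="The register lists two named holders.",
)
log.record("model", "suggested", s)
review(s, log, "reviewing_lawyer", Decision.EDITED,
       edited_text="The share register lists two shareholders as of its issue date.")
```

Recording the reviewer alongside each decision is one way to express the responsibility boundary in (d): the log shows which statements a human accepted and in what form.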

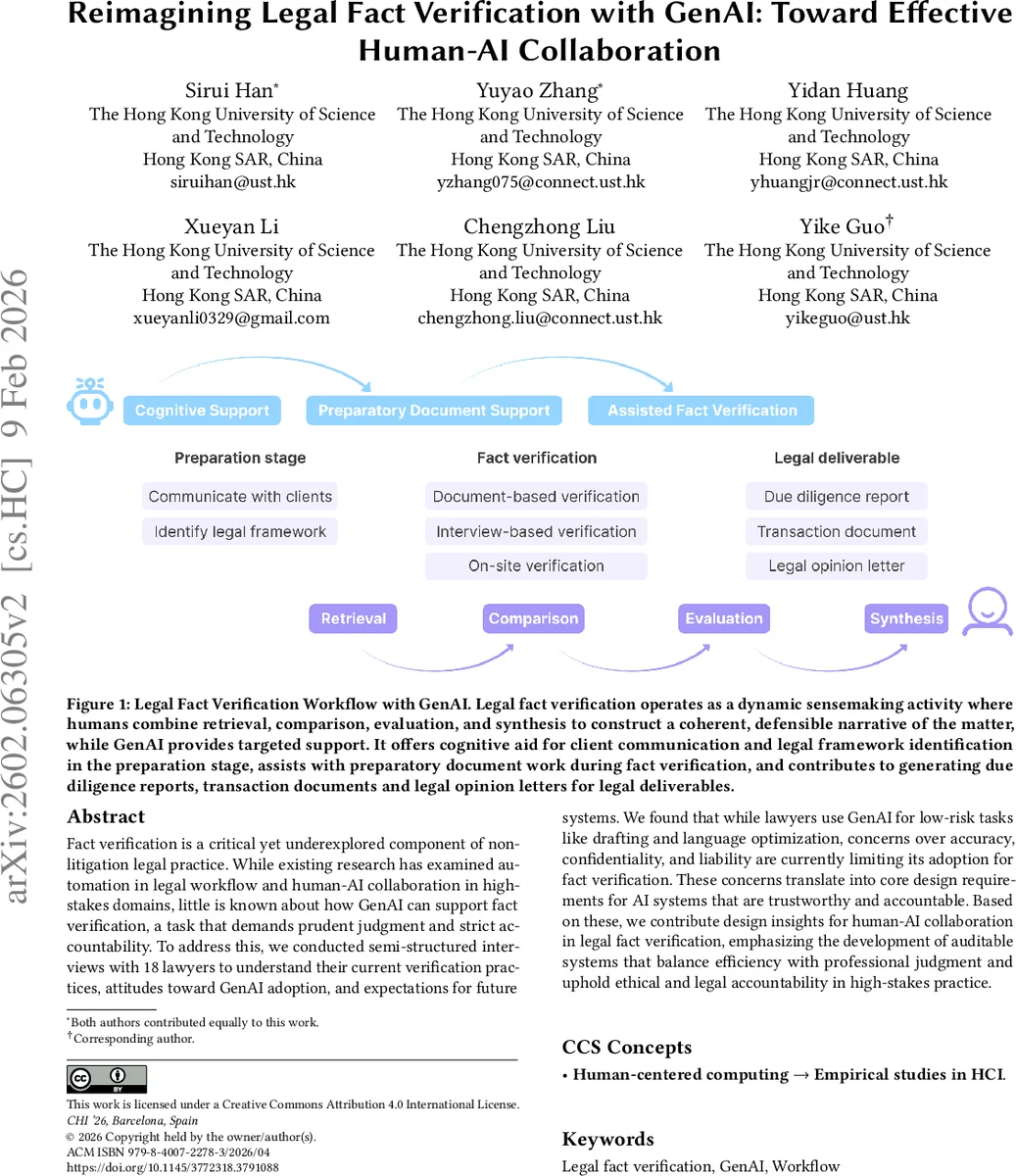

Based on these insights, the authors propose an “auditable collaborative AI” framework. In the preparation phase, GenAI assists by summarizing client materials, surfacing relevant statutes, and generating question checklists, thereby reducing cognitive load. During verification, the AI highlights potential inconsistencies across documents, surfaces supporting excerpts, and visualizes relational maps of facts, while always presenting source citations for human scrutiny. In the reporting phase, the AI drafts due‑diligence reports or opinion letters, which lawyers review, edit, and finalize. All interactions are recorded, enabling post‑hoc audits and compliance checks.
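The verification-phase idea of highlighting inconsistencies while always showing sources can be sketched as follows. This is a simplified illustration, not the paper's implementation: it assumes facts have already been extracted into key/value pairs (the hard part, which a GenAI model or a lawyer would do), and the `Fact` type, the `find_inconsistencies` function, and the sample values are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Fact:
    key: str      # what the fact is about, e.g. "registered_capital"
    value: str    # the value as stated in the document
    source: str   # where it was found, kept for human scrutiny


def find_inconsistencies(facts: Iterable[Fact]) -> Dict[str, Dict[str, List[str]]]:
    """Group facts by key and return keys whose documents disagree.

    The result maps key -> {normalized value -> [sources]}, so every
    flagged conflict comes with the citations a lawyer needs to check it.
    Normalization here is only whitespace and case; real documents would
    need far more careful comparison.
    """
    grouped: Dict[str, Dict[str, List[str]]] = defaultdict(lambda: defaultdict(list))
    for fact in facts:
        normalized = " ".join(fact.value.split()).lower()
        grouped[fact.key][normalized].append(fact.source)
    return {
        key: dict(values)
        for key, values in grouped.items()
        if len(values) > 1  # more than one distinct value means a conflict
    }


# Made-up sample data for illustration.
facts = [
    Fact("registered_capital", "USD 10,000,000", "client_summary.docx"),
    Fact("registered_capital", "USD 8,000,000", "public_registry_extract.pdf"),
    Fact("legal_representative", "Jane Doe", "client_summary.docx"),
    Fact("legal_representative", "jane doe", "public_registry_extract.pdf"),
]
conflicts = find_inconsistencies(facts)
for key, values in conflicts.items():
    print(key, values)
```

The function only flags disagreements and attaches sources; deciding which document is authoritative stays with the lawyer, in line with the framework's emphasis on professional judgment.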

The paper also emphasizes organizational and cultural prerequisites. Effective adoption requires AI literacy training for lawyers, robust data‑privacy safeguards (e.g., on‑premise LLM deployment or secure enclaves), and firm‑wide policies that define data handling, liability, and ethical use. Without these, even a technically sound system may be rejected or misused.

In sum, the study contributes (1) an empirically grounded description of fact‑verification workflows in non‑litigation law, (2) a set of concrete, practitioner‑derived design requirements for trustworthy GenAI support, and (3) a conceptual architecture for human‑AI collaboration that balances efficiency gains with the non‑negotiable need for professional judgment and legal accountability. This work advances both HCI theory on high‑stakes human‑AI collaboration and practical guidance for legal‑tech developers aiming to augment, rather than replace, lawyers in the most critical aspects of their work.
