Driving with DINO: Vision Foundation Features as a Unified Bridge for Sim-to-Real Generation in Autonomous Driving

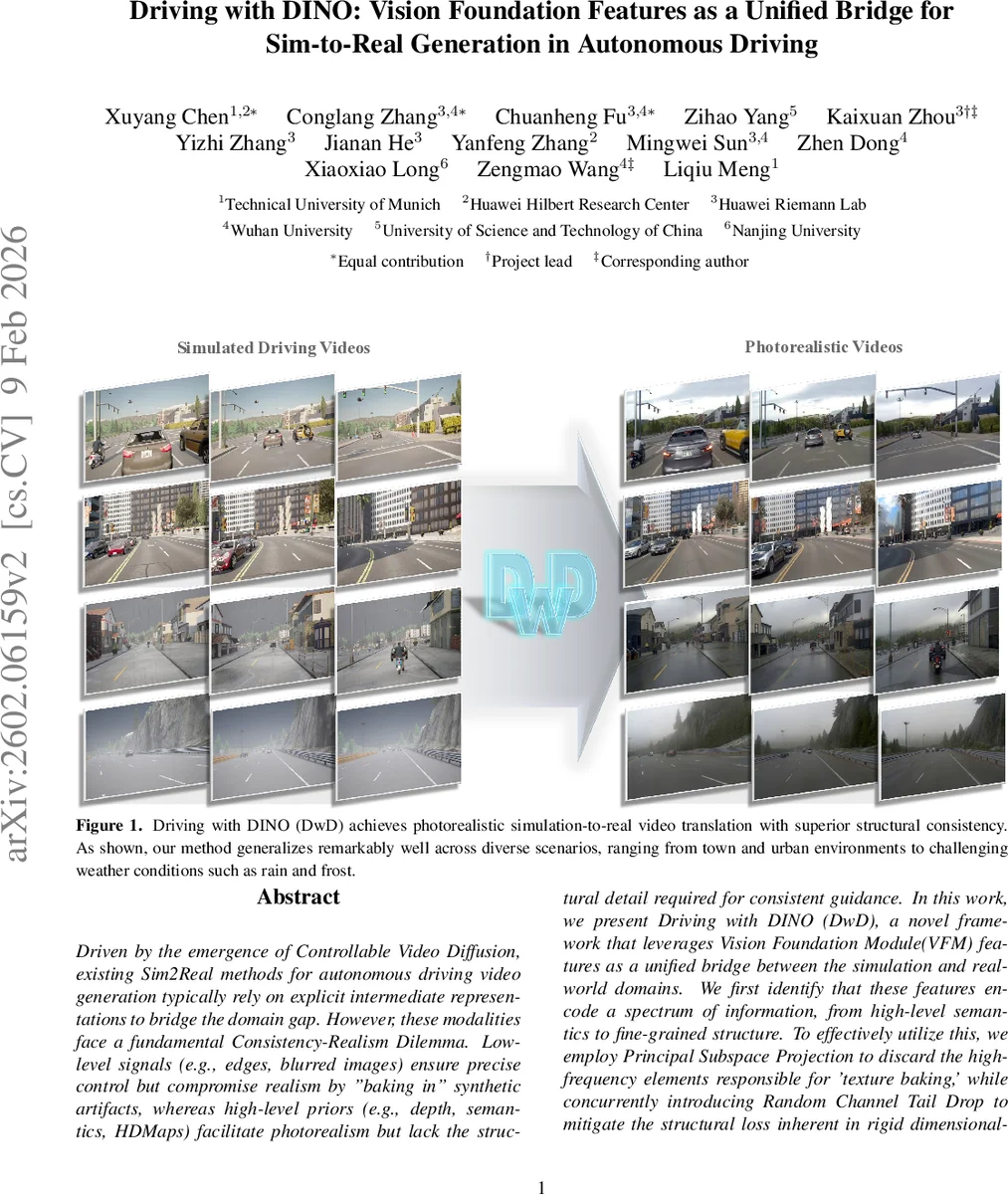

Driven by the emergence of Controllable Video Diffusion, existing Sim2Real methods for autonomous driving video generation typically rely on explicit intermediate representations to bridge the domain gap. However, these modalities face a fundamental Consistency-Realism Dilemma. Low-level signals (e.g., edges, blurred images) ensure precise control but compromise realism by “baking in” synthetic artifacts, whereas high-level priors (e.g., depth, semantics, HDMaps) facilitate photorealism but lack the structural detail required for consistent guidance. In this work, we present Driving with DINO (DwD), a novel framework that leverages Vision Foundation Module (VFM) features as a unified bridge between the simulation and real-world domains. We first identify that these features encode a spectrum of information, from high-level semantics to fine-grained structure. To effectively utilize this, we employ Principal Subspace Projection to discard the high-frequency elements responsible for “texture baking,” while concurrently introducing Random Channel Tail Drop to mitigate the structural loss inherent in rigid dimensionality reduction, thereby reconciling realism with control consistency. Furthermore, to fully leverage DINOv3’s high-resolution capabilities for enhancing control precision, we introduce a learnable Spatial Alignment Module that adapts these high-resolution features to the diffusion backbone. Finally, we propose a Causal Temporal Aggregator employing causal convolutions to explicitly preserve historical motion context when integrating frame-wise DINO features, which effectively mitigates motion blur and guarantees temporal stability. Project page: https://albertchen98.github.io/DwD-project/

💡 Research Summary

Driving with DINO (DwD) tackles the long‑standing consistency‑realism dilemma in simulation‑to‑real (Sim2Real) video generation for autonomous driving. Existing approaches rely on multiple explicit intermediate representations—edges, blurred images, depth maps, semantic masks, HDMaps—to bridge the domain gap. Low‑level cues provide precise geometric guidance but “bake in” synthetic textures, harming photorealism; high‑level cues enable realistic rendering but discard fine structural details, leading to hallucinations such as spurious lane markings. DwD proposes a fundamentally different conditioning strategy: it uses the features of a Vision Foundation Model (VFM), specifically DINOv3, as a unified bridge. DINOv3’s self‑supervised representations naturally encode a spectrum from low‑level texture to high‑level semantics within a single latent space, eliminating the need for heterogeneous control branches and the associated memory and gradient‑conflict issues.

The core technical contributions are fourfold. First, Principal Subspace Projection (PSP) applies PCA to DINO features and projects them onto a lower‑dimensional subspace, effectively filtering out high‑frequency components that carry simulation‑specific texture while preserving dominant structural information. Second, Random Channel Tail Drop (RCTD) introduces stochastic regularization: during training the number of retained PCA channels k is randomly sampled, preventing the rigid loss of structural cues that would occur with a fixed dimensionality reduction. This stochastic pruning balances realism and controllability across training iterations. Third, Spatial Resolution Enhancer (SRE) addresses the severe spatial compression of DINOv3 (typically 1/16 resolution). The input video is upscaled by a factor S before feeding it to DINOv3, yielding high‑resolution feature maps. Because these maps no longer match the spatial dimensions expected by the diffusion backbone, a learnable Spatial Alignment Module—a stack of strided convolutions with residual connections—maps the upscaled features to the required H/16 × W/16 shape, preserving fine‑grained geometric detail. Fourth, Causal Temporal Aggregator (CTA) mitigates temporal redundancy and motion blur. It processes frame‑wise DINO features with causal convolutions that aggregate historical context while respecting causality, ensuring smooth motion continuity and reducing flicker.

DwD integrates these modules into a controllable video diffusion architecture based on Cosmos‑Predict2.5, which combines a pretrained 3D VAE with a fine‑tuned Diffusion Transformer (DiT). A dedicated control branch, initialized from the denoising DiT weights, receives the processed DINO features as conditioning inputs at regular intervals (as in VACE). During training, the model reconstructs real‑world driving videos, learning to map the unified DINO conditioning to photorealistic outputs while preserving the simulated layout. At inference, synthetic simulation frames (e.g., from CARLA) are passed through the same pipeline, and the diffusion model generates high‑fidelity videos that faithfully respect the original geometry.

Extensive experiments demonstrate that DwD outperforms state‑of‑the‑art baselines—including multi‑control methods such as Magic‑Drive, DriveDreamer, and various ControlNet‑augmented video diffusion models—across quantitative metrics (PSNR, SSIM, LPIPS, FID) and human perceptual studies. Notably, lane markings, traffic signs, and vehicle contours remain accurate even under challenging weather (rain, fog, night) where prior methods either lose structural fidelity or produce unrealistic textures. The unified DINO conditioning eliminates the need for multiple adapters, reducing GPU memory consumption by over 30 % and simplifying training dynamics.

Ablation studies confirm the importance of each component: removing PSP leads to severe texture baking; omitting RCTD causes over‑smoothing and loss of fine geometry; without SRE the spatial alignment error degrades lane‑level precision; and without CTA the generated videos exhibit noticeable flicker and motion blur. Sensitivity analysis shows that a moderate PCA dimensionality (k ≈ 8–32) yields the best trade‑off between realism and structural detail.

The paper also discusses limitations. DINOv3, while powerful, is a 2‑D vision model and does not directly ingest LiDAR or radar data, which could further improve Sim2Real fidelity for autonomous driving. The choice of PCA dimensionality and RCTD sampling distribution still requires dataset‑specific tuning; future work could explore meta‑learning or adaptive schemes. Moreover, current experiments focus on short video clips (2–4 seconds); scaling to longer continuous drives will demand more efficient temporal modeling and memory management.

In summary, Driving with DINO introduces a novel paradigm where a Vision Foundation Model serves as a single, rich intermediate representation for Sim2Real video translation. By carefully pruning high‑frequency texture, enhancing spatial resolution, and aggregating temporal context, DwD achieves both photorealism and strict structural consistency without the complexity of multi‑modal control architectures. The approach sets a new benchmark for autonomous driving video synthesis and opens avenues for applying VFM‑based unified conditioning to other simulation‑to‑real domains such as robotics manipulation, medical imaging, and virtual‑reality content creation.

Comments & Academic Discussion

Loading comments...

Leave a Comment