Reinforcement World Model Learning for LLM-based Agents

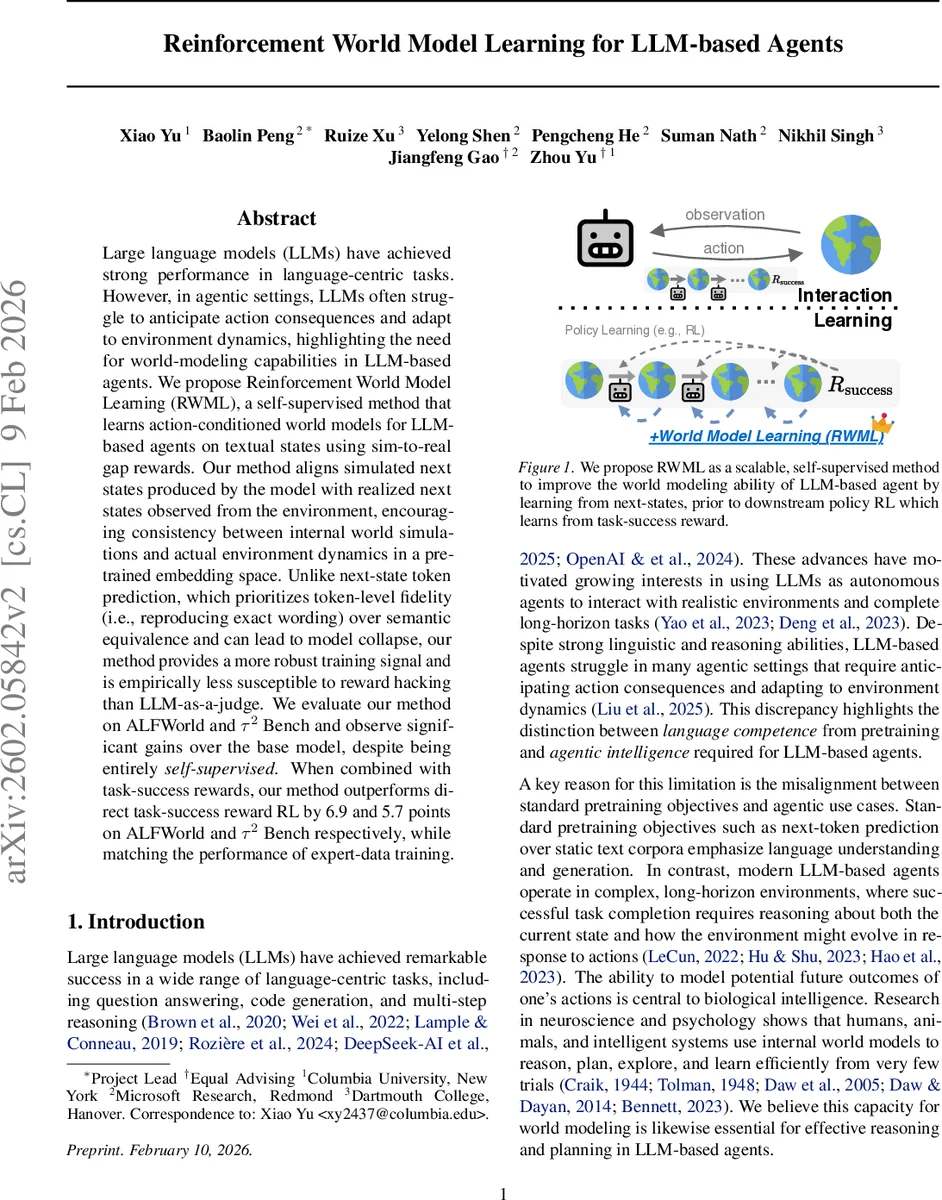

Large language models (LLMs) have achieved strong performance in language-centric tasks. However, in agentic settings, LLMs often struggle to anticipate action consequences and adapt to environment dynamics, highlighting the need for world-modeling capabilities in LLM-based agents. We propose Reinforcement World Model Learning (RWML), a self-supervised method that learns action-conditioned world models for LLM-based agents on textual states using sim-to-real gap rewards. Our method aligns simulated next states produced by the model with realized next states observed from the environment, encouraging consistency between internal world simulations and actual environment dynamics in a pre-trained embedding space. Unlike next-state token prediction, which prioritizes token-level fidelity (i.e., reproducing exact wording) over semantic equivalence and can lead to model collapse, our method provides a more robust training signal and is empirically less susceptible to reward hacking than LLM-as-a-judge. We evaluate our method on ALFWorld and $τ^2$ Bench and observe significant gains over the base model, despite being entirely self-supervised. When combined with task-success rewards, our method outperforms direct task-success reward RL by 6.9 and 5.7 points on ALFWorld and $τ^2$ Bench respectively, while matching the performance of expert-data training.

💡 Research Summary

The paper addresses a critical gap in the use of large language models (LLMs) as autonomous agents: while LLMs excel at language understanding and generation, they often fail to anticipate the consequences of their actions and adapt to dynamic environments. To bridge this gap, the authors introduce Reinforcement World Model Learning (RWML), a self‑supervised method that equips LLM‑based agents with action‑conditioned world‑model capabilities without relying on expert demonstrations or explicit task‑success rewards.

RWML reframes world‑model learning as a reinforcement learning problem. An LLM receives a history of observations and actions, generates a reasoning token sequence, and then predicts the next textual state $\hat{s}_{t+1}$. Instead of training the model to reproduce the exact next‑state tokens (as in standard supervised fine‑tuning, SFT), RWML scores how close $\hat{s}_{t+1}$ is to the true next state $s_{t+1}$ in the embedding space of a pre‑trained encoder $E$. The distance between the two embeddings is derived from their cosine similarity, and the reward is binary: 1 if the distance falls below a threshold $\tau_d$, and 0 otherwise. This binary, embedding‑based reward encourages semantic alignment while remaining robust to surface‑level token variations and, empirically, less susceptible to reward hacking.
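The reward rule described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes the distance is `1 - cosine similarity`, it takes already-computed embedding vectors instead of calling a real encoder $E$, and the function names and the threshold value `0.2` are hypothetical.

```python
import math
from typing import Sequence


def cosine_distance(u: Sequence[float], v: Sequence[float]) -> float:
    """Return 1 - cosine similarity of two non-zero vectors (assumed distance)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine distance is undefined for zero vectors")
    return 1.0 - dot / (norm_u * norm_v)


def sim_to_real_reward(
    pred_emb: Sequence[float], true_emb: Sequence[float], tau_d: float
) -> int:
    """Binary reward: 1 if the predicted-state embedding lies within tau_d
    of the realized-state embedding, else 0."""
    return 1 if cosine_distance(pred_emb, true_emb) < tau_d else 0


# Toy stand-ins for E(predicted next state) and E(observed next state).
close_prediction = sim_to_real_reward([1.0, 0.1], [1.0, 0.0], tau_d=0.2)
far_prediction = sim_to_real_reward([1.0, 0.0], [0.0, 1.0], tau_d=0.2)
```

Because the reward depends only on embedding distance, two differently worded predictions that describe the same next state can both earn a reward of 1, which is the property that separates this signal from token-level matching.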

Policy optimization is performed with Group Relative Policy Optimization (GRPO), which incorporates importance‑sampling ratios, KL‑regularization, and a group‑relative advantage computed from the binary reward. To focus learning on informative transitions, the authors introduce an “easy‑sample” filtering step. They first train a lightweight SFT model on 10 % of the data, then use it to score the remaining 90 % of rollouts. Samples on which this model consistently achieves high reward across multiple attempts are deemed “easy” and are down‑sampled (kept with probability 0.1). This preserves data diversity while preventing the model from over‑fitting to trivial transitions.
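The two mechanisms in this paragraph can be illustrated with a short sketch. It assumes the commonly used GRPO advantage (each reward standardized by the mean and standard deviation of its sampling group), which may differ in detail from the paper's exact formulation. The helper names, the `easy_threshold` cutoff for what counts as "consistently high reward", and the fixed seed are hypothetical; only the keep probability of 0.1 comes from the summary.

```python
import random
import statistics
from typing import List, Sequence


def group_relative_advantages(
    rewards: Sequence[float], eps: float = 1e-6
) -> List[float]:
    """Standardize each reward against its own sampling group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


def subsample_easy(
    pass_rates: Sequence[float],
    easy_threshold: float = 1.0,  # hypothetical cutoff for an "easy" sample
    keep_prob: float = 0.1,       # keep probability stated in the summary
    seed: int = 0,
) -> List[int]:
    """Return indices to keep: every hard sample, plus each easy sample
    with probability keep_prob."""
    rng = random.Random(seed)
    kept = []
    for idx, rate in enumerate(pass_rates):
        is_easy = rate >= easy_threshold
        if not is_easy or rng.random() < keep_prob:
            kept.append(idx)
    return kept


# One group of four sampled predictions with binary rewards.
advantages = group_relative_advantages([1, 0, 1, 0])

# Fraction of attempts on which the filter model earned reward 1, per sample.
kept_indices = subsample_easy([0.0, 0.5, 1.0, 1.0])
```

Note that a group whose rewards are all identical yields zero advantages and therefore no gradient signal, which is one reason transitions that are always predicted correctly contribute little and can be down-sampled.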

Experiments are conducted on two long‑horizon benchmarks: ALFWorld, a text‑based embodied household environment, and τ² Bench, an interleaved tool‑use and dialogue setting. In both cases the agent must reason about objects, tools, and user intents over many steps. The authors collect interaction data solely by letting the target model roll out trajectories (N = 3 for ALFWorld, N = 6 for τ² Bench) with a temperature of 1.0. No expert trajectories, strong LLMs, or task‑success signals are used during RWML training.

Results show that RWML alone improves the base model by 19.6 points on ALFWorld and 7.9 points on τ² Bench. When combined with standard task‑success reward RL (Policy RL), RWML‑augmented agents outperform pure Policy RL by 6.9 points (ALFWorld) and 5.7 points (τ² Bench), and match the performance of methods that rely on expert data and strong LLMs. Additional analyses reveal that RWML suffers far less catastrophic forgetting than a world‑model SFT baseline across a suite of general‑knowledge (MMLU‑Redux, IFEval), math (Math‑500, GSM8k, GPQA‑Diamond), and coding (LiveCodeBench) benchmarks. This aligns with prior findings that online RL preserves prior capabilities better than static fine‑tuning.

The authors also compare RWML to LLM‑as‑a‑judge rewards and report that the simple embedding‑based binary reward is empirically less vulnerable to reward hacking.

In summary, RWML contributes a scalable, self‑supervised pipeline that (1) learns action‑conditioned world models via reinforcement learning, (2) uses semantic similarity as a robust training signal, (3) filters out trivial samples to focus on informative dynamics, and (4) can be seamlessly combined with downstream task‑success RL. This “mid‑training” strategy offers a practical path to endow LLM agents with richer predictive models of their environments, improving planning, sample efficiency, and long‑term performance without the heavy reliance on expert data.
