ToMigo: Interpretable Design Concept Graphs for Aligning Generative AI with Creative Intent

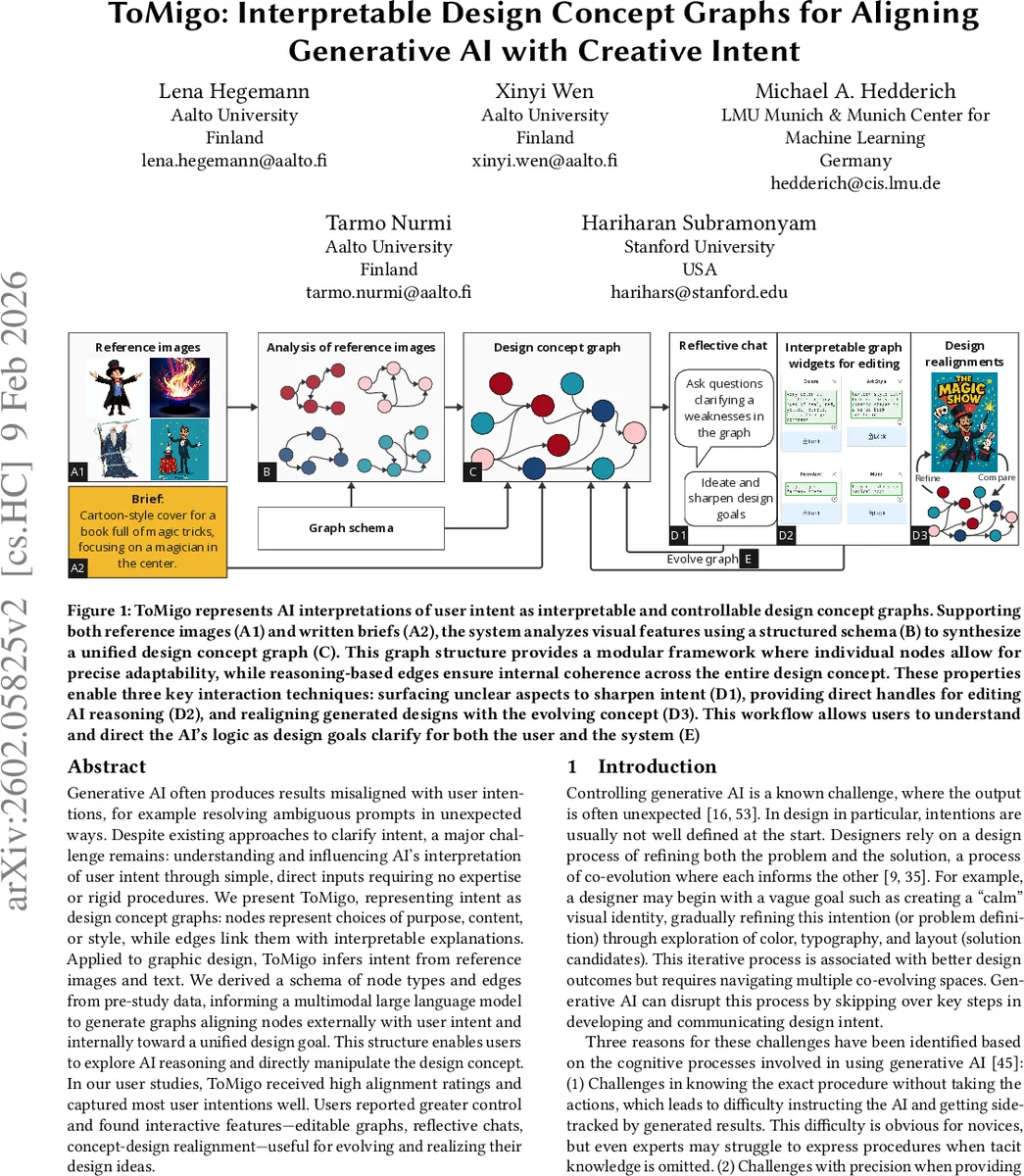

Generative AI often produces results misaligned with user intentions, for example, resolving ambiguous prompts in unexpected ways. Despite existing approaches to clarify intent, a major challenge remains: understanding and influencing AI’s interpretation of user intent through simple, direct inputs requiring no expertise or rigid procedures. We present ToMigo, representing intent as design concept graphs: nodes represent choices of purpose, content, or style, while edges link them with interpretable explanations. Applied to graphic design, ToMigo infers intent from reference images and text. We derived a schema of node types and edges from pre-study data, informing a multimodal large language model to generate graphs aligning nodes externally with user intent and internally toward a unified design goal. This structure enables users to explore AI reasoning and directly manipulate the design concept. In our user studies, ToMigo received high alignment ratings and captured most user intentions well. Users reported greater control and found the interactive features (editable graphs, reflective chats, and concept-design realignment) useful for evolving and realizing their design ideas.

💡 Research Summary

ToMigo addresses the persistent problem of misalignment between generative AI outputs and user intentions, especially when prompts are ambiguous or under‑specified. The system externalises a user’s creative intent as a “design concept graph” in which nodes encode purpose, content, and style decisions, while edges carry natural‑language explanations of how those decisions relate. By ingesting both textual briefs and reference images, a multimodal large language model (LLM) extracts explicit cues (e.g., “magician in the centre”, “comic‑book style”) and infers implicit cues (e.g., mood‑appropriate colour palettes, layout conventions). These cues are mapped onto a graph schema derived from a formative study that identified ten distinct intention types and their complex interdependencies.
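The graph structure described above can be illustrated with a minimal Python sketch. It is an assumption-laden illustration, not the authors' implementation: the names `ConceptNode`, `ConceptEdge`, `DesignConceptGraph`, and `build_example_graph` are hypothetical, and only the three node categories (purpose, content, style) and the idea of edges carrying natural-language explanations come from the text. The ten intention types from the formative study are not listed in this summary, so they are not modelled here, and the edge explanation in the example is invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Node categories named in the abstract; the finer-grained schema is not modelled.
NODE_KINDS = {"purpose", "content", "style"}


@dataclass
class ConceptNode:
    node_id: str
    kind: str   # one of NODE_KINDS
    value: str  # the design choice, in plain language

    def __post_init__(self) -> None:
        if self.kind not in NODE_KINDS:
            raise ValueError(f"unknown node kind: {self.kind!r}")


@dataclass
class ConceptEdge:
    source: str
    target: str
    explanation: str  # natural-language reason linking the two choices


@dataclass
class DesignConceptGraph:
    nodes: Dict[str, ConceptNode] = field(default_factory=dict)
    edges: List[ConceptEdge] = field(default_factory=list)

    def add_node(self, node: ConceptNode) -> None:
        self.nodes[node.node_id] = node

    def add_edge(self, source: str, target: str, explanation: str) -> None:
        # Refuse edges that point at nodes the graph does not contain.
        for node_id in (source, target):
            if node_id not in self.nodes:
                raise KeyError(f"unknown node: {node_id!r}")
        self.edges.append(ConceptEdge(source, target, explanation))

    def explanations_for(self, node_id: str) -> List[str]:
        # Collect the explanation of every edge touching the given node.
        return [e.explanation for e in self.edges
                if node_id in (e.source, e.target)]


def build_example_graph() -> DesignConceptGraph:
    # Node values reuse the explicit cues quoted in the summary.
    graph = DesignConceptGraph()
    graph.add_node(ConceptNode("subject", "content", "magician in the centre"))
    graph.add_node(ConceptNode("look", "style", "comic-book style"))
    # Hypothetical explanation text, written for this sketch only.
    graph.add_edge("subject", "look",
                   "A bold comic-book rendering keeps the central figure readable.")
    return graph
```

Keeping the explanation on the edge rather than on either node mirrors the description of edges as the carriers of reasoning, so a user inspecting one node can read every explanation that connects it to the rest of the concept.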

The graph serves as a shared, editable boundary object between the user and the AI. Two interaction techniques are built around it. First, Theory‑of‑Mind widgets let users inspect any node, read the AI’s reasoning, and directly edit node attributes via text fields, sliders, or dropdowns. Changes propagate automatically along the edges, preserving global coherence. Second, a reflective chat module detects ambiguous or conflicting graph regions and asks targeted clarification questions, allowing users to refine or add intentions on the fly. The graph can also be fed back into the generation pipeline so that updated designs are produced in line with the current graph state.
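The summary does not say how edits propagate along edges, so the following Python sketch shows only one plausible first step under stated assumptions: finding which nodes lie downstream of an edited node and would therefore need to be re-checked. It assumes edges are directed from the influencing choice to the influenced one and uses a plain breadth-first traversal. The function name `affected_nodes` and the example node names are hypothetical.

```python
from collections import deque
from typing import Dict, List, Tuple


def affected_nodes(edges: List[Tuple[str, str]], edited: str) -> List[str]:
    """Return nodes reachable from `edited` along directed edges.

    Nodes are listed in breadth-first order, nearest first, and the
    edited node itself is excluded.
    """
    # Build an adjacency list: source -> list of targets.
    adjacency: Dict[str, List[str]] = {}
    for source, target in edges:
        adjacency.setdefault(source, []).append(target)

    seen = {edited}
    order: List[str] = []
    queue = deque([edited])
    while queue:
        current = queue.popleft()
        for neighbour in adjacency.get(current, []):
            if neighbour not in seen:
                seen.add(neighbour)
                order.append(neighbour)
                queue.append(neighbour)
    return order


# Hypothetical concept graph: each pair reads "source influences target".
example_edges = [
    ("purpose", "mood"),
    ("mood", "palette"),
    ("purpose", "layout"),
    ("subject", "layout"),
]

# Editing the purpose touches mood and layout first, then the palette.
downstream = affected_nodes(example_edges, "purpose")
```

In a full system, each node in the returned list would presumably be handed back to the model for re-evaluation against the edited value. That step is outside this sketch.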

Two user studies validate the approach. In the first, participants created initial graphs from a brief and reference images; the resulting graphs matched the participants’ intended goals with 87% accuracy, outperforming baseline prompt‑only tools on alignment and usability metrics. In the second, participants engaged in an iterative design workflow, repeatedly editing the graph and regenerating cover designs. Graph‑driven edits led to a statistically significant increase (≈1.3 points on a 5‑point alignment scale) in perceived goal‑fit of the final outputs. Qualitative feedback highlighted the sense of control and transparency: users appreciated being able to see “why the AI chose a bright blue palette” and to correct it directly.

Limitations include the domain‑specific nature of the current schema (focused on graphic‑design covers) and occasional over‑generalisation in LLM‑generated edge explanations, which can become vague. The authors propose future work on graph neural‑network‑based update mechanisms, automatic schema generation for new design domains, and quality‑control modules for edge‑text generation.

Overall, ToMigo demonstrates that representing creative intent as an interpretable, modular graph bridges the gap between human designers and generative models, offering a scalable way to achieve alignment, explainability, and iterative refinement in AI‑assisted design.
