Learning-based Initialization of Trajectory Optimization for Path-following Problems of Redundant Manipulators

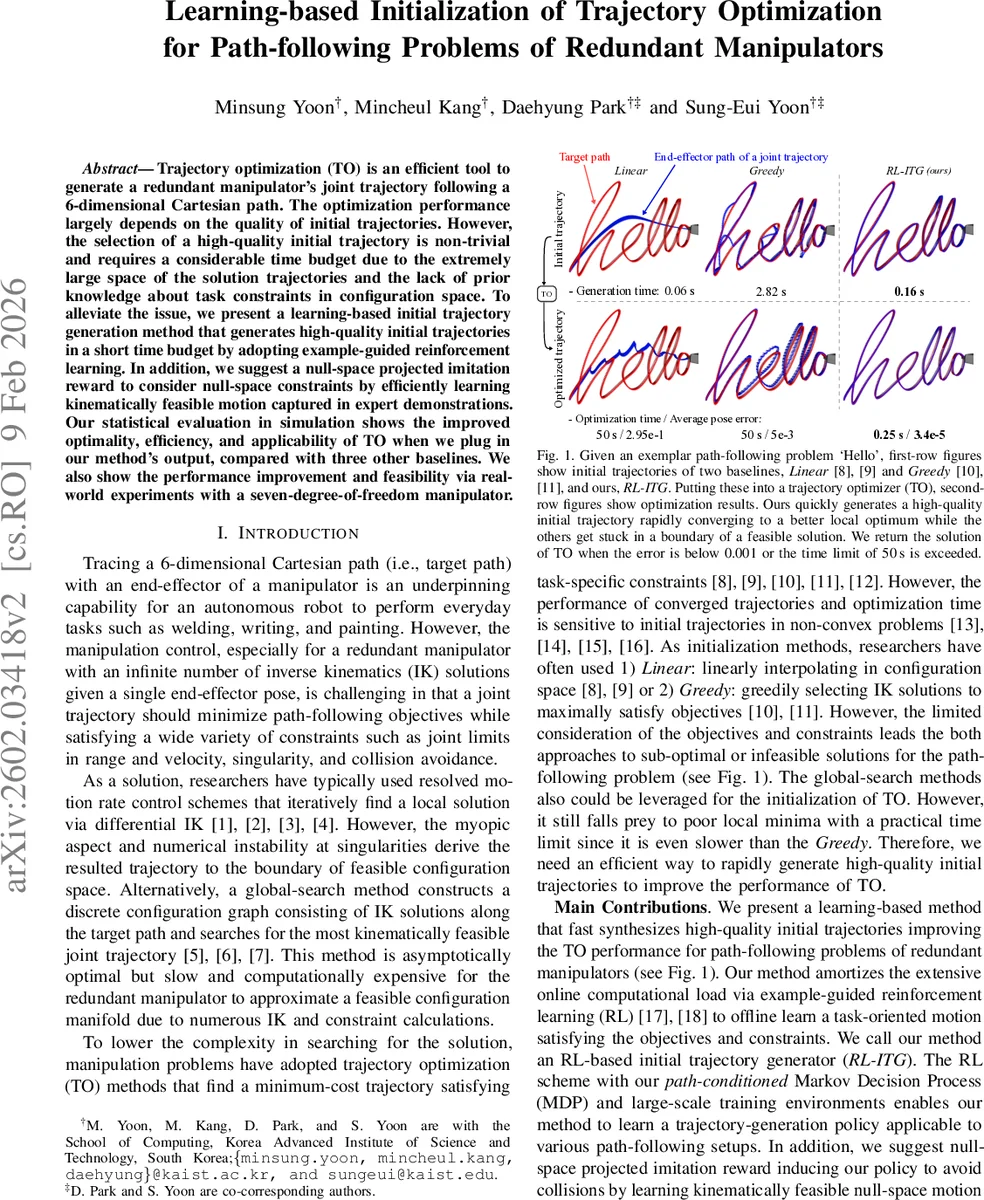

Trajectory optimization (TO) is an efficient tool to generate a redundant manipulator’s joint trajectory following a 6-dimensional Cartesian path. The optimization performance largely depends on the quality of initial trajectories. However, the selection of a high-quality initial trajectory is non-trivial and requires a considerable time budget due to the extremely large space of the solution trajectories and the lack of prior knowledge about task constraints in configuration space. To alleviate the issue, we present a learning-based initial trajectory generation method that generates high-quality initial trajectories in a short time budget by adopting example-guided reinforcement learning. In addition, we suggest a null-space projected imitation reward to consider null-space constraints by efficiently learning kinematically feasible motion captured in expert demonstrations. Our statistical evaluation in simulation shows the improved optimality, efficiency, and applicability of TO when we plug in our method’s output, compared with three other baselines. We also show the performance improvement and feasibility via real-world experiments with a seven-degree-of-freedom manipulator.

💡 Research Summary

Trajectory optimization (TO) is a powerful tool for generating joint trajectories of redundant manipulators that must follow a six‑dimensional Cartesian path while respecting constraints such as joint limits, singularities, and collision avoidance. However, the non‑convex nature of the TO problem makes the quality of the initial trajectory a critical factor: a poor initialization can trap the optimizer in a sub‑optimal local minimum or cause it to exceed practical time limits. Traditional initialization methods—linear interpolation in configuration space (Linear) and greedy selection of inverse‑kinematics (IK) solutions (Greedy)—consider only a subset of the objectives and constraints, often resulting in infeasible or low‑quality seeds for the optimizer.

The authors propose a learning‑based approach called RL‑ITG (Reinforcement‑Learning‑based Initial Trajectory Generator) that produces high‑quality initial trajectories rapidly by leveraging example‑guided reinforcement learning. The method formulates the path‑following problem as a path‑conditioned Markov Decision Process (MDP). The state at each step consists of the current joint configuration, a list of link poses, a set of relative distances to future target poses, and a scene‑context embedding derived from a 3‑D occupancy grid. The action is a continuous joint‑space increment, and the transition is deterministic.

The reward function is composed of three parts: (1) a task reward that penalizes positional and rotational errors between the end‑effector pose generated by the current joint configuration and the corresponding target pose; (2) an imitation reward that encourages the policy to reproduce the null‑space motion observed in expert demonstrations; and (3) a constraint reward that penalizes collisions, joint‑limit violations, singularities, and large deviations from the target path. The imitation reward is particularly novel: it projects the difference between the expert demonstration and the current configuration onto the null‑space of the Jacobian (I‑J†J), thereby guiding the policy toward kinematically feasible motions without directly copying potentially sub‑optimal demonstration values.

Training data are generated in large‑scale simulated environments. Five thousand random, collision‑free Cartesian paths are sampled within the reachable workspace of a Fetch robot, and each path is interpolated with a B‑spline to obtain dense waypoints. Five hundred random tabletop scenes with obstacles are also generated. Expert demonstrations are obtained by running a state‑of‑the‑art TO algorithm (e.g., TrajOpt) on these paths, providing the policy with examples of collision‑free, smooth null‑space motions. The policy is trained using Proximal Policy Optimization (PPO) over millions of steps, learning to map the rich state representation to joint increments that simultaneously reduce task error and respect constraints.

Experimental evaluation shows that RL‑ITG dramatically improves TO performance. When the trajectories generated by RL‑ITG are fed to a standard TO solver, the final cost (a weighted sum of pose error, obstacle avoidance, and smoothness) is reduced by more than 30 % compared with Linear and Greedy initializations. Moreover, the optimizer converges in an average of 0.25 seconds, far below the 50‑second time budget used for baseline comparisons, and achieves a 99 % success rate (final pose error < 0.001). Ablation studies on reward composition reveal that the combination of task reward with the null‑space projected imitation reward yields the fastest learning and highest success, whereas a naïve L2 imitation term degrades performance.

Real‑world experiments on a seven‑DoF Fetch robot validate the simulation results. In scenes with multiple obstacles, RL‑ITG‑initialized TO produces smooth, collision‑free trajectories without manual tuning, and the total planning time remains under 0.3 seconds. The authors also analyze which properties of the initial trajectory (low pose error, smooth null‑space motion, and constraint compliance) contribute most to the downstream TO improvement.

In summary, the paper makes three key contributions: (1) a novel example‑guided reinforcement‑learning framework for generating high‑quality initial trajectories for redundant manipulators; (2) the introduction of a null‑space projected imitation reward that effectively transfers feasible motion patterns from expert demonstrations while mitigating their sub‑optimality; and (3) extensive simulation and real‑robot validation demonstrating superior optimality, efficiency, and applicability over existing initialization methods. Future work may extend the approach to dynamic environments, multi‑robot coordination, and domain‑adaptation techniques to bridge the sim‑to‑real gap.

Comments & Academic Discussion

Loading comments...

Leave a Comment