Beyond Quantity: Trajectory Diversity Scaling for Code Agents

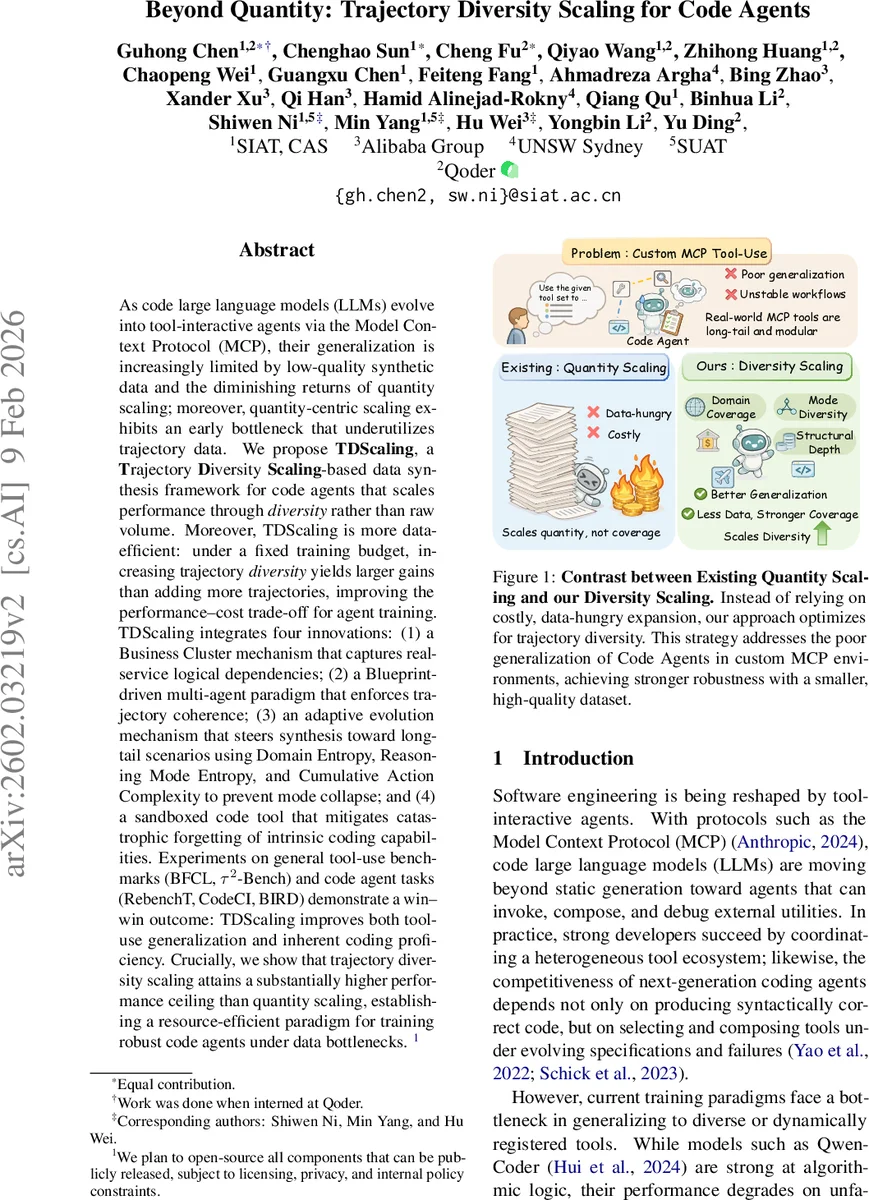

As code large language models (LLMs) evolve into tool-interactive agents via the Model Context Protocol (MCP), their generalization is increasingly limited by low-quality synthetic data and the diminishing returns of quantity scaling. Moreover, quantity-centric scaling exhibits an early bottleneck that underutilizes trajectory data. We propose TDScaling, a Trajectory Diversity Scaling-based data synthesis framework for code agents that scales performance through diversity rather than raw volume. Under a fixed training budget, increasing trajectory diversity yields larger gains than adding more trajectories, improving the performance-cost trade-off for agent training. TDScaling integrates four innovations: (1) a Business Cluster mechanism that captures real-service logical dependencies; (2) a blueprint-driven multi-agent paradigm that enforces trajectory coherence; (3) an adaptive evolution mechanism that steers synthesis toward long-tail scenarios using Domain Entropy, Reasoning Mode Entropy, and Cumulative Action Complexity to prevent mode collapse; and (4) a sandboxed code tool that mitigates catastrophic forgetting of intrinsic coding capabilities. Experiments on general tool-use benchmarks (BFCL, tau^2-Bench) and code agent tasks (RebenchT, CodeCI, BIRD) demonstrate a win-win outcome: TDScaling improves both tool-use generalization and inherent coding proficiency. We plan to release the full codebase and the synthesized dataset (including 30,000+ tool clusters) upon publication.

💡 Research Summary

The paper addresses a pressing limitation of modern code large language models (LLMs) that are being turned into tool‑interactive agents via the Model Context Protocol (MCP). While scaling the amount of synthetic interaction data has been the dominant strategy, the authors demonstrate that sheer quantity quickly hits diminishing returns: low‑entropy, repetitive trajectories fail to cover the long‑tail of real‑world tool ecosystems, leading to brittle agents that cannot generalize to new APIs, nested calls, or error‑recovery scenarios.

To overcome this, the authors propose TDScaling (Trajectory Diversity Scaling), a data‑synthesis framework that prioritizes diversity over raw volume. Under a fixed training budget, increasing the variety of trajectories yields larger performance gains than simply adding more samples. TDScaling consists of four tightly integrated innovations:

-

Business Cluster Sampling – MCP servers are grouped into “Business Clusters” that reflect logical service dependencies (e.g., payments, search, weather). A greedy maximum‑coverage algorithm selects a subset of clusters that maximizes functional‑class coverage while respecting a budget constraint. This reduces redundancy and ensures the synthetic data mirrors the semantic breadth of real services.

-

Blueprint‑Driven Multi‑Agent Synthesis – For each chosen cluster, a BlueprintAgent creates a Scenario Blueprint containing a user goal, execution plan, constraints, and a strategy profile. User, Assistant, and Observation agents then enact the blueprint in a turn‑based dialogue. The ObservationAgent enforces Dynamic Schema Locking to keep JSON schemas consistent across turns, preventing “structural hallucination.” A QualityAgent validates format and logical consistency before trajectories are fed back into a global memory.

-

Sandboxed Code Tool as Regularizer – When a blueprint flags a task that cannot be solved with standard functional tools, a sandboxed Python interpreter is invoked. This enables deterministic program‑of‑thought reasoning for complex data processing (e.g., multi‑criteria sorting) and, crucially, mitigates catastrophic forgetting of the model’s native coding abilities by interleaving genuine code generation with tool‑use interactions.

-

Adaptive Evolution Guided by Diversity Metrics – The system continuously measures three quantitative aspects of diversity:

- Domain Entropy (H_dom) – the normalized distribution of trajectories across business clusters, encouraging coverage of under‑represented domains.

- Reasoning Mode Entropy (H_mode) – Shannon entropy over dynamically discovered reasoning modes (hypothesis‑testing, recursive correction, etc.), pushing the model away from low‑effort, repetitive patterns.

- Cumulative Action Complexity (CAC) – a product of tool‑switching cost and hierarchical argument‑instantiation cost, quantifying the cognitive load of each action.

Trajectories are scored on these metrics; high‑scoring samples update the global memory, which in turn steers future blueprint generation toward under‑explored domains, richer reasoning styles, and higher structural depth.

Empirical Evaluation

The authors evaluate TDScaling on two families of benchmarks: (i) general tool‑use suites (BFCL, τ²‑Bench) that test an agent’s ability to discover, compose, and recover from a variety of APIs; and (ii) code‑agent‑specific tasks (RebenchT, CodeCI, BIRD) that assess both tool‑use and intrinsic coding proficiency. Compared with baseline quantity‑scaling pipelines, TDScaling achieves 10‑25 % higher accuracy or success rates while using the same number of synthetic trajectories. Notably, a Qwen3‑Coder‑30B‑A3B model trained with TDScaling matches the performance of a 480B‑scale model on these tasks, illustrating a higher performance ceiling for diversity‑first scaling. Moreover, the inclusion of the sandboxed code tool preserves baseline coding scores (e.g., HumanEval) that typically degrade during aggressive tool‑fine‑tuning.

Contributions and Impact

The paper makes four key contributions: (1) introducing a diversity‑centric synthesis paradigm that empirically outperforms quantity‑centric scaling; (2) devising a business‑cluster sampling strategy that respects real‑world service modularity; (3) presenting a blueprint‑driven multi‑agent pipeline with dynamic schema enforcement; and (4) formulating entropy‑ and complexity‑based metrics that guide adaptive evolution and prevent mode collapse. By demonstrating that trajectory diversity can be systematically measured, optimized, and leveraged, the work opens a new research direction for resource‑efficient training of robust code agents, especially in environments where new tools are constantly added.

The authors plan to release the full codebase, the 30,000+ business‑cluster dataset, and the generated trajectories, facilitating reproducibility and further exploration of diversity‑driven scaling in LLM‑based agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment