Fast KVzip: Efficient and Accurate LLM Inference with Gated KV Eviction

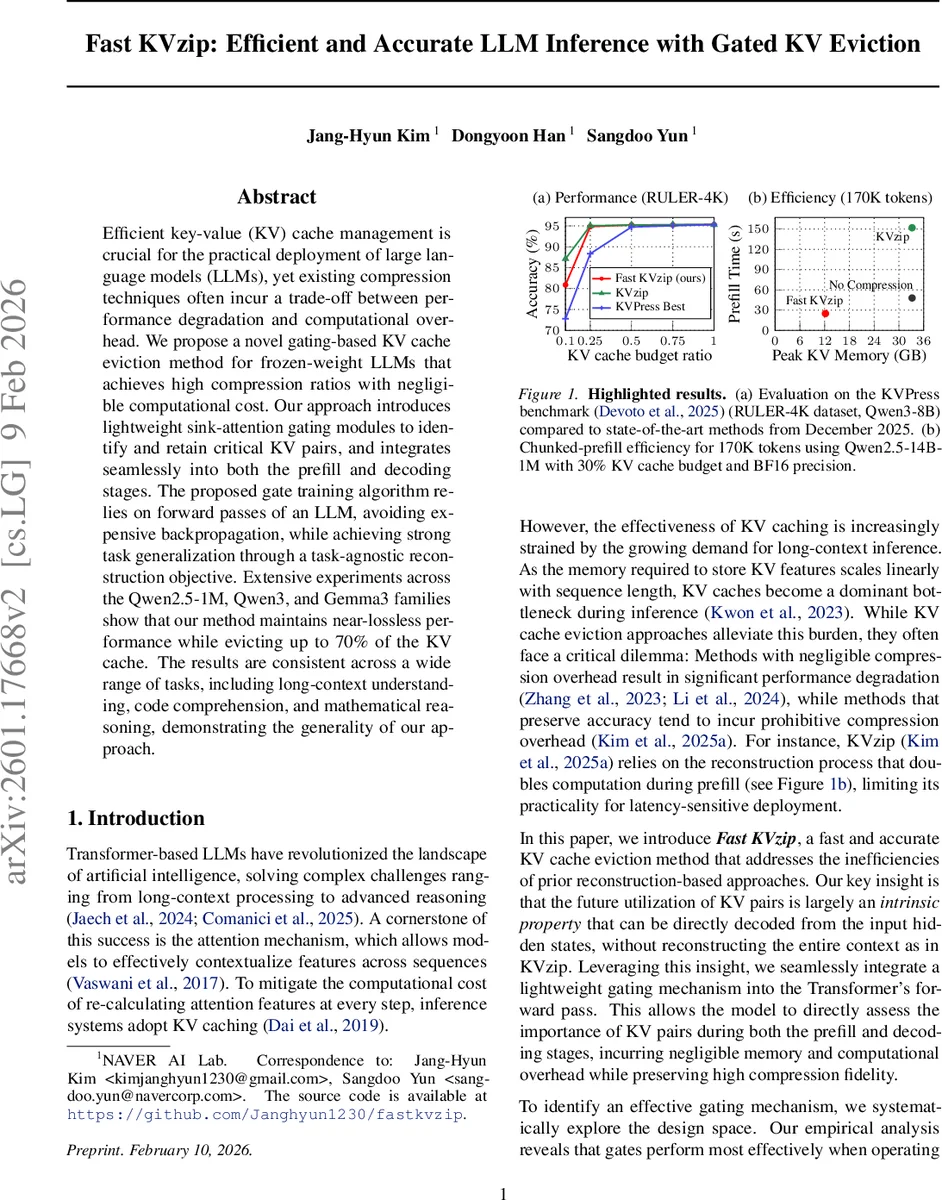

Efficient key-value (KV) cache management is crucial for the practical deployment of large language models (LLMs), yet existing compression techniques often incur a trade-off between performance degradation and computational overhead. We propose a novel gating-based KV cache eviction method for frozen-weight LLMs that achieves high compression ratios with negligible computational cost. Our approach introduces lightweight sink-attention gating modules to identify and retain critical KV pairs, and integrates seamlessly into both the prefill and decoding stages. The proposed gate training algorithm relies on forward passes of an LLM, avoiding expensive backpropagation, while achieving strong task generalization through a task-agnostic reconstruction objective. Extensive experiments across the Qwen2.5-1M, Qwen3, and Gemma3 families show that our method maintains near-lossless performance while evicting up to 70% of the KV cache. The results are consistent across a wide range of tasks, including long-context understanding, code comprehension, and mathematical reasoning, demonstrating the generality of our approach.
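The eviction step the abstract describes can be illustrated with a minimal sketch. It assumes the gate has already produced one importance score per cached KV pair, and that eviction keeps the highest-scoring fraction of pairs (a top-k rule). The function name `evict_kv` and the top-k rule are hypothetical. The paper's actual retention criterion (for example, a fixed score threshold) may differ. Plain Python lists stand in for per-head key and value tensors.

```python
from typing import List, Sequence, Tuple


def evict_kv(
    keys: Sequence[List[float]],
    values: Sequence[List[float]],
    gate_scores: Sequence[float],
    evict_ratio: float,
) -> Tuple[List[List[float]], List[List[float]], List[int]]:
    """Drop the lowest-scoring fraction of KV pairs for one attention head.

    Hypothetical rule: a higher gate score means a more important KV pair,
    and we keep the top round(n * (1 - evict_ratio)) pairs (at least one).
    """
    if not 0.0 <= evict_ratio < 1.0:
        raise ValueError("evict_ratio must be in [0, 1)")
    if not (len(keys) == len(values) == len(gate_scores)):
        raise ValueError("keys, values and gate_scores must have equal length")
    n = len(gate_scores)
    n_keep = max(1, round(n * (1.0 - evict_ratio))) if n else 0
    # Rank cache positions by gate score, highest first, and take the top n_keep.
    ranked = sorted(range(n), key=lambda i: gate_scores[i], reverse=True)
    # Re-sort the survivors so the compressed cache stays in token order.
    kept = sorted(ranked[:n_keep])
    return [keys[i] for i in kept], [values[i] for i in kept], kept


# Toy usage: 10 cached tokens, evict 70% of them (made-up scores).
scores = [0.9, 0.1, 0.5, 0.05, 0.8, 0.2, 0.3, 0.15, 0.7, 0.01]
keys = [[float(i)] for i in range(10)]
values = [[-float(i)] for i in range(10)]
kept_keys, kept_values, kept = evict_kv(keys, values, scores, evict_ratio=0.7)
print(kept)  # [0, 4, 8]
```

Returning the kept indices in token order keeps the compressed cache aligned with the positions of the surviving tokens.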

💡 Research Summary

The paper introduces Fast KVzip, a novel method for compressing the key-value (KV) cache of frozen-weight large language models (LLMs) with minimal computational overhead. Traditional KV-compression techniques either sacrifice accuracy when they prune the cache aggressively or incur heavy extra computation (e.g., reconstruction, back-propagation) that makes them impractical for latency-sensitive deployments. Fast KVzip resolves this trade-off by adding a lightweight gating module to each transformer layer; the module predicts the importance of each KV pair directly from the hidden states produced during the forward pass.
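To make "predicting importance from hidden states" and "training without backpropagating through the LLM" concrete, here is a minimal sketch under loud assumptions. It uses a plain linear-plus-sigmoid gate (`LinearGate`, a hypothetical stand-in, not the paper's sink-attention module). It also assumes that hidden states and target importance scores in [0, 1] have already been cached from forward passes of the frozen LLM (for instance under the reconstruction objective the abstract mentions). How those targets are computed is not specified here. Only the gate's own parameters are fitted.

```python
import math
import random
from typing import List, Sequence


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


class LinearGate:
    """Hypothetical per-layer gate: score(h) = sigmoid(w . h + b).

    Illustrative stand-in only; it is NOT the paper's sink-attention module.
    """

    def __init__(self, dim: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.w: List[float] = [rng.gauss(0.0, 0.01) for _ in range(dim)]
        self.b: float = 0.0

    def score(self, h: Sequence[float]) -> float:
        """Importance score in (0, 1) for the KV pair produced at hidden state h."""
        return sigmoid(sum(wi * hi for wi, hi in zip(self.w, h)) + self.b)

    def fit(
        self,
        hiddens: Sequence[Sequence[float]],
        targets: Sequence[float],
        lr: float = 0.5,
        epochs: int = 200,
    ) -> None:
        """Full-batch gradient descent on binary cross-entropy.

        Assumption: hiddens and targets were cached from forward passes of the
        frozen LLM, so only w and b are updated; no gradient touches the LLM.
        """
        n = len(hiddens)
        for _ in range(epochs):
            grad_w = [0.0] * len(self.w)
            grad_b = 0.0
            for h, t in zip(hiddens, targets):
                err = self.score(h) - t  # dBCE/dlogit for a sigmoid output
                for j, hj in enumerate(h):
                    grad_w[j] += err * hj
                grad_b += err
            self.w = [wi - lr * g / n for wi, g in zip(self.w, grad_w)]
            self.b -= lr * grad_b / n


# Toy usage: feature 0 marks "important" tokens (made-up data).
hiddens = [[1.0, 0.2], [0.9, -0.1], [-1.0, 0.3], [-0.8, -0.2]]
targets = [1.0, 1.0, 0.0, 0.0]
gate = LinearGate(dim=2)
gate.fit(hiddens, targets)
print(gate.score([1.0, 0.0]), gate.score([-1.0, 0.0]))
```

Because the targets are fixed numbers gathered once, each training step costs only a pass over the tiny gate, which is the property the summary contrasts with reconstruction- or backpropagation-heavy methods.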

Key technical contributions:

- Sink-attention gating: a simple, parameter-efficient module that maps per-layer hidden vectors to a set of scores rating the importance of each KV pair.
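One plausible reading of "sink-attention" gating, offered only as a hypothetical sketch since the summary gives no formula, is an attention score in which each key competes against a single learned "sink" logit instead of against every other key. Under that assumption the score is a two-way softmax, exp(l) / (exp(l) + exp(s)) = sigmoid(l - s), which always lies between 0 and 1. The names `query`, `sink_logit`, and `sink_attention_scores` are illustrative assumptions.

```python
import math
from typing import List, Sequence


def sink_attention_scores(
    query: Sequence[float],
    keys: Sequence[Sequence[float]],
    sink_logit: float,
) -> List[float]:
    """Hypothetical sink-attention gate.

    Each key competes only against one sink logit s, so its score is the
    two-way softmax exp(l) / (exp(l) + exp(s)) = sigmoid(l - s), which is
    mathematically in (0, 1).
    """
    scale = 1.0 / math.sqrt(len(query))  # standard scaled dot-product logit
    scores: List[float] = []
    for k in keys:
        logit = scale * sum(qi * ki for qi, ki in zip(query, k))
        m = max(logit, sink_logit)  # subtract the max for numerical stability
        num = math.exp(logit - m)
        scores.append(num / (num + math.exp(sink_logit - m)))
    return scores


# Toy usage with made-up vectors: aligned, orthogonal, and opposed keys.
query = [1.0, 0.0]
keys = [[2.0, 0.0], [0.0, 1.0], [-2.0, 0.0]]
print(sink_attention_scores(query, keys, sink_logit=0.0))
```

In this sketch each KV pair's score is independent of how many other pairs are cached, unlike a full softmax over all keys, where scores shrink as the context grows; that makes a fixed threshold or top-k cut behave consistently across sequence lengths.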
