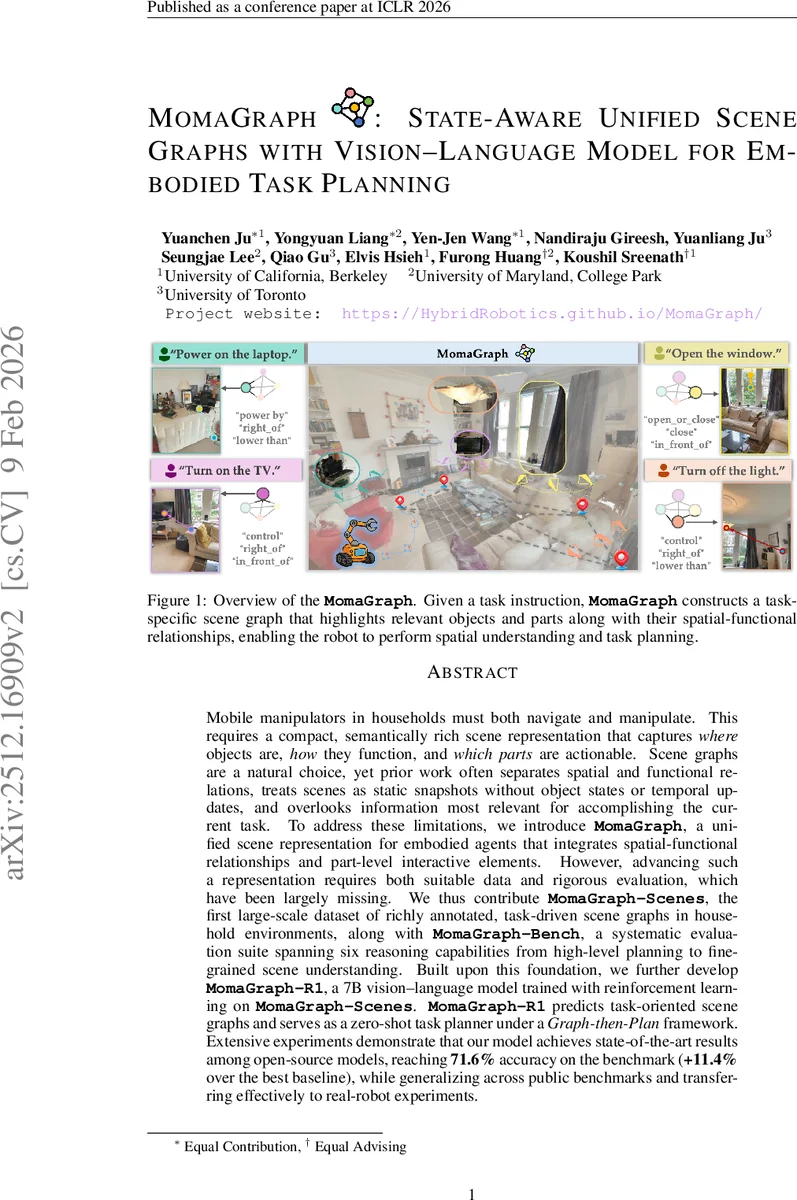

MomaGraph: State-Aware Unified Scene Graphs with Vision-Language Model for Embodied Task Planning

Mobile manipulators in households must both navigate and manipulate. This requires a compact, semantically rich scene representation that captures where objects are, how they function, and which parts are actionable. Scene graphs are a natural choice, yet prior work often separates spatial and functional relations, treats scenes as static snapshots without object states or temporal updates, and overlooks information most relevant for accomplishing the current task. To address these limitations, we introduce MomaGraph, a unified scene representation for embodied agents that integrates spatial-functional relationships and part-level interactive elements. However, advancing such a representation requires both suitable data and rigorous evaluation, which have been largely missing. We thus contribute MomaGraph-Scenes, the first large-scale dataset of richly annotated, task-driven scene graphs in household environments, along with MomaGraph-Bench, a systematic evaluation suite spanning six reasoning capabilities from high-level planning to fine-grained scene understanding. Built upon this foundation, we further develop MomaGraph-R1, a 7B vision-language model trained with reinforcement learning on MomaGraph-Scenes. MomaGraph-R1 predicts task-oriented scene graphs and serves as a zero-shot task planner under a Graph-then-Plan framework. Extensive experiments demonstrate that our model achieves state-of-the-art results among open-source models, reaching 71.6% accuracy on the benchmark (+11.4% over the best baseline), while generalizing across public benchmarks and transferring effectively to real-robot experiments.

💡 Research Summary

MomaGraph tackles the fundamental problem of how household mobile manipulators can simultaneously navigate and manipulate objects by introducing a unified, task‑oriented scene graph representation. Traditional scene graphs either encode spatial relationships (e.g., “on top of”) or functional relationships (e.g., “can be opened”) but never both, and they treat scenes as static snapshots, ignoring object state changes and task relevance. MomaGraph resolves these issues by defining a graph G_T = (N_T, E_T^s, E_T^f) where nodes N_T include both whole objects and fine‑grained parts (handles, buttons, etc.) that are directly relevant to a given natural‑language instruction T. Directed edges capture spatial relations (E_T^s) and functional affordances (E_T^f) in a single structure, enabling compact yet expressive scene encoding.

To train and evaluate such a representation, the authors construct two major resources. MomaGraph‑Scenes is a large‑scale dataset containing over 10 k indoor rooms, multi‑view RGB‑D images, executed action logs, part‑level annotations, and task‑conditioned scene graphs for 30+ household tasks. MomaGraph‑Bench is a systematic benchmark that measures six reasoning capabilities: high‑level task planning, navigation, part detection, state inference, relational consistency, and graph format compliance. This benchmark provides a unified yardstick for assessing how well a model’s scene graph supports downstream planning.

The core model, MomaGraph‑R1, builds on the open‑source Qwen‑2.5‑VL‑7B‑Instruct vision‑language model and is fine‑tuned with reinforcement learning (DAPO). The reward function explicitly evaluates (a) correct action‑type prediction, (b) semantic similarity of predicted vs. ground‑truth spatial and functional edges, (c) node set IoU, and (d) JSON format and output length constraints. By optimizing this multi‑component reward, the model learns to generate accurate, compact, task‑specific graphs directly from raw images.

Empirical results demonstrate the superiority of the unified graph. When the same backbone is trained with only spatial edges or only functional edges, performance drops by 6–7 percentage points compared to the full unified version (overall accuracy 71.6% vs. 64.9% and 59.9%). The advantage holds across a different backbone (LLaVA‑One). Moreover, direct planning from images using a large closed‑source VLM (GPT‑5) frequently omits prerequisite steps or misidentifies interaction types, achieving only ~45% success on complex tasks. In contrast, the Graph‑then‑Plan pipeline with MomaGraph‑R1 yields correct, complete plans with >78% success.

Real‑world robot experiments on four composite tasks (e.g., “open the window”, “obtain clean boiled water”) confirm the simulation findings: MomaGraph‑R1 achieves an average 85% success rate, outperforming baseline planners by roughly 15 percentage points. The model proves robust to visual noise and reliably selects the correct interactive parts.

The paper concludes that a unified spatial‑functional‑part scene graph is essential for embodied task planning, that large‑scale task‑oriented datasets and systematic benchmarks are now available, and that reinforcement‑learning‑enhanced VLMs can effectively learn to produce such graphs. Limitations include static graph updates (no real‑time temporal reasoning) and reliance on simulated data. Future work is suggested on dynamic graph maintenance, multi‑agent collaboration, and scaling to larger multimodal pre‑training to achieve full autonomy in real home environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment