How to Correctly Report LLM-as-a-Judge Evaluations

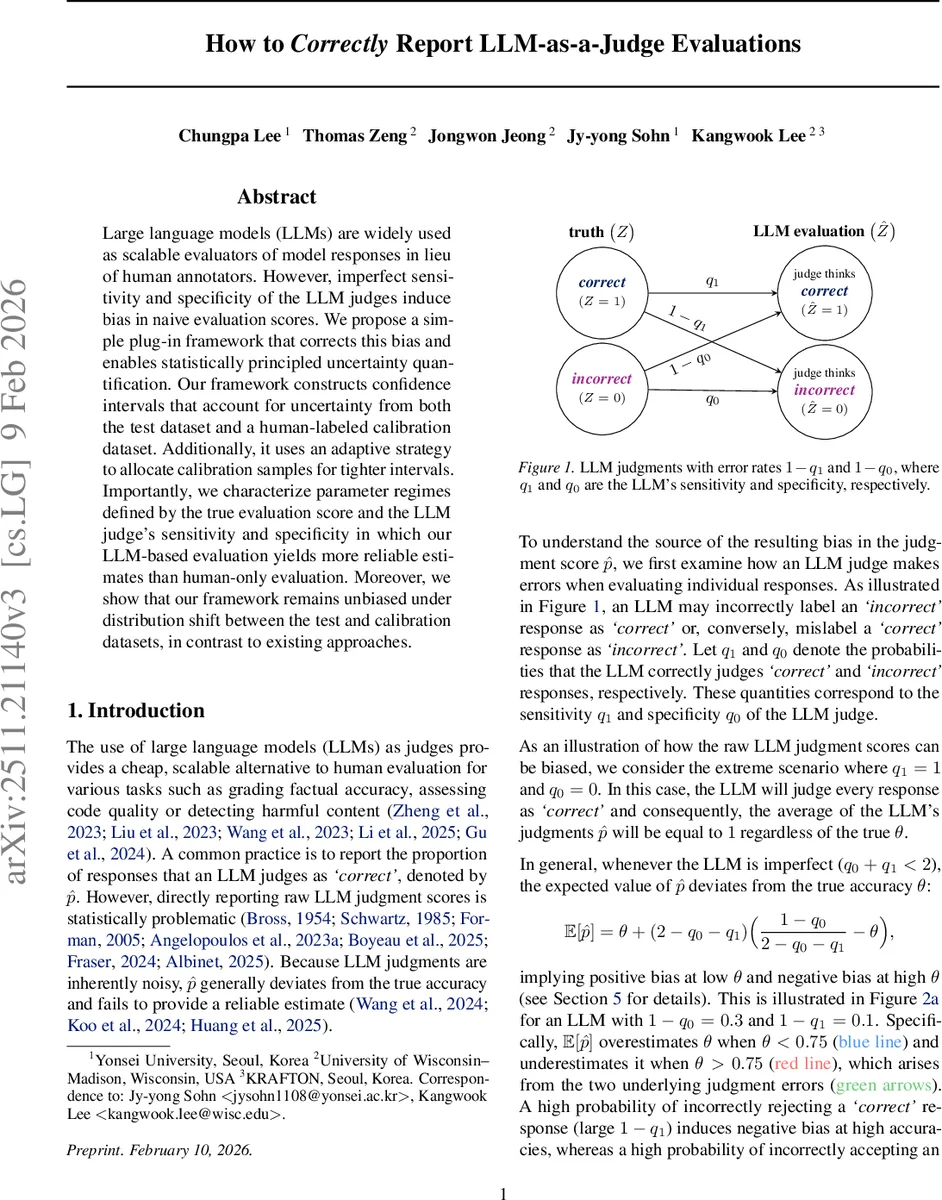

Large language models (LLMs) are widely used as scalable evaluators of model responses in lieu of human annotators. However, imperfect sensitivity and specificity of the LLM judges induce bias in naive evaluation scores. We propose a simple plug-in framework that corrects this bias and enables statistically principled uncertainty quantification. Our framework constructs confidence intervals that account for uncertainty from both the test dataset and a human-labeled calibration dataset. Additionally, it uses an adaptive strategy to allocate calibration samples for tighter intervals. Importantly, we characterize parameter regimes defined by the true evaluation score and the LLM judge’s sensitivity and specificity in which our LLM-based evaluation yields more reliable estimates than human-only evaluation. Moreover, we show that our framework remains unbiased under distribution shift between the test and calibration datasets, in contrast to existing approaches.

💡 Research Summary

The paper addresses a critical statistical flaw in the increasingly popular practice of using large language models (LLMs) as judges to evaluate the quality of model outputs, a practice that replaces costly human annotation. The authors first formalize the problem: the true binary accuracy of a system, denoted θ = Pr(Z = 1), is estimated in practice by the empirical proportion (\hat p) of responses that the LLM judges as “correct.” Because the LLM is an imperfect classifier, its sensitivity (q₁ = Pr(Ĥ = 1 | Z = 1)) and specificity (q₀ = Pr(Ĥ = 0 | Z = 0)) are typically less than one, causing (\hat p) to be a biased estimator of θ. The bias is analytically derived as

(E

Comments & Academic Discussion

Loading comments...

Leave a Comment