Color3D: Controllable and Consistent 3D Colorization with Personalized Colorizer

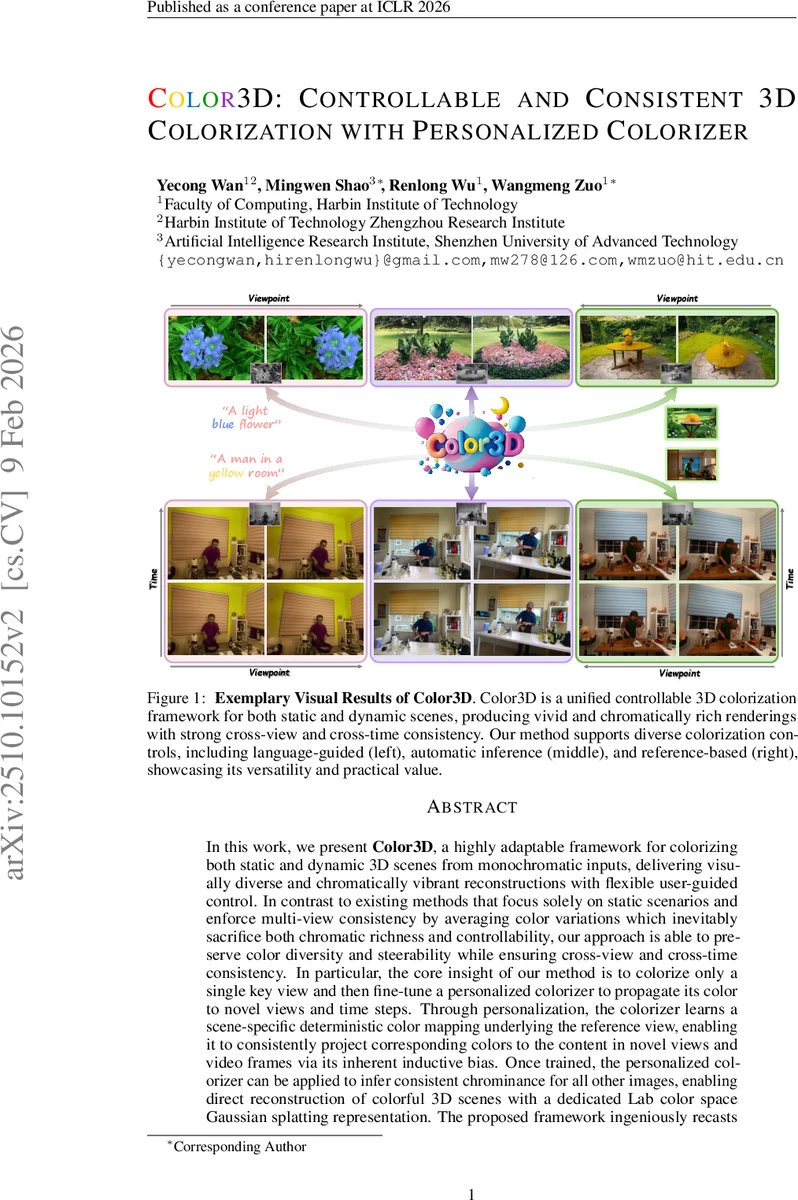

In this work, we present Color3D, a highly adaptable framework for colorizing both static and dynamic 3D scenes from monochromatic inputs, delivering visually diverse and chromatically vibrant reconstructions with flexible user-guided control. In contrast to existing methods, which focus solely on static scenarios and enforce multi-view consistency by averaging color variations, inevitably sacrificing both chromatic richness and controllability, our approach preserves color diversity and steerability while ensuring cross-view and cross-time consistency. In particular, the core insight of our method is to colorize only a single key view and then fine-tune a personalized colorizer to propagate its color to novel views and time steps. Through personalization, the colorizer learns a scene-specific deterministic color mapping underlying the reference view, enabling it to consistently project corresponding colors to the content in novel views and video frames via its inherent inductive bias. Once trained, the personalized colorizer can be applied to infer consistent chrominance for all other images, enabling direct reconstruction of colorful 3D scenes with a dedicated Lab color space Gaussian splatting representation. The proposed framework ingeniously recasts complicated 3D colorization as a more tractable single-image paradigm, allowing seamless integration of arbitrary image colorization models with enhanced flexibility and controllability. Extensive experiments across diverse static and dynamic 3D colorization benchmarks substantiate that our method can deliver more consistent and chromatically rich renderings with precise user control. Project page: https://yecongwan.github.io/Color3D/.

💡 Research Summary


Color3D introduces a novel paradigm for colorizing both static and dynamic 3D scenes from grayscale inputs by reducing the problem to a single‑image colorization task followed by scene‑specific propagation. The pipeline consists of three main stages: (1) key‑view selection, (2) personalized colorizer training, and (3) 3D scene reconstruction with a Lab‑space Gaussian splatting representation.

In the first stage, all input grayscale images or video frames are encoded with CLIP, and a pairwise cosine‑similarity matrix is built. For each view, an entropy is computed from the normalized similarity distribution; the view with the highest entropy—i.e., the one most evenly related to all others—is chosen as the key view because it contains the richest visual information about the whole scene.
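The entropy-based selection described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the per-view feature vectors are taken as given (in the described pipeline they would come from a CLIP image encoder), and the summary does not say how the similarities are normalized, so the softmax, the `temperature` parameter, the exclusion of self-similarity, and the name `select_key_view` are all hypothetical choices.

```python
import numpy as np

def select_key_view(features: np.ndarray, temperature: float = 0.1) -> int:
    """Return the index of the view whose similarity distribution has the highest entropy.

    features: (num_views, dim) array of per-view embeddings (assumed precomputed).
    temperature: softmax temperature; an assumption, not taken from the paper.
    """
    # L2-normalize so that dot products are cosine similarities.
    unit = features / np.linalg.norm(features, axis=1, keepdims=True)
    sim = unit @ unit.T

    # Ignore each view's similarity to itself (assumption).
    np.fill_diagonal(sim, -np.inf)

    # Row-wise softmax turns similarities into a probability distribution.
    logits = sim / temperature
    logits -= logits.max(axis=1, keepdims=True)
    probs = np.exp(logits)
    probs /= probs.sum(axis=1, keepdims=True)

    # Shannon entropy per view, treating 0 * log(0) as 0.
    safe = np.where(probs > 0, probs, 1.0)
    entropy = -(probs * np.log(safe)).sum(axis=1)
    return int(np.argmax(entropy))
```

With three toy embeddings `[1, 0]`, `[0, 1]`, and `[1, 1]`, the third view is equally similar to the other two, so its distribution is uniform and it is the one selected.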

The selected key view is then colored using any off‑the‑shelf 2D colorization model. The framework deliberately remains model‑agnostic, allowing automatic, language‑guided, or reference‑based colorization without additional modification. To avoid over‑fitting and to improve the robustness of the subsequent personalized colorizer, the authors propose a comprehensive single‑view augmentation scheme. This scheme combines generative augmentations (Stable Diffusion out‑painting, Stable Video Diffusion for synthetic motion, and Stable Virtual Camera for novel viewpoints) with classic augmentations (rotation, flip, grid shuffle, elastic deformation). The generative augmentations are guided by a caption generated by LLaVA, ensuring that the synthetic content shares the same chromatic style as the original key view.
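For the classic augmentations, the essential constraint is that the grayscale input and its colorized target receive exactly the same geometric transform, so that every pixel keeps its color label. The sketch below illustrates this for flip, 90-degree rotation, and grid shuffle only; elastic deformation and the generative augmentations are omitted. The function names, the restriction to 90-degree rotations, and the grid size are assumptions made for illustration, not details from the paper.

```python
import numpy as np

def grid_shuffle(image: np.ndarray, grid: int, perm: np.ndarray) -> np.ndarray:
    """Split an H x W x C image into grid x grid cells and reorder them by perm."""
    h, w = image.shape[:2]
    ch, cw = h // grid, w // grid
    # Crop so the image divides evenly into cells.
    image = image[: ch * grid, : cw * grid]
    cells = [
        image[r * ch:(r + 1) * ch, c * cw:(c + 1) * cw]
        for r in range(grid)
        for c in range(grid)
    ]
    rows = [
        np.concatenate([cells[perm[r * grid + c]] for c in range(grid)], axis=1)
        for r in range(grid)
    ]
    return np.concatenate(rows, axis=0)

def augment_pair(gray: np.ndarray, color: np.ndarray,
                 rng: np.random.Generator, grid: int = 2):
    """Apply one random flip, rotation, and grid shuffle to both images alike."""
    # Sample the transform parameters once, then reuse them for both images.
    flip = rng.random() < 0.5
    k = int(rng.integers(0, 4))           # number of 90-degree rotations
    perm = rng.permutation(grid * grid)   # cell order for the grid shuffle
    out = []
    for img in (gray, color):
        if flip:
            img = img[:, ::-1]            # horizontal flip
        img = np.rot90(img, k)            # rotates the first two axes
        img = grid_shuffle(img, grid, perm)
        out.append(img)
    return out[0], out[1]
```

Sampling the parameters once and applying them to both arrays is what keeps the input and target aligned; sampling independently per image would break the pixel-wise correspondence the colorizer is trained on.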

The personalized colorizer is built on a frozen DDColor encoder, a trainable adapter, and a CNN decoder. It is fine‑tuned on the augmented dataset using a combination of L1 reconstruction loss, CLIP‑based semantic alignment loss, and an entropy‑based consistency loss that encourages the same content to retain identical colors across all augmentations. Because the model is trained on a single reference image and its consistent augmentations, it learns a deterministic one‑to‑one color mapping that can be applied to any novel view or video frame of the same scene.

Once the personalized colorizer is ready, it is applied to all remaining grayscale views or frames, producing Lab‑space color predictions. The authors then reconstruct the full 3D scene using 3D Gaussian Splatting for static scenes or 4D Gaussian Splatting for dynamic ones. A two‑stage optimization is employed: first, only the luminance (L) channel is optimized to secure geometric fidelity; second, the full Lab channels are jointly refined to inject vivid chrominance. This separation preserves structural accuracy while allowing rich, consistent color appearance.
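The two-stage schedule can be pictured as a loss that looks only at the L channel early in training and at all three Lab channels afterwards. The following is a minimal sketch of that idea, assuming an L1 photometric loss and a fixed step threshold; the function name `staged_lab_loss`, the `stage1_steps` parameter, and the choice of L1 are assumptions, and the actual Gaussian splatting objective may contain further terms.

```python
import numpy as np

def staged_lab_loss(rendered: np.ndarray, target: np.ndarray,
                    step: int, stage1_steps: int) -> float:
    """Mean absolute error on L only during stage one, on L, a, b afterwards.

    rendered, target: (H, W, 3) arrays in Lab order (L, a, b).
    stage1_steps: number of luminance-only steps (hypothetical parameter).
    """
    diff = np.abs(rendered - target)
    if step < stage1_steps:
        # Stage one: supervise luminance only, so geometry is fitted
        # without being influenced by predicted chrominance.
        return float(diff[..., 0].mean())
    # Stage two: supervise all Lab channels jointly.
    return float(diff.mean())
```

Because the L channel comes directly from the grayscale input while a and b come from the colorizer, fitting L first means any residual chrominance inconsistency cannot distort the recovered structure.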

Extensive experiments on static benchmarks (DTU, Tanks & Temples) and dynamic datasets (DynamicScenes, in‑the‑wild video captures) demonstrate that Color3D outperforms prior 3D‑colorization methods such as ColorNeRF and ChromaDistill. Quantitatively, it achieves higher PSNR (≈ +1.2 dB), improved SSIM (+0.03), and lower LPIPS, while also showing superior color‑consistency metrics across viewpoints and time. Qualitatively, the method produces saturated, diverse palettes and respects user‑provided controls (e.g., language prompts) without the color flattening observed in averaging‑based approaches.

The paper acknowledges two limitations: (1) the augmentation and personalized fine‑tuning steps increase overall runtime compared to a naïve 2D‑to‑3D pipeline, and (2) if the generative augmentations diverge significantly from the true scene content, the learned mapping may over‑generalize, leading to occasional color mismatches. Future work is suggested to develop automatic quality assessment for synthetic augmentations and to explore lighter‑weight personalized models that require fewer augmentations.

In summary, Color3D offers a unified, controllable, and consistent solution for 3D colorization by leveraging a scene‑specific colorizer trained on a single, richly augmented key view and by integrating this color information directly into a Lab‑space Gaussian splatting framework. This approach simultaneously addresses the long‑standing trade‑off between color richness, cross‑view consistency, and user controllability in 3D scene reconstruction.
