VAO: Validation-Aligned Optimization for Cross-Task Generative Auto-Bidding

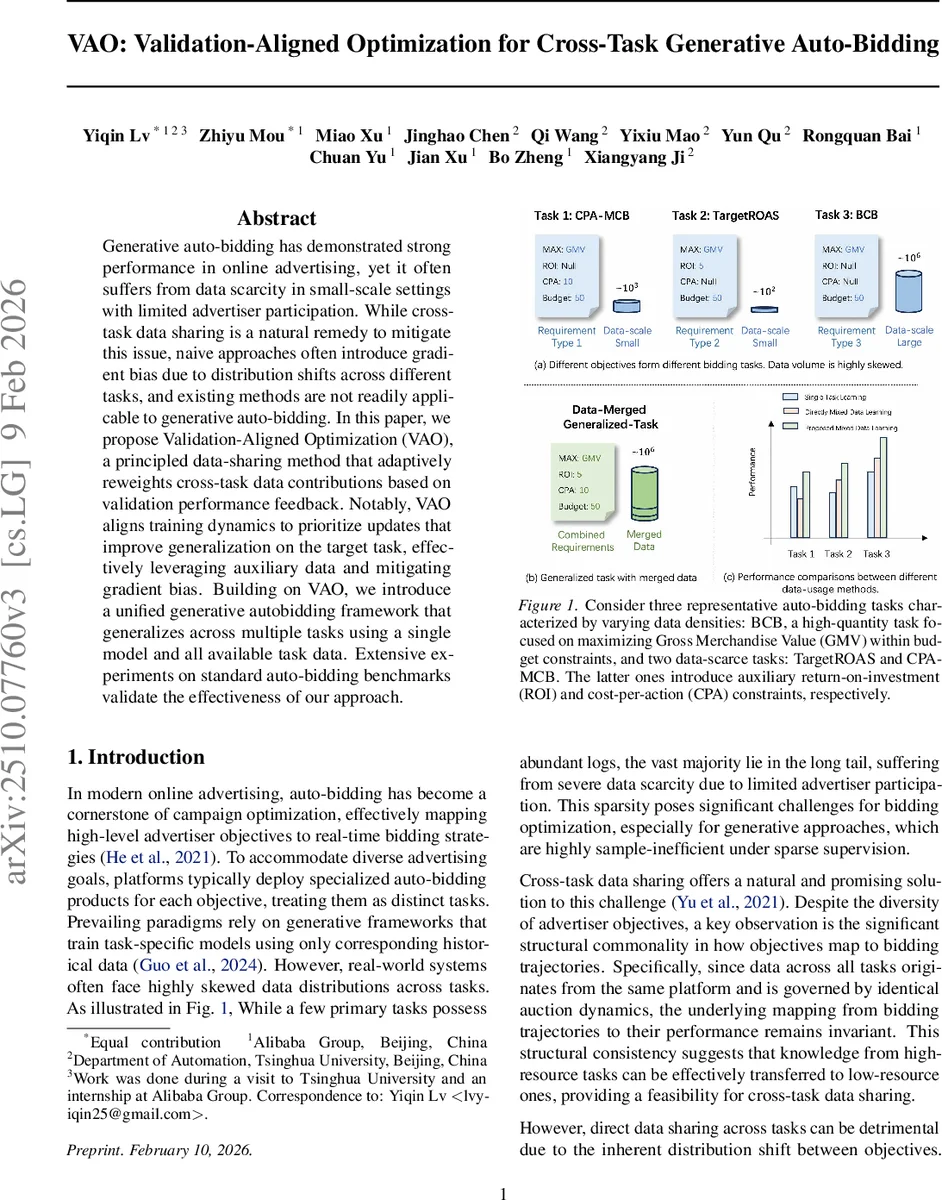

Generative auto-bidding has demonstrated strong performance in online advertising, yet it often suffers from data scarcity in small-scale settings with limited advertiser participation. Cross-task data sharing is a natural remedy, but naive approaches often introduce gradient bias due to distribution shifts across tasks, and existing methods are not readily applicable to generative auto-bidding. In this paper, we propose Validation-Aligned Optimization (VAO), a principled data-sharing method that adaptively reweights cross-task data contributions based on validation performance feedback. Notably, VAO aligns training dynamics to prioritize updates that improve generalization on the target task, effectively leveraging auxiliary data while mitigating gradient bias. Building on VAO, we introduce a unified generative auto-bidding framework that generalizes across multiple tasks using a single model and all available task data. Extensive experiments on standard auto-bidding benchmarks validate the effectiveness of our approach.

💡 Research Summary

The paper tackles the chronic data‑scarcity problem that plagues generative auto‑bidding systems in online advertising. While high‑resource tasks (e.g., budget‑constrained bidding) have abundant logs, many advertisers run low‑budget or niche campaigns that generate only a few hundred trajectories, making it difficult for generative models, which are notoriously sample‑inefficient, to learn reliable bidding policies. Sharing data across tasks is a natural remedy, because all tasks share the same auction dynamics and state‑transition model. However, naïve cross‑task sharing, which simply pools all logs, relabels them with the target task’s conditioning vector, and trains on the combined set, suffers from severe distribution shift. The authors formalize this issue with a generalization bound whose bias term sums, over the source tasks i, the product of each task’s share of the pooled data (α_i) and its total‑variation distance from the target task k’s distribution, d_TV(P_i, P_k). When the target task is data‑poor, almost all pooled data comes from the sources, so this bias dominates the bound, which explains why naïve sharing can degrade performance rather than improve it.
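To make the bias term concrete, the following Python sketch computes Σ_i α_i d_TV(P_i, P_k) for naïve pooling. It is a minimal illustration that assumes each task’s distribution can be summarized as a discrete histogram over a shared set of bins and that the bias term takes exactly this form with the bound’s constants omitted; the task counts and histograms are hypothetical toy values, not data from the paper.

```python
from typing import Dict, List


def tv_distance(p: List[float], q: List[float]) -> float:
    """Total-variation distance between two discrete distributions on the same bins."""
    if len(p) != len(q):
        raise ValueError("distributions must be defined on the same bins")
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


def naive_pooling_bias(counts: Dict[str, int],
                       dists: Dict[str, List[float]],
                       target: str) -> float:
    """Sum over tasks of alpha_i * d_TV(P_i, P_target), with alpha_i = n_i / sum_j n_j.

    The target task is included in the sum but contributes zero,
    since d_TV(P_k, P_k) = 0.
    """
    total = sum(counts.values())
    return sum((n / total) * tv_distance(dists[task], dists[target])
               for task, n in counts.items())


# Hypothetical toy setting: a large BCB source, a smaller Target-ROAS source,
# and a data-poor CPA-MCB target, each summarized as a 3-bin histogram.
counts = {"BCB": 9000, "Target-ROAS": 800, "CPA-MCB": 200}
dists = {
    "BCB": [0.7, 0.2, 0.1],
    "Target-ROAS": [0.2, 0.5, 0.3],
    "CPA-MCB": [0.1, 0.5, 0.4],
}
bias = naive_pooling_bias(counts, dists, target="CPA-MCB")
print(round(bias, 3))  # 0.548, of which 0.54 comes from the mismatched BCB logs
```

In this toy case the BCB source holds 90% of the pooled data and sits at d_TV = 0.6 from the target, so it accounts for almost the entire bias; reducing that task’s effective share is what shrinks the term.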

To address the bias, the authors propose Validation‑Aligned Optimization (VAO). VAO introduces a meta‑learning loop that uses a held‑out validation set from the target task to adaptively weight each source task’s contribution during training. Concretely, each source task i receives a weight w_i, learned by minimizing the target validation loss evaluated after a gradient step on the weighted mixture of source losses. This aligns the training dynamics with the direction that most improves target generalization and effectively replaces the data proportions α_i in the bias term with the learned weights, giving Σ_i w_i d_TV(P_i, P_k). Because w_i is driven by validation performance, tasks that are structurally similar to the target receive higher weight even if they have fewer samples, while large but mismatched tasks are down‑weighted.
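The weight‑learning loop can be illustrated with a deliberately tiny example. The sketch below is one plausible instantiation under our own assumptions, not the paper’s algorithm: a scalar linear model y ≈ θx, a one‑step lookahead hypergradient for the source weights w_i (the gradient of the target validation loss with respect to w_i, taken through one weighted training step), and clipping plus renormalization to keep the weights on the probability simplex. The task slopes, learning rates, and step count are hypothetical, and data volume enters only through the initial weights, which mimic naïve pooling proportions.

```python
from typing import List, Sequence, Tuple

Data = Sequence[Tuple[float, float]]  # (x, y) pairs


def loss(theta: float, data: Data) -> float:
    """Halved mean squared error of the scalar model y ~ theta * x."""
    return 0.5 * sum((theta * x - y) ** 2 for x, y in data) / len(data)


def grad(theta: float, data: Data) -> float:
    """Derivative of `loss` with respect to theta."""
    return sum((theta * x - y) * x for x, y in data) / len(data)


def vao_step(theta: float, weights: List[float], sources: List[Data], val: Data,
             lr: float = 0.1, meta_lr: float = 1.0) -> Tuple[float, List[float]]:
    """One weight update followed by one model update (hypothetical VAO-style step)."""
    grads = [grad(theta, d) for d in sources]
    # Inner lookahead: parameters after one step on the weighted source losses.
    theta_look = theta - lr * sum(w * g for w, g in zip(weights, grads))
    g_val = grad(theta_look, val)
    # Chain rule: dL_val/dw_i = g_val * d(theta_look)/dw_i = g_val * (-lr * g_i),
    # so a gradient-descent step on w_i adds meta_lr * lr * g_val * g_i.
    raw = [max(0.0, w + meta_lr * lr * g_val * g) for w, g in zip(weights, grads)]
    total = sum(raw)
    new_w = [w / total for w in raw] if total > 0 else [1.0 / len(raw)] * len(raw)
    # Outer update of the shared parameters with the refreshed weights.
    theta = theta - lr * sum(w * g for w, g in zip(new_w, grads))
    return theta, new_w


def make_task(slope: float) -> List[Tuple[float, float]]:
    """Noise-free toy task whose optimal parameter equals `slope`."""
    return [(x, slope * x) for x in (0.5, 1.0, 1.5, 2.0)]


# Hypothetical tasks: the target has slope 2.0, one source is similar (1.9),
# and one is large but mismatched (-1.0).
val = make_task(2.0)
sources = [make_task(1.9), make_task(-1.0)]

theta_vao, weights = 0.0, [0.1, 0.9]
theta_naive = 0.0
for _ in range(100):
    theta_vao, weights = vao_step(theta_vao, weights, sources, val)
    # Naive pooling: weights fixed at the data-volume proportions.
    theta_naive -= 0.1 * (0.1 * grad(theta_naive, sources[0])
                          + 0.9 * grad(theta_naive, sources[1]))

print([round(w, 3) for w in weights], round(theta_vao, 3))
print(round(loss(theta_vao, val), 4), round(loss(theta_naive, val), 4))
```

In this toy run the mismatched source’s weight is clipped to zero within the first two steps, θ settles at the similar source’s slope of 1.9, and the target validation loss ends far below that of the fixed naïve weights, which pull θ toward −0.71. The remaining gap to the target slope of 2.0 mirrors the residual Σ_i w_i d_TV(P_i, P_k) term.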

The paper also presents a unified generative auto‑bidding architecture that can serve multiple tasks simultaneously. The model encodes advertiser features, current state (budget, time left, consumption speed), and task‑specific conditioning vectors into a shared transformer encoder. Separate decoder heads generate trajectories for different KPI constraints (e.g., CPA, ROI, GMV). The VAO‑derived weights are applied to the loss of each task’s samples, allowing a single set of parameters to be updated in a way that respects the target‑aligned importance of each auxiliary dataset.
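In a shared‑parameter model, applying the weights amounts to scaling each sample’s loss by its source task’s weight before the backward pass. The sketch below assumes the per‑sample losses have already been produced by whichever decoder head serves each sample, and it normalizes by the total weight in the batch; that normalization, the function name, and the task names and values are hypothetical choices for illustration.

```python
from typing import Dict, Sequence, Tuple


def weighted_batch_loss(per_sample: Sequence[Tuple[str, float]],
                        task_weights: Dict[str, float]) -> float:
    """Weighted mean of per-sample losses, each scaled by its task's weight.

    `per_sample` holds (task_id, loss) pairs from a mixed-task batch; tasks
    missing from `task_weights` get weight 0 and are effectively dropped.
    """
    weighted_sum = sum(task_weights.get(task, 0.0) * loss_value
                       for task, loss_value in per_sample)
    weight_sum = sum(task_weights.get(task, 0.0) for task, _ in per_sample)
    return weighted_sum / weight_sum if weight_sum > 0 else 0.0


# Hypothetical mixed batch and task weights (e.g., weights learned by VAO).
batch = [("BCB", 0.8), ("BCB", 1.2), ("Target-ROAS", 0.4), ("CPA-MCB", 0.5)]
weights = {"BCB": 0.1, "Target-ROAS": 0.6, "CPA-MCB": 0.3}
print(round(weighted_batch_loss(batch, weights), 4))  # 0.5364
```

The unweighted mean of the same batch would be 0.725, dominated by the two high‑loss BCB samples. Dividing by the batch’s total weight keeps the loss scale stable when the task mix varies from batch to batch; dividing by the batch size instead is an equally plausible choice.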

Extensive experiments are conducted on both proprietary Alibaba logs and public auto‑bidding benchmarks covering three representative tasks: a high‑resource Budget‑Constrained Bidding (BCB) task, a data‑scarce Target‑ROAS task, and a CPA‑MCB task with very limited data. VAO is compared against (1) naïve data pooling, (2) standard multi‑task learning (MTL), (3) domain‑adaptation techniques that align feature distributions, and (4) classic transfer learning (pre‑training on the source, then fine‑tuning on the target). Results show that VAO consistently reduces the target task’s mean‑squared error by roughly 12% relative to naïve pooling and yields an additional 4–7% gain over the strongest MTL baselines. In the most data‑starved CPA‑MCB scenario, VAO improves ROI by more than 15% and cuts constraint‑violation rates by 30% compared with the best competing method. Analysis of the learned weights reveals that VAO automatically emphasizes the Target‑ROAS source (which shares similar ROI constraints) while suppressing the dominant BCB source, confirming the intended bias correction.

Theoretical contributions include a rigorous bound showing how VAO shrinks the distribution‑shift term, and an empirical demonstration that the bias term correlates with observed performance degradation in naïve sharing. The authors discuss practical considerations: the meta‑optimization overhead is modest because validation sets are small and can be reused across training epochs; the approach scales to many tasks because weight updates are simple scalar multiplications; and the unified architecture avoids the need for separate models per objective, saving memory and serving latency.

Limitations are acknowledged. VAO relies on a representative validation set for the target task, which may be unavailable or stale in fast‑changing advertising environments. The current implementation is batch‑oriented; extending it to truly online, low‑latency bidding pipelines would require incremental weight updates and possibly reinforcement‑learning‑style credit assignment. Moreover, the method assumes that trajectory logs are homogeneous; handling heterogeneous or partially observed logs (e.g., click‑stream data) remains an open question.

In conclusion, Validation‑Aligned Optimization provides a principled, theoretically justified, and empirically effective solution for cross‑task data sharing in generative auto‑bidding. By reweighting auxiliary data based on target‑task validation performance, VAO mitigates distribution‑shift bias, enables a single model to serve multiple bidding objectives, and delivers substantial performance gains in data‑scarce regimes. Future work may explore dynamic, online VAO, integration with non‑generative RL bidders, and broader applications beyond advertising where multi‑objective generative modeling under data imbalance is required.
