Botender: Supporting Communities in Collaboratively Designing AI Agents through Case-Based Provocations

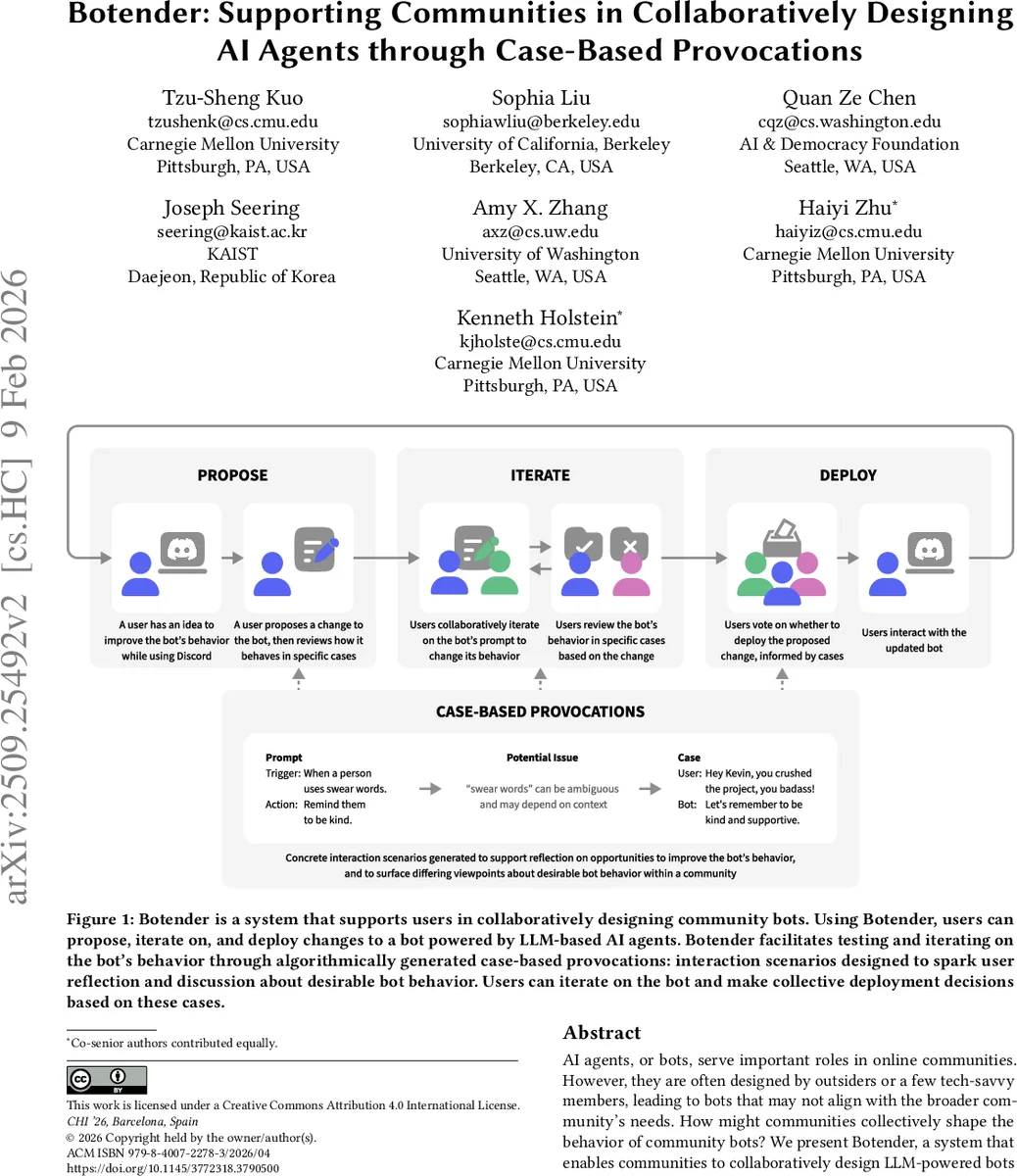

AI agents, or bots, serve important roles in online communities. However, they are often designed by outsiders or a few tech-savvy members, leading to bots that may not align with the broader community’s needs. How might communities collectively shape the behavior of community bots? We present Botender, a system that enables communities to collaboratively design LLM-powered bots without coding. With Botender, community members can directly propose, iterate on, and deploy custom bot behaviors tailored to community needs. Botender facilitates testing and iteration on bot behavior through case-based provocations: interaction scenarios generated to spark user reflection and discussion around desirable bot behavior. A validation study found these provocations more useful than standard test cases for revealing improvement opportunities and surfacing disagreements. During a five-day deployment across six Discord servers, Botender supported communities in tailoring bot behavior to their specific needs, showcasing the usefulness of case-based provocations in facilitating collaborative bot design.

💡 Research Summary

Botender is a novel system that enables online communities to collaboratively design, iterate on, and deploy large‑language‑model (LLM) powered bots without any programming. The authors identify a key problem: most community bots are created by external developers or a small group of technically skilled members, which often leads to misalignment with the broader community’s values, norms, and needs. To address this, Botender introduces a workflow centered on “case‑based provocations”: automatically generated interaction scenarios that expose how a proposed prompt (the textual specification of bot behavior) will actually behave across a variety of edge cases.

The workflow consists of three main stages. First, community members propose desired changes to a bot by writing or editing a natural‑language prompt. Second, Botender uses an LLM to generate a handful of concrete cases that probe the boundaries of the prompt, presenting the bot’s responses for each case. These provocations are displayed directly inside the community’s existing platform (the authors focus on Discord) so that users can discuss, critique, and suggest refinements. Third, after discussion, members vote on whether to deploy the updated prompt; deployment occurs once a predefined consensus threshold is met.
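The three stages above can be sketched as a small data model. This is only an illustrative sketch, not Botender's actual implementation: the names `Proposal`, `Provocation`, `generate_provocations`, and `ready_to_deploy` are hypothetical, the `respond` callable stands in for an LLM call, and the threshold and minimum vote count are assumed values, since the summary does not state the real consensus rule.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Provocation:
    scenario: str       # hypothetical message that probes an edge of the prompt
    bot_response: str   # how the bot would answer under the proposed prompt


@dataclass
class Proposal:
    prompt: str                                            # stage 1: natural-language prompt
    provocations: List[Provocation] = field(default_factory=list)
    votes: Dict[str, bool] = field(default_factory=dict)   # member id -> approve?

    def vote(self, member: str, approve: bool) -> None:
        # A member's latest vote replaces any earlier one.
        self.votes[member] = approve

    def approval_ratio(self) -> float:
        if not self.votes:
            return 0.0
        return sum(self.votes.values()) / len(self.votes)


def generate_provocations(
    prompt: str,
    scenarios: List[str],
    respond: Callable[[str, str], str],
) -> List[Provocation]:
    # Stage 2: pair each scenario with the bot's response under the prompt.
    # `respond(prompt, scenario)` is a placeholder for a real LLM call.
    return [Provocation(s, respond(prompt, s)) for s in scenarios]


def ready_to_deploy(
    proposal: Proposal,
    threshold: float = 0.6,   # assumed value, not from the paper
    min_votes: int = 3,       # assumed value, not from the paper
) -> bool:
    # Stage 3: deploy only once enough members voted and approval is high enough.
    return (
        len(proposal.votes) >= min_votes
        and proposal.approval_ratio() >= threshold
    )
```

Keeping the response generator as an injected callable means the voting logic can be exercised with a stub, without any model access.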

Two empirical evaluations support the design. In an online experiment with 120 participants, the case‑based provocation condition yielded 1.8× more identified improvement opportunities and 2.3× more instances of participants surfacing disagreements about desired bot behavior compared with a baseline that used generic test cases. A subsequent five‑day field deployment across six real Discord servers (each with 200–800 active members) demonstrated that communities could successfully propose, iterate, and deploy an average of 3.4 prompts per server, completing roughly 2.7 design cycles per prompt. Survey responses indicated that 84 % of participants felt the provocations helped them notice problems they would otherwise have missed, and non‑technical users reported meaningful participation in prompt design.

The paper also discusses limitations. Generating provocations incurs LLM inference costs, which may be prohibitive at larger scale. The quality of automatically generated cases varies with prompt complexity, suggesting a need for quality‑control mechanisms. Conflict resolution when multiple users submit contradictory prompts is not fully automated, requiring structured discussion or mediation. Finally, the current implementation is Discord‑specific, leaving open the challenge of adapting Botender to other platforms such as Slack, Reddit, or Microsoft Teams.

Future work directions include developing meta‑models to predict and improve provocation quality, designing negotiation interfaces for prompt conflicts, implementing caching or batch generation to reduce costs, extending the system to multiple platforms, and building longitudinal analytics to track how bot behavior evolves under community governance.
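As one possible reading of the caching direction, the sketch below memoizes generated provocations by a hash of the prompt text, so that re-testing an unchanged prompt does not trigger another LLM call. The class `ProvocationCache` and its `generate` callable are hypothetical and are not described in the paper.

```python
import hashlib
from typing import Callable, Dict, List


class ProvocationCache:
    """Memoize provocation generation per prompt (illustrative only)."""

    def __init__(self, generate: Callable[[str], List[str]]) -> None:
        self._generate = generate          # placeholder for the costly LLM call
        self._store: Dict[str, List[str]] = {}
        self.misses = 0                    # number of times the generator ran

    @staticmethod
    def _key(prompt: str) -> str:
        # Collapse whitespace so trivially reformatted prompts share one entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, prompt: str) -> List[str]:
        key = self._key(prompt)
        if key not in self._store:
            self.misses += 1
            self._store[key] = self._generate(prompt)
        return self._store[key]
```

A cache like this only helps when the same prompt is tested repeatedly; any substantive edit to the prompt produces a new key and a fresh generation.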

In sum, Botender demonstrates that case‑based provocations can effectively scaffold community‑driven AI design, allowing non‑experts to collaboratively shape LLM‑powered bots in ways that align with collective values. This contribution advances research at the intersection of human‑centered AI, collaborative design, and AI governance, offering a concrete tool for participatory bot development.
