Lifelong Learning with Behavior Consolidation for Vehicle Routing

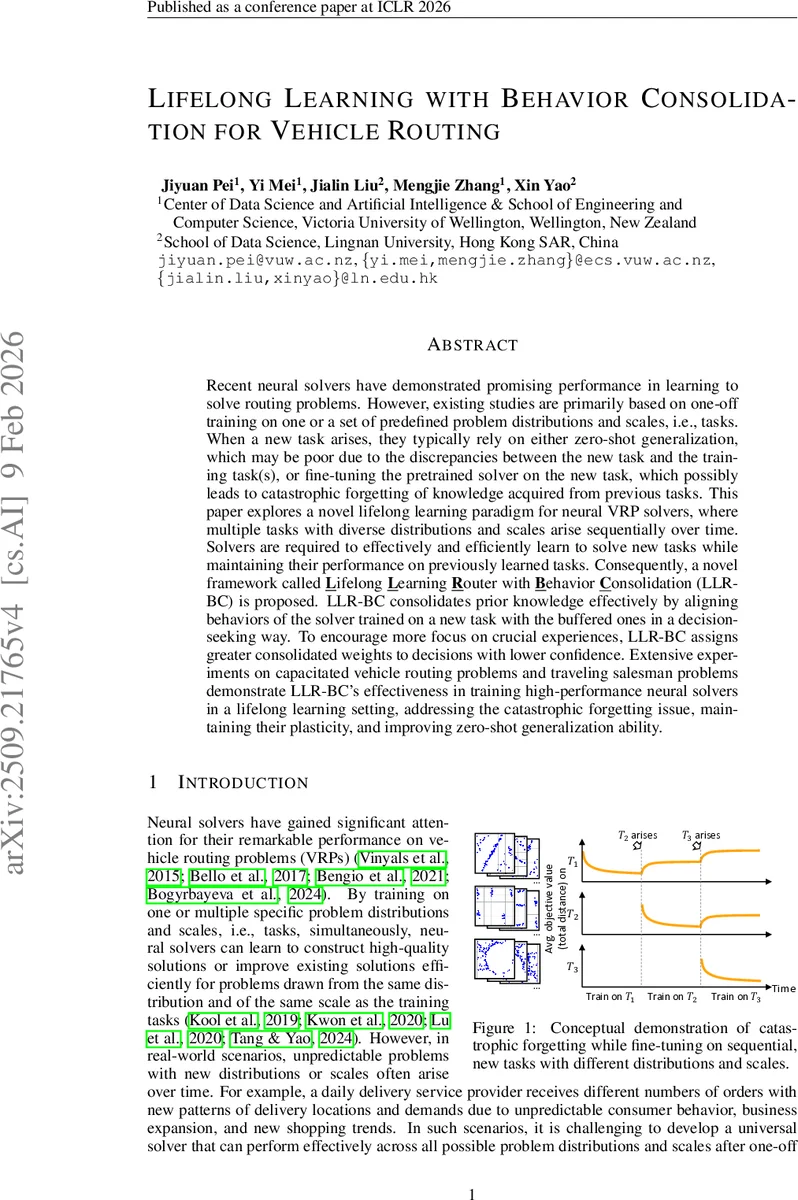

Recent neural solvers have demonstrated promising performance in learning to solve routing problems. However, existing studies are primarily based on one-off training on one or a set of predefined problem distributions and scales, i.e., tasks. When a new task arises, they typically rely on either zero-shot generalization, which may be poor due to the discrepancies between the new task and the training task(s), or fine-tuning the pretrained solver on the new task, which possibly leads to catastrophic forgetting of knowledge acquired from previous tasks. This paper explores a novel lifelong learning paradigm for neural VRP solvers, where multiple tasks with diverse distributions and scales arise sequentially over time. Solvers are required to effectively and efficiently learn to solve new tasks while maintaining their performance on previously learned tasks. Consequently, a novel framework called Lifelong Learning Router with Behavior Consolidation (LLR-BC) is proposed. LLR-BC consolidates prior knowledge effectively by aligning behaviors of the solver trained on a new task with the buffered ones in a decision-seeking way. To encourage more focus on crucial experiences, LLR-BC assigns greater consolidated weights to decisions with lower confidence. Extensive experiments on capacitated vehicle routing problems and traveling salesman problems demonstrate LLR-BC’s effectiveness in training high-performance neural solvers in a lifelong learning setting, addressing the catastrophic forgetting issue, maintaining their plasticity, and improving zero-shot generalization ability.

💡 Research Summary

This paper addresses a fundamental limitation of current neural solvers for vehicle routing problems (VRPs): they are typically trained once on a fixed set of problem distributions and scales, and when a new task appears they either rely on zero‑shot generalization, which often fails when the new task differs substantially, or they fine‑tune on the new task, which leads to catastrophic forgetting of previously learned tasks. To overcome this, the authors propose a lifelong‑learning framework called Lifelong Learning Router with Behavior Consolidation (LLR‑BC) that enables a neural VRP solver to continuously acquire knowledge from a stream of sequentially arriving tasks while preserving performance on all earlier tasks.

The core of LLR‑BC is an experience‑replay mechanism enriched with two novel components:

-

Confidence‑aware Experience Weighting (CaEW).

Each stored experience consists of a state (partial solution) and the solver’s behavior – the full probability distribution over the next node. The authors compute a confidence measure (e.g., entropy or variance) of this distribution. Experiences with low confidence (high uncertainty) receive larger consolidation weights because such decisions are more vulnerable to change during model updates and have a disproportionate impact on final route quality. This weighting focuses the replay on the most “crucial” experiences. -

Decision‑seeking Behavior Consolidation (DsBC).

Instead of regularizing parameters, DsBC directly aligns the current policy with the buffered behavior by minimizing a reverse Kullback–Leibler (KL) divergence. The reverse KL forces the current policy to mimic the past policy, especially on low‑confidence decisions, thereby preserving the actual decision‑making behavior rather than just the parameter values. The loss is weighted by CaEW, so the most uncertain past decisions are reinforced most strongly.

The experience buffer is maintained using reservoir sampling, guaranteeing that every experience collected over time has an equal chance of being stored despite a fixed memory budget. Experiences are stored at a fine granularity (state‑action probability pairs) rather than whole problem instances, which allows the model to rehearse specific decision points that are critical for route construction.

Training proceeds as follows: for each new task, the solver is trained on fresh instances using a standard reinforcement‑learning objective (e.g., REINFORCE or PPO). Simultaneously, a minibatch of buffered experiences is sampled, weighted by CaEW, and the DsBC loss is computed. The total loss is the sum of the task‑specific reward loss and the weighted behavior‑consolidation loss. This joint optimization enables the model to learn the new task (plasticity) while retaining the behavior learned on previous tasks (stability).

The authors evaluate LLR‑BC on two canonical routing domains—Capacitated Vehicle Routing Problem (CVRP) and Traveling Salesman Problem (TSP)—across multiple scales (100, 200, 500 nodes) and diverse distributions (uniform, clustered, real‑world demand patterns). They integrate LLR‑BC with two state‑of‑the‑art neural construction solvers, POMO and INVIT, demonstrating that the framework is architecture‑agnostic. Baselines include plain fine‑tuning, simple experience replay without weighting, and recent lifelong‑learning methods limited to scale‑only variations.

Key findings:

- Catastrophic forgetting is effectively eliminated. After training on a new task, LLR‑BC maintains the average optimality gap on all previously learned tasks within 1–2 %, whereas fine‑tuning can degrade performance by more than 10 %.

- Plasticity is preserved. Convergence speed on the new task is comparable to or slightly faster than fine‑tuning, showing that the additional consolidation loss does not hinder learning.

- Zero‑shot generalization improves. When evaluated on unseen distributions or scales, models trained with LLR‑BC achieve 3–4 % lower gaps than baselines, highlighting the benefit of accumulating transferable knowledge.

- Memory efficiency. A buffer sized at only 5 % of the total experiences (≈10 k entries) suffices; reservoir sampling ensures older tasks remain represented without explicit task identifiers.

- Model‑independence. Both POMO and INVIT exhibit similar gains, confirming that LLR‑BC can be plugged into any constructive neural VRP solver.

The paper also provides an ablation study showing that removing CaEW reduces performance, especially on large‑scale TSP instances where low‑confidence decisions dominate. Likewise, replacing reverse KL with standard KL or L2 regularization leads to higher forgetting, underscoring the importance of behavior‑level consolidation.

In discussion, the authors acknowledge that the choice of confidence metric (entropy vs. variance) and buffer size may need tuning for different domains, and that the current approach is tied to reinforcement‑learning based construction methods. Extending LLR‑BC to improvement‑type solvers or to other combinatorial problems (e.g., multi‑objective routing) is left for future work.

Overall, LLR‑BC offers a principled, scalable, and empirically validated solution for lifelong learning in neural vehicle routing, combining confidence‑driven experience weighting with decision‑focused behavior consolidation to achieve a rare balance of stability and plasticity. This work paves the way for deploying adaptive, continuously learning routing systems in real‑world logistics where demand patterns and problem scales evolve over time.

Comments & Academic Discussion

Loading comments...

Leave a Comment