VIMD: Monocular Visual-Inertial Motion and Depth Estimation

Accurate and efficient dense metric depth estimation is crucial for 3D visual perception in robotics and XR. In this paper, we develop a monocular visual-inertial motion and depth (VIMD) learning framework to estimate dense metric depth by leveraging accurate and efficient MSCKF-based monocular visual-inertial motion tracking. At the core the proposed VIMD is to exploit multi-view information to iteratively refine per-pixel scale, instead of globally fitting an invariant affine model as in the prior work. The VIMD framework is highly modular, making it compatible with a variety of existing depth estimation backbones. We conduct extensive evaluations on the TartanAir and VOID datasets and demonstrate its zero-shot generalization capabilities on the AR Table dataset. Our results show that VIMD achieves exceptional accuracy and robustness, even with extremely sparse points as few as 10-20 metric depth points per image. This makes the proposed VIMD a practical solution for deployment in resource constrained settings, while its robust performance and strong generalization capabilities offer significant potential across a wide range of scenarios.

💡 Research Summary

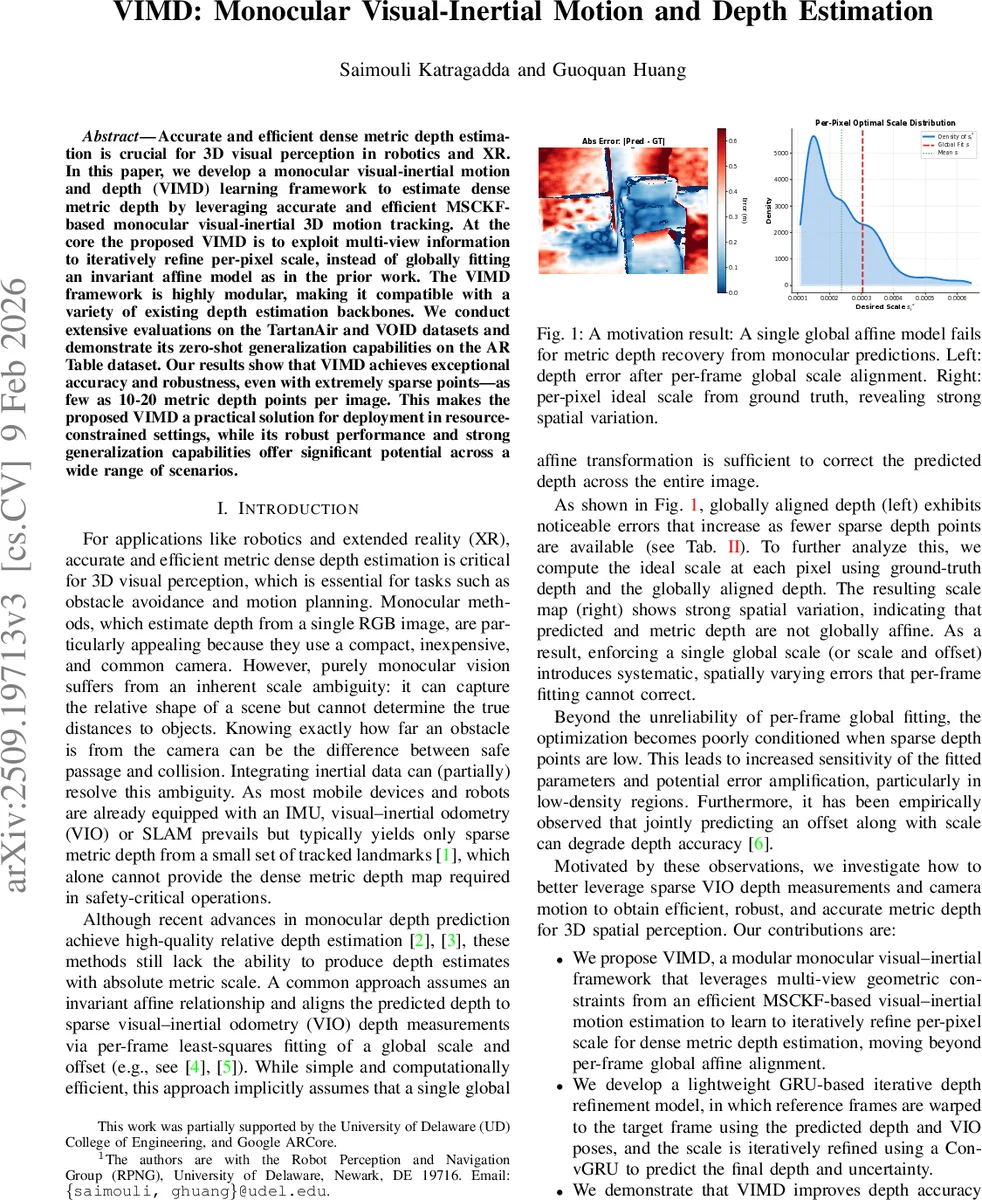

The paper introduces VIMD (Visual‑Inertial Motion and Depth), a modular learning framework that produces dense metric depth from a single RGB camera and an IMU. Traditional monocular depth networks output only relative depth, and a common remedy is to align the prediction to sparse metric points from visual‑inertial odometry (VIO) using a global affine transformation (scale + offset). The authors demonstrate that this global model is insufficient because the optimal per‑pixel scale varies strongly across the image, especially when the number of sparse points is very low (10‑20 points per frame).

VIMD addresses this limitation with two key components. First, a scale‑map scaffold is built from the sparse metric points produced by an MSCKF‑based VIO. For each sparse point, a local scale factor σ_j is computed as the ratio between the globally aligned inverse depth and the network’s inverse depth prediction. These σ_j values are interpolated across the image using linear scattered interpolation, yielding a dense per‑pixel prior S(u,v) that is fed to the depth refinement network. This prior compensates for local residual errors that remain after the initial global alignment (GA).

Second, an Iterative Refined Metric Depth Module refines the depth estimate using multi‑view geometric consistency. A lightweight ResNet‑18 extracts features from a target frame I_t and two reference frames I_r. Using the current depth hypothesis Z_k^inv, each reference feature map is warped into the target view via the known VIO poses and camera intrinsics. Cosine similarity between warped and target features produces a cost map c. The cost and the current depth are separately projected through convolutional streams, concatenated with the context features derived from the scale‑map, and fed into a separable ConvGRU. The GRU updates its hidden state, from which a multiplicative scale correction Δs_k and a log‑variance log σ_k^2 are predicted. The depth is updated as Z_{k+1}^inv = clamp(Z_k^inv ⊙ Δs_k) and the process repeats three times. The final inverse depth is up‑sampled to full resolution, and an uncertainty map is output alongside the depth.

Training supervision uses multi‑scale metric inverse depth and an uncertainty‑weighted Laplace negative log‑likelihood: L_i = |D_i − D*| · b_i + log b_i, where b_i = exp(log σ_i^2 + ε). Losses from coarse to fine scales are exponentially weighted (γ = 0.85). Depth predictions are clamped to a valid range (0.1 m – 8 m) to avoid extreme outliers.

Experiments on the TartanAir and VOID datasets compare VIMD against state‑of‑the‑art methods such as VI‑Depth, VOICED, and KBNet. Using only 150 sparse depth points per image, VIMD achieves RMSE = 155.23 mm, MAE = 88.42 mm, iRMSE = 67.21 km⁻¹, and iMAE = 40.18 km⁻¹, outperforming all baselines by 10‑30 % across metrics. When the number of sparse points is reduced to 10‑20, VIMD’s performance degrades only marginally, whereas global‑affine methods suffer severe accuracy loss. Qualitative results show sharp, low‑noise metric depth maps in both indoor and outdoor scenes. Zero‑shot generalization is demonstrated on the AR Table dataset, where VIMD, without any fine‑tuning, still produces accurate depth at real‑time speed (~30 fps on an NVIDIA A4500).

The contributions are threefold: (1) exposing the spatial variability of optimal scale and replacing a global affine fit with a per‑pixel scale‑map scaffold; (2) designing a lightweight ConvGRU‑based iterative refinement that leverages multi‑view consistency and provides per‑pixel uncertainty; (3) delivering a modular pipeline that can be combined with any monocular depth backbone and runs in real time.

Limitations include the assumption of synchronized IMU‑camera data and reliance on the accuracy of the underlying VIO. In highly dynamic scenes or under rapid motion, VIO errors could propagate into the depth refinement. Future work is suggested on asynchronous sensor calibration, dynamic object masking, and extending the iterative scheme to longer temporal windows for even more robust scale recovery. Overall, VIMD represents a significant step toward practical, dense metric depth estimation for resource‑constrained robotics and extended‑reality applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment