CoBEVMoE: Heterogeneity-aware Feature Fusion with Dynamic Mixture-of-Experts for Collaborative Perception

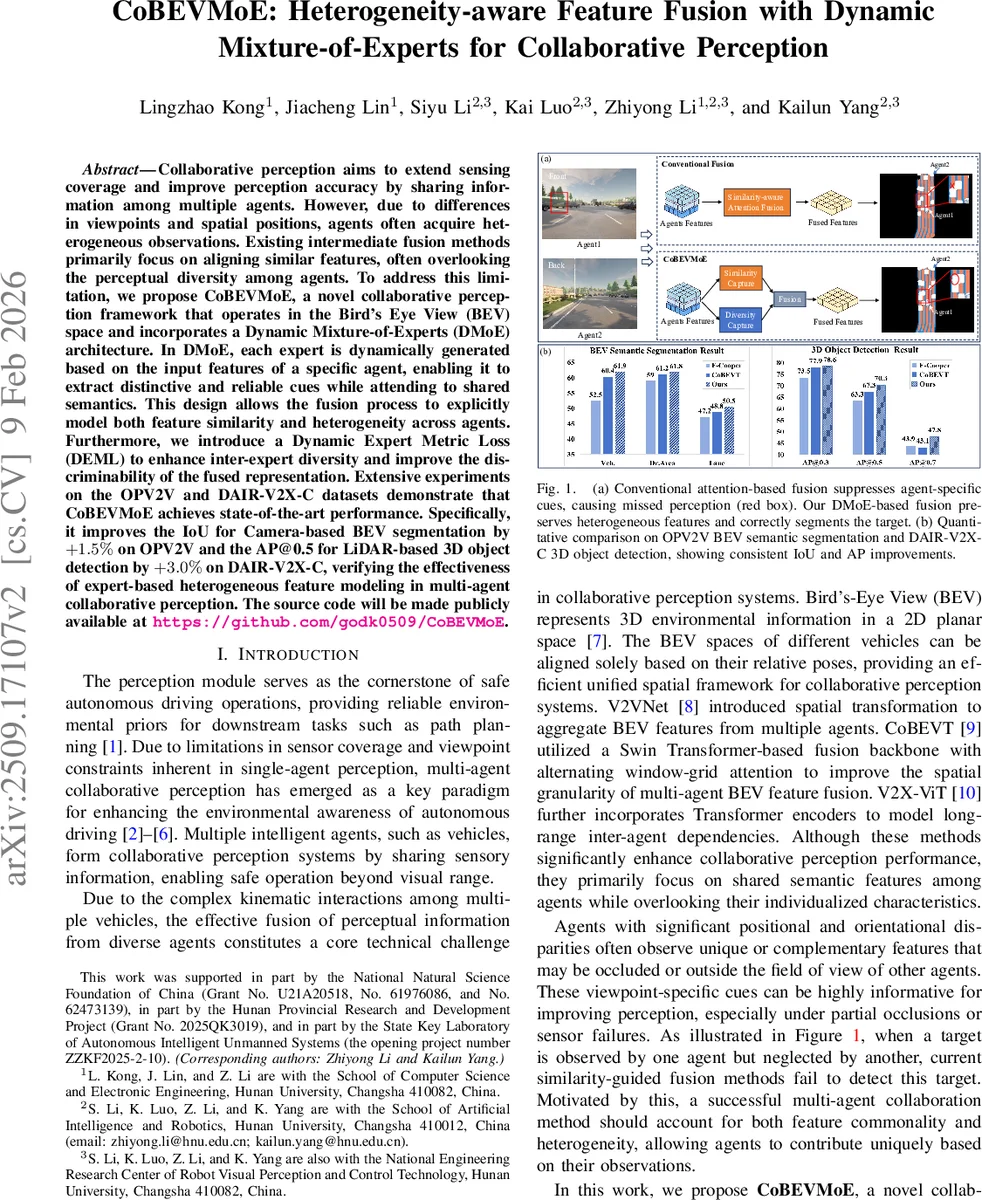

Collaborative perception aims to extend sensing coverage and improve perception accuracy by sharing information among multiple agents. However, due to differences in viewpoints and spatial positions, agents often acquire heterogeneous observations. Existing intermediate fusion methods primarily focus on aligning similar features, often overlooking the perceptual diversity among agents. To address this limitation, we propose CoBEVMoE, a novel collaborative perception framework that operates in the Bird’s Eye View (BEV) space and incorporates a Dynamic Mixture-of-Experts (DMoE) architecture. In DMoE, each expert is dynamically generated based on the input features of a specific agent, enabling it to extract distinctive and reliable cues while attending to shared semantics. This design allows the fusion process to explicitly model both feature similarity and heterogeneity across agents. Furthermore, we introduce a Dynamic Expert Metric Loss (DEML) to enhance inter-expert diversity and improve the discriminability of the fused representation. Extensive experiments on the OPV2V and DAIR-V2X-C datasets demonstrate that CoBEVMoE achieves state-of-the-art performance. Specifically, it improves the IoU for Camera-based BEV segmentation by +1.5% on OPV2V and the AP@0.5 for LiDAR-based 3D object detection by +3.0% on DAIR-V2X-C, verifying the effectiveness of expert-based heterogeneous feature modeling in multi-agent collaborative perception. The source code will be made publicly available at https://github.com/godk0509/CoBEVMoE.

💡 Research Summary

Collaborative perception seeks to overcome the limited field‑of‑view and occlusion problems of single‑vehicle perception by sharing sensor information among multiple agents. Existing intermediate‑fusion approaches mainly align and aggregate similar semantic features, which often suppresses agent‑specific cues that arise from different viewpoints and positions. The paper “CoBEVMoE: Heterogeneity‑aware Feature Fusion with Dynamic Mixture‑of‑Experts for Collaborative Perception” introduces a novel framework that explicitly models both similarity and heterogeneity in the Bird’s‑Eye‑View (BEV) space.

The core of CoBEVMoE is a Dynamic Mixture‑of‑Experts (DMoE) module. For each collaborating vehicle, a dedicated expert is generated on‑the‑fly from that vehicle’s BEV feature map. The generation pipeline consists of global average pooling, a lightweight MLP, and a deconvolution block that outputs a 3×3 convolution kernel. This kernel parameterizes a dynamic convolutional layer that processes the vehicle’s feature map, thereby extracting viewpoint‑specific patterns. All experts’ outputs are combined by a gating network that takes the globally pooled fused feature, passes it through a softmax, and multiplies by a binary mask indicating communication availability. The gating weights thus reflect both the reliability of each agent and the complementarity of its contribution.
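The gating step described above can be illustrated with a minimal sketch. It assumes the gating network has already produced one logit per agent and that each expert output is a flattened feature vector; the names `masked_gate`, `fuse`, `gate_logits`, and `comm_mask` are hypothetical and do not come from the paper's code. The summary does not say whether the weights are renormalized after masking, so the sketch leaves them as softmax-then-mask.

```python
import math

def masked_gate(logits, mask):
    """Softmax over per-agent gate logits, then zero out unavailable agents."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    # Assumption: no renormalization after masking (not specified in the text).
    return [m * e / total for e, m in zip(exps, mask)]

def fuse(expert_outputs, weights):
    """Weighted sum of per-agent expert outputs (flattened feature vectors)."""
    dim = len(expert_outputs[0])
    return [sum(w * out[d] for w, out in zip(weights, expert_outputs))
            for d in range(dim)]

# Three agents; the third is out of communication range (mask = 0).
gate_logits = [2.0, 1.0, 0.5]   # hypothetical gating-network outputs
comm_mask = [1, 1, 0]           # binary communication-availability mask
expert_outputs = [[1.0, 0.0], [0.0, 1.0], [5.0, 5.0]]

weights = masked_gate(gate_logits, comm_mask)
fused = fuse(expert_outputs, weights)
```

Here the third agent's expert output has no influence on `fused`, however large its features are, because its gate weight is zeroed by the mask.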

To prevent the experts from collapsing into redundant representations, the authors propose a Dynamic Expert Metric Loss (DEML). DEML contains two terms: (1) a distance loss that pulls each expert’s output toward the final fused representation, ensuring that each expert remains relevant to the overall scene; (2) a contrastive term that pushes different experts apart (e.g., via a cosine‑margin loss), encouraging diversity. The total training objective combines the task‑specific loss (semantic segmentation cross‑entropy or 3D detection regression) with DEML, balancing accuracy and expert heterogeneity.
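One possible shape for such a loss is sketched below. The exact formulation is not given in this summary, so the squared Euclidean distance, the hinge on cosine similarity, the `margin` value, and the weighting factor `lam` are all assumptions chosen for illustration; the function names are hypothetical.

```python
import math

def cosine(u, v):
    """Cosine similarity between two flattened feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / (norm + 1e-12)

def deml(experts, fused, margin=0.5):
    """Distance term (experts toward fused) plus a cosine-margin diversity term."""
    # Pull: mean squared distance between each expert output and the fused feature.
    pull = sum(sum((e - f) ** 2 for e, f in zip(ex, fused))
               for ex in experts) / len(experts)
    # Push: penalize expert pairs whose cosine similarity exceeds the margin.
    pairs = [(i, j) for i in range(len(experts))
             for j in range(i + 1, len(experts))]
    push = sum(max(0.0, cosine(experts[i], experts[j]) - margin)
               for i, j in pairs)
    push /= max(len(pairs), 1)
    return pull + push

def total_loss(task_loss, experts, fused, lam=0.1):
    """Task loss plus weighted DEML; lam is an assumed balancing weight."""
    return task_loss + lam * deml(experts, fused)

# Orthogonal experts incur no diversity penalty; identical experts do.
diverse = deml([[1.0, 0.0], [0.0, 1.0]], fused=[0.5, 0.5])
collapsed = deml([[1.0, 0.0], [1.0, 0.0]], fused=[1.0, 0.0])
```

In this sketch the two terms pull in opposite directions: the distance term alone would favor identical experts, and the margin term is what keeps them from collapsing.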

Experiments are conducted on two large‑scale V2X datasets. On OPV2V (camera‑based BEV semantic segmentation), CoBEVMoE improves IoU by 1.5% over previous state‑of‑the‑art methods such as CoBEVT and AttFusion. On DAIR‑V2X‑C (LiDAR‑based 3D object detection), it raises AP@0.5 by 3.0%. Ablation studies show that removing either DMoE or DEML degrades performance, indicating that both components contribute to the gains. Qualitative visualizations illustrate cases where a target is visible to only one vehicle: similarity‑focused fusion misses it, while CoBEVMoE correctly detects and segments it, demonstrating the benefit of preserving heterogeneous cues.

The paper’s contributions are: (1) reframing collaborative perception to explicitly consider agent‑specific diversity; (2) designing a dynamic expert generation mechanism that conditions expert parameters on each agent’s local features; (3) introducing DEML to enforce inter‑expert diversity while maintaining coherence with the fused representation; (4) achieving consistent gains across modalities and tasks on the OPV2V and DAIR‑V2X‑C benchmarks.

Limitations include the linear scaling of expert count with the number of agents, which may become computationally expensive in dense traffic scenarios, and the reliance on a centralized aggregator, which may be vulnerable to communication delays or packet loss in real V2X networks. Future work could explore parameter sharing, expert pruning, sparse routing, and fully decentralized DMoE architectures to improve scalability and robustness. Overall, CoBEVMoE presents a compelling approach to harnessing both common and complementary information in multi‑agent perception, pushing the frontier of collaborative autonomous driving.
