WeTok: Powerful Discrete Tokenization for High-Fidelity Visual Reconstruction

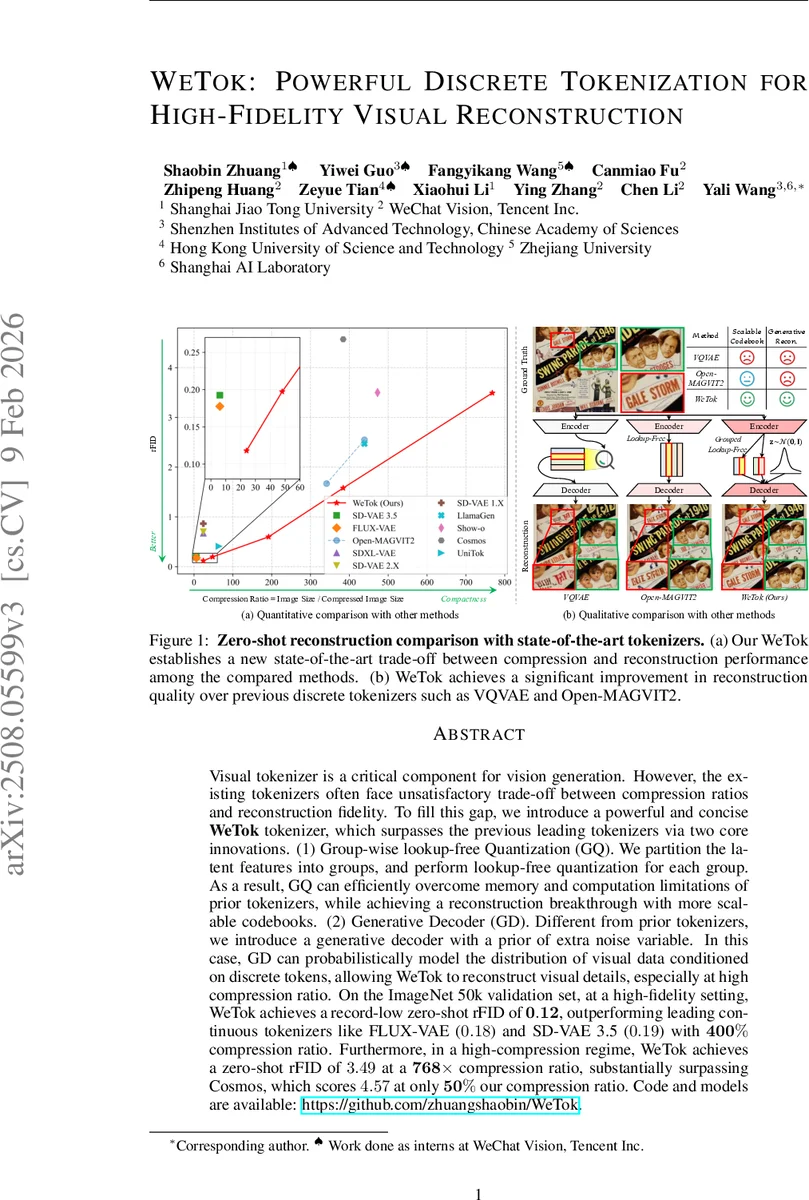

Visual tokenizer is a critical component for vision generation. However, the existing tokenizers often face unsatisfactory trade-off between compression ratios and reconstruction fidelity. To fill this gap, we introduce a powerful and concise WeTok tokenizer, which surpasses the previous leading tokenizers via two core innovations. (1) Group-wise lookup-free Quantization (GQ). We partition the latent features into groups, and perform lookup-free quantization for each group. As a result, GQ can efficiently overcome memory and computation limitations of prior tokenizers, while achieving a reconstruction breakthrough with more scalable codebooks. (2) Generative Decoder (GD). Different from prior tokenizers, we introduce a generative decoder with a prior of extra noise variable. In this case, GD can probabilistically model the distribution of visual data conditioned on discrete tokens, allowing WeTok to reconstruct visual details, especially at high compression ratio. On the ImageNet 50k validation set, at a high-fidelity setting, WeTok achieves a record-low zero-shot rFID of 0.12, outperforming leading continuous tokenizers like FLUX-VAE (0.18) and SD-VAE 3.5 (0.19) with 400% compression ratio. Furthermore, in a high-compression regime, WeTok achieves a zero-shot rFID of 3.49 at a 768$\times$ compression ratio, substantially surpassing Cosmos, which scores 4.57 at only 50% our compression ratio. Code and models are available: https://github.com/zhuangshaobin/WeTok.

💡 Research Summary

WeTok introduces a novel discrete visual tokenizer that dramatically improves the trade‑off between compression ratio and reconstruction fidelity. The first innovation, Group‑wise Lookup‑Free Quantization (GQ), partitions the latent feature map along the channel dimension into g groups and applies a fixed binary codebook (‑1, +1) to each group. By reformulating the token and codebook entropy losses as sums over the groups, GQ eliminates the memory explosion associated with traditional lookup‑free quantization (LFQ) while preserving or even reducing approximation error compared to Binary Spherical Quantization (BSQ). This design enables virtually unlimited codebook scaling with constant memory usage, as demonstrated by ablations showing that increasing g improves reconstruction quality without OOM failures.

The second innovation, the Generative Decoder (GD), augments the deterministic decoder with a Gaussian noise vector z sampled from 𝒩(0, I). By concatenating z with the quantized latent U_Q, the decoder becomes a conditional generative model that can sample diverse high‑frequency details from the distribution of possible images corresponding to a highly compressed token. Training proceeds in two stages: (1) a standard reconstruction phase using L2, LPIPS, GAN, and GQ losses, and (2) a fine‑tuning phase where the decoder’s channel dimension is expanded and the new channels are zero‑initialized to accept z, ensuring a smooth transition from deterministic to stochastic behavior.

Extensive experiments on ImageNet‑50k validate the approach. At a 400 % compression ratio, WeTok achieves a record‑low zero‑shot rFID of 0.12, outperforming continuous tokenizers FLUX‑VAE (0.18) and SD‑VAE 3.5 (0.19). In an extreme 768× compression regime, it records rFID 3.49 versus Cosmos’s 4.57, despite Cosmos operating at only half the compression rate. Memory profiling shows GQ consistently uses ~10.5 GB across codebook dimensions (d = 8–40), whereas LFQ and BSQ quickly run out of memory at higher dimensions. Ablation studies confirm that the number of groups, base channel width (C = 256), and residual block count (B = 4) are critical hyper‑parameters for optimal performance. Pre‑training on a 400 M general‑domain dataset yields models with strong generalization (higher PSNR/SSIM) while still fitting the target distribution well.

In summary, WeTok’s combination of scalable, memory‑efficient group‑wise quantization and a conditional generative decoder establishes a new state‑of‑the‑art for discrete visual tokenization, enabling high‑compression, high‑fidelity image reconstruction and opening avenues for more efficient latent‑space vision generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment