Can AR Embedded Visualizations Foster Appropriate Reliance on AI in Spatial Decision-Making? A Comparative Study of AR X-Ray vs. 2D Minimap

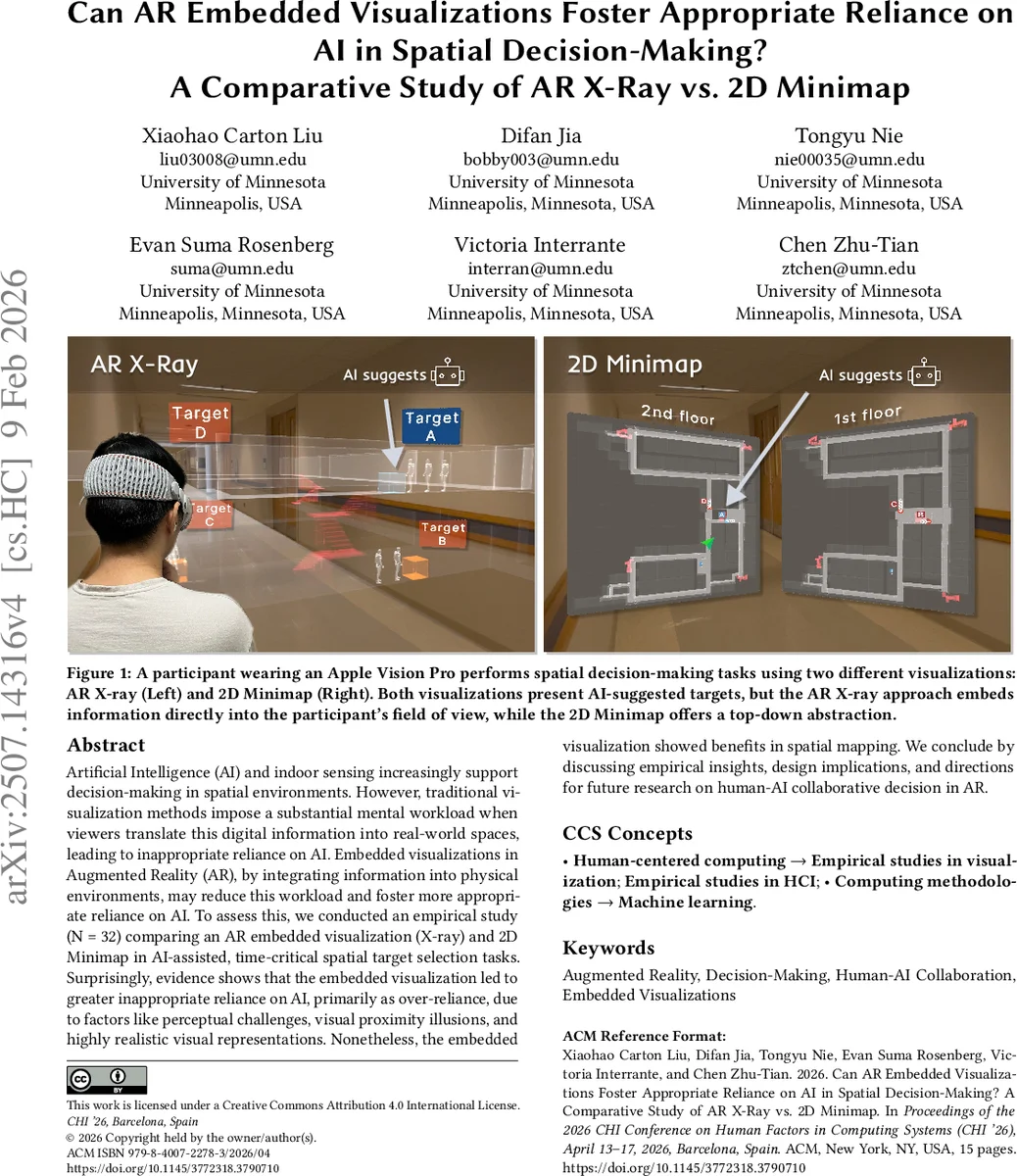

Artificial Intelligence (AI) and indoor sensing increasingly support decision-making in spatial environments. However, traditional visualization methods impose a substantial mental workload when viewers translate this digital information into real-world spaces, leading to inappropriate reliance on AI. Embedded visualizations in Augmented Reality (AR), by integrating information into physical environments, may reduce this workload and foster more appropriate reliance on AI. To assess this, we conducted an empirical study (N = 32) comparing an AR embedded visualization (X-ray) and a 2D Minimap in AI-assisted, time-critical spatial target selection tasks. Surprisingly, evidence shows that the embedded visualization led to greater inappropriate reliance on AI, primarily as over-reliance, due to factors like perceptual challenges, visual proximity illusions, and highly realistic visual representations. Nonetheless, the embedded visualization demonstrated benefits in spatial mapping. We conclude by discussing empirical insights, design implications, and directions for future research on human-AI collaborative decision-making in AR.

💡 Research Summary

The paper investigates whether embedding AI‑generated information directly into the physical environment via augmented reality (AR) can promote appropriate reliance on AI during time‑critical spatial decision‑making. The authors compare two visualization approaches: an AR “X‑ray” view that overlays AI‑suggested targets onto the real‑world scene (an embedded visualization) and a conventional 2D minimap displayed on a separate panel (a situated visualization). Thirty‑two participants performed a target‑selection task in a two‑floor indoor setting while wearing an Apple Vision Pro headset. An AI model with 75 % accuracy suggested the optimal target among four possibilities; participants had to choose a target within a strict time limit.

Quantitative results show that the X‑ray condition slightly improves spatial mapping efficiency (faster response times) but paradoxically increases inappropriate reliance on the AI. When the AI suggestion was correct, participants accepted it 92 % of the time in the X‑ray condition versus 78 % with the minimap. More critically, when the AI suggestion was wrong, participants still followed it 68 % of the time with X‑ray, compared to only 42 % with the minimap. This pattern indicates a pronounced over‑reliance effect in the embedded visualization. Overall decision accuracy did not differ significantly between conditions, suggesting that the higher reliance did not translate into better performance.
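The reliance measures in the paragraph above can be made concrete with a small sketch. It assumes a hypothetical trial log in which each trial records whether the AI suggestion was correct and whether the participant followed it; the `Trial` type, its field names, and both functions are illustrative and do not come from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    """One hypothetical trial record (field names are assumptions)."""
    ai_correct: bool   # was the AI's suggested target the optimal one?
    followed_ai: bool  # did the participant select the suggested target?


def reliance_rates(trials):
    """Return (acceptance rate when the AI is correct,
    over-reliance rate when the AI is wrong)."""
    correct = [t for t in trials if t.ai_correct]
    wrong = [t for t in trials if not t.ai_correct]
    if not correct or not wrong:
        raise ValueError("need at least one correct-AI and one wrong-AI trial")
    accept_correct = sum(t.followed_ai for t in correct) / len(correct)
    over_reliance = sum(t.followed_ai for t in wrong) / len(wrong)
    return accept_correct, over_reliance


def overall_agreement(ai_accuracy, accept_correct, over_reliance):
    """Share of all trials in which the participant followed the AI,
    weighting the two conditional rates by how often the AI is right."""
    return ai_accuracy * accept_correct + (1 - ai_accuracy) * over_reliance
```

Plugging in the rates quoted above, and assuming the 75 % AI accuracy applies equally in both conditions, `overall_agreement(0.75, 0.92, 0.68)` gives roughly 0.86 for X-ray and `overall_agreement(0.75, 0.78, 0.42)` gives roughly 0.69 for the minimap. These agreement figures are derived arithmetic, not values reported in the summary.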

Qualitative interviews reveal three primary mechanisms behind the over‑reliance observed with the X‑ray view. First, the visual proximity illusion makes the overlaid digital cues appear physically attached to real objects, leading users to treat them as trustworthy environmental features. Second, the high realism and seamless integration of the X‑ray rendering boost perceived credibility of the AI, creating a “real‑world” bias. Third, AR’s limited field‑of‑view and depth‑perception ambiguities increase cognitive load in subtle ways, prompting users to rely on fast, intuitive (System 1) judgments rather than deliberate (System 2) evaluation of the AI’s suggestion.

The authors argue that these findings challenge the intuitive assumption that embedding information in AR automatically reduces mental effort and fosters more critical AI use. While AR improves spatial cognition and egocentric awareness, it simultaneously introduces perceptual and trust‑related biases that can miscalibrate reliance.

Design implications are proposed: (1) mitigate visual proximity by adjusting opacity, distance‑based color scaling, or adding a virtual buffer zone; (2) surface AI uncertainty (e.g., confidence scores, recent performance metrics) alongside the suggestion to encourage users to assess reliability; (3) incorporate cognitive forcing functions such as mandatory confirmation prompts or brief delays before accepting AI advice; and (4) provide training that makes users aware of AR‑specific illusion effects and the need for systematic verification.
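Two of these implications, distance-based opacity adjustment (1) and a cognitive forcing function (3), can be sketched in a few lines. This is only an illustration under stated assumptions: the summary does not say in which direction opacity should scale or how long a delay should be, so the fade direction, the thresholds, and the function names below are all hypothetical.

```python
def overlay_opacity(distance_m, near_m=1.0, far_m=10.0,
                    min_opacity=0.2, max_opacity=0.9):
    """Linearly fade an overlay from max_opacity at near_m to
    min_opacity at far_m (assumed direction: farther means fainter)."""
    if far_m <= near_m:
        raise ValueError("far_m must be greater than near_m")
    # Normalize distance to [0, 1] and clamp outside the fade range.
    t = (distance_m - near_m) / (far_m - near_m)
    t = min(1.0, max(0.0, t))
    return max_opacity - t * (max_opacity - min_opacity)


def may_accept(seconds_since_shown, confirmed, min_delay_s=1.5):
    """Cognitive forcing gate: the AI suggestion can be accepted only
    after an explicit confirmation and a brief mandatory delay."""
    return confirmed and seconds_since_shown >= min_delay_s
```

With these defaults, a target 5.5 m away is drawn at about 0.55 opacity, and `may_accept(0.5, True)` is `False` because the delay has not yet elapsed. Suitable values would have to be tuned empirically for a given headset and task.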

Future work should explore varying AI accuracy levels, richer uncertainty visualizations, longitudinal studies of trust development, and comparisons with other AR visualization paradigms (e.g., annotation‑based cues). Overall, the study contributes an early empirical baseline for understanding how AR‑embedded visualizations influence human‑AI collaboration in spatial decision contexts, highlighting both their promise for spatial mapping and their potential to exacerbate over‑reliance on AI.
