"PhyWorldBench": A Comprehensive Evaluation of Physical Realism in Text-to-Video Models

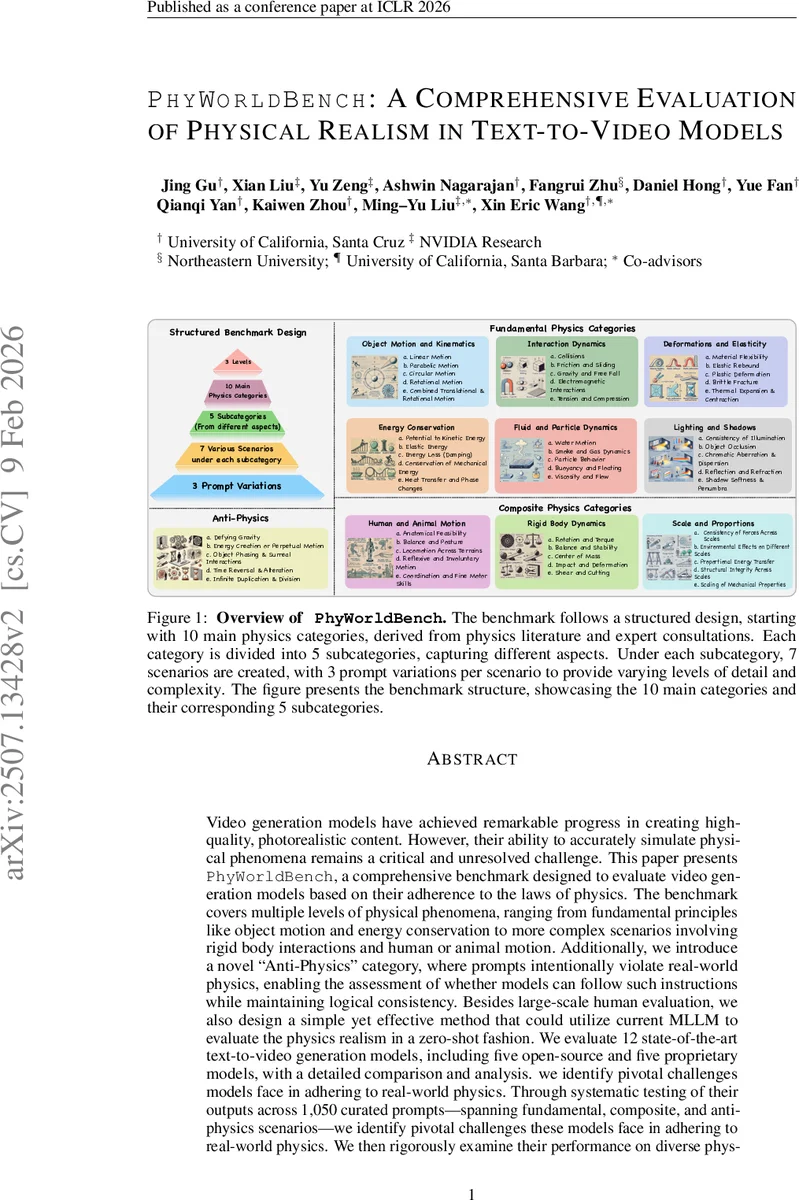

Video generation models have achieved remarkable progress in creating high-quality, photorealistic content. However, their ability to accurately simulate physical phenomena remains a critical and unresolved challenge. This paper presents PhyWorldBench, a comprehensive benchmark designed to evaluate video generation models based on their adherence to the laws of physics. The benchmark covers multiple levels of physical phenomena, ranging from fundamental principles such as object motion and energy conservation to more complex scenarios involving rigid body interactions and human or animal motion. Additionally, we introduce a novel Anti-Physics category, where prompts intentionally violate real-world physics, enabling the assessment of whether models can follow such instructions while maintaining logical consistency. Besides large-scale human evaluation, we also design a simple yet effective method that utilizes current multimodal large language models to evaluate physics realism in a zero-shot fashion. We evaluate 12 state-of-the-art text-to-video generation models, including five open-source and five proprietary models, with detailed comparison and analysis. Through systematic testing across 1050 curated prompts spanning fundamental, composite, and anti-physics scenarios, we identify pivotal challenges these models face in adhering to real-world physics. We further examine their performance under diverse physical phenomena and prompt types, and derive targeted recommendations for crafting prompts that enhance fidelity to physical principles.

💡 Research Summary

PhyWorldBench is a newly introduced benchmark that systematically measures how well text‑to‑video (T2V) models respect the laws of physics. Building on expert consultations and physics textbooks, the authors define ten main physics categories—Object Motion & Kinematics, Interaction Dynamics, Energy Conservation, Fluid & Particle Dynamics, Rigid‑Body Dynamics, Lighting & Shadows, Deformations & Elasticity, Scale & Proportions, Human & Animal Motion, and Anti‑Physics. Each main category is split into five sub‑categories, each containing seven distinct scenarios. For every scenario three prompt variants are provided: a concise event prompt, a physics‑enhanced prompt that subtly adds physical consequences, and a detailed narrative prompt rich in visual detail. This design yields a total of 1 050 prompts covering fundamental, composite, and deliberately unphysical (“anti‑physics”) cases.

The benchmark creation pipeline consists of three stages. First, physics experts and the authors jointly define the taxonomy. Second, large language models (GPT‑4o and Gemini‑1.5‑Pro) generate an initial pool of event prompts; human annotators then select seven diverse, physics‑representative prompts per scenario and refine them into physics‑enhanced and detailed versions. Third, a simple Yes/No evaluation metric is adopted to decide whether a generated video aligns with the physical expectations of its prompt. Human evaluators apply this metric to 12 600 videos (1 050 per model), while a zero‑shot multimodal large language model (e.g., GPT‑o1) is used to replicate the assessment, showing high correlation with human judgments and dramatically lowering evaluation cost.

Twelve state‑of‑the‑art T2V models are evaluated: six open‑source models (Hunyuan 720p, Open‑Sora 2.0, Open‑Sora‑Plan 1.3, CogV ideoX‑1.5, Step‑video‑T2V, W‑anx‑2.1, LTX‑Video) and six proprietary models (Sora‑Turbo, Gen‑3, Kling 1.6, Pika 2.0, Luma). Overall success rates are modest—averaging 18 %—with the best open‑source model (W‑anx‑2.1) achieving 31 % and the top proprietary model (Pika 2.0) 26 %. Performance varies dramatically across physics categories: simple linear or parabolic motion is relatively well‑handled (≈45 % success), whereas composite dynamics such as collisions with fragmentation, fluid bubbling, and human/animal joint coordination fall below 10 % success. Anti‑physics prompts expose another weakness: models often fail to follow deliberately impossible instructions while maintaining visual coherence, indicating reliance on statistical patterns rather than genuine physical reasoning.

Prompt analysis reveals that richer textual context improves physical fidelity. Detailed narrative prompts raise average success by roughly 12 % compared with minimal event prompts, and physics‑enhanced prompts add about 8 % improvement. However, overloading prompts with technical physics terminology can confuse models and degrade performance, suggesting a balance between clarity and detail.

Key contributions of the paper are: (1) the construction of a large‑scale, multi‑dimensional physics benchmark that surpasses prior works in both prompt count (1 050 vs. ≤400) and category breadth; (2) a hybrid evaluation framework that combines large‑scale human assessment with zero‑shot multimodal LLM scoring; (3) a comprehensive empirical study exposing current T2V models’ deficiencies in simulating realistic dynamics, especially in high‑complexity scenarios; and (4) actionable prompt‑design guidelines for practitioners seeking to improve physical realism in generated videos.

The authors conclude that while visual quality of T2V models has progressed, true physical realism remains an open challenge. Future directions include integrating explicit physics simulators or differentiable physics layers into diffusion pipelines, training models with physics‑conditioned objectives, and developing tighter feedback loops between multimodal LLM evaluators and generative models to iteratively refine physical consistency.

Comments & Academic Discussion

Loading comments...

Leave a Comment