Scaling Laws for Uncertainty in Deep Learning

Deep learning has recently revealed the existence of scaling laws, demonstrating that model performance follows predictable trends based on dataset and model sizes. Inspired by these findings and fascinating phenomena emerging in the over-parameterized regime, we examine a parallel direction: do similar scaling laws govern predictive uncertainties in deep learning? In identifiable parametric models, such scaling laws can be derived in a straightforward manner by treating model parameters in a Bayesian way. In this case, for example, we obtain $O(1/N)$ contraction rates for epistemic uncertainty with respect to the number of data $N$. However, in over-parameterized models, these guarantees do not hold, leading to largely unexplored behaviors. In this work, we empirically show the existence of scaling laws associated with various measures of predictive uncertainty with respect to dataset and model sizes. Through experiments on vision and language tasks, we observe such scaling laws for in- and out-of-distribution predictive uncertainty estimated through popular approximate Bayesian inference and ensemble methods. Besides the elegance of scaling laws and the practical utility of extrapolating uncertainties to larger data or models, this work provides strong evidence to dispel recurring skepticism against Bayesian approaches: “In many applications of deep learning we have so much data available: what do we need Bayes for?”. Our findings show that “so much data” is typically not enough to make epistemic uncertainty negligible.

💡 Research Summary

The paper “Scaling Laws for Uncertainty in Deep Learning” presents a groundbreaking empirical investigation into whether predictive uncertainties in deep learning models follow predictable scaling patterns with respect to dataset and model size, analogous to the well-known scaling laws for model performance.

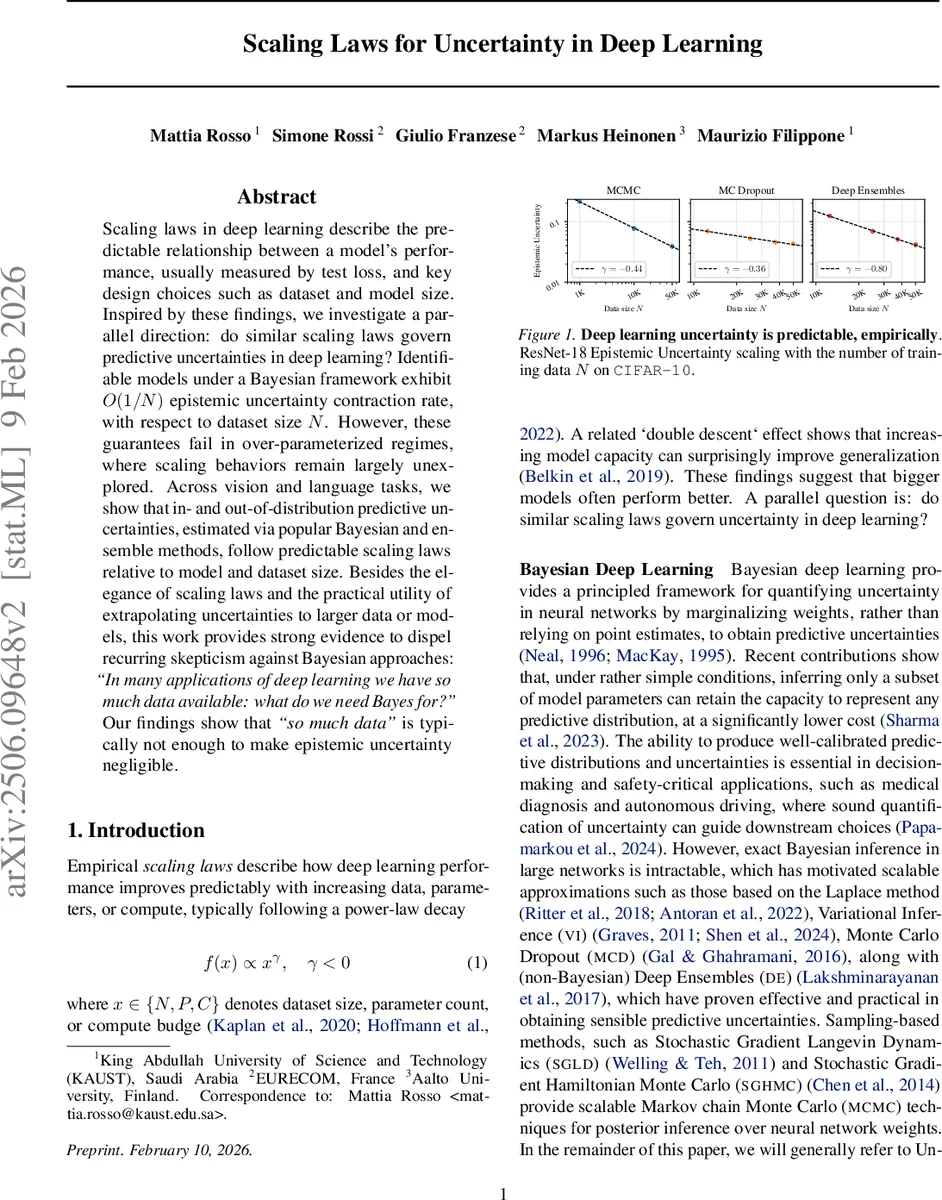

The authors begin by noting that in identifiable parametric models under a Bayesian framework, epistemic uncertainty is guaranteed to contract at a rate of O(1/N). However, these theoretical guarantees break down in the over-parameterized regimes typical of modern deep learning, leaving uncertainty scaling behavior largely unexplored. To address this, the paper conducts extensive experiments across vision (CIFAR-10, CIFAR-100, ImageNet) and language tasks, employing a wide array of popular Uncertainty Quantification (UQ) methods. These include Monte Carlo Dropout, Deep Ensembles, Markov Chain Monte Carlo (MCMC), and Variational Inference techniques, applied to architectures like ResNets, WideResNets, and Vision Transformers.

The core finding is a clear, empirical demonstration that predictive uncertainties—decomposed into Total Uncertainty (TU), Aleatoric Uncertainty (AU), and Epistemic Uncertainty (EU)—obey predictable power-law scaling relationships with both dataset size (N) and model size (P). For instance, the epistemic uncertainty for a ResNet-18 on CIFAR-10 estimated via MCMC scales as N^(−0.44). These power-law trends hold consistently across different UQ methods, architectures, and tasks, although the specific scaling exponents vary. The study also shows that these scaling laws persist for out-of-distribution data and are influenced by factors like optimization techniques (e.g., combining dropout with Sharpness-Aware Minimization).

Beyond the empirical cataloging of these laws, the paper provides important theoretical context. It discusses the limitations of applying classical Bayesian asymptotic theory to singular, over-parameterized neural networks and explores potential explanations through the lens of Singular Learning Theory (SLT). The authors formally connect generalization error in SLT to total uncertainty in linear models, suggesting a promising pathway for future theoretical understanding of the observed scaling phenomena.

The implications of this work are twofold. Practically, the existence of uncertainty scaling laws allows for the extrapolation of uncertainty estimates to larger datasets or models, which is valuable for planning and safety-critical applications. Philosophically and scientifically, it delivers a robust counterargument to recurring skepticism about the necessity of Bayesian methods in deep learning, succinctly captured by the question, “In many applications of deep learning we have so much data available: what do we need Bayes for?” The results conclusively show that “so much data” is often insufficient to render epistemic uncertainty negligible, thereby affirming the enduring relevance of uncertainty quantification in the era of big data and large models.

Comments & Academic Discussion

Loading comments...

Leave a Comment