Parallel Layer Normalization for Universal Approximation

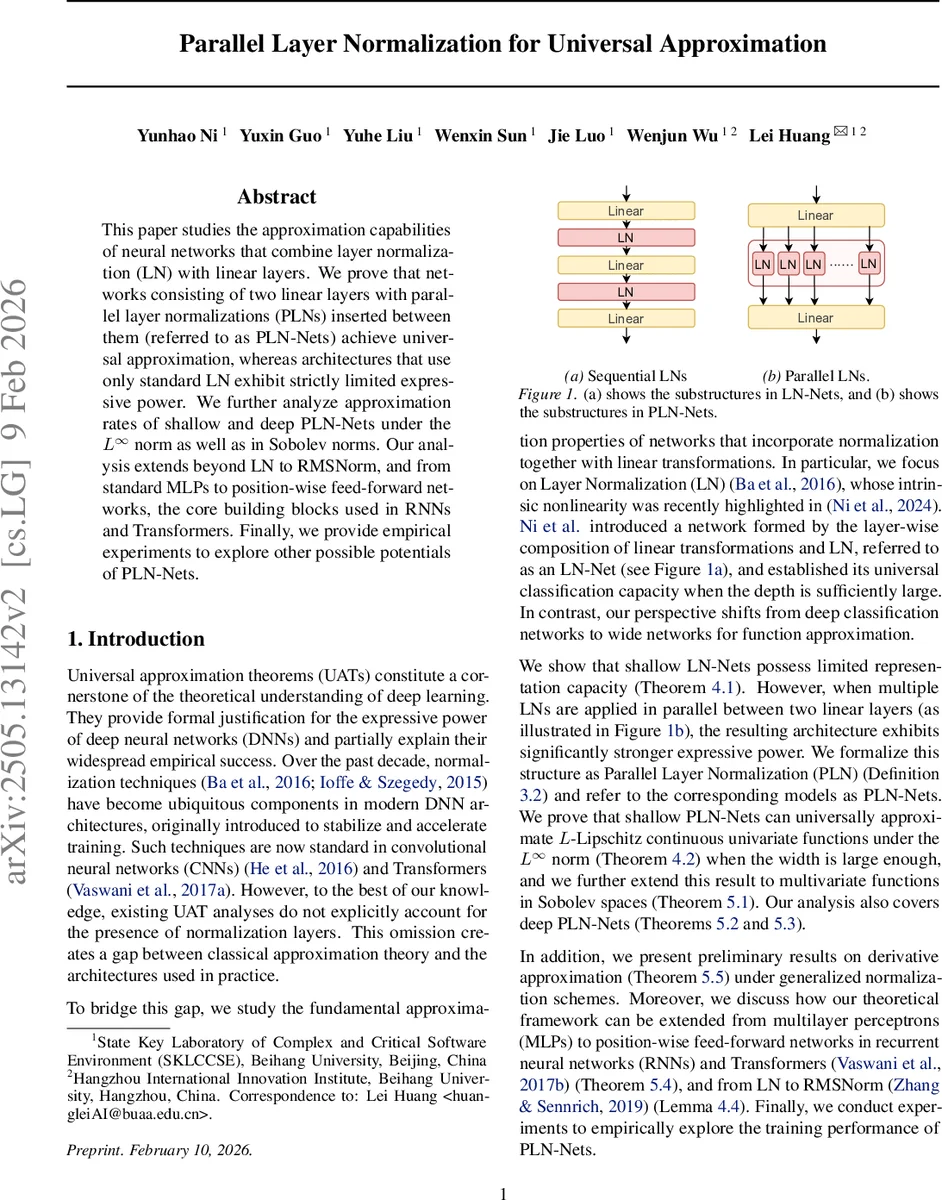

This paper studies the approximation capabilities of neural networks that combine layer normalization (LN) with linear layers. We prove that networks consisting of two linear layers with parallel layer normalizations (PLNs) inserted between them (referred to as PLN-Nets) achieve universal approximation, whereas architectures that use only standard LN exhibit strictly limited expressive power.We further analyze approximation rates of shallow and deep PLN-Nets under the $L^\infty$ norm as well as in Sobolev norms. Our analysis extends beyond LN to RMSNorm, and from standard MLPs to position-wise feed-forward networks, the core building blocks used in RNNs and Transformers.Finally, we provide empirical experiments to explore other possible potentials of PLN-Nets.

💡 Research Summary

This paper investigates the expressive power of neural networks that incorporate layer normalization (LN) together with linear transformations. While classical universal approximation theorems (UATs) focus on activation functions and linear layers, they typically ignore normalization layers due to analytical difficulty. The authors first show that shallow networks consisting of a linear layer, a single LN, and another linear layer (referred to as LN‑Nets) have severely limited representation capability. By exploiting the fact that LN forces zero mean and unit variance across a hidden vector, they prove that any LN‑Net can at best represent functions with a single stationary point; for example, the smooth function cos(πx) cannot be approximated within error less than 1 regardless of width. This establishes a negative result: LN alone does not endow a shallow network with universal approximation ability.

To overcome this limitation, the authors introduce Parallel Layer Normalization (PLN). PLN partitions the hidden vector into m equal‑size groups and applies LN independently to each group. Mathematically, if h =

Comments & Academic Discussion

Loading comments...

Leave a Comment