EgoFSD: Ego-Centric Fully Sparse Paradigm with Uncertainty Denoising and Iterative Refinement for Efficient End-to-End Self-Driving

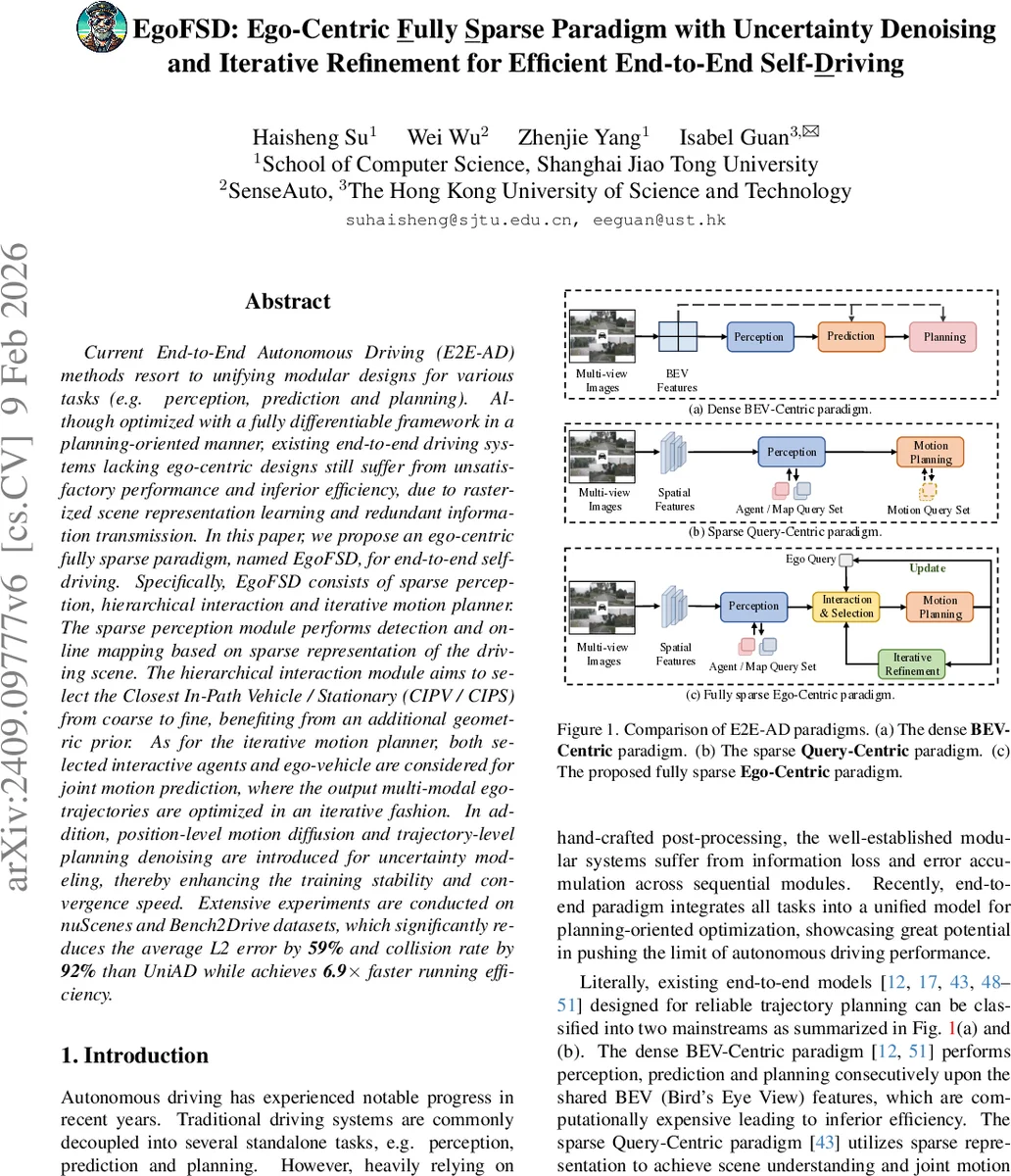

Current End-to-End Autonomous Driving (E2E-AD) methods resort to unifying modular designs for various tasks (e.g. perception, prediction and planning). Although optimized with a fully differentiable framework in a planning-oriented manner, existing end-to-end driving systems lacking ego-centric designs still suffer from unsatisfactory performance and inferior efficiency, due to rasterized scene representation learning and redundant information transmission. In this paper, we propose an ego-centric fully sparse paradigm, named EgoFSD, for end-to-end self-driving. Specifically, EgoFSD consists of sparse perception, hierarchical interaction and iterative motion planner. The sparse perception module performs detection and online mapping based on sparse representation of the driving scene. The hierarchical interaction module aims to select the Closest In-Path Vehicle / Stationary (CIPV / CIPS) from coarse to fine, benefiting from an additional geometric prior. As for the iterative motion planner, both selected interactive agents and ego-vehicle are considered for joint motion prediction, where the output multi-modal ego-trajectories are optimized in an iterative fashion. In addition, position-level motion diffusion and trajectory-level planning denoising are introduced for uncertainty modeling, thereby enhancing the training stability and convergence speed. Extensive experiments are conducted on nuScenes and Bench2Drive datasets, which significantly reduces the average L2 error by 59% and collision rate by 92% than UniAD while achieves 6.9x faster running efficiency.

💡 Research Summary

The paper introduces EgoFSD, a novel end‑to‑end autonomous driving framework that departs from the dense bird‑eye‑view (BEV) representations used by most recent methods. Instead, it adopts an ego‑centric “fully sparse” paradigm that processes multi‑view camera images into a set of sparse queries representing dynamic agents and static map elements. The system consists of four main components: (1) a visual encoder that extracts multi‑scale features from the raw images; (2) a sparse perception module that simultaneously performs object detection, tracking, and online vectorized mapping using query‑based decoders; (3) a hierarchical interaction module that selects the most relevant surrounding agents—specifically the Closest In‑Path Vehicle or Stationary (CIPV/CIPS)—through a coarse‑to‑fine, dual‑interaction mechanism; and (4) an iterative motion planner that jointly predicts future trajectories for the ego‑vehicle and the selected agents, refining them over multiple iterations.

In the sparse perception stage, each detected object is encoded as an 11‑dimensional anchor (position, size, yaw, velocity) and refined by a cascade of N decoder layers that incorporate hybrid attention between current queries and a temporal memory queue. A parallel branch with identical architecture generates online maps as polylines of Np points, eliminating the need for expensive BEV rasterization.

The hierarchical interaction module introduces three key ideas. First, an ego‑object dual‑interaction layer combines ego‑centric cross‑attention (ego query attending to all surrounding queries) with object‑centric self‑attention, using concatenated positional embeddings to preserve geometric cues. Second, an intention‑guided geometric attention module encodes the ego vehicle’s velocity, acceleration, angular velocity, and high‑level driving command into an intention vector. This vector is concatenated with dense BEV grid positional embeddings, passed through a squeeze‑and‑excitation block, and supervised to predict a response map that reflects the minimum distance from each grid cell to the future ego trajectory. From this response map a reference line and a normalized distance map are derived, yielding a geometric score for each surrounding query. Third, the final interaction score is obtained by element‑wise multiplication of the attention score, geometric score, and classification confidence, effectively weighting each candidate by both learned relevance and physical proximity.

Selection proceeds in a coarse‑to‑fine manner: multiple dual‑interaction layers are stacked, and after each layer a Top‑K operation retains only the highest‑scoring queries. This dramatically reduces the number of agents that later modules must handle (typically only a handful of CIPV/CIPS candidates).

The iterative motion planner receives the selected interactive queries together with the ego query. A joint decoder predicts a set of multimodal ego trajectories. To model uncertainty, two complementary denoising mechanisms are applied. Position‑level motion diffusion adds Gaussian noise to the predicted positions of interactive agents during training, encouraging robustness to localization errors. Trajectory‑level denoising injects random offsets into the initial ego trajectory proposals and then refines them iteratively, updating the reference line and ego query at each step. This iterative refinement aligns the ego plan with both the intended motion (as encoded by the intention vector) and the dynamic constraints imposed by the selected agents.

Extensive experiments on the nuScenes and Bench2Drive datasets demonstrate the efficacy of the approach. Compared with UniAD—a strong dense‑BEV baseline—EgoFSD reduces average L2 trajectory error by 59 % and collision rate by 92 %. Moreover, because the entire pipeline operates on sparse queries rather than dense feature maps, inference speed improves by a factor of 6.9, with substantially lower FLOPs and memory consumption. Ablation studies confirm that each component (sparse perception, intention‑guided attention, coarse‑to‑fine selection, motion diffusion, and trajectory denoising) contributes meaningfully to the overall performance gains.

The authors argue that EgoFSD more closely mirrors human driving behavior, where drivers focus on the nearest relevant obstacles rather than processing the whole scene. By embedding this principle into a fully sparse, ego‑centric architecture, the method achieves both higher safety (lower collisions) and higher efficiency (faster inference). Limitations include reliance solely on camera data; integration of lidar or radar could further improve robustness. Additionally, the fixed Top‑K selection might miss critical agents in highly congested scenarios, suggesting future work on adaptive selection thresholds or dynamic query budgeting.

In summary, EgoFSD presents a compelling shift toward sparse, ego‑centric modeling for end‑to‑end autonomous driving, delivering substantial improvements in accuracy, safety, and computational efficiency, and opening a promising direction for next‑generation self‑driving systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment