DeltaKV: Residual-Based KV Cache Compression via Long-Range Similarity

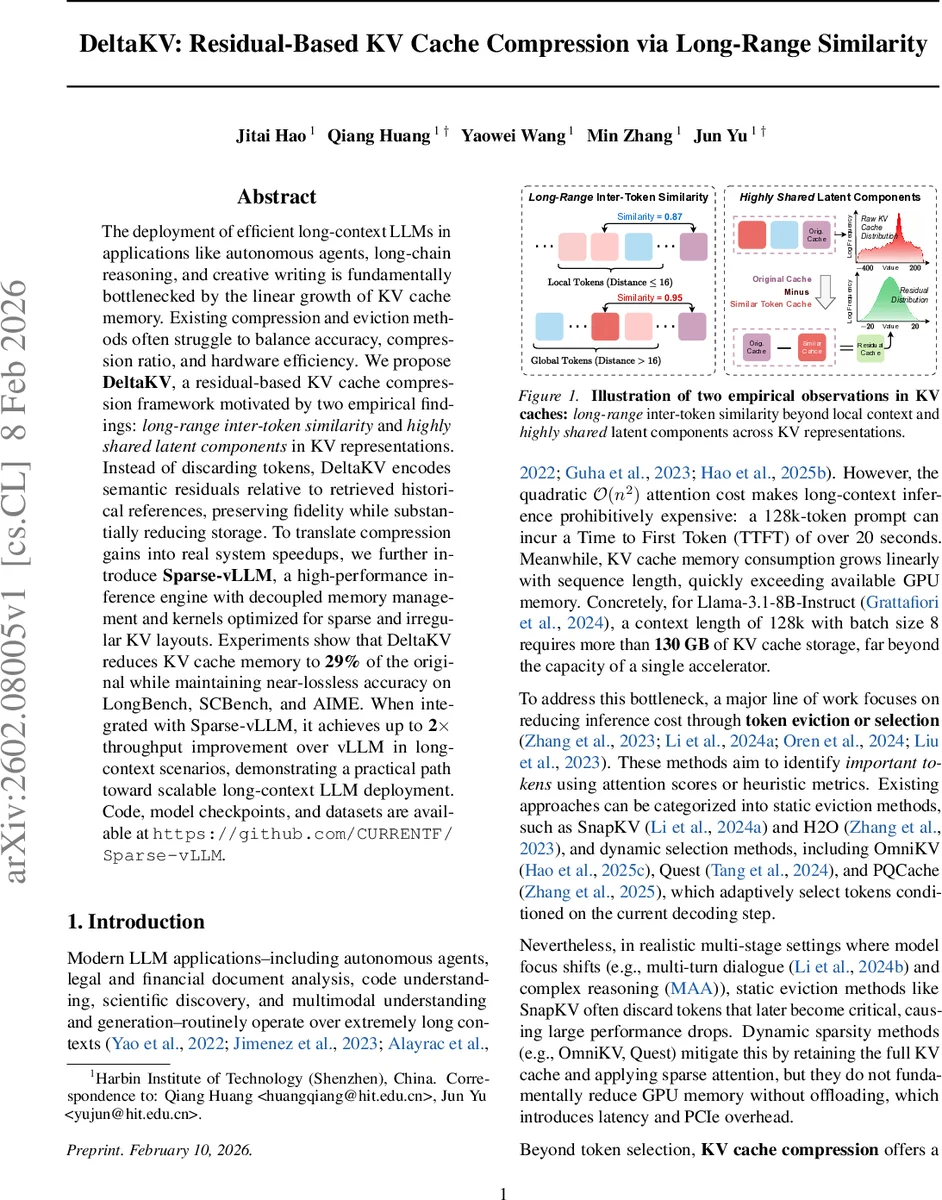

The deployment of efficient long-context LLMs in applications like autonomous agents, long-chain reasoning, and creative writing is fundamentally bottlenecked by the linear growth of KV cache memory. Existing compression and eviction methods often struggle to balance accuracy, compression ratio, and hardware efficiency. We propose DeltaKV, a residual-based KV cache compression framework motivated by two empirical findings: long-range inter-token similarity and highly shared latent components in KV representations. Instead of discarding tokens, DeltaKV encodes semantic residuals relative to retrieved historical references, preserving fidelity while substantially reducing storage. To translate compression gains into real system speedups, we further introduce Sparse-vLLM, a high-performance inference engine with decoupled memory management and kernels optimized for sparse and irregular KV layouts. Experiments show that DeltaKV reduces KV cache memory to 29% of the original while maintaining near-lossless accuracy on LongBench, SCBench, and AIME. When integrated with Sparse-vLLM, it achieves up to 2$\times$ throughput improvement over vLLM in long-context scenarios, demonstrating a practical path toward scalable long-context LLM deployment. Code, model checkpoints, and datasets are available at https://github.com/CURRENTF/Sparse-vLLM.

💡 Research Summary

**

The paper tackles one of the most pressing bottlenecks in deploying large language models (LLMs) for long‑context applications: the linear growth of key‑value (KV) cache memory during inference. Existing solutions—static token eviction, dynamic token selection, or low‑rank KV compression—either discard information that later becomes important, rely on off‑loading to CPU memory (incurring PCIe latency), or require complex multi‑stage pipelines that are unfriendly to GPUs.

Through an extensive empirical study on Qwen2.5‑7B‑Instruct, the authors discover two fundamental properties of KV caches. First, “long‑range inter‑token similarity”: more than 60 % of the most similar token pairs are separated by more than 16 positions, showing that semantic redundancy is not confined to local neighborhoods. Second, “highly shared latent components”: a small set of high‑norm directions dominates the KV space, as revealed by singular‑value decomposition (SVD). Removing these shared components leaves low‑energy residuals whose L2 norms are dramatically smaller and whose value distribution collapses around zero.

Guided by these observations, the authors propose DeltaKV, a residual‑based KV compression framework. Instead of compressing raw KV vectors, DeltaKV maintains a strided set of reference tokens (e.g., every 8th token). For each new token i, it retrieves the top‑k nearest references from this set, computes their mean KV_R, and then projects both the current KV_i and KV_R through a lightweight MLP f_c (2d_k → d_h → d_c). The residual z_Δ = f_c(KV_i) – f_c(KV_R) is stored; reconstruction adds back the decoded residual (via a decoder f_d, either MLP or linear) to KV_R. The whole process is fully parallel on the GPU, adds less than 5 % extra parameters, and can be trained in roughly 8 GPU‑hours.

To make the compression practical, the authors introduce Sparse‑vLLM, a new inference engine that abandons the page‑based KV layout of vLLM and instead supports sparse, irregular KV buffers. Sparse‑vLLM decouples memory management from compute kernels, allowing on‑the‑fly reconstruction of only the tokens selected by a downstream sparse‑attention method (e.g., OmniKV). This design eliminates unnecessary memory traffic, maximizes GPU utilization, and integrates seamlessly with DeltaKV.

Experimental results on Llama‑3.1‑8B‑Instruct and Qwen2.5‑7B‑Instruct demonstrate that DeltaKV reduces KV memory to about 29 % of the original while incurring less than 0.1 % absolute accuracy loss on three long‑context benchmarks (LongBench, SCBench, AIME). When combined with Sparse‑vLLM, throughput improves up to 2× compared with the vanilla vLLM in 128k‑token contexts with batch size 8, and the extra compute for compression/decompression accounts for under 5 % of total inference time. The memory savings also enable a 1.5× increase in batch size, further boosting overall system efficiency.

In summary, the paper makes three key contributions: (1) a quantitative analysis revealing global redundancy and shared latent structure in KV caches; (2) DeltaKV, a simple yet effective residual‑based compression method that is GPU‑friendly and near‑lossless; (3) Sparse‑vLLM, an inference framework that natively handles compressed, sparse KV layouts and delivers real‑world speedups. The work opens avenues for deeper multi‑stage residual compression, token‑wise adaptive compression dimensions, and extensions to multimodal models, while also suggesting hardware‑level optimizations for irregular memory access patterns.

Comments & Academic Discussion

Loading comments...

Leave a Comment