Which private attributes do VLMs agree on and predict well?

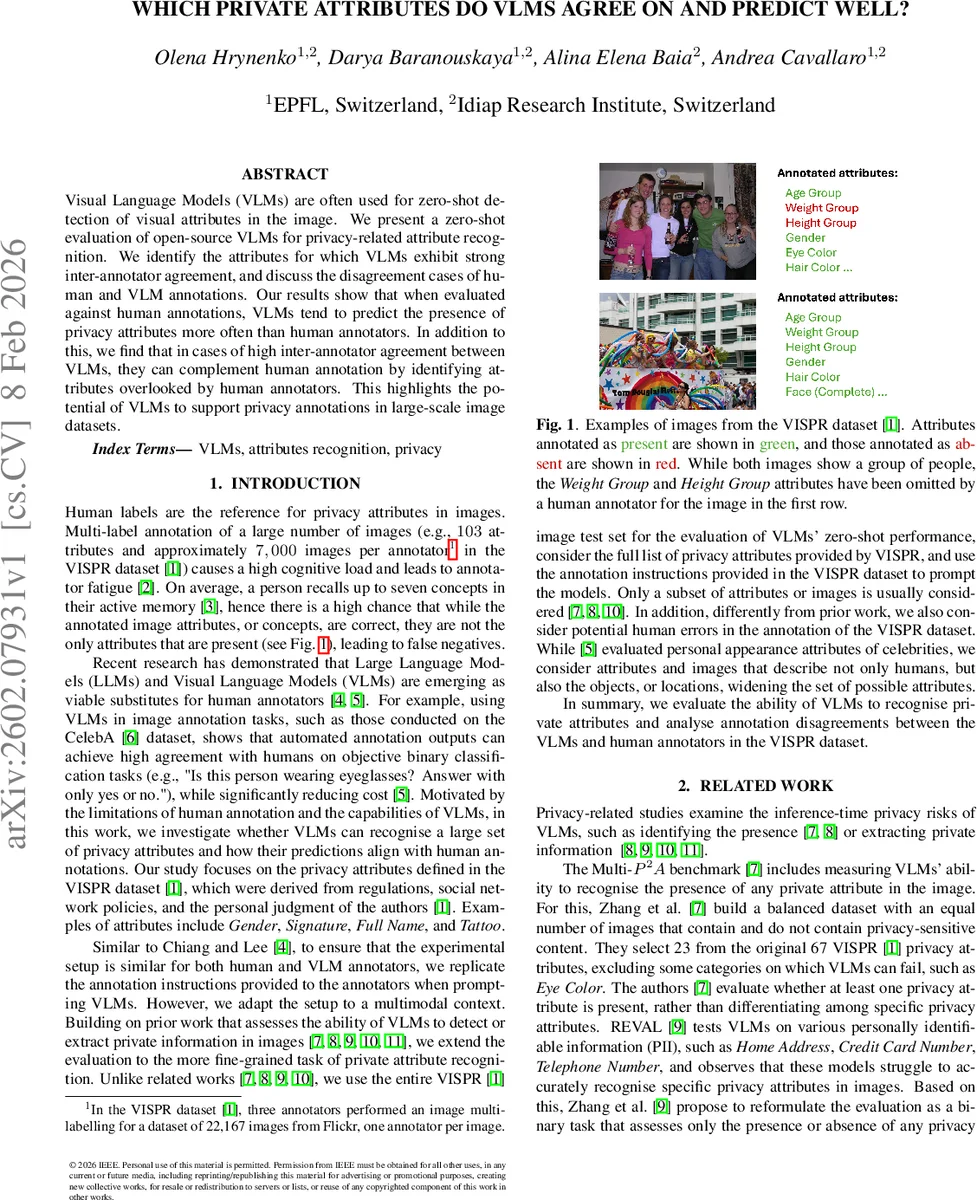

Visual Language Models (VLMs) are often used for zero-shot detection of visual attributes in the image. We present a zero-shot evaluation of open-source VLMs for privacy-related attribute recognition. We identify the attributes for which VLMs exhibit strong inter-annotator agreement, and discuss the disagreement cases of human and VLM annotations. Our results show that when evaluated against human annotations, VLMs tend to predict the presence of privacy attributes more often than human annotators. In addition to this, we find that in cases of high inter-annotator agreement between VLMs, they can complement human annotation by identifying attributes overlooked by human annotators. This highlights the potential of VLMs to support privacy annotations in large-scale image datasets.

💡 Research Summary

This paper presents a comprehensive zero‑shot evaluation of three open‑source instruction‑following visual‑language models (VLMs)—Gemma‑3‑4b‑it, Qwen2.5‑VL‑7B‑Instruct, and Llama‑3.2‑11B‑Vision‑Instruct—on the full set of 67 privacy‑related attributes defined in the VISPR dataset. The authors motivate the study by highlighting the high cognitive load and fatigue associated with multi‑label human annotation of large image collections, which can lead to missed attributes (false negatives). They aim to determine whether VLMs can reliably recognize a broad range of privacy attributes and how their predictions align with human annotators.

The experimental protocol mirrors the original VISPR annotation instructions: each of the 8,000 test images is queried with a separate prompt for every attribute, asking the model to answer “Present” or “Absent”. Because model outputs can be free‑form sentences, a two‑step parsing pipeline is employed—first a secondary prompt maps the open‑ended reply to a closed‑set label, then a simple substring rule extracts the final label, with a special “NONE” class for inconclusive answers. Stochastic decoding (sampling) is enabled to increase output diversity.

Performance is measured using precision, recall, balanced accuracy, and macro‑averaged F1. Across all attributes, the models achieve high recall for both present and absent classes, indicating they rarely miss an attribute that is truly present. However, precision for the “Present” class is substantially lower than for “Absent”, leading to many false positives. The authors argue that this may reflect human annotator omissions rather than pure model error, given the known limits of human memory (≈7 concepts at a time). Balanced accuracy exceeds 0.75 for most attribute‑model pairs, but macro‑F1 scores vary: Qwen2.5‑VL‑7B‑Instruct consistently outperforms the other two, achieving the highest F1 for 64 of the 67 attributes and exceeding 0.6 for 55 attributes. Gemma‑3‑4b‑it and Llama‑3.2‑11B‑Vision‑Instruct lag behind, with macro‑F1 often between 0.4 and 0.6.

To explore disagreements, the authors compute Fleiss’ κ among the three VLMs and select the five attributes with the highest inter‑model agreement (Age Group, Gender, Hair Color, Spectators, Face (Partial)). For each attribute they manually inspect 50 images where the VLMs label the attribute as present while human annotators label it absent. They then re‑annotate these images with two fresh annotators, excluding non‑human subjects (drawings, statues, dolls). The analysis reveals that a substantial proportion of these “VLM‑present / human‑absent” cases actually contain other human‑defining attributes (e.g., Face (Complete), Race, Skin Color). Specifically, 32 % of Age Group, 10 % of Gender, and 14 % of Hair Color cases have at least six additional human‑defined attributes, suggesting the original human label likely missed the attribute. For Spectators, 56 % of disagreement cases also contain at least one other relationship‑defining attribute (Personal Relationships, Social Circle, etc.). For Face (Partial), 59 % of disagreement images contain at least one other face‑defining attribute, indicating that a face is indeed present even if partially occluded.

The paper further compares the labeling behavior of the three VLMs. Qwen2.5‑VL‑7B‑Instruct tends to predict “Partial” more often than “Complete” for faces, whereas Llama‑3.2‑11B‑Vision‑Instruct shows the opposite trend. Human annotators label both variants consistently (≈75 % agreement), but the models diverge, highlighting differences in how they interpret the annotation guidelines.

Overall, the study demonstrates that VLMs can complement human annotators by detecting privacy attributes that humans frequently overlook, especially for attributes with high inter‑model agreement. Nevertheless, the relatively low precision for the “Present” class underscores a tendency of VLMs to over‑predict attributes, which could be problematic in privacy‑sensitive applications. The authors recommend post‑processing, threshold tuning, or hybrid human‑machine workflows to mitigate false positives.

In conclusion, this work provides empirical evidence that open‑source VLMs are capable of fine‑grained privacy attribute recognition at scale, offering a promising avenue to reduce annotation cost and improve coverage in large image datasets, while also identifying key challenges that must be addressed before deployment in real‑world privacy‑preserving pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment