Patches of Nonlinearity: Instruction Vectors in Large Language Models

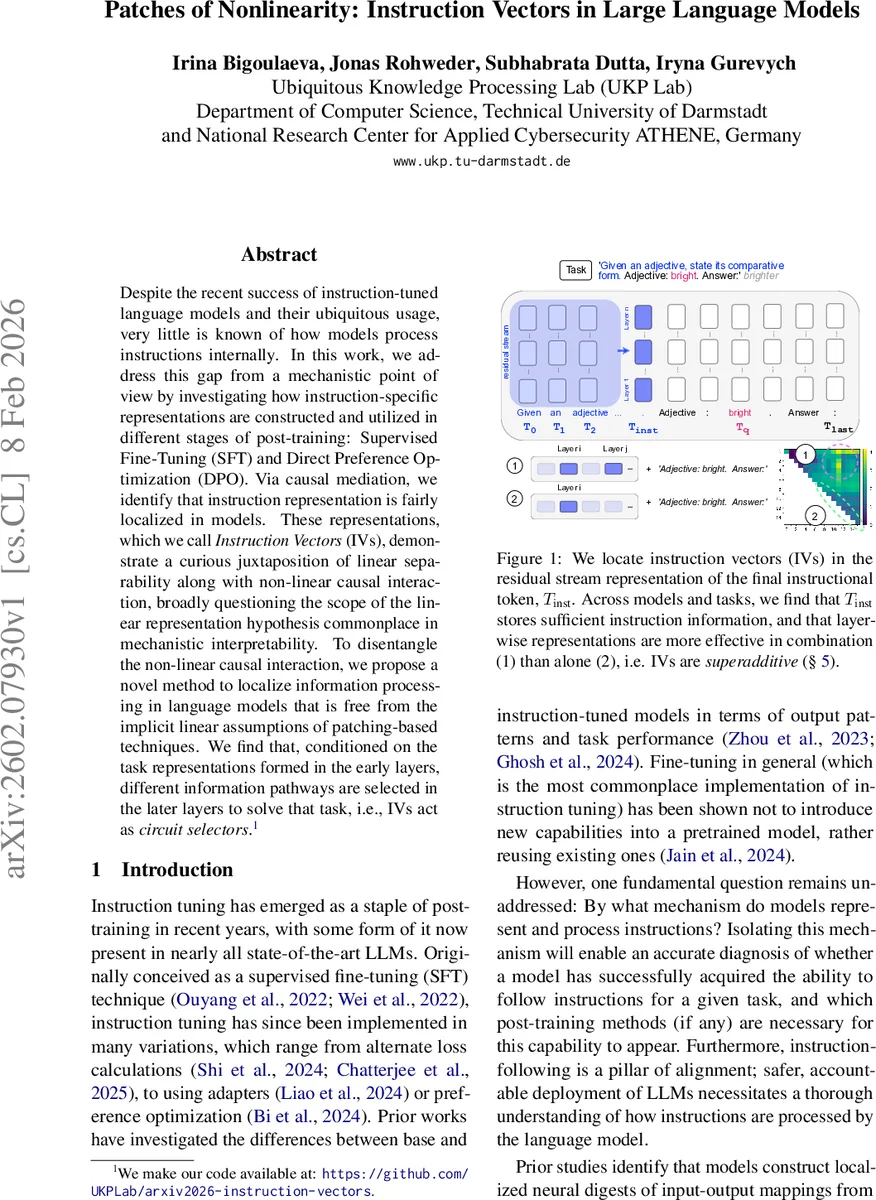

Despite the recent success of instruction-tuned language models and their ubiquitous usage, very little is known of how models process instructions internally. In this work, we address this gap from a mechanistic point of view by investigating how instruction-specific representations are constructed and utilized in different stages of post-training: Supervised Fine-Tuning (SFT) and Direct Preference Optimization (DPO). Via causal mediation, we identify that instruction representation is fairly localized in models. These representations, which we call Instruction Vectors (IVs), demonstrate a curious juxtaposition of linear separability along with non-linear causal interaction, broadly questioning the scope of the linear representation hypothesis commonplace in mechanistic interpretability. To disentangle the non-linear causal interaction, we propose a novel method to localize information processing in language models that is free from the implicit linear assumptions of patching-based techniques. We find that, conditioned on the task representations formed in the early layers, different information pathways are selected in the later layers to solve that task, i.e., IVs act as circuit selectors.

💡 Research Summary

The paper investigates how instruction‑tuned large language models (LLMs) internally represent and use the textual instructions that guide their behavior. While instruction fine‑tuning (SFT) and direct preference optimization (DPO) have become standard post‑training steps, the mechanistic details of instruction processing remain largely unexplored. The authors introduce the notion of “Instruction Vectors” (IVs) – compact, localized representations of the instruction that appear in the residual stream after the final instruction token (T_inst).

Using OLMo‑2 models (1 B and 7 B parameters) in three variants – a base model, an SFT‑fine‑tuned model, and a DPO‑fine‑tuned model – they construct a suite of eight contrastive tasks (e.g., adjective comparative vs. antonym) and four additional BigBench tasks. Each task pairs the same query with different instructions, allowing the authors to isolate the effect of the instruction. Model performance is measured both by exact‑match accuracy and a bespoke “Instruction Accuracy” metric that tolerates minor answer variations.

The core methodological contribution is a causal mediation analysis based on activation patching. For each layer l, the residual representation X_l is swapped from a source run (full prompt) into a target run (query only with a filler token). The impact on the logit of the correct answer token is recorded. Two key phenomena emerge:

-

Localization – Across tasks, a small set of middle‑to‑late layers yields the largest logit improvements when patched, indicating that the instruction information is concentrated in those layers rather than being distributed throughout the network.

-

Superadditivity – When two layers are patched simultaneously, the improvement often exceeds the sum of the individual layer effects. This reveals that IVs, while linearly separable (a linear hyperplane can discriminate between different instructions), their causal influence on downstream computation is not additive.

Standard patch‑based interpretability tools assume additive interactions and therefore cannot capture this superadditive behavior. To address this limitation, the authors propose a novel non‑linear causal discovery technique called Locally Linear Maps (LLM). LLM approximates each layer’s transformation by a locally linear map, then composes selected layers to reconstruct the overall function. By measuring how different layer subsets affect the final output, the method isolates the true causal pathways without imposing linearity.

Applying LLM, the authors find that IVs act as circuit selectors: the instruction digest formed early in the forward pass determines which later sub‑circuits (specific MLP blocks or attention heads) become active for the query. In the base model, such instruction digests exist but are not used to steer computation. After SFT, the digests become functional, and DPO further strengthens the non‑linear coupling between the digest and the selected circuit, leading to more robust instruction following.

The paper draws several broader implications. First, the eager computation of IVs suggests a potential information bottleneck in instruction processing, which could be exploited to improve robustness against adversarial perturbations of the instruction. Second, the discovery of superadditive causal effects challenges the prevailing linear representation hypothesis and the common assumption of additive causal graphs in mechanistic interpretability. It calls for new theoretical frameworks that can model synergistic interactions among latent variables.

In summary, the study reveals that LLMs encode instructions as localized, linearly‑separable vectors that nevertheless exert a non‑linear, superadditive influence on downstream layers, effectively selecting computational circuits for the query. This insight bridges a gap between representation‑centric and circuit‑centric interpretability, highlights the distinct roles of SFT and DPO in shaping instruction processing, and opens avenues for more nuanced model analysis, safety testing, and alignment strategies.

Comments & Academic Discussion

Loading comments...

Leave a Comment