MedCoG: Maximizing LLM Inference Density in Medical Reasoning via Meta-Cognitive Regulation

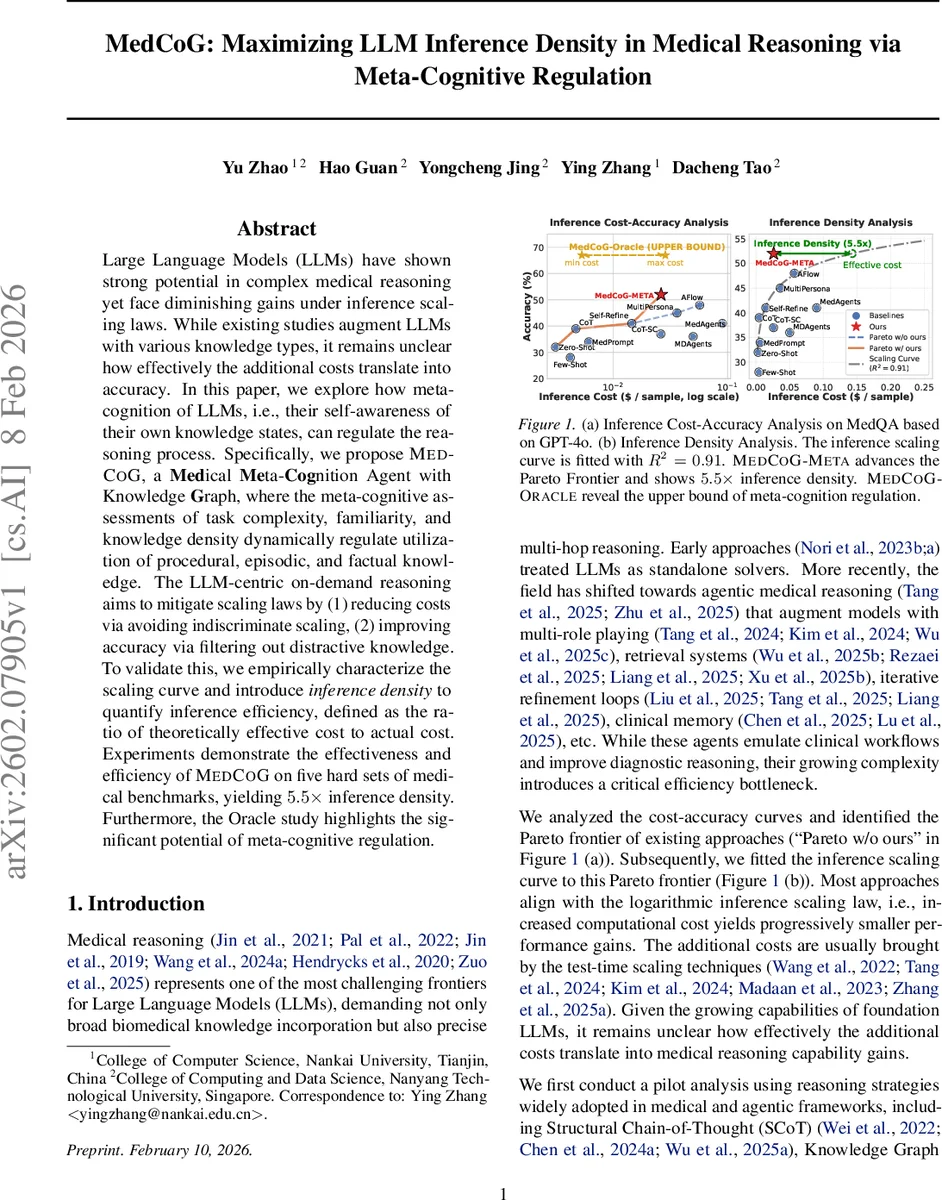

Large Language Models (LLMs) have shown strong potential in complex medical reasoning, yet they face diminishing gains under inference scaling laws. While existing studies augment LLMs with various knowledge types, it remains unclear how effectively the additional cost translates into accuracy. In this paper, we explore how the meta-cognition of LLMs, i.e., their self-awareness of their own knowledge states, can regulate the reasoning process. Specifically, we propose MedCoG, a Medical Meta-Cognition Agent with Knowledge Graph, in which meta-cognitive assessments of task complexity, familiarity, and knowledge density dynamically regulate the use of procedural, episodic, and factual knowledge. This LLM-centric, on-demand reasoning aims to mitigate the diminishing returns of inference scaling by (1) reducing cost by avoiding indiscriminate scaling and (2) improving accuracy by filtering out distracting knowledge. To validate this, we empirically characterize the scaling curve and introduce inference density to quantify inference efficiency, defined as the ratio of theoretically effective cost to actual cost. Experiments demonstrate the effectiveness and efficiency of MedCoG on five hard sets of medical benchmarks, yielding 5.5x inference density. Furthermore, the Oracle study highlights the significant potential of meta-cognitive regulation.
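To make the definition of inference density concrete, here is a minimal Python sketch. It rests on assumptions that are not stated in the abstract: the scaling curve is taken to be log-linear, `accuracy = a + b * ln(cost)`, and the "theoretically effective cost" is read as the cost that this fitted baseline curve would need to reach the accuracy a method actually achieved. The function names and the numbers in the usage note are purely illustrative and are not results from the paper.

```python
import math


def fit_log_linear(costs, accuracies):
    """Least-squares fit of accuracy = a + b * ln(cost).

    ASSUMPTION: a log-linear scaling curve; the paper's actual
    curve family is not given in the abstract.
    """
    xs = [math.log(c) for c in costs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(accuracies) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, accuracies))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


def effective_cost(accuracy, a, b):
    """Cost the fitted baseline curve would need to reach `accuracy`."""
    return math.exp((accuracy - a) / b)


def inference_density(accuracy, actual_cost, a, b):
    """Ratio of theoretically effective cost to actual cost."""
    return effective_cost(accuracy, a, b) / actual_cost
```

For example, with hypothetical baseline points `costs = [1.0, math.e, math.e ** 2]` and `accuracies = [0.5, 0.6, 0.7]`, the fit gives roughly `a = 0.5` and `b = 0.1`. A method that reaches accuracy 0.7 at half of the cost the baseline would need then has an inference density of about 2 under this reading.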

💡 Research Summary

The paper addresses a critical bottleneck in applying large language models (LLMs) to complex medical reasoning: the diminishing returns of simply scaling inference cost. While many recent works augment LLMs with external knowledge sources such as knowledge graphs (KG) or memory of past cases, these additions often increase computational expense without proportional gains in accuracy, and can even degrade performance due to noise and over‑reasoning.

To overcome this, the authors introduce MedCoG (Medical Meta‑Cognition Agent with Knowledge Graph), a system that endows the LLM with a form of meta‑cognition (self‑awareness of its own knowledge state) and uses this awareness to regulate which knowledge sources are invoked for each individual question. The core of MedCoG is the Meta‑Cognition Regulator, which evaluates a medical query along three dimensions:

- Complexity – how structurally intricate the problem is (e.g., multi‑hop reasoning required).

- Familiarity – how typical or textbook‑like the case is, indicating whether past experience is likely useful.

- Knowledge Density – the extent to which precise factual information (specific entities, relationships) is needed.

These three scores form a state vector **s**.
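As a rough illustration of how a three-score state could gate knowledge use, consider the Python sketch below. Everything specific in it is an assumption made for illustration rather than the authors' design: the score range of [0, 1], the single shared threshold, the one-to-one pairing of each score with one knowledge type (complexity with procedural, familiarity with episodic, knowledge density with factual), the direction of each comparison, and the names `MetaCognitiveState`, `KnowledgePlan`, and `regulate`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetaCognitiveState:
    """Hypothetical container for the three scores, each assumed in [0, 1]."""
    complexity: float
    familiarity: float
    knowledge_density: float


@dataclass(frozen=True)
class KnowledgePlan:
    """Which knowledge sources to invoke for one question."""
    use_procedural: bool
    use_episodic: bool
    use_factual: bool


def regulate(state: MetaCognitiveState, threshold: float = 0.5) -> KnowledgePlan:
    """Gate each knowledge source on one score.

    ASSUMPTION: simple thresholding with a one-to-one mapping;
    the paper's actual regulation policy may differ.
    """
    return KnowledgePlan(
        # Complex, multi-hop questions get procedural guidance.
        use_procedural=state.complexity >= threshold,
        # Typical cases are assumed to benefit from past-case memory.
        use_episodic=state.familiarity >= threshold,
        # Fact-heavy questions trigger knowledge-graph lookup.
        use_factual=state.knowledge_density >= threshold,
    )
```

Under these assumptions, `regulate(MetaCognitiveState(0.9, 0.2, 0.7))` would enable procedural and factual knowledge and skip episodic retrieval, which is the kind of on-demand selection intended to avoid paying for knowledge a question does not need.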
