Rethinking Code Complexity Through the Lens of Large Language Models

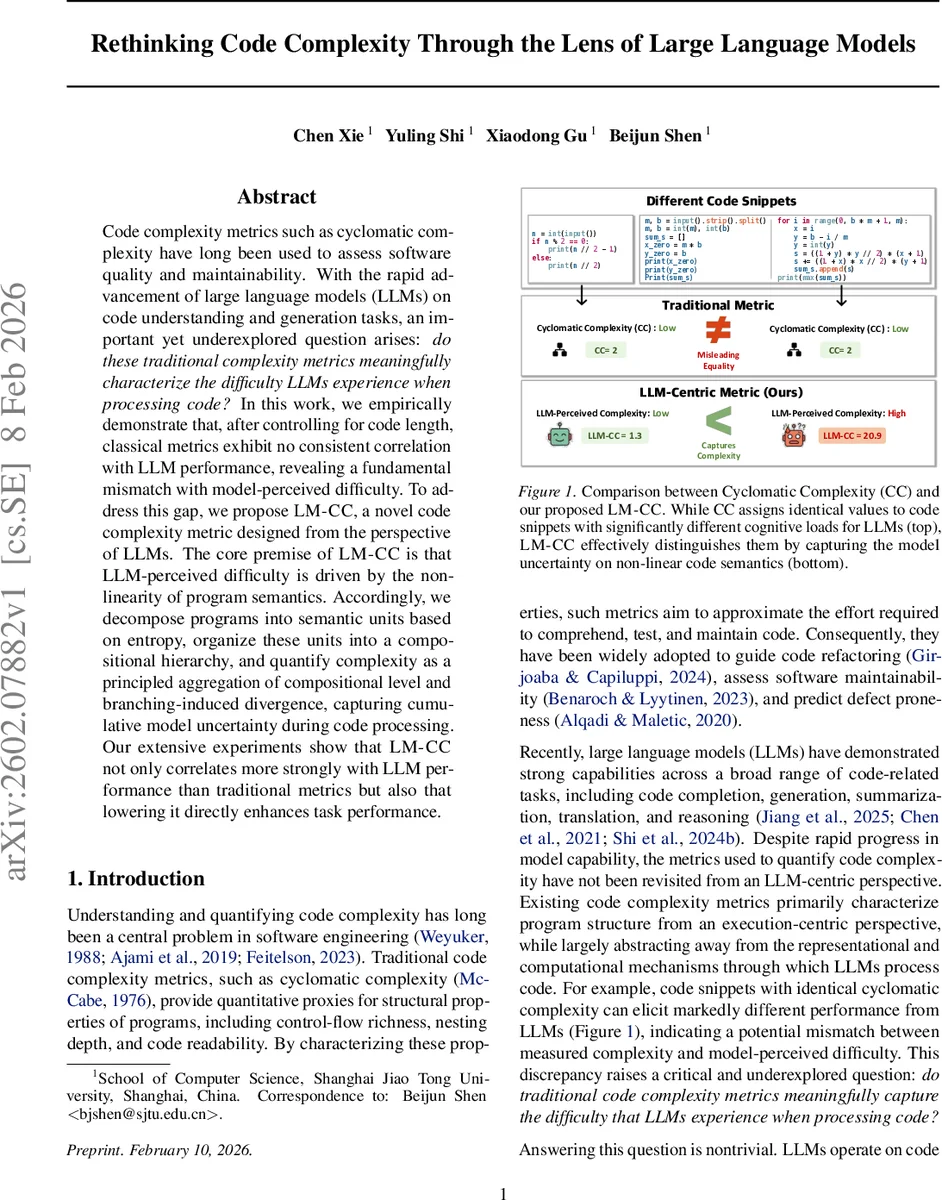

Code complexity metrics such as cyclomatic complexity have long been used to assess software quality and maintainability. With the rapid advancement of large language models (LLMs) on code understanding and generation tasks, an important yet underexplored question arises: do these traditional complexity metrics meaningfully characterize the difficulty LLMs experience when processing code? In this work, we empirically demonstrate that, after controlling for code length, classical metrics exhibit no consistent correlation with LLM performance, revealing a fundamental mismatch with model-perceived difficulty. To address this gap, we propose LM-CC, a novel code complexity metric designed from the perspective of LLMs. The core premise of LM-CC is that LLM-perceived difficulty is driven by the nonlinearity of program semantics. Accordingly, we decompose programs into semantic units based on entropy, organize these units into a compositional hierarchy, and quantify complexity as a principled aggregation of compositional level and branching-induced divergence, capturing cumulative model uncertainty during code processing. Our extensive experiments show that LM-CC not only correlates more strongly with LLM performance than traditional metrics but also that lowering it directly enhances task performance.

💡 Research Summary

The paper asks whether traditional software‑engineering complexity metrics (cyclomatic complexity, Halstead measures, Maintainability Index, Cognitive Complexity) actually capture the difficulty that large language models (LLMs) experience when processing code. To answer this, the authors conduct a systematic empirical study using DeepSeek‑V3.1 on three representative code tasks: program repair, code translation, and execution reasoning (datasets: xCodeEval‑APR, xCodeEval‑CT, HumanEval). They compute pass@1 as the performance metric and evaluate zero‑order and length‑controlled partial correlations between each traditional metric and model performance. After controlling for code length, none of the classic metrics shows a stable, statistically significant relationship across all tasks; most correlations either disappear or become weak and inconsistent. This demonstrates a fundamental mismatch: traditional metrics focus on human‑centric structural properties (control‑flow richness, nesting depth, operator/operand counts) while LLMs process code as token sequences and their difficulty stems from non‑linear semantic composition and long‑range hierarchical dependencies.

Motivated by this gap, the authors propose LM‑CC (LLM‑Centric Complexity), a metric explicitly designed from the LLM’s perspective. The central hypothesis is that LLM difficulty is driven by the “semantic non‑linearity” of programs: branching, nesting, and scope create multiple semantic paths that increase model uncertainty. LM‑CC quantifies this by (1) decomposing code into semantic units using token‑level entropy (computed with a pretrained language model) and structural delimiters; (2) organizing these units into a hierarchical tree that reflects how the model’s attention must jump across non‑linear control structures; (3) extracting two predictive features from the hierarchy: compositional level (depth of nesting) and branching factor (fan‑out at each level). Each unit receives a local complexity score based on its depth and the number of child units; the final LM‑CC score is a weighted aggregation of these local scores, with weights learned to maximize correlation with LLM performance.

Extensive experiments show that LM‑CC achieves very strong negative partial Spearman correlations with pass@1 (r ≈ –0.92 to –0.97) across all three tasks, far surpassing traditional metrics (which rarely exceed |r| ≈ 0.7 and often become non‑significant after length control). Moreover, the authors demonstrate a practical utility: by applying semantics‑preserving code rewrites that reduce LM‑CC (e.g., flattening deep nesting, simplifying branching), they observe consistent performance gains, up to a 20.9 % increase in pass@1. This confirms that LM‑CC is not only a better predictor of difficulty but also an actionable optimization target.

The paper’s contributions are threefold: (1) the first systematic empirical evidence that widely used code complexity metrics fail to align with LLM‑perceived difficulty; (2) the design of LM‑CC, a metric grounded in token‑entropy and hierarchical semantic composition, capturing the joint effect of depth and branching on model uncertainty; (3) comprehensive validation showing LM‑CC’s superior correlation and its direct impact on improving LLM‑driven code tasks. The authors also provide a theoretical discussion linking LM‑CC’s components to LLM inference dynamics, arguing that entropy‑based boundaries identify points where the model’s predictive distribution becomes diffuse, and that hierarchical composition amplifies this uncertainty.

In summary, the work argues for a paradigm shift: code complexity for LLM‑assisted software engineering should be measured not by human‑oriented structural counts but by model‑centric semantic non‑linearity. LM‑CC offers a concrete, empirically validated metric that can guide both evaluation and automated code transformation pipelines, paving the way for more effective LLM‑based code intelligence systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment