SPD-Faith Bench: Diagnosing and Improving Faithfulness in Chain-of-Thought for Multimodal Large Language Models

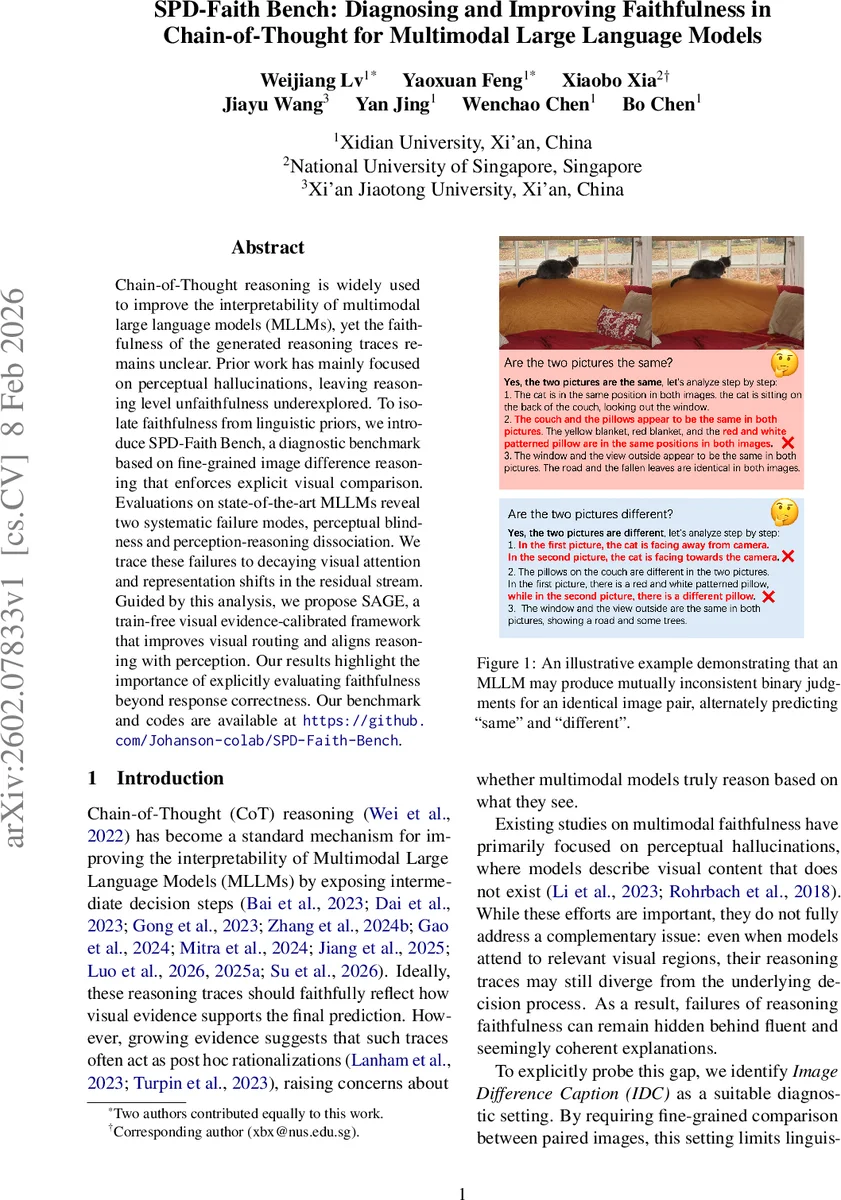

Chain-of-Thought reasoning is widely used to improve the interpretability of multimodal large language models (MLLMs), yet the faithfulness of the generated reasoning traces remains unclear. Prior work has mainly focused on perceptual hallucinations, leaving reasoning level unfaithfulness underexplored. To isolate faithfulness from linguistic priors, we introduce SPD-Faith Bench, a diagnostic benchmark based on fine-grained image difference reasoning that enforces explicit visual comparison. Evaluations on state-of-the-art MLLMs reveal two systematic failure modes, perceptual blindness and perception-reasoning dissociation. We trace these failures to decaying visual attention and representation shifts in the residual stream. Guided by this analysis, we propose SAGE, a train-free visual evidence-calibrated framework that improves visual routing and aligns reasoning with perception. Our results highlight the importance of explicitly evaluating faithfulness beyond response correctness. Our benchmark and codes are available at https://github.com/Johanson-colab/SPD-Faith-Bench.

💡 Research Summary

This paper tackles the often‑overlooked problem of faithfulness in chain‑of‑thought (CoT) reasoning for multimodal large language models (MLLMs). While prior work has mainly examined perceptual hallucinations—cases where models describe visual elements that do not exist—this study focuses on “behavioral unfaithfulness,” i.e., the mismatch between a model’s internal visual perception and the textual reasoning it produces. To isolate visual grounding from linguistic priors, the authors introduce SPD‑Faith Bench, a diagnostic benchmark built around fine‑grained Image Difference Caption (IDC) tasks.

Benchmark construction proceeds in three stages. First, a diverse set of real‑world images is collected and annotated for instance statistics. Second, GPT‑4o designs atomic edits (color change, object removal, position shift) that are realized with LaMa in‑painting; human verification guarantees accurate ground‑truth differences. Third, the resulting 3,000 image pairs are stratified into single‑difference (Easy/Medium/Hard) and multi‑difference (2–5 edits) subsets. This design forces models to explicitly compare two images, eliminating shortcuts that could be exploited in single‑image settings.

Evaluation is organized along three hierarchical dimensions, each with two metrics (six in total):

- Global perception – Difference Sensitivity (DS) measures whether a model detects any change; Difference Quantity Recall (DQR) measures how well it counts the number of changes.

- Faithful perception – Type‑Level F1 (TF1) evaluates correct classification of the modification type (color vs. removal vs. position); Category‑Level F1 (CF1) evaluates correct identification of the object categories involved.

- Faithful reasoning – Contradiction Rate (CR) penalizes inconsistent answers to symmetric binary queries (“same?” vs. “different?”); Difference Reasoning Faithfulness (DRF) quantifies how closely the generated CoT explanations align with the actual visual differences.

Twelve state‑of‑the‑art MLLMs (including GPT‑4o, Claude‑4.5‑Haiku, Gemini‑2.5‑Pro, GLM‑4.5V, Qwen‑VL variants, DeepSeek‑VL2, InternVL, MiniCPM‑V) are evaluated. Results reveal two systematic failure modes:

- Perceptual blindness – visual attention to the changed region decays sharply as the model proceeds through CoT steps, leading to missed differences especially in hard (small‑area) cases.

- Perception‑reasoning dissociation – even when a model correctly perceives a difference (high TF1/CF1), its reasoning chain often ignores that evidence, producing explanations that are contradictory or unrelated.

Mechanistic analysis shows that in the residual stream, visual token representations become increasingly diluted while textual tokens dominate, explaining the observed attention decay and reasoning drift.

Guided by these insights, the authors propose SAGE (Visual Evidence‑Calibrated Framework), a train‑free post‑hoc module consisting of:

- Visual routing reinforcement – re‑weights attention matrices to preserve visual token influence throughout the reasoning horizon, preventing the gradual loss of visual signal.

- Evidence calibration – extracts the model’s textual difference description, aligns it with the ground‑truth visual change, and either corrects the output or flags uncertainty.

SAGE can be plugged into any existing MLLM without additional fine‑tuning. Empirically, applying SAGE improves DRF by an average of 12 percentage points and reduces CR by over 30 % across the evaluated models, demonstrating a substantial boost in reasoning faithfulness while maintaining or slightly improving global perception scores.

The paper’s contributions are threefold: (1) a novel benchmark and a comprehensive set of metrics that explicitly evaluate visual‑grounded reasoning faithfulness; (2) a mechanistic diagnosis of two pervasive failure modes in current MLLMs; (3) a practical, training‑free solution (SAGE) that aligns visual evidence with chain‑of‑thought generation. The authors release the benchmark, code, and detailed analysis, inviting the community to further explore more complex scenarios (temporal changes, multi‑object interactions) and human‑in‑the‑loop methods for enhancing multimodal reasoning reliability.

Comments & Academic Discussion

Loading comments...

Leave a Comment