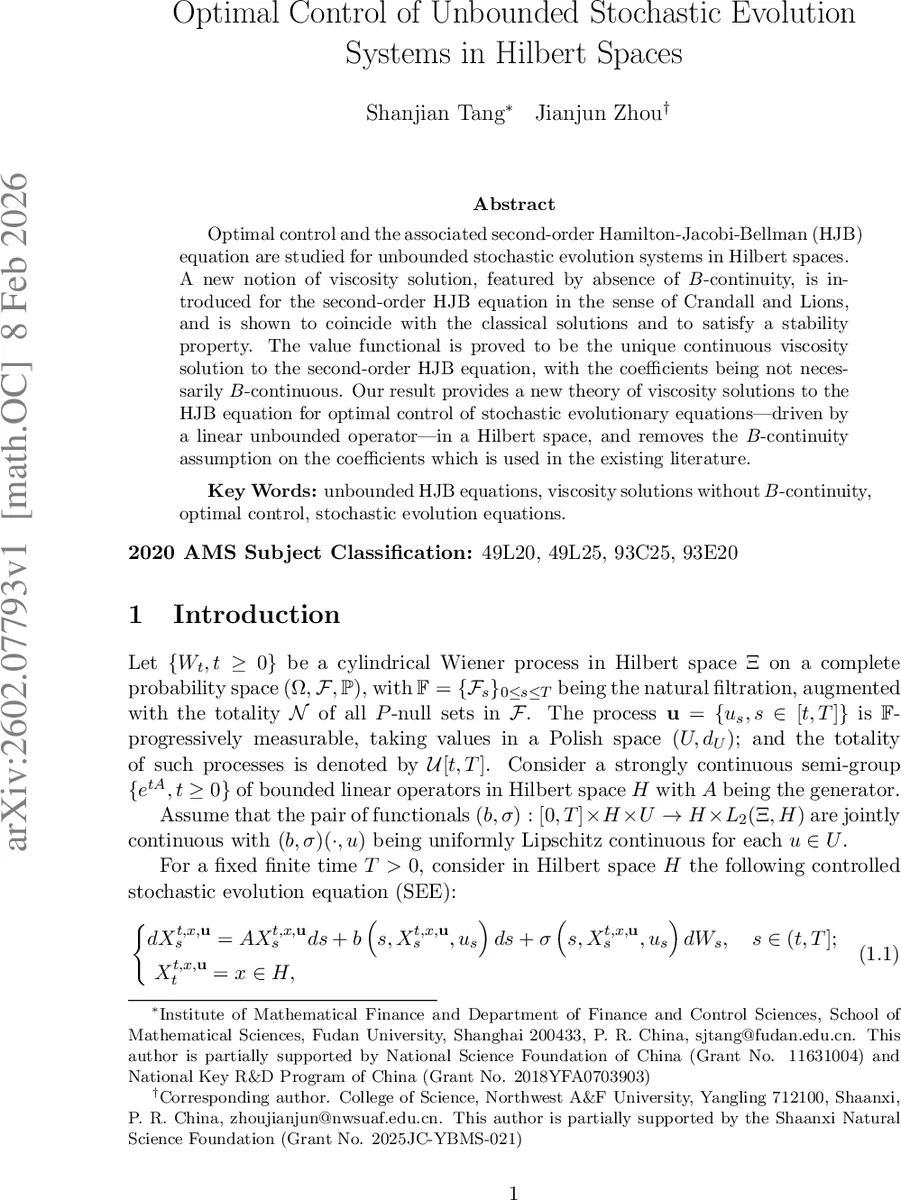

Optimal Control of Unbounded Stochastic Evolution Systems in Hilbert Spaces

Optimal control and the associated second-order Hamilton-Jacobi-Bellman (HJB) equation are studied for unbounded stochastic evolution systems in Hilbert spaces. A new notion of viscosity solution in the sense of Crandall and Lions, characterized by the absence of B-continuity, is introduced for the second-order HJB equation; it is shown to coincide with classical solutions and to satisfy a stability property. The value functional is proved to be the unique continuous viscosity solution to the second-order HJB equation, where the coefficients are not necessarily B-continuous. Our result provides a new theory of viscosity solutions to the HJB equation for optimal control of stochastic evolution equations, driven by a linear unbounded operator, in a Hilbert space, and removes the B-continuity assumption on the coefficients that is used in the existing literature.

💡 Research Summary

The paper addresses optimal control for stochastic evolution equations (SEE) in a Hilbert space H where the dynamics are driven by a possibly unbounded linear operator A (the generator of a C₀‑semigroup) together with Lipschitz continuous drift b and diffusion σ. The control enters both drift and diffusion, and the performance criterion is defined via a backward stochastic differential equation (BSDE) with terminal payoff ϕ and running cost q. The value function V(t,x) is the essential supremum of the BSDE solution over admissible controls.
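As a rough sketch, the setup described above can be written as follows. The notation here is an assumption made for illustration and is not taken from the paper: W denotes a Wiener process on H, T a finite horizon, u(·) an admissible control, and the state equation is understood in the mild sense. The dependence of q on (Y, Z) is likewise assumed, as is typical for BSDE-based criteria.

```latex
\begin{aligned}
% Controlled state equation (assumed form): A generates a C_0-semigroup on H
dX(s) &= \bigl[A X(s) + b\bigl(s, X(s), u(s)\bigr)\bigr]\,ds
         + \sigma\bigl(s, X(s), u(s)\bigr)\,dW(s),
         \quad s \in [t, T], \qquad X(t) = x \in H, \\
% Performance criterion via a BSDE with terminal payoff \phi and running cost q
Y(s) &= \phi\bigl(X(T)\bigr)
        + \int_s^T q\bigl(r, X(r), Y(r), Z(r), u(r)\bigr)\,dr
        - \int_s^T Z(r)\,dW(r), \\
% Value function: essential supremum over admissible controls
V(t,x) &= \operatorname*{ess\,sup}_{u(\cdot)} \; Y^{t,x,u}(t).
\end{aligned}
```

Here the superscript in Y^{t,x,u} records the initial time, initial state, and control that determine the BSDE solution.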

Classical dynamic programming leads to a second‑order Hamilton‑Jacobi‑Bellman (HJB) equation for the value function V.
