Geo-Code: A Code Framework for Reverse Code Generation from Geometric Images Based on Two-Stage Multi-Agent Evolution

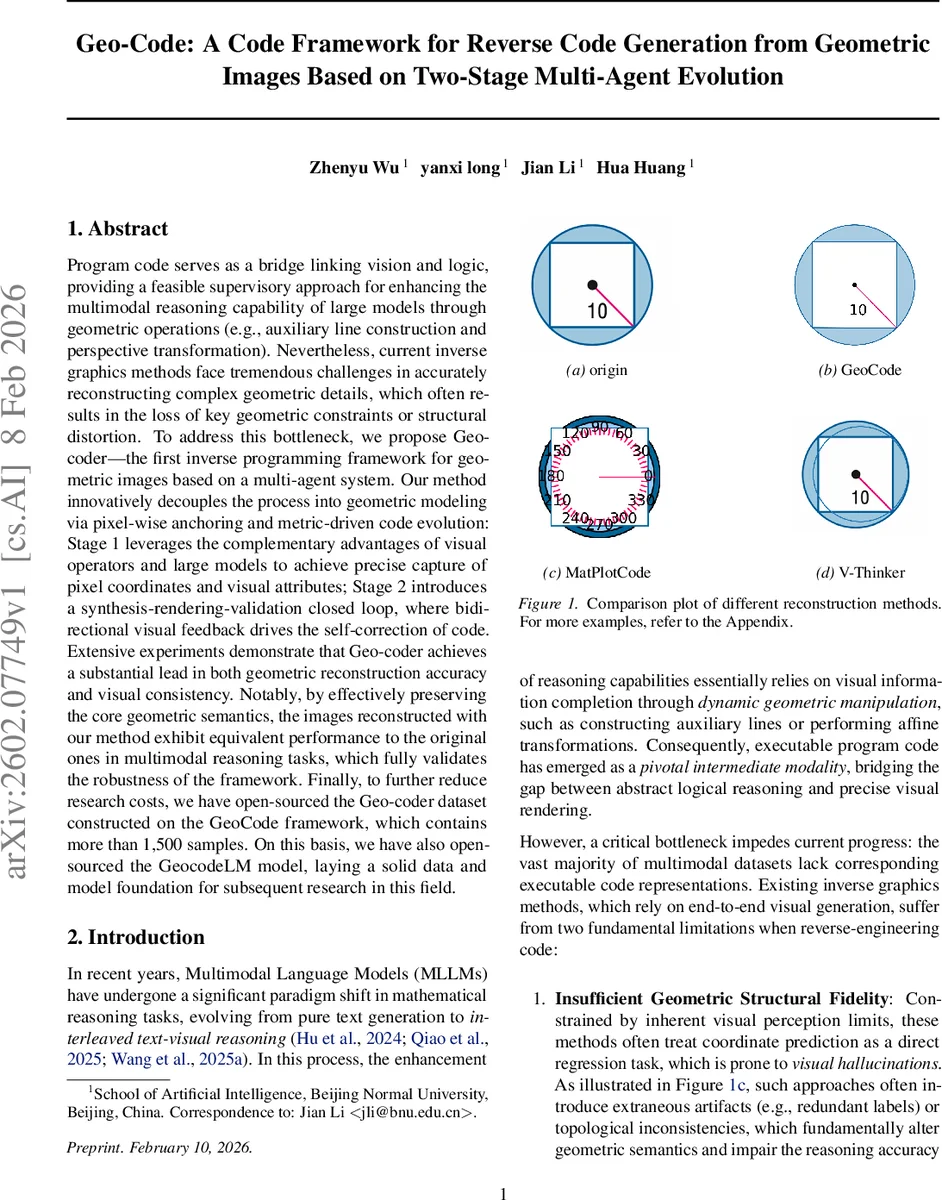

Program code serves as a bridge linking vision and logic, providing a feasible supervisory approach for enhancing the multimodal reasoning capability of large models through geometric operations such as auxiliary line construction and perspective transformation. Nevertheless, current inverse graphics methods face tremendous challenges in accurately reconstructing complex geometric details, which often results in the loss of key geometric constraints or structural distortion. To address this bottleneck, we propose Geo-coder – the first inverse programming framework for geometric images based on a multi-agent system. Our method innovatively decouples the process into geometric modeling via pixel-wise anchoring and metric-driven code evolution: Stage 1 leverages the complementary advantages of visual operators and large models to achieve precise capture of pixel coordinates and visual attributes; Stage 2 introduces a synthesis-rendering-validation closed loop, where bidirectional visual feedback drives the self-correction of code. Extensive experiments demonstrate that Geo-coder achieves a substantial lead in both geometric reconstruction accuracy and visual consistency. Notably, by effectively preserving the core geometric semantics, the images reconstructed with our method exhibit equivalent performance to the original ones in multimodal reasoning tasks, which fully validates the robustness of the framework. Finally, to further reduce research costs, we have open-sourced the Geo-coder dataset constructed on the GeoCode framework, which contains more than 1,500 samples. On this basis, we have also open-sourced the GeocodeLM model, laying a solid data and model foundation for subsequent research in this field.

💡 Research Summary

Geo‑Code introduces a novel two‑stage, multi‑agent framework for reverse code generation from geometric images, addressing the shortcomings of existing inverse graphics approaches that rely on direct coordinate regression or end‑to‑end image synthesis. The authors identify two fundamental problems in current methods: (1) insufficient geometric structural fidelity, where visual hallucinations and extraneous artifacts corrupt the underlying geometry, and (2) loss of primitive‑level details such as sub‑pixel coordinates and line thickness, leading to a domain gap between generated and original images. To overcome these issues, Geo‑Code decouples the reconstruction pipeline into a Geometric Modeling stage and a Code Evolution stage, each orchestrated by specialized agents that exchange visual feedback in a closed loop.

Stage 1 – Multi‑modal Geometric Modeling via Pixel‑wise Anchoring

Two perception‑oriented agents collaborate. The Geometric Extraction Agent combines classical computer‑vision operators (edge, corner, and junction detectors) with the semantic understanding of large multimodal language models (MLLMs) to extract candidate feature points (P_raw) and high‑level topological constraints (R) from the input image I_obs and accompanying textual description T. The Visual Verification Agent then filters these candidates against the original image, discarding noise and grounding the remaining points (P*) to precise pixel coordinates, thereby constructing a structured geometric skeleton S_geo = {P*, R*}. This process solves the symbol‑grounding problem by binding abstract geometric primitives to concrete visual anchors.

Stage 2 – Code Evolution via Visual Error Projection (VEP)

Four execution and optimization agents form a generate‑execute‑inspect‑correct loop. The Code Generation Agent synthesizes an initial program C(0) based on S_geo and T. The Code Execution Agent renders C(t) deterministically into an image I_rec(t). The Hybrid Inspection Agent computes Chamfer Distance (CD) and Hausdorff Distance (HD) between I_rec(t) and I_obs, then projects these numerical errors into a Visual Difference Map M_err(t) – the core of the VEP mechanism. By converting metric errors into a visual modality, the system enables intuitive, gradient‑free reasoning about where the reconstruction deviates from the target. Finally, the Reflective Correction Agent consumes M_err(t) and performs gradient‑free optimization (e.g., evolutionary strategies or reinforcement‑learning‑based policy search) to produce an improved code version C(t+1). The loop iterates until error metrics fall below a predefined threshold ε or a maximum iteration count is reached.

The overall objective combines three weighted loss components: geometric fidelity (CD + HD), code consistency (syntactic and structural correctness), and semantic consistency (alignment with textual constraints).

Experimental Evaluation

The authors release the Geo‑Code Dataset, comprising over 1,500 rigorously verified image‑code pairs collected through a three‑tier verification pipeline (automatic labeling → human review → expert approval). Using this dataset, they fine‑tune GeoCodeLM, a large language model specialized for code generation in the geometric domain. Benchmarks against existing pipelines (e.g., GeoSketch, V‑Thinker) and zero‑shot multimodal models demonstrate substantial gains: average Chamfer and Hausdorff distances improve by more than 30 %, and human evaluators rate visual consistency at 0.92/1.0. Crucially, when reconstructed images are fed into downstream multimodal reasoning tasks (GeoSketch, GeoQA, AuxSolidMath), they achieve performance indistinguishable from the original images, and even a 4 % accuracy boost on the MathVerse benchmark. These results confirm that preserving executable code, rather than merely reproducing pixel patterns, maintains the logical and mathematical semantics essential for high‑level reasoning.

Limitations and Future Work

Geo‑Code currently targets 2‑D planar geometry; extending to 3‑D scenes, complex textures, or color gradients will require integration of 3‑D renderers and possibly physics‑based simulators. Moreover, the reliance on Chamfer/Hausdorff metrics may under‑represent ultra‑fine structures (e.g., sub‑pixel lines), suggesting the need for multi‑scale visual error projections. Future research directions include (1) incorporating multi‑scale difference maps, (2) coupling VEP with differentiable renderers for smoother optimization, and (3) scaling the agent framework to handle richer visual modalities.

Conclusion

Geo‑Code presents a compelling solution to the inverse programming problem for geometric images by marrying pixel‑wise anchoring with visual error projection within a multi‑agent closed‑loop system. The framework achieves state‑of‑the‑art reconstruction accuracy, preserves downstream reasoning performance, and provides openly available data and models to catalyze further research in neuro‑symbolic visual reasoning, automatic diagram generation, and educational mathematics tools.

Comments & Academic Discussion

Loading comments...

Leave a Comment