G2P: Gaussian-to-Point Attribute Alignment for Boundary-Aware 3D Semantic Segmentation

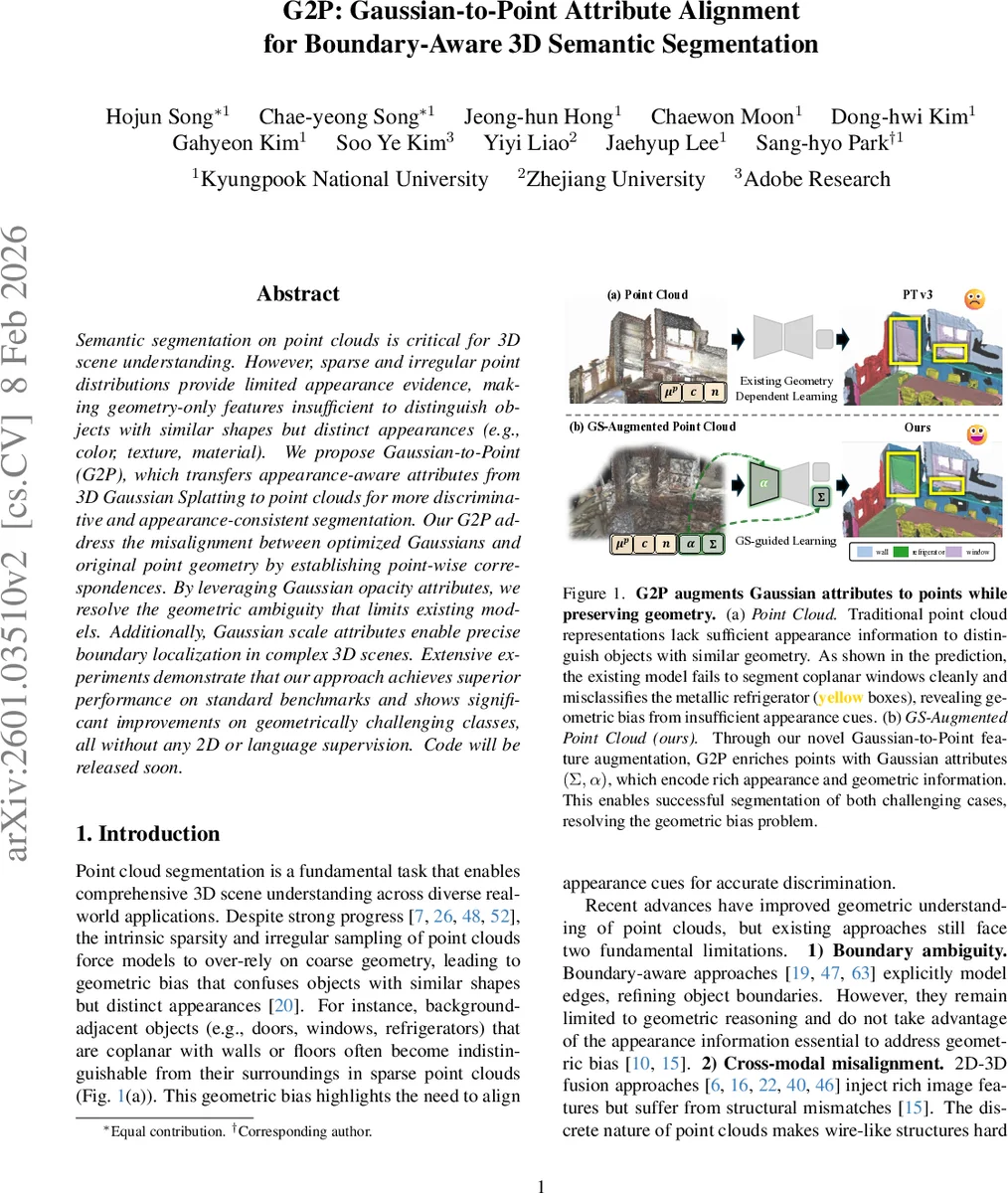

Semantic segmentation on point clouds is critical for 3D scene understanding. However, sparse and irregular point distributions provide limited appearance evidence, making geometry-only features insufficient to distinguish objects with similar shapes but distinct appearances (e.g., color, texture, material). We propose Gaussian-to-Point (G2P), which transfers appearance-aware attributes from 3D Gaussian Splatting to point clouds for more discriminative and appearance-consistent segmentation. Our G2P address the misalignment between optimized Gaussians and original point geometry by establishing point-wise correspondences. By leveraging Gaussian opacity attributes, we resolve the geometric ambiguity that limits existing models. Additionally, Gaussian scale attributes enable precise boundary localization in complex 3D scenes. Extensive experiments demonstrate that our approach achieves superior performance on standard benchmarks and shows significant improvements on geometrically challenging classes, all without any 2D or language supervision.

💡 Research Summary

The paper tackles two fundamental challenges in 3D point‑cloud semantic segmentation: geometric bias caused by sparse, shape‑only features, and boundary ambiguity where objects share planar surfaces with the background. To overcome these issues, the authors introduce Gaussian‑to‑Point (G2P), a framework that transfers appearance‑rich attributes from 3D Gaussian Splatting (GS) to the original point cloud while preserving the exact geometry of the points.

G2P consists of three main components. First, Gaussian‑to‑Point Feature Augmentation aligns each point with a set of nearby Gaussians. Because optimized Gaussians often drift from their initial positions, a naïve nearest‑neighbor match would be inaccurate. The authors therefore select all Gaussians within a Euclidean radius r of a point, then compute the Mahalanobis distance using each Gaussian’s full covariance matrix (which encodes anisotropic scale and rotation). The k Gaussians with the smallest Mahalanobis distances are kept, and inverse‑distance weights are normalized to aggregate the Gaussian scale tensor S and opacity α. This yields an augmented point feature (µ_p, c, n, S′, α′) of dimension 13, where S′ and α′ carry geometric and appearance cues respectively.

Second, Scale‑based Boundary Extraction exploits the observation that small‑scale Gaussians concentrate at object edges, while large‑scale Gaussians cover smooth planar regions. After augmentation, the L2 norm of each point’s scale vector is computed. Points whose scale magnitude falls below a dynamically chosen threshold (the bottom η percent) are treated as boundary pseudo‑labels (B_scale). By first discarding background classes, the method focuses on object‑centric boundaries, improving detection of coplanar objects such as doors and windows.

Third, GS Appearance Distillation uses a self‑supervised appearance encoder trained on the augmented point cloud (µ_p, c, α′). The encoder learns to map the opacity‑enhanced color information into a robust appearance embedding. During the main segmentation training, this embedding is distilled into a conventional point‑cloud segmentation network (which receives only the original geometric inputs µ_p, c, n). The overall loss combines (1) standard semantic cross‑entropy, (2) binary cross‑entropy on the boundary pseudo‑labels, and (3) an appearance distillation term that aligns the network’s intermediate features with the encoder’s output.

Extensive experiments on ScanNet, S3DIS, and other indoor benchmarks demonstrate that G2P consistently outperforms state‑of‑the‑art methods. Notably, classes that are geometrically ambiguous—doors, windows, refrigerators—show 4–6 percentage‑point gains in mIoU, while overall mIoU and mAcc improve by 2–3 percentage points. Importantly, the approach requires no 2D image data, language models, or external supervision; all gains stem from the intrinsic volumetric information encoded in the Gaussians.

The paper also highlights the efficiency advantage of operating entirely in 3D. By using Mahalanobis distance for alignment, G2P avoids the projection errors, occlusion losses, and spatial misalignments that plague 2D‑3D fusion pipelines. However, the current nearest‑neighbor search scales linearly with the number of Gaussians, which could become a bottleneck for very large scenes. Future work may incorporate approximate nearest‑neighbor structures (e.g., KD‑trees, locality‑sensitive hashing) or multi‑scale Gaussian hierarchies to improve scalability. Additional directions include real‑time deployment, integration with LiDAR data, and extending the boundary extraction to multi‑class, multi‑object scenarios.

Overall, G2P establishes a novel paradigm: leveraging the continuous, appearance‑aware representation of 3D Gaussian Splatting to enrich point‑cloud features, thereby resolving geometric bias and enhancing boundary awareness without any cross‑modal supervision.

Comments & Academic Discussion

Loading comments...

Leave a Comment