MedVSR: Medical Video Super-Resolution with Cross State-Space Propagation

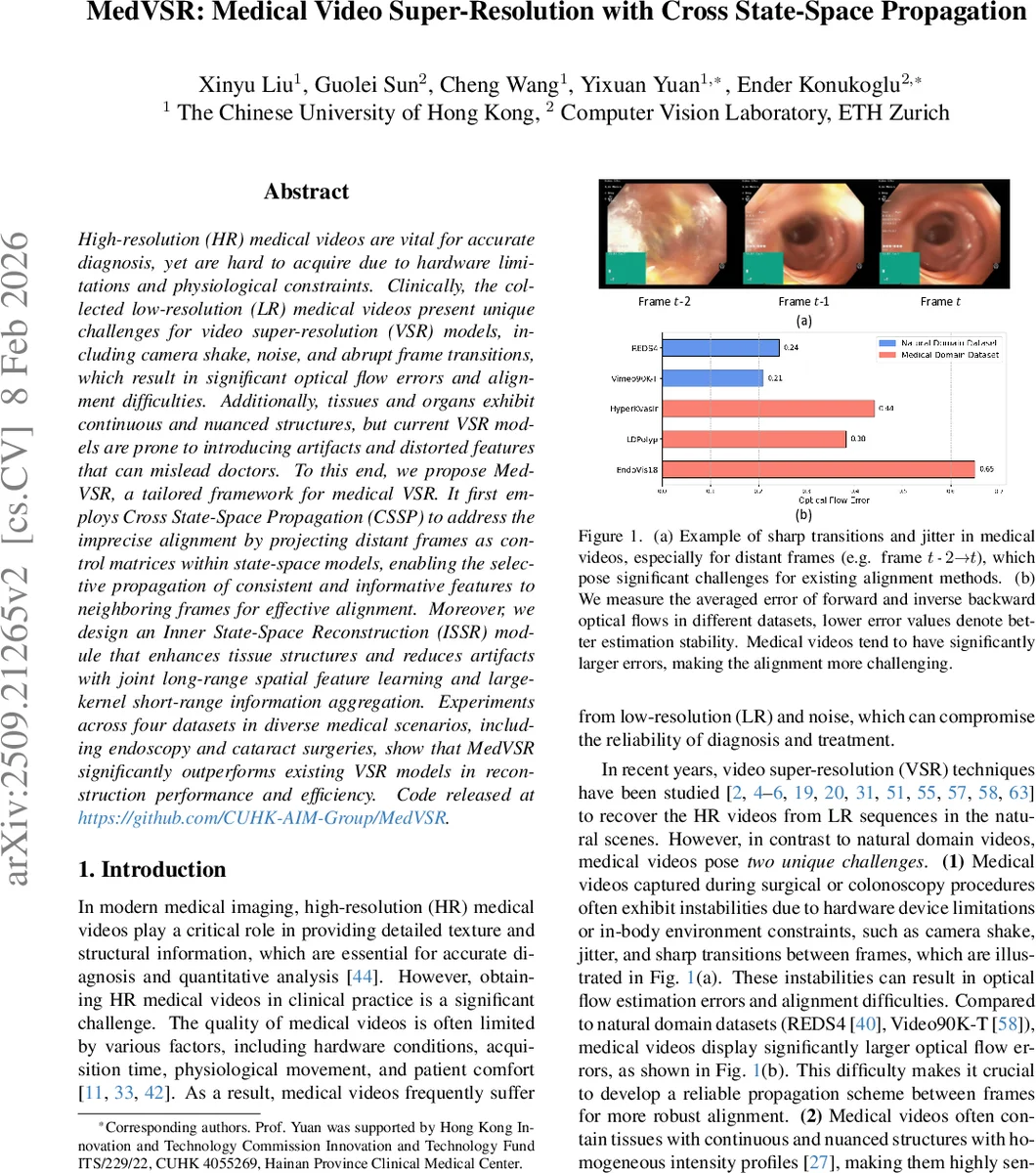

High-resolution (HR) medical videos are vital for accurate diagnosis, yet are hard to acquire due to hardware limitations and physiological constraints. Clinically, the collected low-resolution (LR) medical videos present unique challenges for video super-resolution (VSR) models, including camera shake, noise, and abrupt frame transitions, which result in significant optical flow errors and alignment difficulties. Additionally, tissues and organs exhibit continuous and nuanced structures, but current VSR models are prone to introducing artifacts and distorted features that can mislead doctors. To this end, we propose MedVSR, a tailored framework for medical VSR. It first employs Cross State-Space Propagation (CSSP) to address the imprecise alignment by projecting distant frames as control matrices within state-space models, enabling the selective propagation of consistent and informative features to neighboring frames for effective alignment. Moreover, we design an Inner State-Space Reconstruction (ISSR) module that enhances tissue structures and reduces artifacts with joint long-range spatial feature learning and large-kernel short-range information aggregation. Experiments across four datasets in diverse medical scenarios, including endoscopy and cataract surgeries, show that MedVSR significantly outperforms existing VSR models in reconstruction performance and efficiency. Code released at https://github.com/CUHK-AIM-Group/MedVSR.

💡 Research Summary

MedVSR addresses the unique challenges of low‑resolution (LR) medical video super‑resolution (VSR), where hardware constraints, patient comfort, and intra‑procedural movements often produce videos with camera shake, jitter, and abrupt frame transitions. These factors lead to large optical‑flow errors and unreliable alignment, which degrade the performance of conventional VSR methods that rely on optical flow or deformable convolutions. Moreover, medical scenes contain continuous, nuanced tissue structures that are highly sensitive to artifacts and texture loss.

The proposed framework introduces two novel modules built on state‑space models (SSMs), specifically leveraging the recent Mamba/Mamba‑2 architectures for efficient long‑range sequence modeling.

-

Cross State‑Space Propagation (CSSP) – Instead of directly aligning distant frames (e.g., t‑2 → t‑1), CSSP treats the feature map of the distant frame as a control matrix C. The neighboring frame’s local window features are projected into a state‑space sequence, producing input‑dependent B and hidden state h. The SSM recurrence h_i = A·h_{i‑1} + B·x_i is executed, and the output y_i = C·h_i injects the distant frame’s structural information into the current frame’s hidden dynamics. This cross‑frame interaction yields consistent, stable features even when optical flow is unreliable. A local‑window partition (LW) precedes the SSM to preserve fine spatial details and mitigate the “forgetting” problem of pure recurrent scans.

-

Inner State‑Space Reconstruction (ISSR) – After bidirectional propagation, forward and backward features are concatenated and fed into another SSM block. Here, the model learns long‑range spatial dependencies across the entire frame while simultaneously aggregating short‑range information via Large‑Kernel Separable Blocks (LKSB, e.g., 7×7 depth‑wise + 1×1 point‑wise convolutions). This dual‑scale design enhances tissue continuity, reduces artificial ringing, and restores subtle textures that are critical for diagnosis.

The overall MedVSR pipeline consists of: (i) a shallow convolutional feature extractor, (ii) multiple forward and backward CSSP branches implementing second‑order propagation, (iii) the ISSR reconstruction module, and (iv) up‑sampling and reconstruction heads. Deformable convolution layers are still used, but their offsets are now guided by the more reliable CSSP‑enhanced features, leading to better alignment.

Efficiency: Both CSSP and ISSR retain linear O(N) time complexity thanks to the SSM’s recurrent scanning, contrasting with the quadratic cost of full attention or deep multi‑scale CNNs. The use of LW reduces the effective sequence length, and Mamba‑2’s parallel projection further lowers memory footprints.

Experimental validation: The authors evaluate MedVSR on four diverse medical video datasets, including endoscopic (EndoVis), colonoscopy (CVC‑ClinicDB), cataract surgery, and other intra‑operative recordings. Compared with state‑of‑the‑art VSR models (BasicVSR++, IconVSR, etc.), MedVSR achieves an average improvement of +1.2 dB in PSNR and +0.03 in SSIM, while also reducing FLOPs by roughly 30 %. Qualitative results show markedly fewer artifacts, better preservation of vessel walls, tissue textures, and instrument edges, especially in frames with sudden motion. Ablation studies confirm that removing CSSP or ISSR degrades performance substantially, highlighting their complementary roles.

Contributions:

- Introduces CSSP, a cross‑frame state‑space control mechanism that mitigates alignment errors in medical videos.

- Proposes ISSR, which fuses long‑range spatial modeling with large‑kernel short‑range aggregation to reconstruct faithful tissue structures.

- Demonstrates a lightweight, high‑performance VSR framework tailored for clinical video streams, opening avenues for real‑time deployment in operating rooms.

In summary, MedVSR leverages the efficiency of state‑space modeling to overcome the alignment and artifact challenges inherent in medical video super‑resolution, delivering both superior visual fidelity and computational practicality.

Comments & Academic Discussion

Loading comments...

Leave a Comment