Capacity-Aware Inference: Mitigating the Straggler Effect in Mixture of Experts

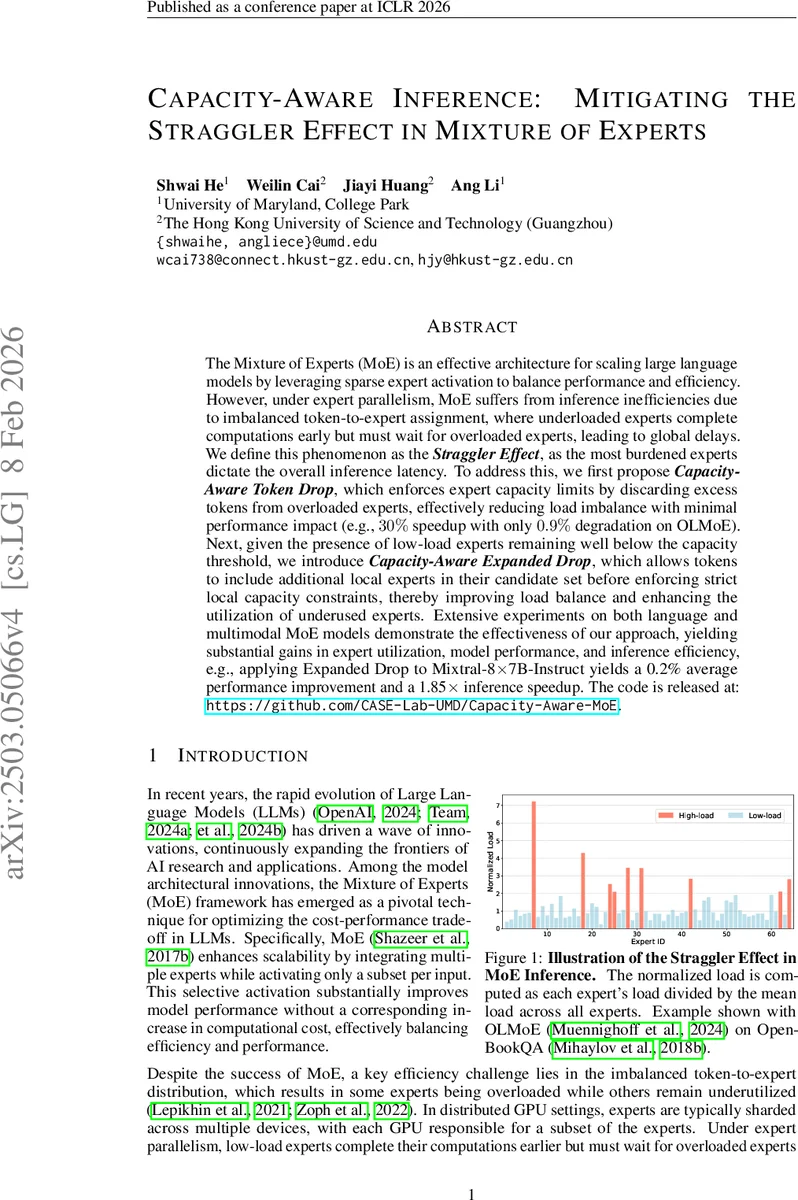

The Mixture of Experts (MoE) is an effective architecture for scaling large language models by leveraging sparse expert activation to balance performance and efficiency. However, under expert parallelism, MoE suffers from inference inefficiencies due to imbalanced token-to-expert assignment, where underloaded experts complete computations early but must wait for overloaded experts, leading to global delays. We define this phenomenon as the \textbf{\textit{Straggler Effect}}, as the most burdened experts dictate the overall inference latency. To address this, we first propose \textit{\textbf{Capacity-Aware Token Drop}}, which enforces expert capacity limits by discarding excess tokens from overloaded experts, effectively reducing load imbalance with minimal performance impact (e.g., $30%$ speedup with only $0.9%$ degradation on OLMoE). Next, given the presence of low-load experts remaining well below the capacity threshold, we introduce \textit{\textbf{Capacity-Aware Expanded Drop}}, which allows tokens to include additional local experts in their candidate set before enforcing strict local capacity constraints, thereby improving load balance and enhancing the utilization of underused experts. Extensive experiments on both language and multimodal MoE models demonstrate the effectiveness of our approach, yielding substantial gains in expert utilization, model performance, and inference efficiency, e.g., applying Expanded Drop to Mixtral-8$\times$7B-Instruct yields a {0.2%} average performance improvement and a {1.85$\times$} inference speedup. The code is released at: https://github.com/CASE-Lab-UMD/Capacity-Aware-MoE.

💡 Research Summary

The paper tackles a critical inefficiency in Mixture‑of‑Experts (MoE) models during inference, termed the “Straggler Effect.” In expert‑parallel deployments, each token is routed to a small subset of experts, but the distribution of tokens across experts is highly uneven. Overloaded experts become bottlenecks because all experts must synchronize before the next transformer layer, causing low‑load experts to sit idle and inflating overall latency. While prior work introduced balance losses or duplicated high‑load experts during training, these solutions do not address the imbalance that emerges at test time and often incur extra hardware or communication costs.

To mitigate this, the authors propose a two‑stage, capacity‑aware inference framework. The first stage, Capacity‑Aware Token Drop (CATD), imposes a hard capacity limit (C = \gamma \bar N) on each expert, where (\bar N = tk/n) is the expected token count per expert and (\gamma) is a tunable factor (< 1 for stricter limits). Tokens are scored using the gating softmax values; for each overloaded expert, only the top‑C tokens (by score) are retained, and the rest are masked out before the All‑to‑All communication step. This selective dropping incurs negligible overhead because the scoring is already computed for routing, and the actual drop operation is a simple mask. Experiments on OLMoE show that setting (\gamma = 1.0) yields a 30 % reduction in MoE‑layer latency with only a 0.9 % drop in downstream performance, demonstrating that many tokens contribute little to the final output.

The second stage, Capacity‑Aware Expanded Drop (CAED), addresses the complementary problem of under‑utilized experts. After CATD, many experts operate far below their capacity, wasting compute resources. CAED allows each token to consider an expanded set of (m) additional local experts on the same device, still respecting the per‑expert capacity (C). In practice, the router first selects the usual top‑k experts, then augments the candidate list with the next‑best local experts. Tokens that were dropped from overloaded experts can now be reassigned to these low‑load experts, improving overall expert utilization without adding cross‑device communication. The authors illustrate this with a diagram where tokens flow from high‑load to low‑load experts within a device, preserving the synchronization barrier but balancing work.

The methodology is evaluated on both large language models (OLMoE, Mixtral‑8×7B‑Instruct) and multimodal vision‑language models. For Mixtral, applying CAED on top of CATD yields an average accuracy gain of 0.2 % and a 1.85× speed‑up in inference time. In multimodal experiments, the authors demonstrate that aggressively limiting capacity (e.g., to 50 % of the average load) does not degrade image‑token performance, owing to redundancy among visual tokens. Detailed load distribution plots show that the ratio of maximum to mean expert load drops from >7× to ≈1.2× after applying the two techniques.

Key contributions include: (1) a formal definition and quantitative analysis of the Straggler Effect in MoE inference; (2) the CATD mechanism that safely discards low‑importance tokens from overloaded experts; (3) the CAED mechanism that re‑routes overflow tokens to under‑utilized experts by expanding the local candidate pool; and (4) extensive empirical validation across diverse models and tasks, confirming that the approach delivers substantial latency reductions with negligible or even positive impacts on model quality.

The paper also discusses practical considerations. The capacity factor (\gamma) must be tuned: too low leads to excessive token loss and performance degradation, while too high yields limited latency gains. The authors find (\gamma) values between 0.8 and 1.2 work well across tested models. Moreover, because the operations are confined to the routing stage, no additional GPU memory or communication overhead is introduced, making the method attractive for production deployments. Future work could explore cross‑device candidate expansion, dynamic adjustment of (\gamma) based on runtime statistics, and integration with alternative routing strategies (e.g., learned hard‑gating).

In summary, the paper presents a simple yet effective solution to a long‑standing bottleneck in MoE inference. By enforcing capacity constraints and intelligently reallocating overflow tokens, it balances expert workloads, eliminates the Straggler Effect, and achieves up to nearly double the inference throughput while preserving or even improving model performance. This capacity‑aware inference paradigm is likely to become a standard component of high‑performance MoE deployments.

Comments & Academic Discussion

Loading comments...

Leave a Comment