The Geometry of Refusal in Large Language Models: Concept Cones and Representational Independence

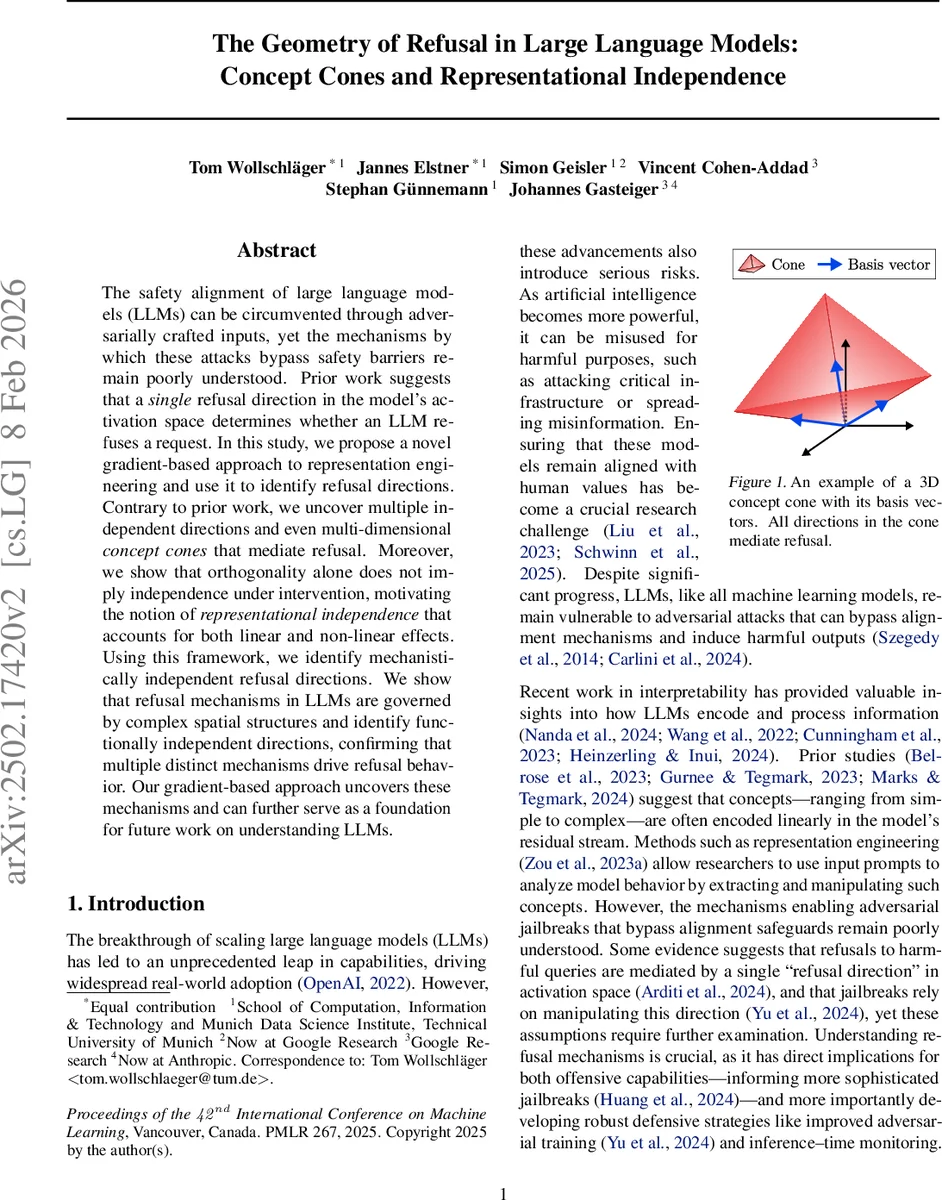

The safety alignment of large language models (LLMs) can be circumvented through adversarially crafted inputs, yet the mechanisms by which these attacks bypass safety barriers remain poorly understood. Prior work suggests that a single refusal direction in the model’s activation space determines whether an LLM refuses a request. In this study, we propose a novel gradient-based approach to representation engineering and use it to identify refusal directions. Contrary to prior work, we uncover multiple independent directions and even multi-dimensional concept cones that mediate refusal. Moreover, we show that orthogonality alone does not imply independence under intervention, motivating the notion of representational independence that accounts for both linear and non-linear effects. Using this framework, we identify mechanistically independent refusal directions. We show that refusal mechanisms in LLMs are governed by complex spatial structures and identify functionally independent directions, confirming that multiple distinct mechanisms drive refusal behavior. Our gradient-based approach uncovers these mechanisms and can further serve as a foundation for future work on understanding LLMs.

💡 Research Summary

The paper investigates how large language models (LLMs) refuse or comply with user requests, challenging the prevailing view that a single linear “refusal direction” in activation space governs this behavior. The authors introduce a gradient‑based method called Refusal Direction Optimization (RDO) that directly optimizes a vector r to satisfy two desiderata: (1) Monotonic Scaling – adding or subtracting α·r at a chosen layer should cause the model’s refusal probability to change monotonically with α; (2) Surgical Ablation – projecting the residual stream orthogonal to r should eliminate refusals on harmful prompts while leaving harmless inputs unaffected.

RDO’s loss combines three terms: (i) an ablation loss (cross‑entropy between the model’s output after ablation and a target “answer” for harmful prompts), (ii) an addition loss (cross‑entropy between the model’s output after adding r and a target “refusal” for harmless prompts), and (iii) a retain loss (KL divergence ensuring that ablation does not disturb the model’s normal behavior on harmless inputs). By freezing all model parameters and updating only r via gradient descent, the method discovers directions that are both effective at steering refusal and minimally invasive to other capabilities.

Experiments are conducted on several state‑of‑the‑art open‑source models (Gemma‑2, Qwen2.5, Llama‑3) using paired harmful/harmless prompts from ALPACA and SALAD‑BENCH. The authors compare RDO against the traditional Difference‑in‑Means (DIM) approach, evaluating (a) jailbreak success rate (ASR) on the JailbreakBench suite with a strong‑reject judge, and (b) side‑effect impact on standard benchmarks such as TruthfulQA. RDO matches or exceeds DIM in ASR for both ablation‑based and addition‑based attacks, achieving a 3–5 percentage‑point gain on average for ablation attacks. Crucially, RDO reduces performance degradation on TruthfulQA by roughly 40 % relative to DIM, indicating that the learned direction interferes less with factual reasoning.

Beyond performance metrics, the authors uncover multiple independent refusal directions and multidimensional concept cones. By initializing RDO from different random seeds and targeting various layers, they find several vectors that are pairwise orthogonal yet remain functionally independent under intervention. Visualizing these vectors reveals a polyhedral cone in activation space: any direction inside the cone induces refusal, confirming that refusal is not confined to a single line but to a higher‑dimensional region. This finding directly contradicts earlier work that posited a solitary linear subspace.

To formalize independence, the paper introduces Representational Independence, arguing that orthogonality alone does not guarantee non‑interference because non‑linear transformations (MLPs, attention) can cause interactions when multiple directions are active simultaneously. The authors propose an “intervention interaction score” to quantify such effects and demonstrate empirically that some orthogonal pairs still exhibit measurable cross‑talk, whereas the directions identified by RDO satisfy the stricter independence criterion.

The contributions are threefold: (1) a gradient‑based representation‑engineering pipeline that more precisely isolates refusal‑mediating directions; (2) a conceptual framework (Representational Independence) that captures both linear and non‑linear dependencies among interventions; and (3) empirical evidence that LLM refusal mechanisms are governed by complex spatial structures—multiple independent directions and multidimensional cones—rather than a single linear axis.

Overall, the work reshapes our understanding of safety‑related behavior in LLMs, provides a powerful tool for probing and manipulating internal representations, and offers a foundation for designing more robust alignment strategies and for anticipating sophisticated jailbreak attacks.

Comments & Academic Discussion

Loading comments...

Leave a Comment