Efficient Planning in Reinforcement Learning via Model Introspection

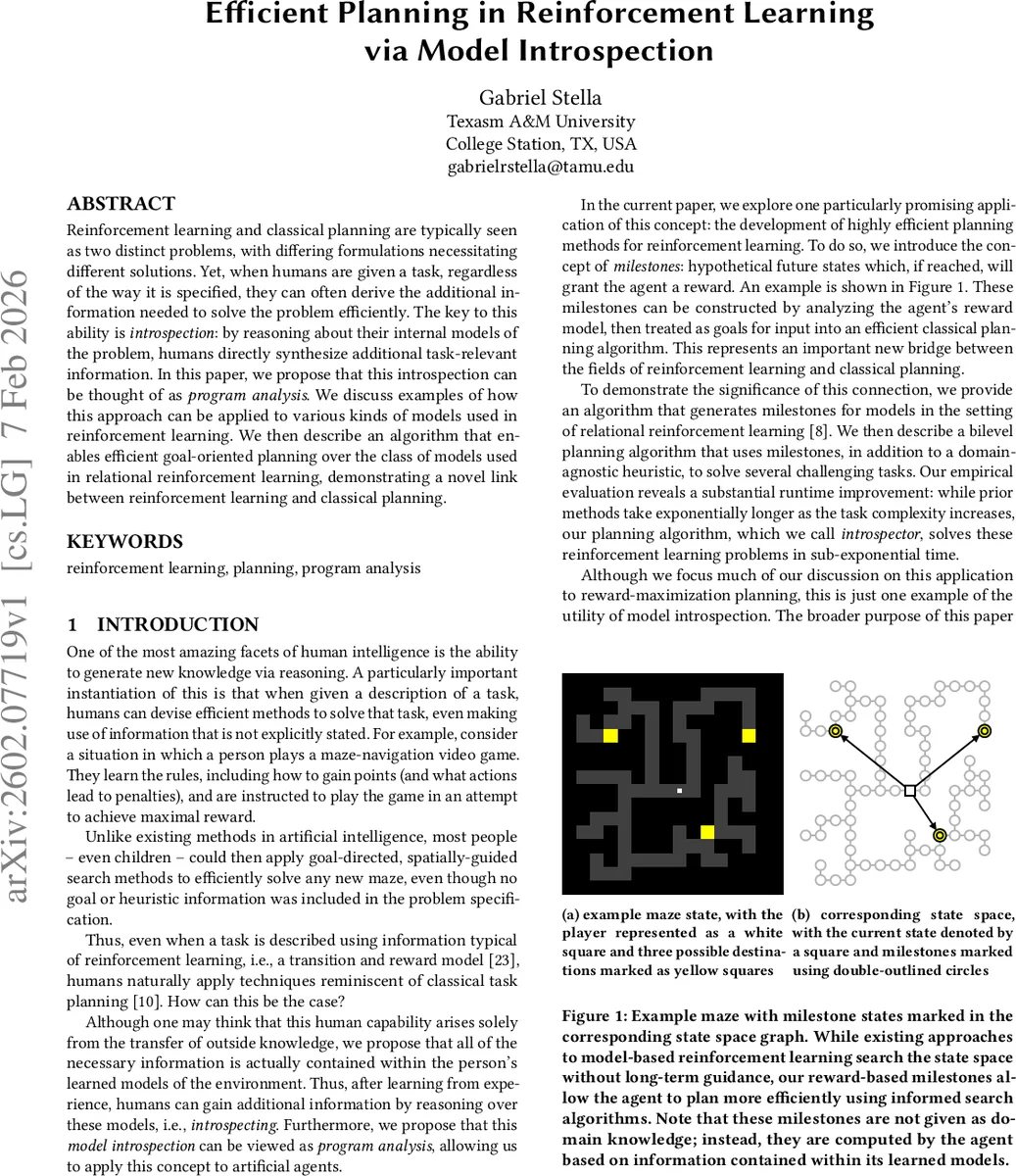

Reinforcement learning and classical planning are typically seen as two distinct problems, with differing formulations necessitating different solutions. Yet, when humans are given a task, regardless of the way it is specified, they can often derive the additional information needed to solve the problem efficiently. The key to this ability is introspection: by reasoning about their internal models of the problem, humans directly synthesize additional task-relevant information. In this paper, we propose that this introspection can be thought of as program analysis. We discuss examples of how this approach can be applied to various kinds of models used in reinforcement learning. We then describe an algorithm that enables efficient goal-oriented planning over the class of models used in relational reinforcement learning, demonstrating a novel link between reinforcement learning and classical planning.

💡 Research Summary

The paper proposes a novel perspective on bridging reinforcement learning (RL) and classical planning by treating the human ability to “introspect” on internal models as a form of program analysis. The authors argue that, after an agent has learned accurate transition (ˆT) and reward (ˆR) models, all the information needed for efficient planning is already embedded within these models. By applying static and dynamic program‑analysis techniques to the learned models, the agent can automatically synthesize additional task‑relevant knowledge without external supervision.

The central construct introduced is the “milestone”: a hypothetical future state that, if reached, yields the maximal possible reward r_max. Formally, a milestone g satisfies ∃ s, a : T(s,a)=g ∧ R(s,a,g)=r_max (or equivalently, a state from which a single action yields r_max). Milestones are not supplied by the environment; they are derived from the reward structure encoded in ˆR. This turns the RL problem, which traditionally lacks explicit goal states, into a goal‑oriented planning problem where milestones serve as intermediate goals.

To demonstrate the practicality of this idea, the authors focus on relational reinforcement learning (RRL), where states are collections of objects and relations and models are expressed as logical programs. This representation makes it natural to perform program analysis: predicates, action schemas, and conditional branches can be inspected to locate states that satisfy the milestone condition. The paper presents an algorithm that scans the reward program, extracts all maximal‑reward actions, and groups their pre‑states into a milestone set G.

Planning then proceeds in a bilevel fashion. The outer level computes the milestone set G. The inner level treats each milestone as a goal and runs a classical heuristic search (e.g., A*) over the transition model ˆT. The heuristic is domain‑agnostic, derived solely from the transition program (e.g., using relaxed planning graphs). Because milestones provide long‑range guidance, the search does not rely on sparse intermediate rewards as traditional model‑based RL methods (value iteration, Monte‑Carlo Tree Search, etc.) do. Consequently, the planner can focus its expansion on promising regions of the state space, dramatically reducing the number of explored nodes.

Empirical evaluation on several challenging RRL benchmarks (block‑stacking, object‑manipulation puzzles, and maze‑like environments) shows that the proposed “Introspector” algorithm scales sub‑exponentially with problem size, whereas prior model‑based planners exhibit exponential growth. In particular, for tasks with extremely sparse rewards, Introspector solves instances that would cause MCTS or value iteration to time out. The runtime improvements are attributed to two factors: (1) the automatic generation of reward‑driven milestones that act as high‑level sub‑goals, and (2) the use of classical planning heuristics that exploit the structure of ˆT.

The paper’s contributions can be summarized as follows:

- A conceptual reframing of model introspection as program analysis, providing a unified language to discuss RL models and planning heuristics.

- The definition of milestones as reward‑maximizing intermediate states, and an algorithm for extracting them from relational reward programs.

- A bilevel planning architecture that combines milestone generation with domain‑agnostic heuristic search, yielding substantial computational gains.

- Experimental evidence that the approach bridges the gap between RL and classical planning, achieving near‑optimal plans in sub‑exponential time on domains where existing RL planners fail.

Limitations are acknowledged. The method assumes deterministic, perfectly learned models; stochasticity or model error would require robust extensions. Milestones are defined with respect to a single maximal reward, which may not capture richer reward structures (e.g., multi‑objective or dense rewards). Finally, the reliance on relational, program‑like models restricts immediate applicability to deep‑network‑based RL where the model is a black‑box neural net. Future work is suggested in extending milestone extraction to stochastic settings, handling multiple reward tiers, integrating differentiable program analysis for neural models, and exploring automatic heuristic generation beyond the domain‑agnostic baseline.

Comments & Academic Discussion

Loading comments...

Leave a Comment